“Purifying” Photons: Scientists Found a Way to Clean Light Itself

December 23, 2025

Why It Matters

Purified photon streams directly improve the efficiency and security of emerging photonic quantum technologies, accelerating their path to practical deployment.

Key Takeaways

- Laser scatter can cancel multi-photon emissions

- Purified photon streams boost quantum computer efficiency

- Method relies on precise laser angle and beam shape

- Defense funding supports foundational quantum photonics research

- Experimental validation needed to confirm theoretical results

Pulse Analysis

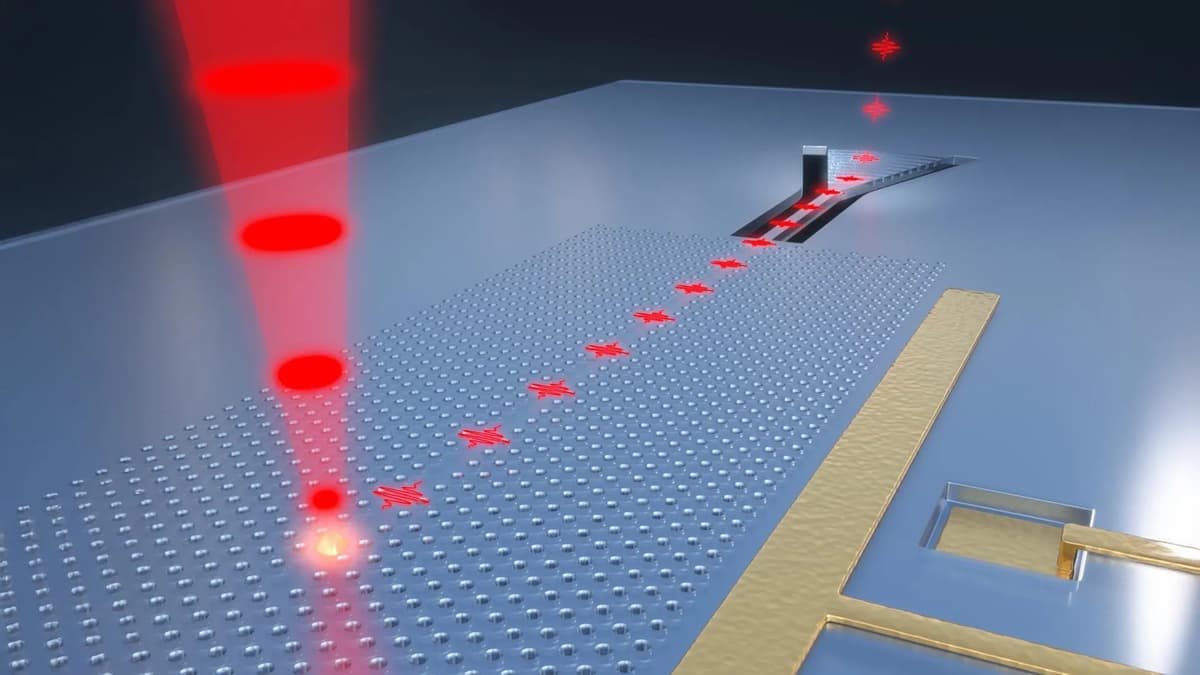

The quest for perfectly isolated single photons has long been a bottleneck for photonic quantum technologies. In conventional setups, a laser excites an atom to emit a photon, but the process inevitably generates stray laser scatter and occasional multi‑photon bursts. These unwanted quanta act like electrical noise in a circuit, degrading gate fidelity and limiting the scalability of optical quantum processors. As a result, many research groups have pursued complex filtering schemes or cavity designs, yet a simple, universal solution has remained elusive.

The University of Iowa team turned this problem on its head by showing theoretically that the same laser scatter can be engineered to nullify extra photon emissions. When the wavelength spectrum and waveform of the scatter are matched to those of the multi‑photon signal, the two interfere destructively, leaving only the desired single photon. Precise control of the laser’s incidence angle, beam profile, and timing is essential to achieve this cancellation. The study, published in Optica Quantum, provides a theoretical framework that could replace bulky optical filters with a software‑driven, hardware‑light approach.
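The cancellation idea can be illustrated with a deliberately simplified classical sketch. It is not the quantum-optical calculation from the study: it only adds two pulses with Gaussian envelopes and reports how much of the unwanted pulse's energy survives. The function names, the Gaussian pulse shape, and the parameters `amplitude_ratio`, `phase_offset`, and `width_ratio` are all assumptions made for illustration.

```python
import cmath
import math


def gaussian_envelope(t, width=1.0):
    """Toy Gaussian pulse envelope in arbitrary units (an assumed shape)."""
    return math.exp(-t * t / (2.0 * width * width))


def residual_energy(amplitude_ratio, phase_offset, width_ratio=1.0,
                    n_samples=2001, t_max=5.0):
    """Fraction of the unwanted pulse's energy left after adding a second pulse.

    amplitude_ratio: amplitude of the cancelling pulse relative to the unwanted one
    phase_offset:    relative phase in radians (pi means fully out of phase)
    width_ratio:     duration of the cancelling pulse relative to the unwanted one
    """
    unwanted_total = 0.0
    residual_total = 0.0
    for i in range(n_samples):
        # Sample time uniformly on [-t_max, t_max].
        t = -t_max + 2.0 * t_max * i / (n_samples - 1)
        unwanted = gaussian_envelope(t)
        cancelling = (amplitude_ratio
                      * gaussian_envelope(t, width=width_ratio)
                      * cmath.exp(1j * phase_offset))
        unwanted_total += abs(unwanted) ** 2
        residual_total += abs(unwanted + cancelling) ** 2
    return residual_total / unwanted_total


if __name__ == "__main__":
    # Matched amplitude and shape, opposite phase: near-total cancellation.
    print(residual_energy(1.0, math.pi))
    # A 10% amplitude mismatch leaves some residual energy.
    print(residual_energy(0.9, math.pi))
    # A shape (duration) mismatch also leaves residual energy.
    print(residual_energy(1.0, math.pi, width_ratio=1.2))
```

In this toy model the residual vanishes only when amplitude, phase, and pulse shape all match, which mirrors why the article stresses precise control of the laser's angle, beam profile, and timing.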

If experimentally confirmed, this “noise‑assisted purification” could accelerate the rollout of photonic quantum computers and quantum‑key‑distribution networks by delivering cleaner qubit streams without sacrificing speed. A purer photon source lowers error rates, reduces the overhead for error correction, and strengthens security against eavesdropping. The research, funded by the U.S. Department of Defense and university seed grants, underscores growing governmental interest in quantum‑grade photonics. Companies developing integrated photonic chips are likely to monitor these results closely, as the technique could offer a scalable path toward commercial quantum advantage.
