Shows QSVM Generalisation Bounds Under Local Depolarising Noise for NISQ Devices

February 5, 2026


Why It Matters

Accurate margin‑based bounds reveal the true limits of QSVMs on noisy hardware, guiding developers toward more reliable quantum machine‑learning solutions. This shifts industry practice from optimistic global noise assumptions to realistic local‑noise modeling.

Key Takeaways

- Local depolarising noise sharply reduces QSVM margins
- Global noise models overestimate QSVM performance
- Margin size predicts QSVM generalisation accuracy
- Analytical bounds validated on IBM quantum hardware
- Findings guide robust quantum ML algorithm design

Pulse Analysis

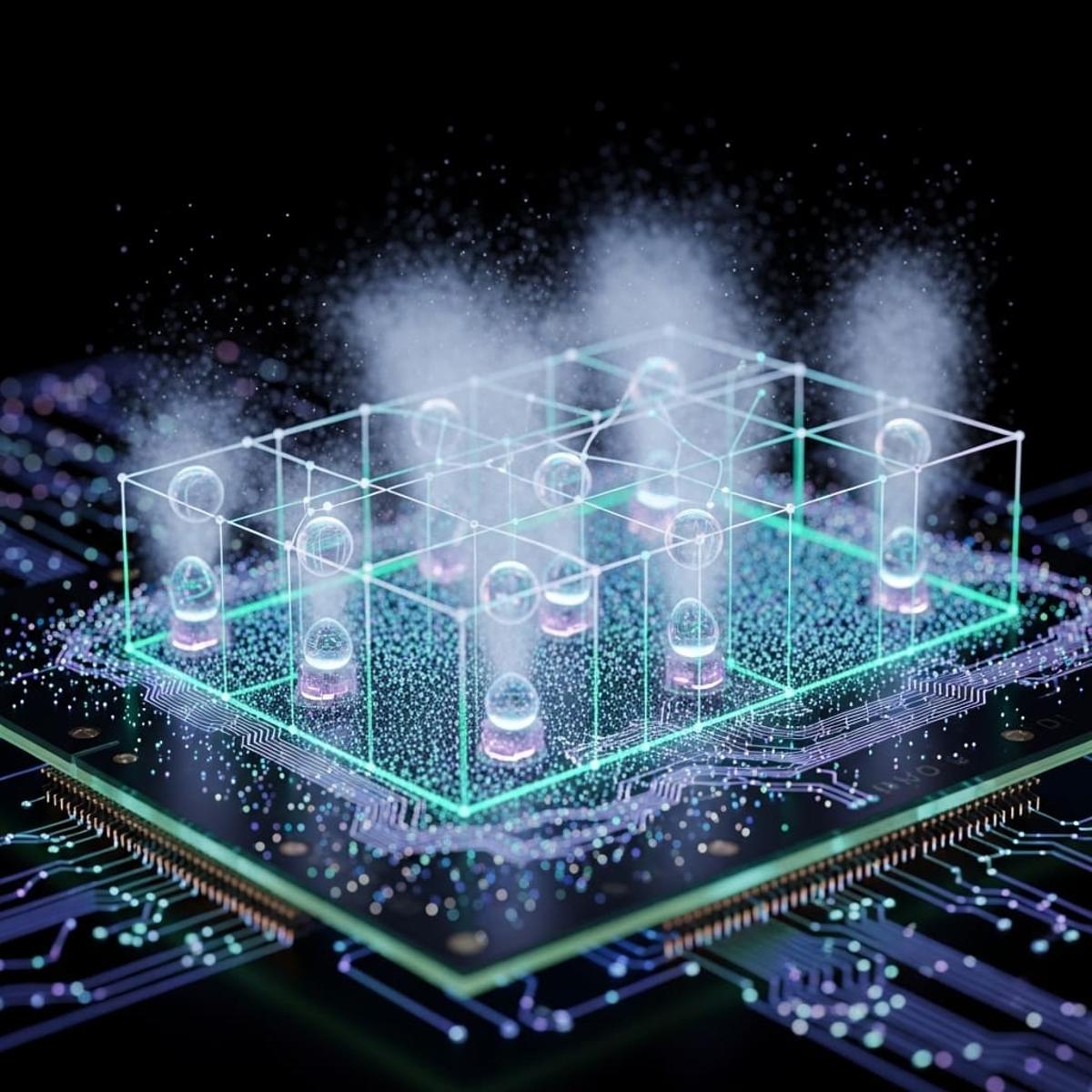

Quantum machine learning faces a fundamental hurdle: the fragility of qubits in Noisy Intermediate‑Scale Quantum (NISQ) devices. While quantum kernel methods promise access to exponentially large feature spaces, their practical advantage hinges on the model’s ability to generalise despite hardware imperfections. By focusing on the margin, a geometric measure of classifier confidence, researchers can translate quantum noise characteristics into concrete performance metrics, offering a bridge between theoretical promise and real‑world deployment.
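
For readers who want the margin in symbols, the standard textbook form for a kernel support vector machine is given below. This is the usual SVM notation, not a formula quoted from the study; the dual coefficients $\alpha_i$, labels $y_i \in \{-1, +1\}$, bias $b$ and feature states $|\phi(x)\rangle$ are assumptions of this sketch.

$$
K(x, x') = \left| \langle \phi(x) | \phi(x') \rangle \right|^2, \qquad
f(x) = \sum_i \alpha_i y_i K(x_i, x) + b,
$$

$$
\gamma = \frac{1}{\lVert w \rVert}, \qquad
\lVert w \rVert^2 = \sum_{i,j} \alpha_i \alpha_j y_i y_j K(x_i, x_j).
$$

Because the margin $\gamma$ is built entirely from kernel entries, any noise that distorts $K$ changes the margin directly.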

The study by Govender and Sinayskiy introduces rigorous upper and lower bounds that quantify how local depolarising noise, which acts independently on each qubit, degrades QSVM margins. Unlike the globally applied depolarising model that assumes uniform error across the entire system, the local model aligns closely with observed error patterns on IBM’s ibm_fez processor. Numerical simulations across diverse datasets, including the Breast Cancer benchmark, and hardware experiments validate the analytical predictions, demonstrating that margins remain a reliable indicator of predictive accuracy even as noise intensifies.
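
The difference between the two noise models can be illustrated with a small NumPy sketch. It is a toy example, not the study's method: it uses two qubits, applies the noise once to the final state, uses the same error probability `p` for both models, and looks at a kernel entry that would equal 1 without noise. The helper names (`embed`, `global_depolarise`, `local_depolarise`, `overlap`) are hypothetical and chosen only for this illustration.

```python
import numpy as np

# Single-qubit identity and Pauli matrices.
I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)


def embed(op, qubit, n_qubits):
    """Return `op` acting on `qubit` and the identity on all other qubits."""
    full = np.array([[1.0 + 0j]])
    for q in range(n_qubits):
        full = np.kron(full, op if q == qubit else I2)
    return full


def global_depolarise(rho, p):
    """Global model: mix the whole state with the maximally mixed state."""
    dim = rho.shape[0]
    return (1 - p) * rho + p * np.eye(dim) / dim


def local_depolarise(rho, p):
    """Local model: apply the single-qubit depolarising channel to each qubit."""
    n_qubits = rho.shape[0].bit_length() - 1  # dimension is 2**n_qubits
    for q in range(n_qubits):
        # Averaging over I, X, Y, Z on qubit q replaces that qubit by I/2.
        paulis = [embed(P, q, n_qubits) for P in (I2, X, Y, Z)]
        twirl = sum(P @ rho @ P.conj().T for P in paulis) / 4
        rho = (1 - p) * rho + p * twirl
    return rho


def overlap(rho, sigma):
    """Tr[rho sigma], the kernel-style overlap between two states."""
    return float(np.real(np.trace(rho @ sigma)))


# Ideal two-qubit state |00><00|; its overlap with itself is 1 without noise.
zero = np.zeros((4, 4), dtype=complex)
zero[0, 0] = 1.0

p = 0.2  # illustrative error probability, assumed equal in both models
k_global = overlap(global_depolarise(zero, p), zero)
k_local = overlap(local_depolarise(zero, p), zero)
print(f"global: {k_global:.2f}, local: {k_local:.2f}")
```

In this toy setting the global model leaves the overlap at about 0.85, while the local model reduces it to about 0.81, since each qubit contributes its own factor of (1 - p/2). The gap widens as qubits are added, which is consistent with the article's point that a global model can paint an overly optimistic picture.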

These insights have immediate implications for both academia and industry. Developers can now incorporate margin‑based diagnostics into quantum algorithm pipelines, enabling early detection of noise‑induced performance loss and informing error‑mitigation strategies such as circuit recompilation or qubit‑wise error correction. Moreover, the validated bounds provide a benchmark for future research exploring alternative noise models or extending the analysis to deeper quantum circuits. As quantum hardware matures, grounding algorithmic expectations in realistic noise assessments will be essential for unlocking scalable, trustworthy quantum AI applications.
