Cerebras Closes $1 Billion Funding Round at $23 Billion Valuation After Landing OpenAI Deal

February 4, 2026

Why It Matters

The funding and OpenAI deal cement Cerebras as a serious challenger to Nvidia in the high‑performance inference market, accelerating the shift toward wafer‑scale hardware for enterprise AI workloads.

Key Takeaways

- Cerebras raises over $1B at a $23B valuation.
- Funding led by Tiger Global; investors include AMD and Fidelity.
- OpenAI signs $10B deal for wafer-scale inference chips.
- WSE-3 enables models ten times larger than GPT‑4.
- Nvidia faces competition in the AI inference market.

Pulse Analysis

Cerebras’ latest funding round signals a pivotal moment for AI‑focused silicon startups. By securing more than a billion dollars from a roster of deep‑pocketed investors, the company not only validates its wafer‑scale architecture but also gains the financial runway to scale production and R&D. The involvement of strategic players like AMD and Fidelity underscores a broader industry belief that specialized inference hardware can deliver cost‑effective performance gains beyond traditional GPUs.

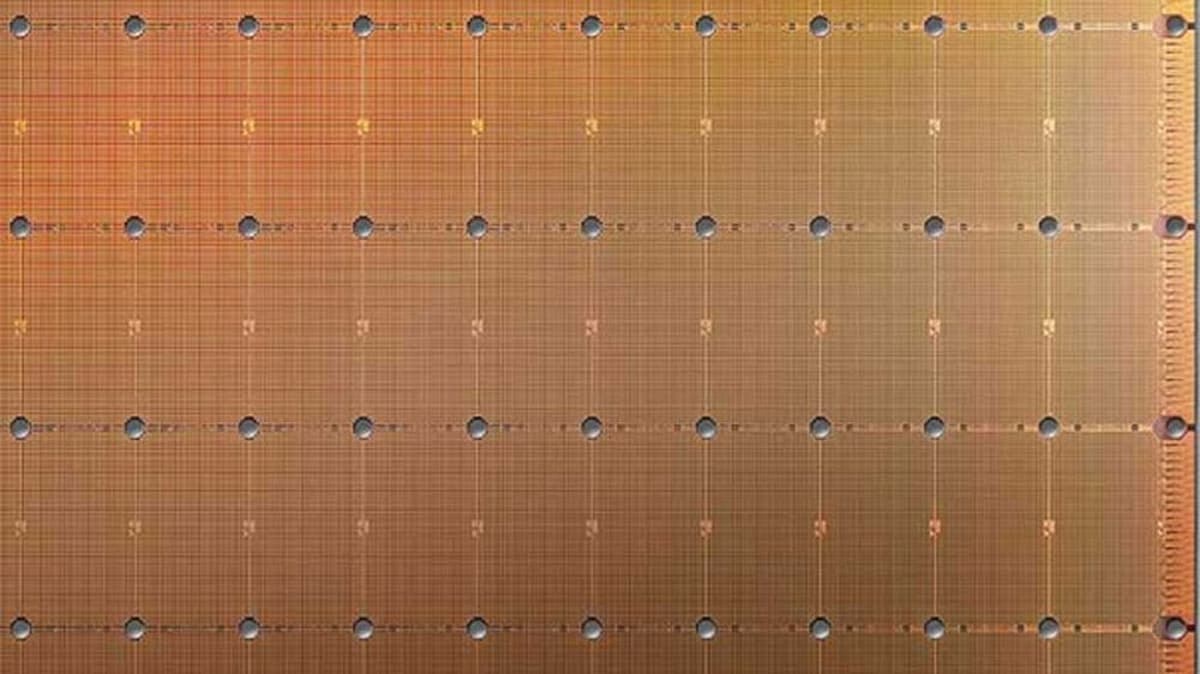

The $10 billion agreement with OpenAI highlights a growing impatience with existing inference solutions. OpenAI’s demand for 750 megawatts of compute over three years reflects a need for dramatically lower latency and higher throughput, especially for reasoning and code‑generation models. Cerebras’ Wafer Scale Engine 3, a single chip built from an entire silicon wafer, promises to cut inference times and support models far larger than those feasible on conventional GPUs, positioning the startup as a viable alternative to Nvidia’s dominant GPUs.

Beyond the immediate partnership, the deal reshapes the competitive landscape of AI hardware. Wafer‑scale chips could become the new standard for enterprises seeking to run massive language models in production, prompting cloud providers and AI labs to diversify their hardware stacks. As investors pour capital into firms like Cerebras, the market is likely to see intensified innovation, price competition, and a faster migration toward custom silicon solutions that prioritize inference efficiency over raw training power.
