Qontext Closes $2.7M Pre-Seed Round to Develop a Context Layer for AI

February 5, 2026

Why It Matters

Reliable contextual data is the missing foundation for scalable AI, and Qontext’s solution could dramatically improve automation ROI for enterprises. The investment signals strong market demand for AI‑ready data infrastructure.

Key Takeaways

- Qontext raised $2.7M pre‑seed led by HV Capital

- Platform adds real‑time context to AI deployments

- Targets fragmented data across enterprise systems

- Aims to accelerate AI automation for enterprises

- Funding fuels team expansion and reusable context layer

Pulse Analysis

The rapid adoption of generative AI has exposed a hidden bottleneck: the lack of reliable, up‑to‑date contextual data within enterprises. While large language models excel at pattern recognition, their outputs become unpredictable when the underlying customer, product, or policy information is scattered across legacy systems, siloed databases, and constantly changing spreadsheets. This fragmentation forces data engineers to rebuild context for each use case, slowing time‑to‑value and inflating operational costs. Investors and CIOs alike are therefore seeking infrastructure that can unify and continuously refresh this critical knowledge base.

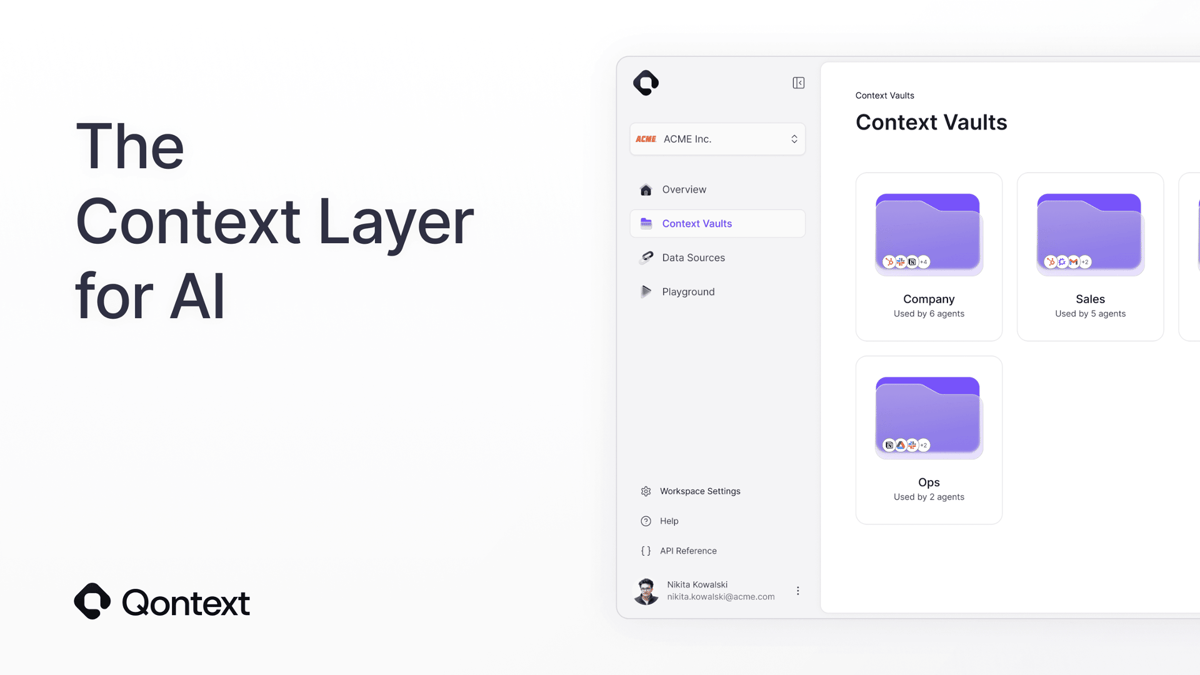

Qontext’s platform addresses that gap by providing an independent context layer that sits between AI models and enterprise data sources. Using connectors and access‑control mechanisms, it aggregates, normalizes, and curates information in real time, delivering a single source of truth to downstream agents in marketing, sales, or support workflows. The company’s founders, who come from Neo4j, Celonis, and n8n, draw on their experience with graph databases and automation to deliver scalability and fine‑grained permissions for both human users and AI bots. Early adopters report higher automation reliability and faster rollout cycles.
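To make the idea of a context layer concrete, the following is a minimal, hypothetical Python sketch of the general pattern described above: connectors pull records from separate systems, an access check filters them by role, and the surviving attributes are normalized and merged into one view. It is not Qontext's API; the names `Record`, `ContextLayer`, `register`, `get_context`, and the sample connectors are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class Record:
    """One piece of data from a source system (hypothetical shape)."""
    entity_id: str
    attributes: Dict[str, str]
    allowed_roles: Set[str]  # roles permitted to read this record


@dataclass
class ContextLayer:
    """Toy context layer: merges records from all registered connectors."""
    connectors: List[Callable[[], List[Record]]] = field(default_factory=list)

    def register(self, connector: Callable[[], List[Record]]) -> None:
        self.connectors.append(connector)

    def get_context(self, entity_id: str, role: str) -> Dict[str, str]:
        merged: Dict[str, str] = {}
        for fetch in self.connectors:
            for record in fetch():
                if record.entity_id != entity_id:
                    continue
                if role not in record.allowed_roles:
                    continue  # access control: skip records this role may not see
                for key, value in record.attributes.items():
                    # Normalize attribute names so sources agree on keys.
                    merged[key.strip().lower()] = value
        return merged


# Two made-up source systems standing in for a CRM and a billing tool.
def crm_connector() -> List[Record]:
    return [Record("cust-1", {"Name": "Acme GmbH", "Tier": "gold"}, {"sales", "support"})]


def billing_connector() -> List[Record]:
    return [Record("cust-1", {"Open Invoices": "2"}, {"sales"})]


layer = ContextLayer()
layer.register(crm_connector)
layer.register(billing_connector)

support_view = layer.get_context("cust-1", role="support")
sales_view = layer.get_context("cust-1", role="sales")
```

In this sketch a support agent sees only the CRM attributes, while a sales agent also sees the billing data, which illustrates how one shared layer can serve several workflows with different permissions. A production system would also need incremental refresh, conflict resolution, and auditing, none of which is shown here.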

The $2.7 million pre‑seed round, led by HV Capital and backed by seasoned AI infrastructure founders, gives Qontext the runway to expand its engineering team and build reusable context modules. As enterprises move from pilot projects to enterprise‑wide AI strategies, a standardized context layer could become as essential as cloud compute is today. Success would not only improve ROI on AI investments but could also set a new baseline for compliance and data governance, positioning Qontext as a foundational piece of the emerging AI stack.
