Google DeepMind Gives Gemini 3 Flash the Ability to Actively Explore Images Through Code

January 28, 2026

Why It Matters

Agentic Vision transforms multimodal AI from passive perception to interactive analysis, unlocking higher accuracy and new enterprise use cases. It gives Google a competitive edge in the race to more capable, tool‑augmented language models.

Key Takeaways

- Agentic Vision lets Gemini 3 Flash run Python on images.

- Think‑act‑observe loop improves vision benchmarks 5‑10%.

- Blueprint startup gains 5% accuracy using iterative inspection.

- Some tools need explicit prompts; not fully autonomous yet.

- Feature currently limited to Flash model, expansion planned.

Pulse Analysis

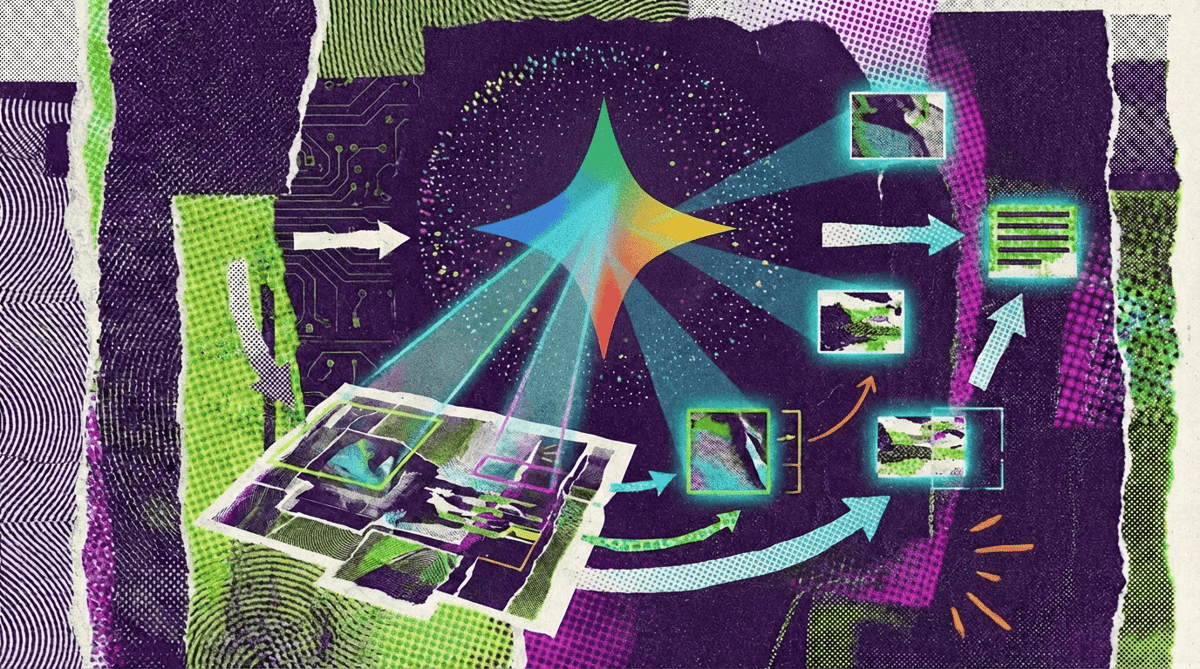

Google’s Agentic Vision marks a shift from static image processing to a dynamic, code‑driven workflow. Because Gemini 3 Flash can emit Python snippets that crop, rotate, or draw bounding boxes on an image, it can inspect the result of each operation and iteratively refine its understanding. This mirrors the emerging “tool‑use” paradigm seen in OpenAI’s o3 model, but DeepMind’s implementation embeds the loop directly into the model’s reasoning chain, delivering measurable benchmark gains of 5‑10 percent.
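The think‑act‑observe loop described above can be illustrated with a toy sketch. This is not DeepMind's implementation: the image is a plain grid of numbers, and `brightest_quadrant` and `explore` are hypothetical stand‑ins for the model deciding where to look and running a crop. It only shows the shape of the loop, in which each image operation produces a new view that informs the next decision.

```python
from typing import List, Tuple

Image = List[List[int]]            # toy grayscale image: rows of pixel values
Box = Tuple[int, int, int, int]    # (top, left, bottom, right), end-exclusive


def crop(image: Image, box: Box) -> Image:
    """Return the sub-image inside box."""
    top, left, bottom, right = box
    return [row[left:right] for row in image[top:bottom]]


def rotate_90_clockwise(image: Image) -> Image:
    """Rotate the image a quarter turn clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def brightest_quadrant(image: Image) -> Box:
    """Hypothetical 'think' step: pick the quadrant with the highest pixel sum."""
    h, w = len(image), len(image[0])
    mh, mw = h // 2, w // 2
    quadrants = [(0, 0, mh, mw), (0, mw, mh, w), (mh, 0, h, mw), (mh, mw, h, w)]
    return max(quadrants, key=lambda b: sum(map(sum, crop(image, b))))


def explore(image: Image, min_size: int = 2) -> Tuple[Image, List[Box]]:
    """Zoom into the most promising region until the view is min_size or smaller."""
    steps: List[Box] = []
    view = image
    while len(view) > min_size and len(view[0]) > min_size:
        box = brightest_quadrant(view)   # think: decide where to look next
        view = crop(view, box)           # act: run the image operation
        steps.append(box)                # observe: the new view drives the next round
    return view, steps


# Usage: an 8x8 blank image with a single bright pixel at row 6, column 5.
image = [[0] * 8 for _ in range(8)]
image[6][5] = 9
final_view, steps = explore(image)
```

In the real feature the "think" step is the model's own reasoning and the "act" step is Python it writes itself; the fixed heuristic here only keeps the example self‑contained.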

The business impact is immediate. In a pilot with PlanCheckSolver.com, the model’s ability to dissect high‑resolution blueprints piece‑by‑piece lifted compliance‑checking accuracy by five points, a tangible improvement for construction firms that rely on precise building‑code verification. Similar gains are evident in visual‑math tasks, where Gemini 3 Flash parses tables, runs calculations in a sandboxed Python environment, and returns charted results, reducing hallucinations. Developers can now integrate these capabilities via the Gemini API in AI Studio or Vertex AI, opening pathways for custom image‑analysis pipelines across sectors such as healthcare imaging, satellite reconnaissance, and e‑commerce visual search.
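To make the visual‑math point concrete, here is a minimal sketch of the kind of script such a sandbox might run once a table has been read off an image. The table contents, the pipe‑delimited format, and the function names are all assumptions for illustration; the idea is only that totals are computed by code rather than estimated by the model.

```python
from typing import Dict, List


def parse_table(text: str) -> Dict[str, List[float]]:
    """Parse lines of the form 'label | v1 | v2 ...' into label -> values."""
    table: Dict[str, List[float]] = {}
    for line in text.strip().splitlines():
        label, *cells = [cell.strip() for cell in line.split("|")]
        table[label] = [float(cell) for cell in cells]
    return table


def row_totals(table: Dict[str, List[float]]) -> Dict[str, float]:
    """Sum each row exactly instead of relying on an estimate."""
    return {label: sum(values) for label, values in table.items()}


def text_bar_chart(totals: Dict[str, float], width: int = 20) -> str:
    """Render totals as text bars scaled to the largest value (assumed positive)."""
    peak = max(totals.values())
    lines = []
    for label, total in totals.items():
        bar = "#" * round(width * total / peak)
        lines.append(f"{label:<8}{bar} {total:g}")
    return "\n".join(lines)


# Usage with made-up numbers standing in for a table extracted from an image.
extracted = """
north | 10 | 20
south | 5 | 10
"""
totals = row_totals(parse_table(extracted))
chart = text_bar_chart(totals)
print(chart)
```

Because the arithmetic is executed rather than predicted, any remaining error comes from reading the table off the image, not from the calculation itself.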

Despite the promise, Agentic Vision is not yet fully autonomous. Features like image rotation or complex visual reasoning still demand explicit user instructions, and the functionality is confined to the Flash model. Google’s roadmap includes expanding the toolset to other model sizes and adding web‑search and reverse‑image capabilities. As enterprises seek AI that can both see and act, DeepMind’s incremental rollout positions it as a strong contender against OpenAI and Anthropic, signaling a broader industry move toward integrated, code‑enabled multimodal agents.
