Unprecedented 3D Views of Sensory Cells Accelerate Hearing Research

January 28, 2026


Why It Matters

Rapid, automated 3D analysis of stereocilia images dramatically shortens research cycles, enabling faster validation of therapies for hearing loss and accelerating the development of precision treatments.

Key Takeaways

- VASCilia speeds stereocilia analysis fiftyfold.
- AI generates 3D visualizations of cochlear hair cells.
- The open‑source tool enables large‑scale quantitative hearing studies.
- It facilitates evaluation of gene‑therapy outcomes for deafness.

Pulse Analysis

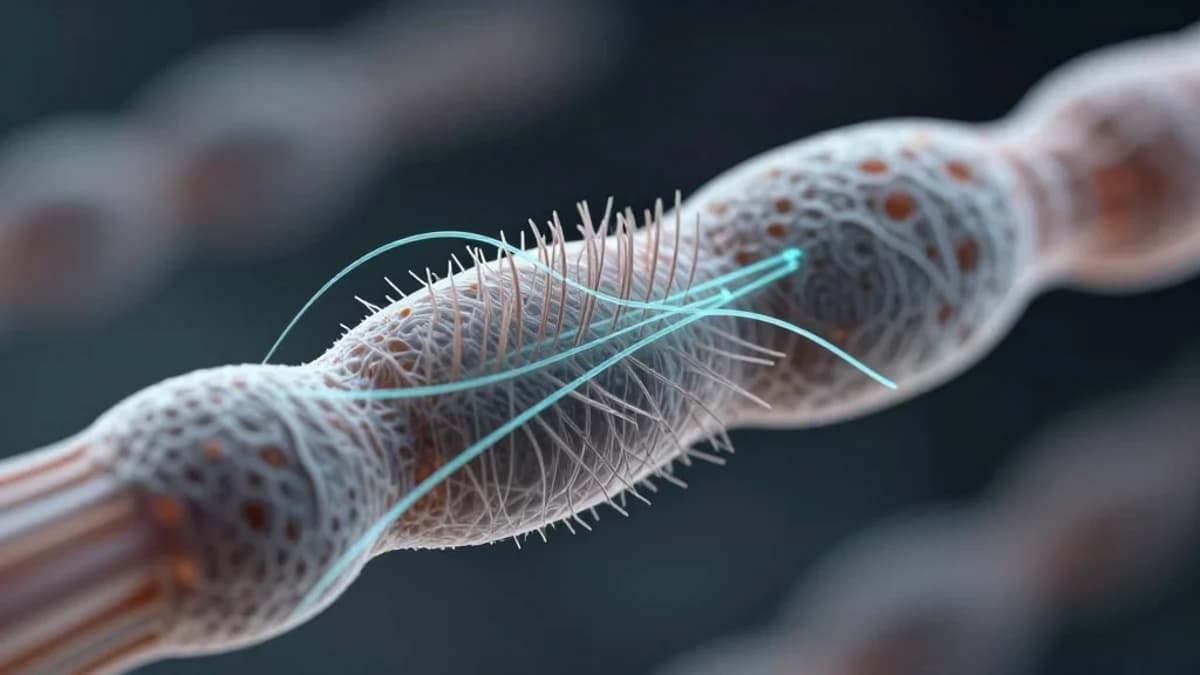

Analyzing the inner ear’s cochlear hair cells has long been a bottleneck for auditory science because traditional microscopy workflows require painstaking manual annotation. VASCilia’s deep‑learning pipeline turns raw confocal image stacks into fully rendered 3D models in minutes, sparing researchers years of manual labor and reducing human error. The speedup allows larger sample sizes and opens the door to longitudinal studies that track stereocilia morphology under varying acoustic stress, a critical step toward understanding the cellular basis of hearing loss.

Technically, VASCilia uses five specialized convolutional networks trained on expert‑labeled mouse datasets. The models segment individual stereocilia, measure their lengths, and infer bundle orientation, producing quantitative outputs that were previously unattainable at scale. By releasing the code under an open‑source license, the UC San Diego team invites the global hearing‑research community to adapt the framework to other species, imaging modalities, and molecular markers, fostering a shared atlas of cochlear architecture.
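As a rough illustration of what "length metrics" and "bundle orientation" can mean once stereocilia have been segmented, the Python sketch below computes both from 3D binary masks. It is not VASCilia's code: the function names, the (z, y, x) mask layout, the voxel‑size argument, and the use of principal‑axis (PCA) extent as a length proxy and principal‑axis angle in the x‑y plane as an orientation proxy are all assumptions made for illustration.

```python
import numpy as np


def stereocilium_length(mask, voxel_size):
    """Hypothetical helper: approximate the length of ONE segmented stereocilium.

    mask: 3D boolean array indexed (z, y, x), True on the stereocilium's voxels.
    voxel_size: physical voxel size along (z, y, x), e.g. in micrometres.
    Assumption: length = extent of the voxel cloud along its principal axis,
    measured between voxel centres (a straight-line proxy, not arc length).
    """
    # Voxel indices -> physical coordinates.
    coords = np.argwhere(mask).astype(float) * np.asarray(voxel_size, dtype=float)
    if len(coords) < 2:
        return 0.0
    centered = coords - coords.mean(axis=0)
    # The first right-singular vector is the direction of largest spread.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    projections = centered @ vt[0]
    return float(projections.max() - projections.min())


def bundle_orientation_deg(mask, voxel_size):
    """Hypothetical helper: angle in [0, 180) degrees of a bundle's long axis
    after projecting its voxels onto the x-y (image) plane.

    Assumption: orientation is taken as an undirected axis, so 0 and 180
    degrees are equivalent.
    """
    coords = np.argwhere(mask).astype(float) * np.asarray(voxel_size, dtype=float)
    if len(coords) < 2:
        return float("nan")
    xy = coords[:, [2, 1]]  # reorder to (x, y)
    centered = xy - xy.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    dx, dy = vt[0]
    # Fold the axis direction into [0, 180) so the sign of the SVD vector is irrelevant.
    return float(np.degrees(np.arctan2(dy, dx)) % 180.0)


if __name__ == "__main__":
    # A straight rod of 10 voxels along z, with 0.5 um z-spacing.
    rod = np.zeros((10, 5, 5), dtype=bool)
    rod[:, 2, 2] = True
    print(stereocilium_length(rod, (0.5, 0.1, 0.1)))
```

For a straight rod this gives the centre‑to‑centre extent (here 9 voxels × 0.5 µm). Real stereocilia can be curved, and the straight‑line proxy underestimates their length, so a production pipeline would more likely trace a skeleton; orientation in hair‑cell studies is also usually defined relative to anatomical axes rather than the raw image axes.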

Beyond basic science, the tool’s ability to rapidly benchmark gene‑therapy outcomes could speed clinical pipelines for congenital deafness and age‑related hearing loss. Quantifiable 3D phenotypes let biotech firms demonstrate efficacy to regulators with objective, reproducible data. As AI spreads through biomedical imaging, VASCilia shows how domain‑specific deep learning can translate into tangible therapeutic advances, positioning the hearing‑loss market for a new wave of precision interventions.
