Virtual Staining Advances: AI Uses Cell Context to Improve Imaging Accuracy

January 20, 2026

Why It Matters

The technology eliminates toxic fluorescent dyes, boosting live‑cell studies and accelerating biomedical research pipelines. Its scalability could reshape imaging workflows in pharma, biotech, and academic labs.

Key Takeaways

- AI adds cell context to improve virtual staining accuracy

- Reduces need for fluorescent dyes, preserving cell viability

- Enables labeling of rare events like cell division

- Paves way for foundation models for microscopy

- Could accelerate drug discovery and disease research

Pulse Analysis

Label‑free microscopy has long offered a non‑invasive window into cellular dynamics, but its contrast is limited, prompting researchers to overlay fluorescent markers. Early AI‑driven "in silico staining" models learned a direct pixel‑to‑pixel mapping from the label‑free image to the fluorescent one. They produced usable visualizations but stumbled on ambiguous structures and rare events. Without contextual awareness, these systems behaved like a dictionary that knows words but not sentences, leading to misclassifications that required manual correction or additional staining, both of which are costly and potentially harmful to cells.

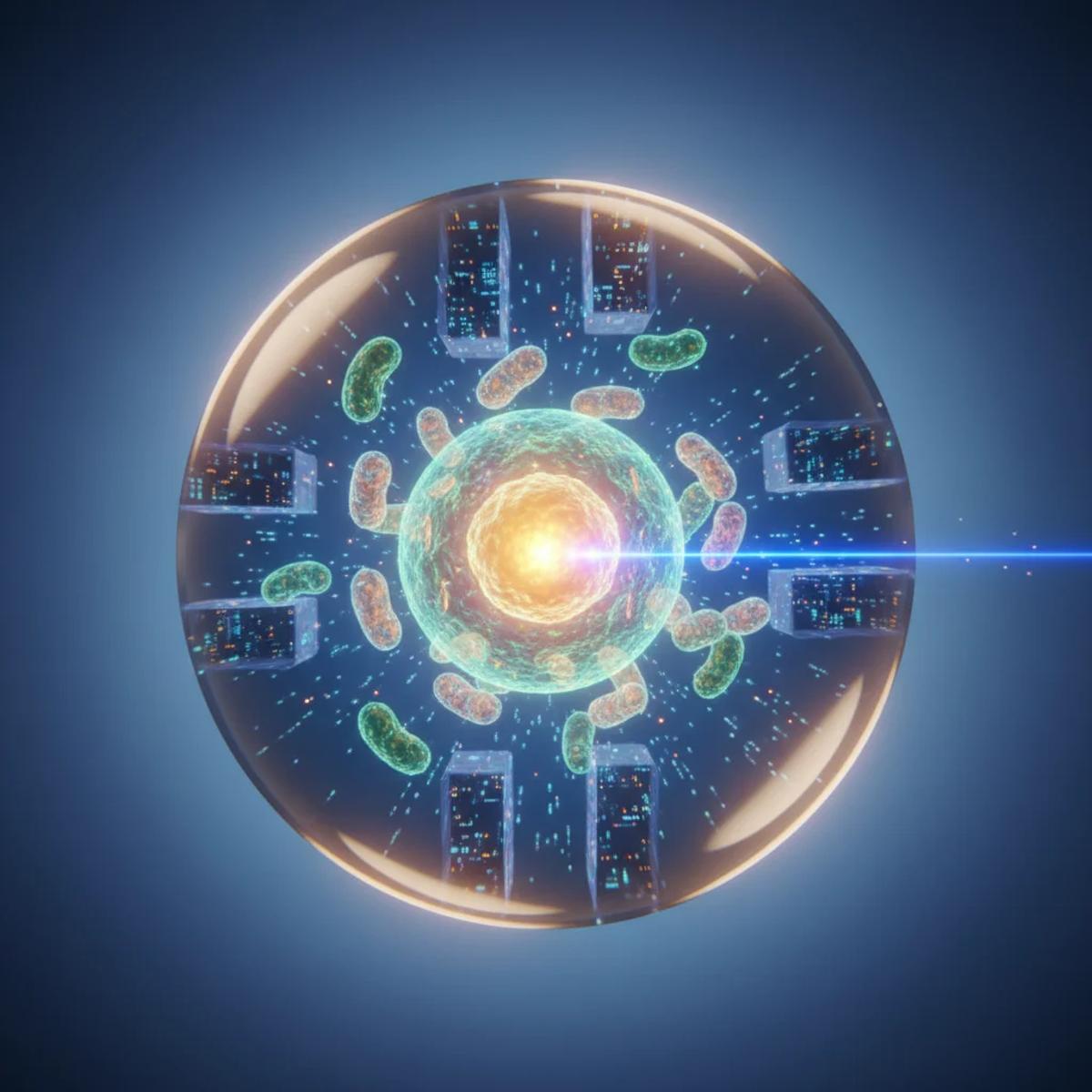

The breakthrough from Ben‑Gurion University injects biological context into the AI pipeline. By integrating metadata such as cell morphology, spatial relationships, and colony organization, the model interprets the image as a coherent scene rather than isolated pixels. This contextual reasoning sharply improves the fidelity of virtual stains, especially for rare processes like mitosis that differ markedly from the average cell. Laboratories can now generate high‑content, multi‑organelle visualizations without the phototoxicity and expense of traditional dyes, accelerating high‑throughput screening and longitudinal studies while maintaining physiological relevance.
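To make the contrast with pixel‑to‑pixel mapping concrete, here is a minimal toy sketch. It is not the researchers' model: the function names, the toy image, and the use of a neighborhood mean as a stand‑in for "context" are all assumptions made purely for illustration. It shows that two pixels with identical intensity are indistinguishable to a context‑free predictor, but become separable once information about their surroundings is added.

```python
from statistics import mean

def pixel_features(image, r, c):
    """Context-free input: only the pixel's own intensity (hypothetical helper)."""
    return [image[r][c]]

def context_features(image, r, c, radius=1):
    """Pixel intensity plus the mean of its neighbors.

    The neighborhood mean is an illustrative stand-in for context; the
    article describes richer signals (morphology, spatial relationships,
    colony organization) that this toy does not model.
    """
    rows, cols = len(image), len(image[0])
    neighbors = [
        image[i][j]
        for i in range(max(0, r - radius), min(rows, r + radius + 1))
        for j in range(max(0, c - radius), min(cols, c + radius + 1))
        if (i, j) != (r, c)
    ]
    return [image[r][c], mean(neighbors)]

# Toy label-free "image": pixels (1, 1) and (1, 3) share the intensity 0.5,
# but one sits in a bright region and the other in a dark one.
image = [
    [0.9, 0.9, 0.1, 0.1],
    [0.9, 0.5, 0.1, 0.5],
    [0.9, 0.9, 0.1, 0.1],
]

same_without_context = pixel_features(image, 1, 1) == pixel_features(image, 1, 3)
same_with_context = context_features(image, 1, 1) == context_features(image, 1, 3)

print("Identical without context:", same_without_context)
print("Identical with context:", same_with_context)
```

A real virtual‑staining model would learn such context from data rather than from a hand‑written feature, but the principle is the same: the prediction for a pixel depends on the scene around it, not only on the pixel itself.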

Looking ahead, the researchers envision scaling this approach into a foundation model—a cellular language model capable of understanding inputs from diverse microscopes, cell types, disease states, and pharmacological perturbations. Such a model would act as a universal translator, enabling seamless cross‑platform data integration and predictive analytics. For the biotech and pharmaceutical sectors, this could translate into faster target validation, more precise phenotypic screens, and reduced time‑to‑market for therapeutics. Investment interest is already rising, as the convergence of AI, computational biology, and imaging promises a new era of data‑rich, minimally invasive cellular research.
