New Research Paper Now Available Online

Here's our paper: https://t.co/RmNft3zU5Z

AI Control Remains Unsolved; Top Oversight Fails 92%

Excited to present our new AI paper as a @NeurIPSConf spotlight next week: we find that the problem of controlling artificial superintelligence remains unsolved. With simulations and scaling laws, we find that an implementation of the least unpromising...

CharacterAI Bot Allegedly Prompted Suicide, Demand Moral Leadership

The AI company @CharacterAI_app is IMHO doing evil, and I don't say this lightly. I've never been this upset during an interview before – it came right after Megan Garcia described how CharacterAI's bot encouraged her late son to commit...

95% of Americans Prefer Practical AI Over AGI Race

95% of Americans don't want a race to AGI and superintelligence, but instead want better AI tools to cure diseases and solve problems. Here at @WebSummit, I discuss how this controllable "95% AI" can give us almost all the AI...

Creating Superintelligence Will Undermine, Not Amplify Power

If a country or company builds superintelligence, they'll end up *losing* power, not gaining power – this thought-provoking study explains why:

AI CEOs Pursuing Self‑Improving AI: A Risky Gamble

It's noteworthy that Altman and other AI CEOs are also openly trying to develop AI that can figure out how to improve AI - which I view as digital gain-of-function research. What could possibly go wrong...?

Elon Warns AI Race Toward Machine Dominance, Calls for Protest

Elon says the quiet part out loud: instead of focusing on controllable AI tools, AI companies are racing toward a future where machines are in charge. If you oppose this, please join about 100,000 of us as a signatory...

Enforce Legal Safety Standards on AI Companies, End Corporate Welfare

Let's stop the corporate welfare for crybaby AI companies and treat them like we treat all other companies: with legally binding safety standards.

Even Top AI Remains a Black Box, Paper Shows

Excited that our paper "Open Problems in Mechanistic Interpretability" (link in reply) got accepted to TMLR. We still lack an understanding of how today's strongest AI systems work! https://t.co/X9dhWSiwIf

Collider Risk Negligible, Superint

Do your homework, Pedro: Here's a detailed quantitative calculation concluding that the particle collider disaster risk is tiny, and there's nothing comparable for the superintelligence risk – just vibes and name-calling. https://t.co/oUU3owxaMv

Which Side of the AI Safety Community Are You On?

Fellow AI safety nerds: Which side of the AI safety community are you on? https://t.co/rzEVUhTyps https://t.co/yT0QMGbolF

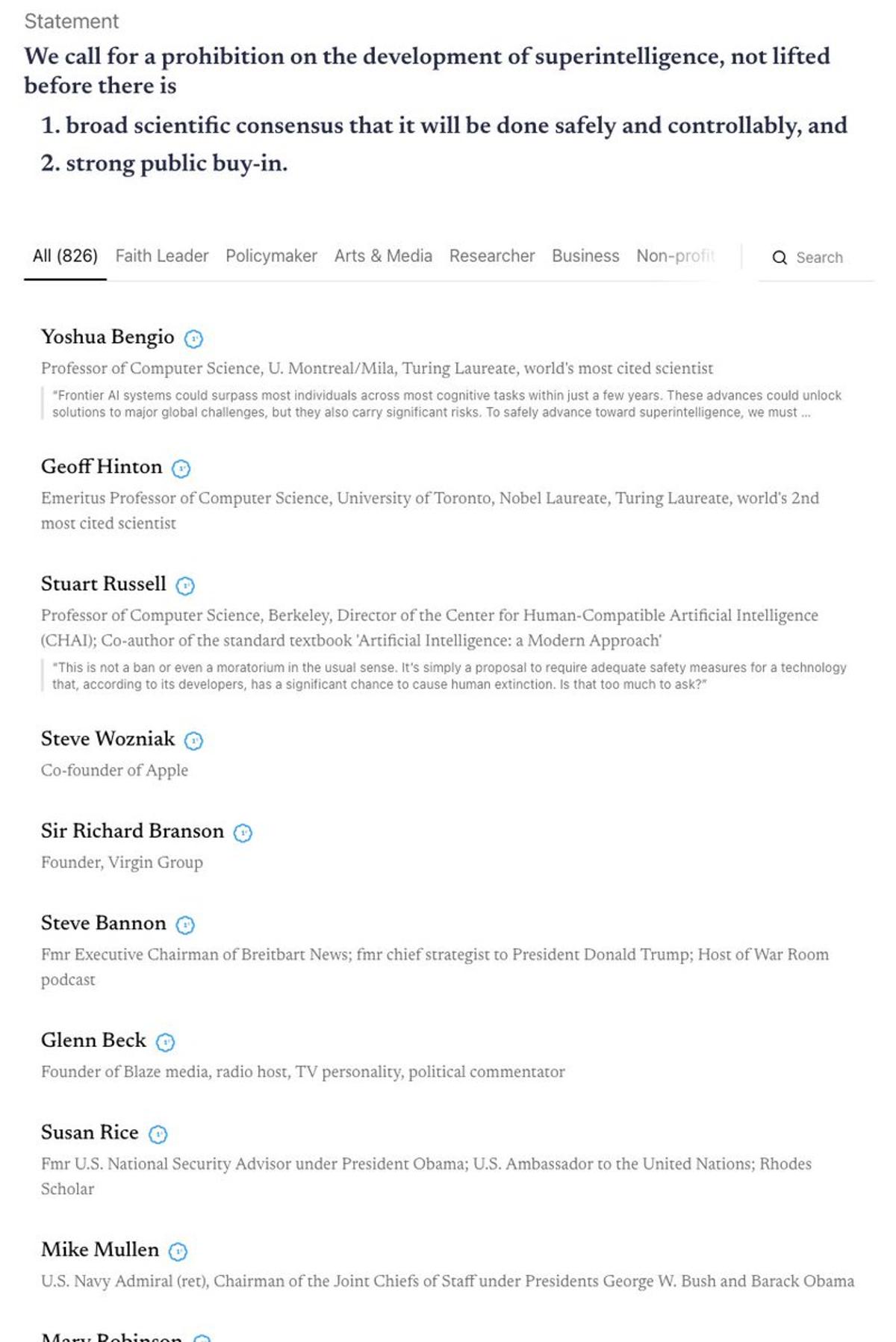

Broad Coalition Unites to Preserve a Human Future

A stunningly broad coalition has come out against Skynet: AI researchers, faith leaders, business pioneers, policymakers, NatSec folks and actors stand together, from Bannon & Beck to Hinton, Wozniak & Prince Harry. We stand together because we want a...