AI in Cardiovascular Imaging and Interventions: Boon or Bane?

February 20, 2026

Why It Matters

AI promises to improve diagnostic accuracy and procedural efficiency, but inconsistent performance and ethical concerns could hinder widespread clinical adoption. Ensuring robust validation is essential for patient safety and regulatory acceptance.

Key Takeaways

- AI predicts 10‑year mortality using GGT levels

- Intravascular imaging AI still limited by small datasets

- AI tools lack consistency across CCTA platforms

- Pre‑procedure AI planning improves workflow efficiency

Pulse Analysis

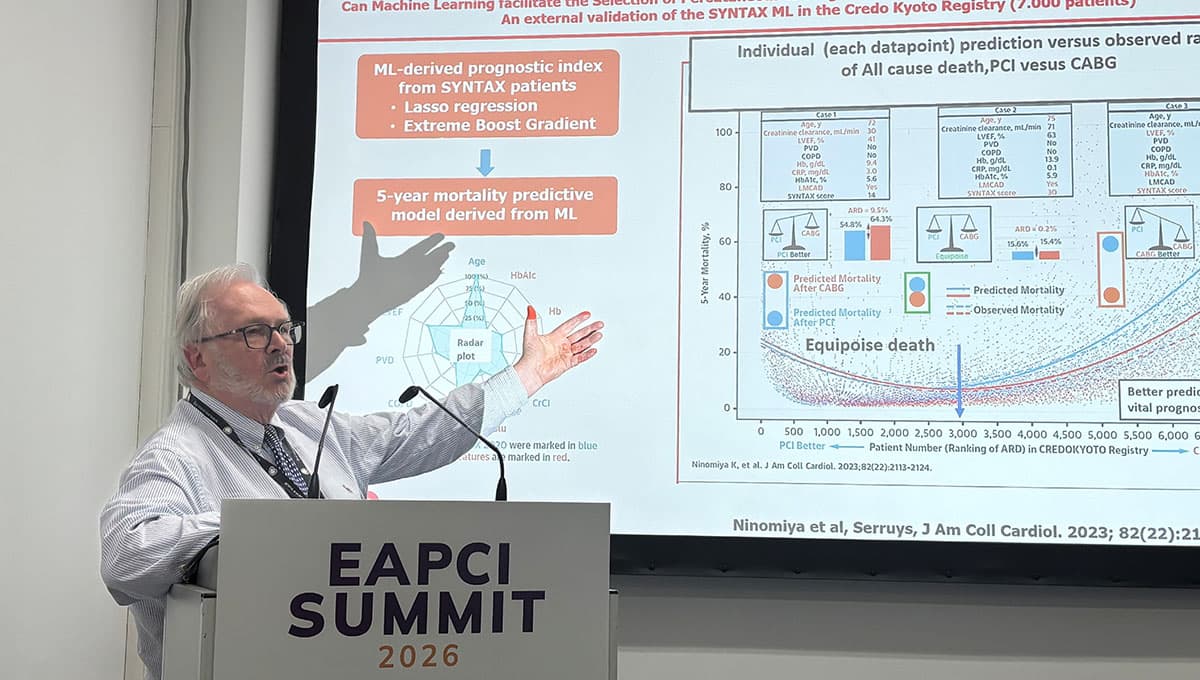

Artificial intelligence is rapidly moving from research labs into the cath lab, offering clinicians the ability to process massive imaging datasets in seconds. Machine‑learning models that integrate biochemical markers such as gamma‑glutamyl transferase with clinical variables can stratify long‑term mortality risk, as demonstrated in the SYNTAX and Japanese registry studies. This predictive power enables more informed patient selection for percutaneous coronary interventions, potentially reducing adverse events and optimizing resource allocation.

Intravascular imaging modalities like IVUS and OCT have benefited from AI‑driven segmentation and plaque characterization, yet the technology remains hampered by small, homogeneous training sets. Consequently, current algorithms can misclassify high‑risk lesions, limiting their reliability in everyday practice. Researchers stress that rigorous external validation across diverse populations is required before AI can replace expert interpretation, and that clinicians must retain ultimate decision‑making authority.

Beyond diagnostics, AI is reshaping procedural workflow. Pre‑procedure simulations of coronary anatomy, flow dynamics, and stent deployment allow heart teams to rehearse complex cases, while natural‑language tools streamline documentation and post‑procedure reporting. However, variability among commercial AI software, particularly in coronary CT angiography (CCTA) analysis, raises concerns about reproducibility and regulatory compliance. As professional societies push for standardized validation frameworks, the balance between innovation and patient safety will determine whether AI becomes a routine adjunct or a niche curiosity in cardiovascular care.
