Microsoft Research Unveils Rho-alpha Robotics Model Combining Vision, Language, and Touch

February 1, 2026

Why It Matters

By integrating touch sensing and training on synthetic data, Rho‑alpha extends robot autonomy beyond factories and could speed deployment in logistics, healthcare, and service sectors. Its Research Early Access Program invites industry and academic partners to collaborate, shortening data‑driven improvement cycles.

Key Takeaways

- Rho‑alpha merges vision, language, and tactile sensing.

- The model is trained primarily on synthetic data generated in Nvidia Isaac Sim.

- It enables bimanual (two‑handed) manipulation from natural‑language commands.

- A Research Early Access Program opens the model to researchers for iterative feedback.

- High‑fidelity simulation helps mitigate the scarcity of real‑world robotics data.

Pulse Analysis

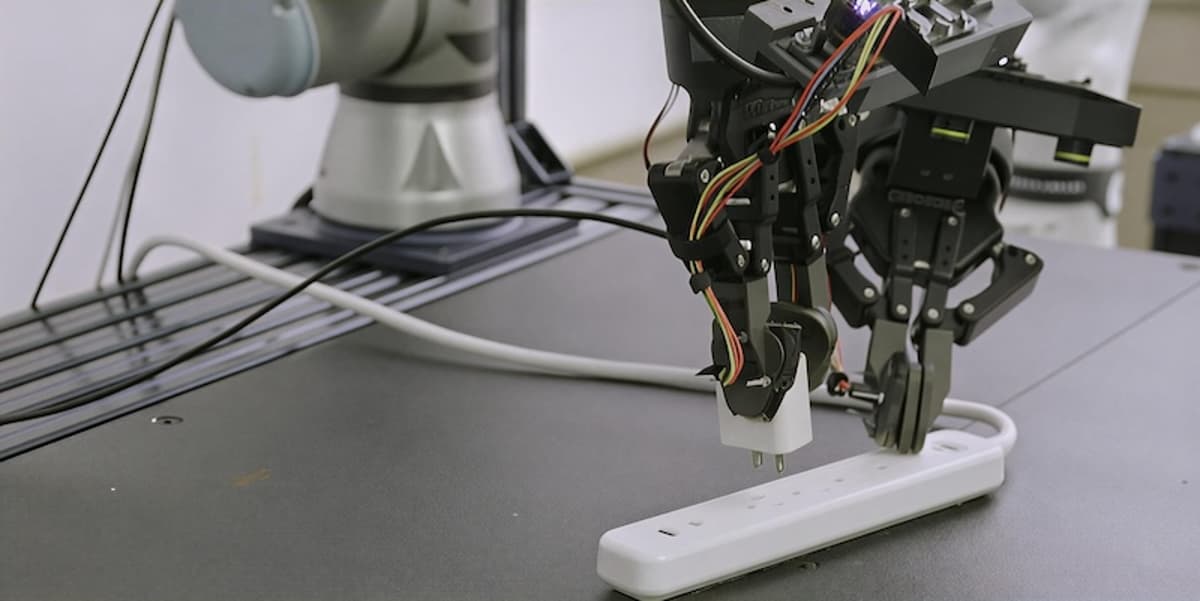

Robotics has long been confined to predictable, structured settings such as assembly lines, where precise programming can guarantee repeatable outcomes. Foundation models that fuse vision and language have begun to erode that barrier, but a critical missing piece has been the ability to sense and react to physical contact. Rho‑alpha addresses this gap by adding tactile perception to the vision‑language‑action (VLA) framework. Robots can then understand not only what they see and what they are told, but also how objects feel. That capability is essential for delicate manipulation in unstructured spaces.

The technical backbone of Rho‑alpha hinges on large‑scale synthetic data generation. Microsoft leveraged Nvidia's Isaac Sim on Azure to create high‑fidelity, physics‑accurate environments where reinforcement‑learning agents produce diverse manipulation demonstrations without costly teleoperation. This approach sidesteps the chronic shortage of real‑world robotics datasets, while still allowing the model to fine‑tune its policies through human‑in‑the‑loop feedback during deployment. By treating touch as an additional modality, the system can infer force and compliance, improving safety and precision in tasks ranging from assembly to assisted living.

From a business perspective, the Research Early Access Program creates a collaborative pipeline for enterprises and academia to experiment with Rho‑alpha, accelerating the feedback loop that drives model refinement. Companies eyeing warehouse automation, healthcare assistance, or consumer‑grade service robots can prototype with a system that keeps learning, which could reduce time‑to‑market and development costs. If more organizations adopt this "VLA+" approach (VLA extended with touch), competition is likely to shift toward solutions that blend perception, language, and haptic intelligence into more adaptable, autonomous robots.