NVIDIA Adds Cosmos Policy to Its World Foundation Models

February 19, 2026

Why It Matters

Cosmos Policy shows that large pretrained video models can be repurposed for robot control, cutting data and compute costs while boosting performance across simulation and real‑world tasks.

Key Takeaways

- Single diffusion model handles actions, predictions, and value estimation.

- Post‑training on demonstrations yields SOTA on LIBERO, RoboCasa.

- No separate perception or control networks required.

- Planning mode improves task success by ~12.5% in real tests.

- Leverages pretrained physics understanding, reducing training data needs.

Pulse Analysis

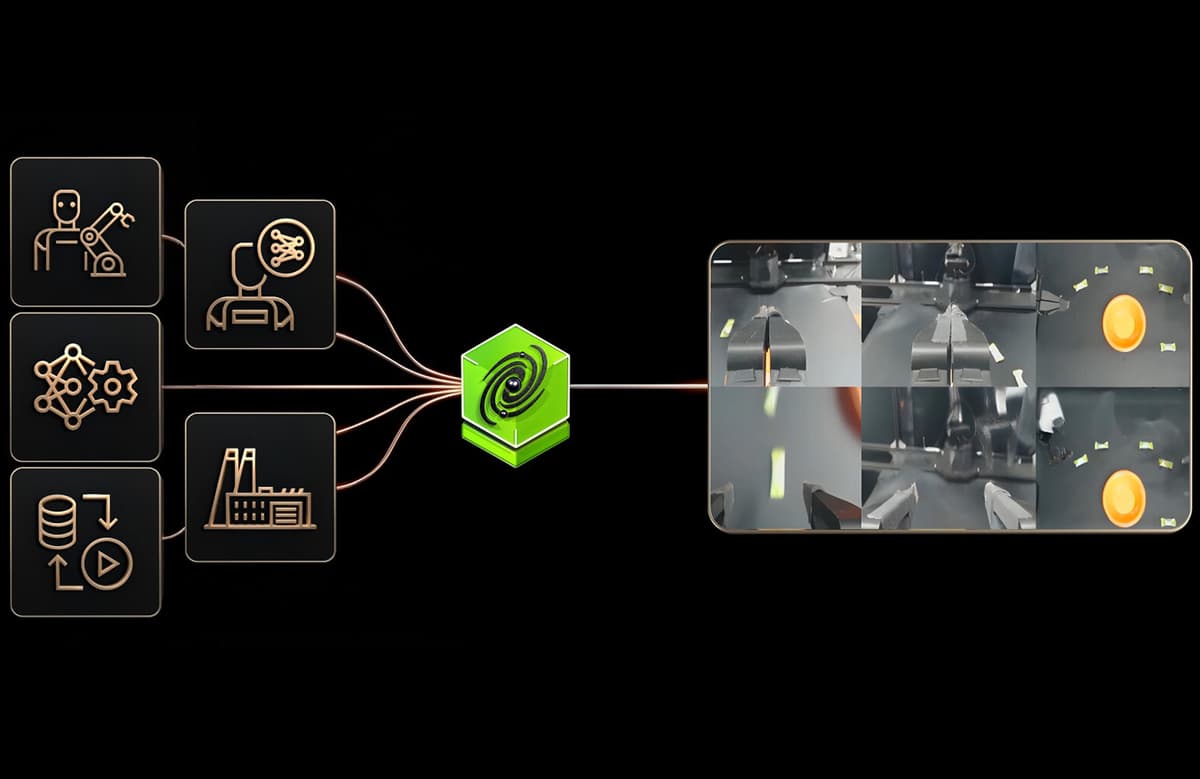

The robotics community has long sought a unified model that can perceive, plan, and act without the overhead of stitching together multiple neural networks. NVIDIA’s Cosmos family of world foundation models addresses this gap by training on massive video datasets to learn how scenes evolve physically. The diffusion‑based architecture captures temporal dynamics in a latent space, enabling the models to generate plausible future frames, a capability that carries over to predicting robot motions and their outcomes.

Cosmos Policy extends this foundation by treating robot actions, state observations, and success scores as additional latent frames, effectively turning control into a video generation problem. A single stage of post‑training on a modest set of robot demonstrations lets the model inherit the pretrained understanding of gravity, object interactions, and multi‑modal cues. The result is a unified policy that can output action sequences, forecast subsequent observations, and estimate values, all within the same network, which simplifies deployment and reduces the need for separate perception or planning modules.
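To make the "actions as latent frames" idea concrete, here is a minimal sketch of one way a non-image quantity could be packed into a frame-sized buffer and read back out. The tiling scheme, the function names, and the 16-element frame size are assumptions chosen for illustration; the article does not describe NVIDIA's actual encoding.

```python
from typing import List


def encode_as_latent_frame(values: List[float], frame_size: int) -> List[float]:
    """Tile a flat vector until it fills a frame-sized buffer.

    Hypothetical encoding: repeating the values gives a generative model
    many redundant copies of each number to produce.
    """
    if not values or len(values) > frame_size:
        raise ValueError("values must be non-empty and fit in one frame")
    return [values[i % len(values)] for i in range(frame_size)]


def decode_from_latent_frame(frame: List[float], n_values: int) -> List[float]:
    """Recover the vector by averaging every tiled copy of each entry."""
    decoded = []
    for i in range(n_values):
        copies = frame[i::n_values]  # all positions that hold entry i
        decoded.append(sum(copies) / len(copies))
    return decoded


# Illustrative numbers only: a chunk of 2 time steps x 3 action dimensions,
# flattened, plus a single scalar success score.
FRAME_SIZE = 16  # stands in for a flattened C x H x W latent frame
action_chunk = [0.1, -0.2, 0.3, 0.0, 0.5, -1.0]
success_score = [0.8]

action_frame = encode_as_latent_frame(action_chunk, FRAME_SIZE)
value_frame = encode_as_latent_frame(success_score, FRAME_SIZE)

# In this sketch the sequence the model would generate mixes frame types:
# [observation latents..., action_frame, future observation latents..., value_frame]
recovered_actions = decode_from_latent_frame(action_frame, len(action_chunk))
recovered_score = decode_from_latent_frame(value_frame, 1)[0]
```

Averaging the copies on decode is a design choice of this sketch: if a generated frame is slightly noisy, the redundancy smooths out per-element error.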

For industry, the approach promises faster development cycles for autonomous systems, from warehouse manipulators to autonomous vehicles. By building on a pretrained video model, developers can achieve higher success rates with fewer real‑world demonstrations, lowering data collection costs and shortening time‑to‑market. The ability to switch between direct execution and model‑based planning also offers flexibility for safety‑critical applications, which could give Cosmos Policy a competitive edge in the rapidly evolving AI‑driven robotics landscape.
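The difference between direct execution and model‑based planning can be sketched in a few lines. The example below assumes planning means "sample several candidate action sequences, score each with the model's own predicted future and value estimate, and keep the best", a common best‑of‑N scheme. The article does not confirm that Cosmos Policy plans this way, and every function name here is a hypothetical stand‑in for a call into the model.

```python
from typing import Callable, List, Tuple

Action = List[float]
State = List[float]


def direct_execution(sample_actions: Callable[[], Action]) -> Action:
    """Direct mode: take the first action sequence the policy proposes."""
    return sample_actions()


def best_of_n_planning(
    sample_actions: Callable[[], Action],
    predict_future: Callable[[Action], State],
    estimate_value: Callable[[State], float],
    n_candidates: int = 8,
) -> Tuple[Action, float]:
    """Planning mode (assumed best-of-N): propose, imagine, score, select."""
    if n_candidates < 1:
        raise ValueError("n_candidates must be at least 1")
    best_actions: Action = []
    best_value = float("-inf")
    for _ in range(n_candidates):
        actions = sample_actions()             # policy proposal
        future = predict_future(actions)       # imagined outcome
        value = estimate_value(future)         # predicted success score
        if value > best_value:
            best_actions, best_value = actions, value
    return best_actions, best_value


# Toy stand-ins so the sketch runs: a 1-D "robot" whose goal is position 0.5.
_proposals = iter([[0.0], [0.4], [0.9]])
chosen, score = best_of_n_planning(
    sample_actions=lambda: next(_proposals),
    predict_future=lambda a: [a[0]],                # action moves straight to a[0]
    estimate_value=lambda s: -(s[0] - 0.5) ** 2,    # closer to 0.5 is better
    n_candidates=3,
)
```

Planning costs roughly `n_candidates` times as many model calls as direct execution, which is the trade‑off a safety‑critical deployment would weigh against the higher success rate.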
