Why It Matters

The technology lowers the expertise barrier for product design, opening rapid, sustainable prototyping to non‑engineers and reshaping localized manufacturing.

Key Takeaways

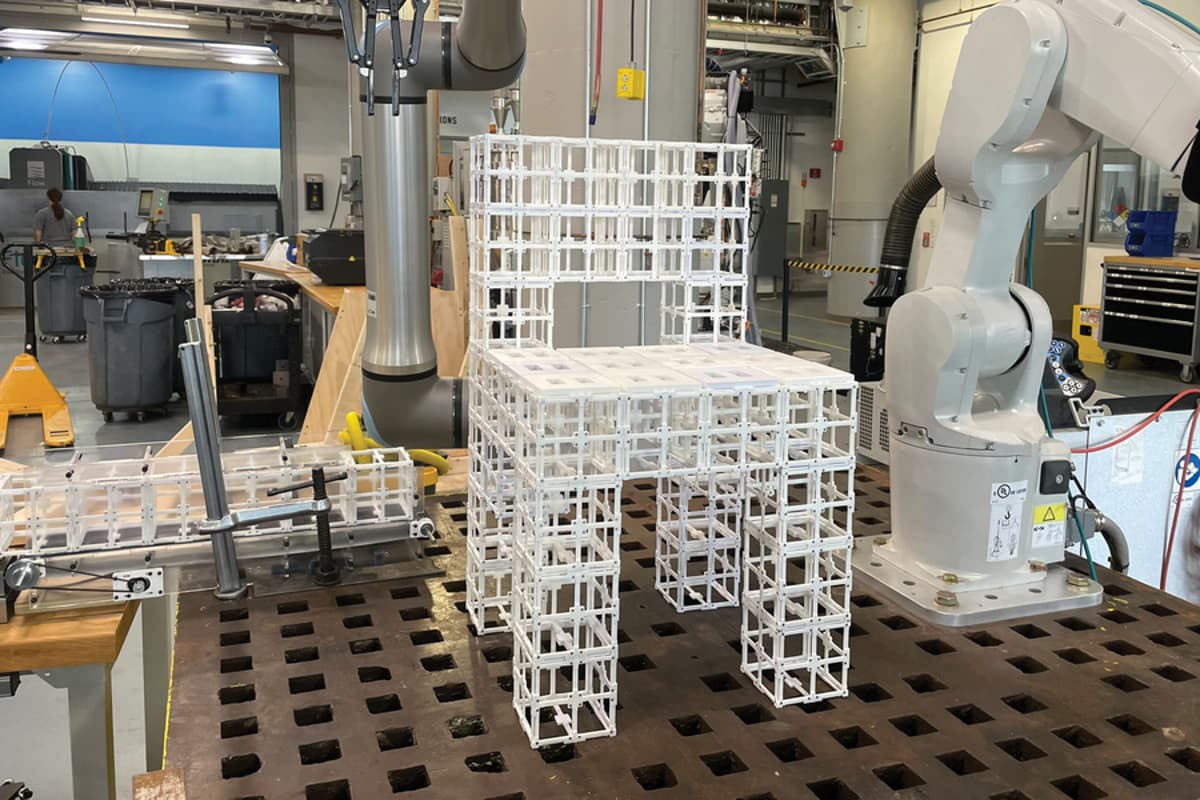

- Text prompts generate 3D designs via dual generative AI models

- Vision‑language model assigns panels based on function and geometry

- Robot assembles objects from reusable prefabricated parts

- Users refine designs through a feedback loop; study participants preferred the system's outputs >90% of the time

- System promises sustainable, on‑demand manufacturing for complex items

Pulse Analysis

The convergence of generative AI and robotics is redefining how physical products are conceived. By interpreting plain‑language requests, the MIT system bypasses traditional CAD workflows, which often demand specialized training. Its dual‑model architecture first generates a coherent 3D mesh, then uses a vision‑language model to allocate functional components, creating a continuous pipeline from idea to physical prototype. This approach accelerates design cycles and also democratizes creation, allowing designers, hobbyists, and small businesses to realize concepts without deep engineering expertise.
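
The two‑stage flow described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the MIT team's code: `generate_mesh` and `assign_panels` are hypothetical stand‑ins for the text‑to‑3D model and the vision‑language model, the toy chair is hard‑coded, and the panel rule (contact surfaces of at least 0.05 m²) is an assumed heuristic substituting for the model's judgment about function and geometry.

```python
from dataclasses import dataclass, field


@dataclass
class Face:
    """One surface of a generated mesh (toy representation)."""
    part: str     # functional region, e.g. "seat", "backrest", "leg"
    area: float   # surface area in square metres (assumed units)


@dataclass
class Design:
    prompt: str
    faces: list[Face]
    panel_faces: list[int] = field(default_factory=list)  # indices of faces covered by a panel


def generate_mesh(prompt: str) -> list[Face]:
    """Hypothetical stand-in for stage 1 (text-to-3D generative model).

    Ignores the prompt and returns a fixed toy chair; a real system
    would call a generative model here.
    """
    return [
        Face("seat", 0.20),
        Face("backrest", 0.15),
        Face("leg", 0.01),
        Face("leg", 0.01),
        Face("armrest", 0.03),
    ]


# Parts a person sits on or leans against: an assumed proxy for "function".
CONTACT_PARTS = {"seat", "backrest", "armrest"}


def assign_panels(faces: list[Face], min_area: float = 0.05) -> list[int]:
    """Hypothetical stand-in for stage 2 (vision-language model).

    A face gets a panel if it belongs to a contact part (function) and is
    large enough to hold one (geometry). The threshold is an assumption.
    """
    return [
        i for i, f in enumerate(faces)
        if f.part in CONTACT_PARTS and f.area >= min_area
    ]


def design_from_prompt(prompt: str) -> Design:
    """Run both stages: prompt -> mesh -> panel assignment."""
    faces = generate_mesh(prompt)
    return Design(prompt, faces, assign_panels(faces))


if __name__ == "__main__":
    d = design_from_prompt("make me a chair")
    for i in d.panel_faces:
        print(d.faces[i].part)  # prints "seat", then "backrest"
```

The legs fail the function test and the small armrest fails the area test, so only the seat and backrest receive panels. In the described system, that decision would come from the vision‑language model rather than a fixed rule.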

A standout feature is the human‑in‑the‑loop mechanism that lets users iteratively refine outputs. Follow‑up prompts such as “only use panels on the backrest” are processed immediately, producing updated geometry and assembly instructions. In the user study, participants preferred the system's outputs more than 90% of the time over rule‑based or random panel placement, suggesting it better meets aesthetic and functional expectations. Moreover, building from prefabricated, reusable components reduces material waste and simplifies logistics, in line with circular‑economy principles.
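
One round of the feedback loop might look like the sketch below, assuming the follow‑up prompt has already been turned into a structured constraint (here a hypothetical `{"only": {...}}` or `{"exclude": {...}}` dictionary; in the system itself, interpreting the natural‑language request is the model's job). `Face`, `assign_panels`, and `refine` are illustrative names, and the 0.05 m² area threshold is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Face:
    """One surface of a generated mesh (toy representation)."""
    part: str     # functional region, e.g. "seat" or "backrest"
    area: float   # surface area in square metres (assumed units)


def assign_panels(faces: list[Face], allowed_parts: set[str],
                  min_area: float = 0.05) -> list[int]:
    """Indices of faces that receive a panel under the current constraints."""
    return [i for i, f in enumerate(faces)
            if f.part in allowed_parts and f.area >= min_area]


def refine(faces: list[Face], allowed_parts: set[str],
           feedback: dict[str, set[str]]) -> tuple[set[str], list[int]]:
    """Apply one round of user feedback and recompute panel placement.

    Hypothetical feedback format: {"only": {"backrest"}} stands for
    "only use panels on the backrest"; {"exclude": {...}} removes parts.
    """
    allowed = set(allowed_parts)
    if "only" in feedback:
        allowed = set(feedback["only"])      # restrict panels to the named parts
    if "exclude" in feedback:
        allowed -= feedback["exclude"]       # drop parts the user rejected
    return allowed, assign_panels(faces, allowed)


if __name__ == "__main__":
    chair = [Face("seat", 0.20), Face("backrest", 0.15), Face("leg", 0.01)]
    allowed = {"seat", "backrest"}
    print(assign_panels(chair, allowed))     # [0, 1]
    allowed, panels = refine(chair, allowed, {"only": {"backrest"}})
    print(allowed, panels)                   # {'backrest'} [1]
```

Keeping the constraint separate from the geometry means a refinement in this toy version only recomputes placement; in the described system, a prompt change can also produce updated geometry.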

Looking ahead, the platform could transform sectors that rely on rapid, custom fabrication, from aerospace components to architectural installations. Expanding the library of modular parts to include hinges, gears, and a wider range of materials would let the system handle increasingly complex designs. In the longer term, household‑level manufacturing could become plausible, cutting shipping costs and carbon footprints. As generative AI models continue to improve, their integration with physical assembly robots may become a cornerstone of on‑demand, sustainable production ecosystems.

“Robot, make me a chair”
