The $100B Question: AI’s Appetite for Compute Is Rewriting the Rules of Tech

Why It Matters

The economics of AI compute will determine which companies can sustain profitability and dominate the market, influencing investor capital allocation across the tech sector. Firms that lower hardware costs or secure high‑margin enterprise contracts will capture the $100 billion upside.

Key Takeaways

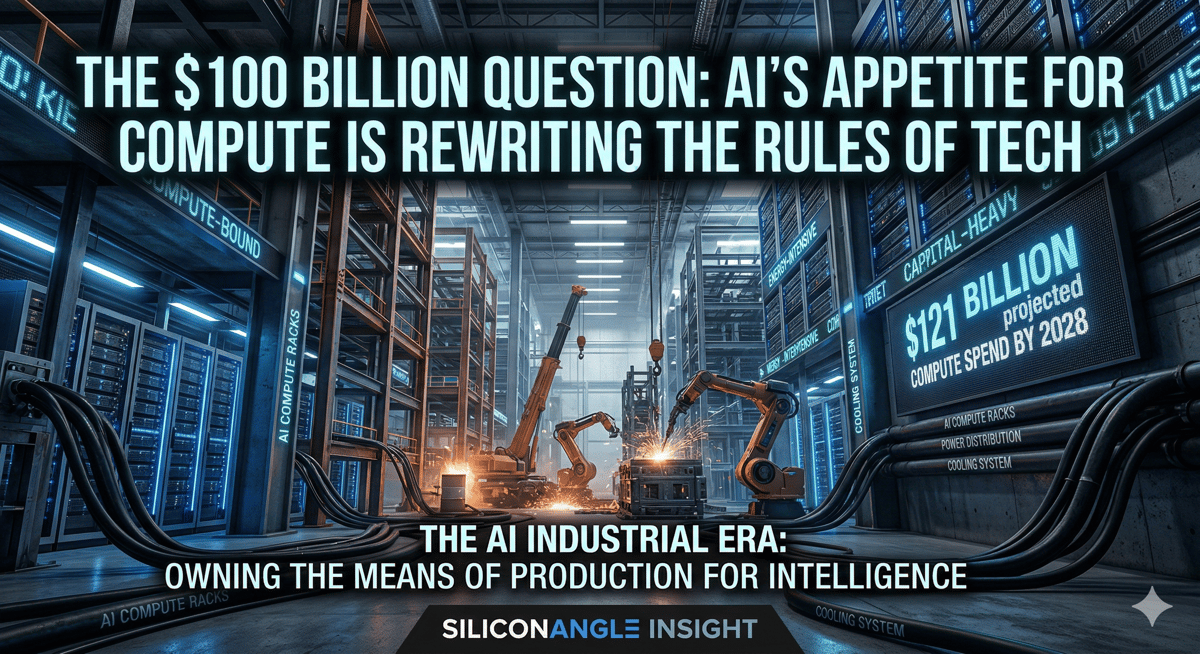

- OpenAI's compute spend is projected to reach $121 B by 2028.

- Inference will represent 65% of AI compute by 2026.

- OpenAI is valued near $300 B; Anthropic at $183 B.

- Training costs are now classified as cost of goods sold.

- Hardware efficiency will dictate AI profit margins.

Pulse Analysis

The surge in artificial‑intelligence workloads is reshaping the technology landscape much like the rise of cloud computing did a decade ago. Companies now view large language models as a form of infrastructure‑as‑a‑service, requiring massive data‑center capacity, specialized chips, and relentless electricity consumption. Hyperscalers such as Amazon, Microsoft and Google are already earmarking over $650 billion in capital expenditures through 2026 to support this demand, compressing years of build‑out into a few rapid cycles.

Financial disclosures from OpenAI and Anthropic expose the new cost structure: training expenses are no longer a research line item but a cost of goods sold, while inference (running the models for end users) will account for 65% of total compute by 2026. OpenAI’s projected $121 billion compute spend and Anthropic’s $7 billion revenue illustrate a market where high‑margin enterprise APIs coexist with massive consumer platforms. This dual model creates divergent margin profiles: enterprise contracts offer predictable cash flow, whereas consumer‑driven services rely on data collection and scale to offset steep inference bills.

The industry’s future hinges on hardware efficiency breakthroughs. Advances in Nvidia’s Blackwell GPUs or Google’s next‑gen TPUs could lower per‑inference costs faster than demand grows, preserving margins and rewarding early investors. Conversely, stagnant efficiency would compress profits and accelerate consolidation, favoring firms that can bundle compute, distribution and monetization into a single, cost‑effective stack. Investors and executives should therefore monitor chip roadmaps, data‑center energy strategies, and the balance between training and inference spend to gauge who will answer the $100 billion question.
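The race between efficiency gains and demand growth comes down to simple compounding, which a minimal sketch can make concrete. The function name and every rate below are hypothetical, chosen only for illustration; none of the figures come from the disclosures discussed above. The sketch assumes inference volume grows at a fixed yearly rate while the cost per inference falls at a fixed yearly rate, and tracks the total inference bill relative to year 0.

```python
def compute_bill_index(demand_growth, cost_decline, years):
    """Return the total inference bill per year, indexed to 1.0 in year 0.

    demand_growth: yearly growth in inference volume (0.5 means +50% per year)
    cost_decline:  yearly drop in cost per inference (0.4 means -40% per year)
    """
    # Each year, volume scales by (1 + growth) and unit cost by (1 - decline).
    yearly_factor = (1 + demand_growth) * (1 - cost_decline)
    return [yearly_factor ** year for year in range(years + 1)]


# Hypothetical scenarios for illustration only (not taken from the article).
efficient = compute_bill_index(demand_growth=0.5, cost_decline=0.4, years=3)
stagnant = compute_bill_index(demand_growth=0.5, cost_decline=0.1, years=3)

for year, (fast, slow) in enumerate(zip(efficient, stagnant)):
    print(f"year {year}: efficient hardware={fast:.2f}  stagnant hardware={slow:.2f}")
```

Under these assumptions the bill shrinks only when the yearly factor is below 1, that is, when the cost decline exceeds growth / (1 + growth). With 50% yearly demand growth, unit costs would have to fall by more than a third each year just to keep the total bill flat.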
