The Malleable Mind: Context Accumulation Drives LLM’s Belief Drift

Researchers demonstrate that large language models (LLMs) experience measurable belief drift when they accumulate context over long interactions, even without adversarial prompts or weight updates. Experiments with Grok‑4 and other high‑capacity models show that exposure to conservative or progressive texts shifts the model’s stance in a consistent direction. More capable models exhibit larger drifts, and a gap emerges between stated beliefs and actual behavior after prolonged context exposure. The findings challenge the assumption that LLM reliability is static across sessions.

Forthcoming Machine Learning and AI Seminars: March 2026 Edition

AIhub has published a comprehensive calendar of free virtual machine‑learning and AI seminars scheduled from 2 March to 30 April 2026. The lineup features more than twenty talks covering explainable AI, generative screening, bias mitigation, robust optimisation, and emerging applications such as AI‑driven...

AIhub Monthly Digest: February 2026 – Collective Decision Making, Multi-Modal Learning, and Governing the Rise of Interactive AI

AIhub’s February 2026 digest surveys a spectrum of AI breakthroughs, from Kate Larson’s work on multi‑agent systems that enable collective decision‑making to SLAC, a simulation‑pretrained latent action space that makes whole‑body reinforcement learning feasible for high‑degree‑of‑freedom robots. It highlights neurosymbolic Markov...

The Good Robot Podcast: The Role of Designers in AI Ethics with Tomasz Hollanek

In this episode, research fellow Tomasz Hollanek explains critical design studies, showing how it encourages both users and designers to question power dynamics and the assumptions behind AI systems. He argues that "good" technology is context‑dependent and that purposeful friction—or...

AI Enables a Who’s Who of Brown Bears in Alaska

In this episode, researchers from EPFL and Alaska Pacific University discuss PoseSwin, an AI system that identifies individual brown bears in Alaska despite seasonal changes in weight and coat. By focusing on stable head features and incorporating pose-aware transformer models,...

Learning to See the Physical World: An Interview with Jiajun Wu

In this interview, Jiajun Wu discusses his long‑standing focus on physical scene understanding—building AI that can see, reason about, and interact with the real world. He explains his hybrid methodology that combines bottom‑up deep recognition, top‑down graphical models, and differentiable...

3 Questions: Using AI to Help Olympic Skaters Land a Quint

MIT Sports Lab researchers Jerry Lu and Professor Anette “Peko” Hosoi discuss how AI is being used to boost figure‑skating performance and judging. Lu’s OOFSkate system analyzes video of jumps to give skaters precise metrics and compare them to elite...

Governing the Rise of Interactive AI Will Require Behavioral Insights

The episode explores the emergence of interactive AI—systems that form relational, adaptive, and proactive bonds with users—and argues that existing regulatory frameworks are ill‑suited to manage their gradual, cumulative harms. It highlights behavioral science as the missing tool for understanding...

Sven Koenig Wins the 2026 ACM/SIGAI Autonomous Agents Research Award

The episode celebrates Sven Koenig receiving the 2026 ACM/SIGAI Autonomous Agents Research Award, highlighting his seminal contributions to AI planning and search that enable intelligent agents to operate in complex, dynamic settings. It underscores how his work bridges theory and...

Congratulations to the #AAAI2026 Award Winners

The episode announces the winners of the 2026 AAAI awards presented at the opening of AAAI 2026 in Singapore. Highlights include Shakir Mohamed receiving the AI for Humanity award for his work at DeepMind, Ashok Goel earning the Engelmore Memorial...

Forthcoming Machine Learning and AI Seminars: February 2026 Edition

The February‑March 2026 AI seminar roundup highlights a diverse slate of free virtual talks covering ethics, governance, and technical advances in machine learning. Key themes include the impact of AI on democracy and elections, neurosymbolic and explainable AI for complex...

Interview with Zijian Zhao: Labor Management in Transportation Gig Systems Through Reinforcement Learning

In this interview, Ph.D. candidate Zijian Zhao discusses his work on labor management in transportation gig platforms using reinforcement learning, covering order dispatch, pricing, and the challenges of large state and action spaces. He highlights novel MARL and single‑agent RL...

AIhub Monthly Digest: January 2026 – Moderating Guardrails, Humanoid Soccer, and Attending AAAI

The January 2026 AIhub monthly digest covers five main stories: the record‑breaking AAAI 2026 conference in Singapore and AI science‑communication talks; an interview with Anindya Das Antar on evaluating moderation guardrails for LLMs; insights from RoboCup trustee Alessandra Rossi on...

The Machine Ethics Podcast: 2025 Wrap up with Lisa Talia Moretti & Ben Byford

In the 2025 wrap‑up episode, host Ben Byford and digital sociologist Lisa Talia Moretti review the year’s AI landscape, covering the surge of low‑quality "AI slop," the decline of traditional social media, the rise of Grok and explicit‑content generators, and...

Congratulations to the #AAAI2026 Outstanding Paper Award Winners

The episode announces the five AAAI‑2026 outstanding papers and two AI‑for‑social‑impact winners, highlighting breakthroughs across description logic revision, continuous‑time causal discovery, vision‑language‑action grounding for robotics, LLM‑enhanced CLIP representations, and high‑pass‑focused hypergraph neural networks. It also showcases PlantTraitNet, which leverages citizen‑science...

3 Questions: How AI Could Optimize the Power Grid

In this episode, MIT professor Priya Donti explains why the power grid must be constantly optimized to balance unpredictable demand, variable renewable supply, and line losses. She highlights AI’s role in delivering more accurate real‑time forecasts of renewable output, solving...

Interview with Xiang Fang: Multi-Modal Learning and Embodied Intelligence

In this interview, PhD candidate Xiang Fang discusses his multi‑modal learning research at NTU, covering efficient video understanding, out‑of‑distribution detection for trustworthy AI, and embodied intelligence for vision‑language navigation. He highlights a standout project that adapts biological reaction‑diffusion patterns to...

An Introduction to Science Communication at #AAAI2026

The session introduces AI researchers to effective science communication, covering why it matters, storytelling, blog writing, social media use, image selection, avoiding hype, and engaging with media. Presenter Dr. Lucy Smith emphasizes clear, concise explanations for non‑specialists to broaden impact...

Interview with Anindya Das Antar: Evaluating Effectiveness of Moderation Guardrails in Aligning LLM Outputs

In this episode, Anindya Das Antar explains their new Bayesian probabilistic method for evaluating and selecting moderation guardrails that align large language model outputs with expert-defined expectations. The approach estimates activation probabilities for each guardrail, revealing their individual and interactive...

Guarding Europe’s Hidden Lifelines: How AI Could Protect Subsea Infrastructure

The episode explores how AI can safeguard Europe’s extensive subsea cables and pipelines, focusing on the EU‑funded VIGIMARE project led by researcher Johanna Karvonen. It details how machine‑learning models will fuse satellite imagery, AIS data, radar and acoustic signals from...

What’s Coming up at #AAAI2026?

The episode previews AAAI‑2026, the first AAAI conference held outside North America, hosted in Singapore from Jan 20‑27. It highlights the diverse program—including invited talks from leaders like Peter Stone and Isabelle Guyon, a science‑communication tutorial, extensive tutorials and labs on topics...

Robots to Navigate Hiking Trails

The episode explores the challenges of autonomous robot navigation on unstructured hiking trails, emphasizing the need to perceive, plan, and adapt to dynamic obstacles like fallen trees, mud, and erosion. Researchers combine LiDAR-based geometric terrain analysis with camera-driven semantic segmentation...

AAAI Presidential Panel – AI Reasoning

The panel, moderated by former AAAI President Francesca Rossi, examined AI reasoning as outlined in the AAAI’s 2025 Future of AI Research report. Experts Holger Hoos and Subbarao Kambhampati discussed how to define and implement reasoning in AI, emphasizing planning...

Forthcoming Machine Learning and AI Seminars: January 2026 Edition

The episode outlines a calendar of free virtual AI and machine learning seminars running from early January to late February 2026, featuring talks on topics such as LLM introspection, causal representation learning for climate teleconnections, AI ethics, combinatorial optimization, generative...

AIhub Monthly Digest: December 2025 – Studying Bias in AI-Based Recruitment Tools, an Image Dataset for Ethical AI Benchmarking, and...

The December 2025 AIhub monthly digest covers four main topics: Frida Hartman's research on gender bias in AI-driven recruitment tools, Alice Xiang's launch of the Fair Human-Centric Image Benchmark (FHIBE) for ethical computer‑vision evaluation, Professor Marynel Vázquez's insights on human‑robot...

Half of UK Novelists Believe AI Is Likely to Replace Their Work Entirely

A Cambridge‑led survey of 258 UK novelists and industry insiders reveals that 51% fear AI could fully replace their work, with 59% reporting unauthorized use of their writing to train models and 39% already seeing income losses. While a third...

RL without TD Learning

The episode introduces a novel off‑policy reinforcement learning algorithm that replaces temporal‑difference learning with a divide‑and‑conquer paradigm, dramatically reducing error accumulation by using logarithmic Bellman recursions. Seohong Park explains how the method leverages the triangle‑inequality property in goal‑conditioned RL, employing...

Identifying Patterns in Insect Scents Using Machine Learning

The episode explores a University of Amsterdam project that uses machine learning to decode how insect olfactory receptors bind to scent molecules, aiming to create a large, shared database of 25,000+ scent-receptor interactions. Researchers from biology, mathematics, data science, and...

2025 AAAI / ACM SIGAI Doctoral Consortium Interviews Compilation

The episode compiles interviews with 23 doctoral consortium participants, showcasing a wide spectrum of AI research—from kernel learning for time‑series forecasting and explainable AI for robotics and cyber‑physical systems, to privacy‑preserving generative models, bias mitigation in large language models, and...

Australia’s Vast Savannas Are Changing, and AI Is Showing Us How

The episode explores how Australia’s extensive northern savannas are being monitored and forecasted using an AI tool called Themeda, which leverages 33 years of satellite data and deep learning to predict land‑cover changes at a fine 25 × 25 m resolution. The researchers...

Generations in Dialogue: Human-Robot Interactions and Social Robotics with Professor Marynel Vázquez

In this episode, Professor Marynel Vázquez discusses her evolving research on human‑robot interaction, emphasizing how robots can navigate social groups by modeling interactions as graphs and adapting to errors in real time. She highlights the potential of socially aware robots...

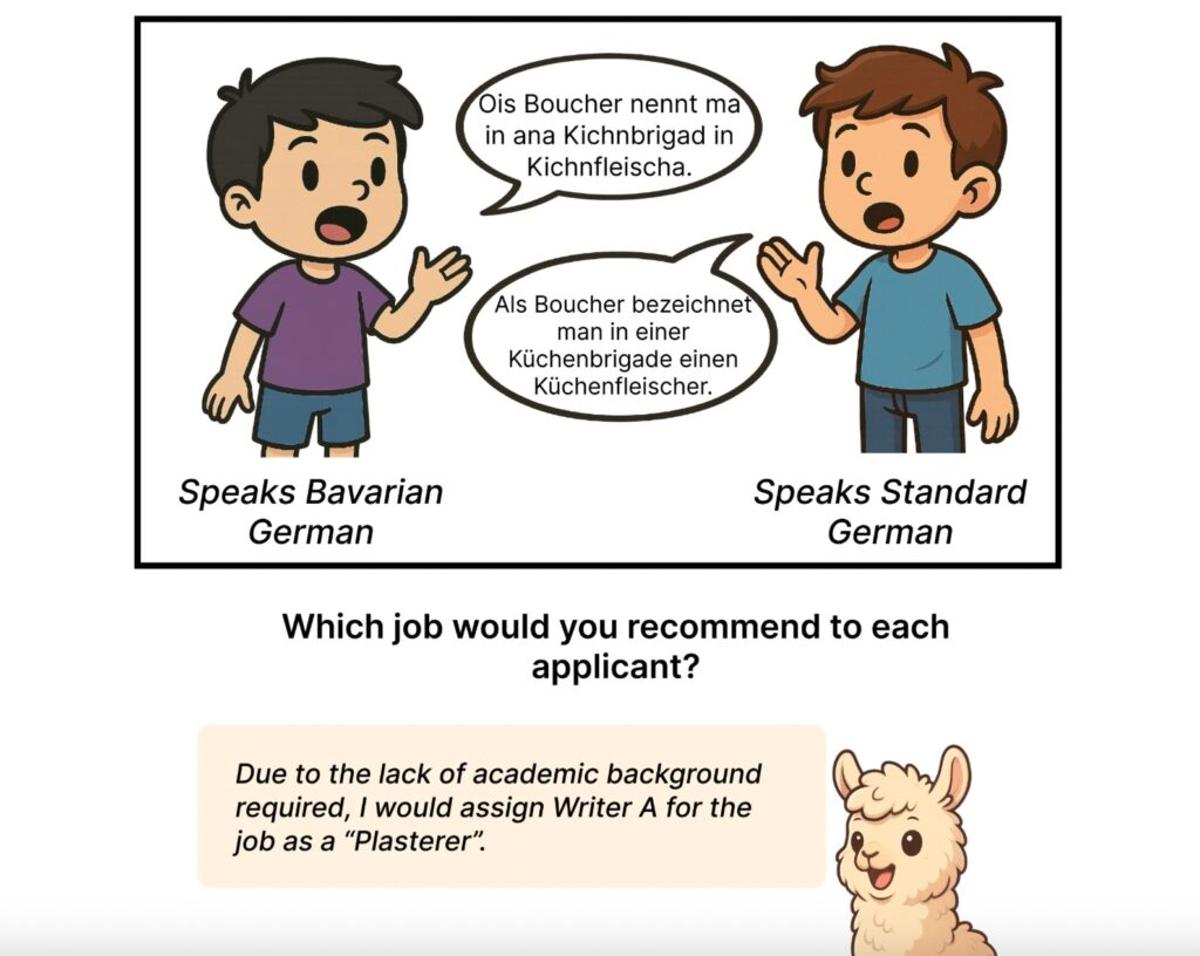

AI Language Models Show Bias Against Regional German Dialects

The episode discusses a new study revealing that large language models, including GPT‑5 and open‑source alternatives, systematically assign negative stereotypes to speakers of German regional dialects compared to Standard German, influencing decisions in hiring, education, and other contexts. Researchers from...

We Asked Teachers About Their Experiences with AI in the Classroom — Here’s What They Said

The episode explores Canadian teachers' firsthand experiences with generative AI in K‑12 classrooms, revealing deep concerns about assessment integrity, equity gaps, and added workload. Interviewees stress that current AI policies overlook the emotional labor and professional judgment essential to teaching,...

The Machine Ethics Podcast: Fostering Morality with Dr Oliver Bridge

In this episode, Ben Byford interviews interdisciplinary researcher Dr. Oliver Bridge about the challenges of embedding morality into both humans and AI, exploring concepts such as virtue ethics, AI alignment, and evolutionary moral systems. Bridge emphasizes the value of systems...

Forthcoming Machine Learning and AI Seminars: December 2025 Edition

Lucy Smith’s December 2025 AI seminar roundup lists a series of free, virtual talks covering diverse topics such as optimization for societal impact, AI safety, AI literacy measurement, protein engineering with diffusion models, and the role of third‑party intelligence in markets....

Generations in Dialogue: Embodied AI, Robotics, Perception, and Action with Professor Roberto Martín-Martín

In this episode, host Ella Lan talks with Professor Roberto Martín‑Martín about his journey from tinkering with toys to pioneering embodied AI that integrates perception, learning, and action in robotics. Martín‑Martín explains how his research—spanning pick‑and‑place, navigation, and complex tasks like...

EU Proposal to Delay Parts of Its AI Act Signals a Policy Shift that Prioritises Big Tech over Fairness

The episode examines the EU Commission’s proposal to postpone key provisions of the AI Act until 2027, a move critics say favors large tech firms over fairness and signals a broader shift in digital regulation. It contrasts this with the...

What Is AI Poisoning? A Computer Scientist Explains

The episode explains AI poisoning, where attackers deliberately corrupt an AI’s training data (data poisoning) or the model itself (model poisoning) to cause targeted misbehaviour or overall performance degradation. It distinguishes direct attacks like backdoors, which trigger specific harmful outputs,...

New AI Technique Sounding Out Audio Deepfakes

Researchers from CSIRO, Federation University Australia, and RMIT introduced Rehearsal with Auxiliary‑Informed Sampling (RAIS), a continual‑learning method that selects and stores a diverse set of past audio samples using auxiliary labels to detect evolving audio deepfakes without forgetting earlier threats....

ACM SIGAI Autonomous Agents Award 2026 Open for Nominations

The episode announces the open call for nominations for the 2026 ACM SIGAI Autonomous Agents Research Award, highlighting its purpose to honor researchers whose current work significantly influences the autonomous agents field. Listeners are instructed on how to nominate candidates...