Recent Posts

Social•Feb 21, 2026

AI Must Train on Real‑world Data, Not Idealized Datasets

To succeed, AI systems must be tested and trained on real-world conditions, not just idealized data. https://t.co/HFZ8yPwycm

By Satya Mallick

Social•Feb 20, 2026

AI Lets Anyone Craft Complex Images in an Hour

I created this image in about 1 hour using AI prompts after about a dozen tries. The worst part is that I had to carefully check the image after every attempt because the mistakes it was making were subtle....

By Satya Mallick

Social•Feb 20, 2026

Speculative Decoding Doubles LLM Speed Without Quality Loss

This Trick Makes LLMs 2X Faster. Autoregressive decoding has a hard ceiling: one token at a time. Speculative Decoding uses a "draft" model to jump ahead without losing quality. #Innovation #AI #FutureTech #Python https://t.co/OgsON1kbzw

By Satya Mallick

Social•Feb 19, 2026

Repeating Prompts Boosts LLM Performance Without Extra Compute

Repeat, Repeat: Why Simply Repeating a Prompt Can Make LLMs Smarter. In this episode of Artificial Intelligence: Papers and Concepts, we explore the surprisingly simple idea behind “Prompt Repetition Improves Non-Reasoning LLMs,” a new study from Google Research that challenges how...

By Satya Mallick

Social•Feb 19, 2026

Unverified AI Decisions Risk Catastrophic Consequences

Relying on AI to make important decisions without verification can lead to catastrophic outcomes. https://t.co/PZYzLfjyYE

By Satya Mallick

Social•Feb 18, 2026

AI Agents Let You Build Vision Apps Without Coding

🚀 Building a Computer Vision app - without writing a single line of code. In this walkthrough, we used an AI coding agent to create a real-time face detection application that can blur or pixelate faces on a live video feed....

By Satya Mallick

Social•Feb 17, 2026

Share One Base Model, Deploy Many LoRA Adapters Efficiently

Why Fine‑Tuned Models Break the Bank 💸 Every LoRA adapter shouldn’t need its own full base model copy. That’s how dozens become hundreds… and inference becomes impossible. 👉 Multi‑LoRA serving fixes this: one base model, many adapters, applied per request with custom...

By Satya Mallick

Social•Feb 16, 2026

Seedance 1.0 Elevates AI Video to Production‑Ready Storytelling

Seedance 1.0: The Next Leap in AI Video Generation. In this episode of Artificial Intelligence: Papers and Concepts, we explore Seedance 1.0, a new foundation model from ByteDance that is pushing the boundaries of AI-generated video. Positioned at the top of...

By Satya Mallick

Social•Feb 16, 2026

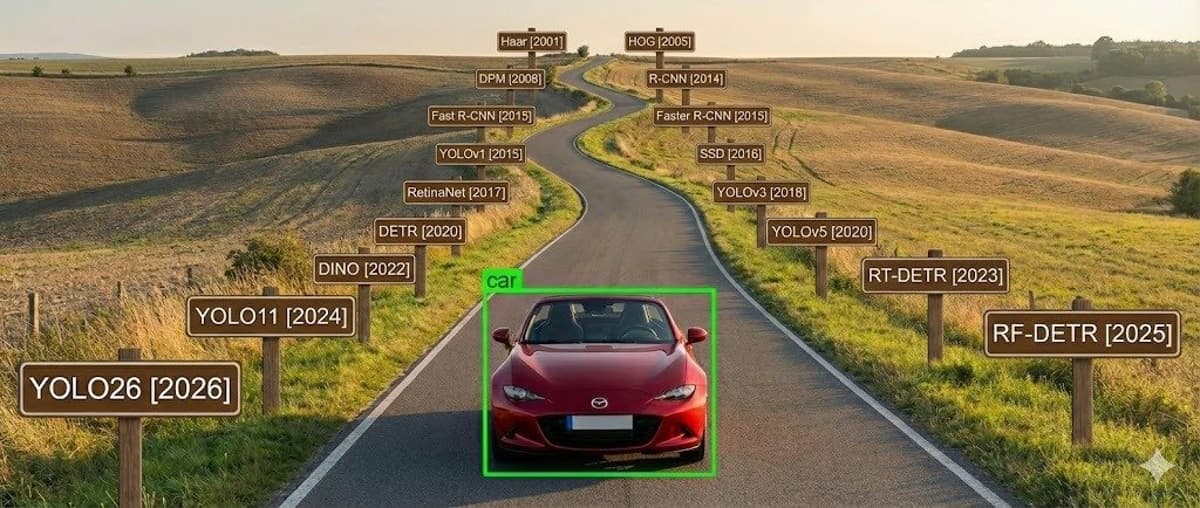

Transformers Overtake YOLO with Real‑Time Detection

Is YOLO officially dead? 💀 RF-DETR (Roboflow Detection Transformers) just redefined real-time detection. ✅ Object Detection ✅ Instance Segmentation ❌ No Keypoints (yet) This is why Transformers are taking over. https://t.co/6LXlbsGWJt

By Satya Mallick

Social•Feb 16, 2026

Chunked Prefill Prevents Token Starvation From Long Prompts

How Long Prompts Break AI Apps 🚫 A single 128K prompt can starve other users of tokens. Use Chunked Prefill to keep time-to-first-token low. #ProgrammingTips #GenerativeAI #DataScience #Tech https://t.co/BJGFm8dxAk

By Satya Mallick

Social•Feb 15, 2026

Fluency, Not AI Smarts, Undermines Human Judgment

Human judgment is under threat not because AI is smart, but because we confuse fluency with understanding. https://t.co/sLuxpkk0uz

By Satya Mallick

Social•Feb 13, 2026

LoRA Enables Cheap, Efficient Fine‑Tuning of Giant Models

LoRA: Teaching Massive AI Models New Skills Without Retraining Everything. In this episode of Artificial Intelligence: Papers and Concepts, we break down LoRA (Low-Rank Adaptation), a breakthrough technique that makes fine-tuning large language models faster, cheaper, and far more efficient....

By Satya Mallick