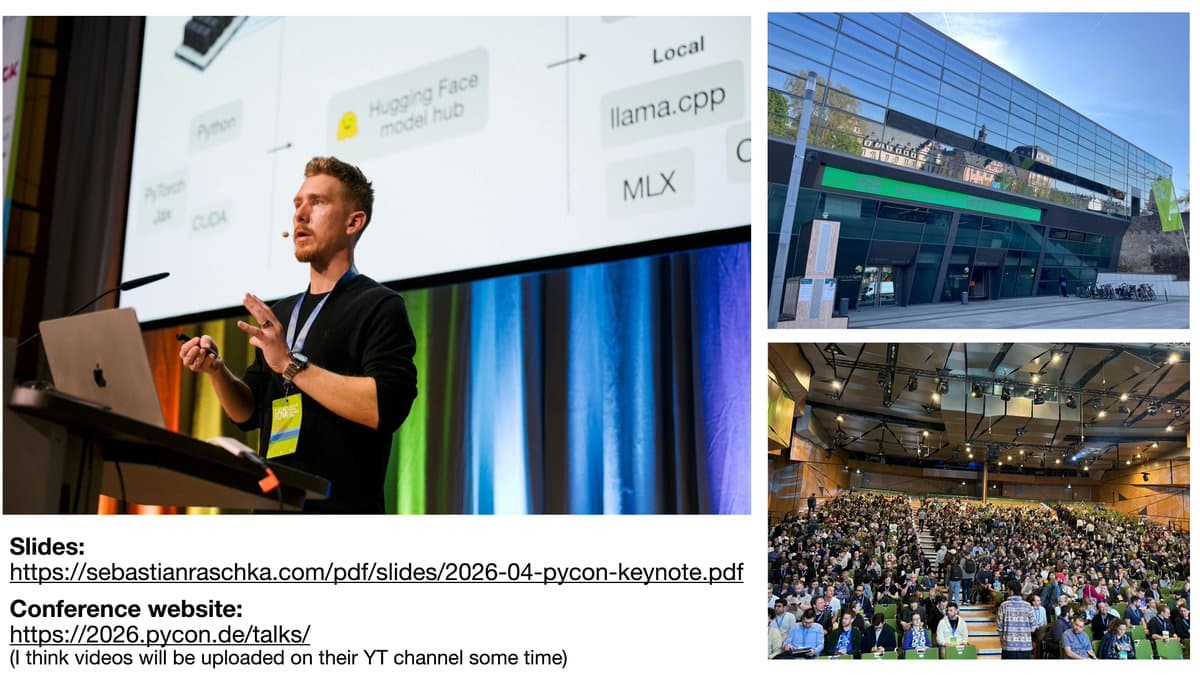

Loved PyCon DE: AI Community, Now on Family Break

Had a great time at PyCon & PyData DE. Highly recommend it. Great open-source, community-focused conference with lots of builders in the Python AI, LLM and agent space. Taking a short family break, my first "vacation" in years (hopefully, I won't miss the DeepSeek V4 drop 🤞😆) https://t.co/kbKtRZK9aa

New RSS Feed Simplifies Tracking LLM Architecture Updates

Added an RSS feed to the LLM Architecture Gallery so it is a bit easier to keep up with new additions over time: https://t.co/NO7z6XSRHS https://t.co/7PKrLT1A6S

Inside Coding Agents: Repo Context, Tools, Memory, Delegation

Components of a coding agent: a little write-up on the building blocks behind coding agents, from repo context and tool use to memory and delegation. Link: https://t.co/iF4DsMcnhj https://t.co/zImf32iegt
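To make those building blocks more concrete, here is a minimal Python sketch. It assumes a toy in-memory repo (a dict mapping paths to contents) and two hypothetical tools, `ls` and `cat`. The LLM that decides which tool to call is left out entirely. `CodingAgent`, `call_tool`, and `delegate` are illustrative names and are not taken from the write-up.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Repo = Dict[str, str]                # repo context: file path -> file contents
Tool = Callable[[Repo, str], str]    # a tool takes the repo plus one argument

# Two hypothetical tools; a real agent would run shell commands, tests, edits, etc.
def list_files(repo: Repo, _arg: str) -> str:
    return "\n".join(sorted(repo))

def read_file(repo: Repo, path: str) -> str:
    return repo.get(path, f"error: {path} not found")

@dataclass
class CodingAgent:
    repo: Repo
    tools: Dict[str, Tool]
    memory: List[str] = field(default_factory=list)  # log of tool calls and results

    def call_tool(self, name: str, arg: str) -> str:
        # Tool use: dispatch by name and record the observation in memory
        tool = self.tools.get(name)
        result = tool(self.repo, arg) if tool else f"error: unknown tool {name}"
        self.memory.append(f"{name}({arg}) -> {result}")
        return result

    def delegate(self, steps: List[Tuple[str, str]]) -> "CodingAgent":
        # Delegation: a sub-agent shares repo and tools but starts with empty memory,
        # and only a short summary is written back to the parent's memory
        sub = CodingAgent(self.repo, self.tools)
        for name, arg in steps:
            sub.call_tool(name, arg)
        self.memory.append(f"subagent ran {len(sub.memory)} tool calls")
        return sub
```

Giving the sub-agent a fresh memory keeps the parent's context small. Only a one-line summary of the delegated work comes back to the parent.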

Build A Reasoning Model Chapters Now in Early Access

It’s done. All chapters of Build A Reasoning Model (From Scratch) are now available in early access. The book is currently in production and should be out in the coming months, including full-color print and syntax highlighting. There’s also a preorder up on...

Open-Source Hard Distillation for Any LLM Released

The Ch08 notebook on distilling LLMs is now on GitHub: https://t.co/bPRyIU5BhH Hard distillation that works with any LLM (minding the terms of service, of course). https://t.co/KscPulkj7q
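Some context on the "hard" part. As the term is commonly used, hard distillation trains the student with plain cross-entropy on the tokens the teacher generated. It therefore only needs the teacher's text output, which is why it works with any LLM, including API-only ones. Soft distillation instead matches the teacher's full next-token distribution, so it needs the teacher's logits. The per-token sketch below contrasts the two losses. It is a toy illustration under that common definition, not the notebook's code.

```python
import math
from typing import List

def softmax(logits: List[float]) -> List[float]:
    m = max(logits)                        # subtract max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def hard_distillation_loss(student_logits: List[float], teacher_token: int) -> float:
    # Cross-entropy on the token the teacher generated: needs only the teacher's text
    return -math.log(softmax(student_logits)[teacher_token])

def soft_distillation_loss(student_logits: List[float], teacher_logits: List[float]) -> float:
    # KL(teacher || student): needs the teacher's full next-token distribution (logits)
    p = softmax(teacher_logits)
    q = softmax(student_logits)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
```

In practice, the hard loss is averaged over every token of the teacher's generated responses, much like ordinary supervised fine-tuning.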

India's Sarvam 105B Matches Top LLMs Using MLA

While waiting for DeepSeek V4 we got two very strong open-weight LLMs from India yesterday. There are two size flavors, Sarvam 30B and Sarvam 105B (both reasoning models). Interestingly, the smaller 30B model uses “classic” Grouped Query Attention (GQA), whereas the larger 105B variant switched...
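To get a rough sense of why the attention choice matters, the sketch below counts KV-cache elements per token and layer for multi-head attention (MHA), GQA, and multi-head latent attention (MLA). All configuration values are hypothetical illustrations, not Sarvam's actual settings: 32 heads, head dim 128, 8 KV groups, a 512-dim latent plus a 64-dim RoPE key, 32 layers, a 32k context, and bf16.

```python
def kv_elems_mha(n_heads: int, head_dim: int) -> int:
    # MHA caches one key and one value vector per attention head
    return 2 * n_heads * head_dim

def kv_elems_gqa(n_kv_groups: int, head_dim: int) -> int:
    # GQA: query heads in a group share a single key/value pair
    return 2 * n_kv_groups * head_dim

def kv_elems_mla(latent_dim: int, rope_dim: int) -> int:
    # MLA caches one compressed latent vector (plus a small decoupled RoPE key)
    return latent_dim + rope_dim

def kv_cache_gib(elems_per_token_layer: int, n_layers: int, seq_len: int,
                 batch: int = 1, bytes_per_elem: int = 2) -> float:
    # Total cache size in GiB; bytes_per_elem=2 assumes bf16/fp16
    return elems_per_token_layer * n_layers * seq_len * batch * bytes_per_elem / 2**30

# Hypothetical config: 32 layers, 32k tokens of context
for name, elems in [("MHA", kv_elems_mha(32, 128)),
                    ("GQA", kv_elems_gqa(8, 128)),
                    ("MLA", kv_elems_mla(512, 64))]:
    print(f"{name}: {kv_cache_gib(elems, n_layers=32, seq_len=32_768):.3f} GiB")
```

With these assumed numbers, the cache per sequence comes out to 16 GiB for MHA, 4 GiB for GQA, and 1.125 GiB for MLA. MLA pays for the smaller cache with additional projection steps to recover keys and values from the latent.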

Tiny Qwen3.5 Reimplementation: Top Small LLM for On-Device Tinkering

A small Qwen3.5 from-scratch reimplementation for educational purposes: https://t.co/OnupgeE55l (probably the best "small" LLM today for on-device tinkering) https://t.co/LwyF8x6sle

New Tools Simplify Distillation Data From Open-Weight Models

Claude distillation has been a big topic this week while I am (coincidentally) writing Chapter 8 on model distillation. In that context, I shared some utilities to generate distillation data from all sorts of open-weight models via OpenRouter and Ollama: https://t.co/IsfNDpcGAw https://t.co/LKXuGrjO84
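Here is a minimal sketch of the data-generation side. It assumes a local Ollama server on its default port and uses its `/api/generate` endpoint with `stream` set to false, which returns the completion in a `response` field. The `instruction`/`output` record format and the helper names are hypothetical and are not the format used in the shared utilities. OpenRouter is not covered here.

```python
import json
import urllib.request
from typing import Callable, Dict, Iterable, List

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

def ollama_generate(prompt: str, model: str) -> str:
    # Non-streaming request; Ollama returns the completion in the "response" field
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL, data=payload, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]

def build_distillation_records(
    prompts: Iterable[str], generate: Callable[[str], str]
) -> List[Dict[str, str]]:
    # Hypothetical record format: one prompt/teacher-answer pair per example
    return [{"instruction": p, "output": generate(p)} for p in prompts]

def to_jsonl(records: List[Dict[str, str]]) -> str:
    # One JSON object per line, a common format for fine-tuning datasets
    return "\n".join(json.dumps(r) for r in records)
```

To use a pulled model, call `build_distillation_records(prompts, lambda p: ollama_generate(p, model=...))`. Passing `generate` as a callable keeps the record-building logic independent of the backend, so a different client (for example, one for OpenRouter) could be swapped in.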

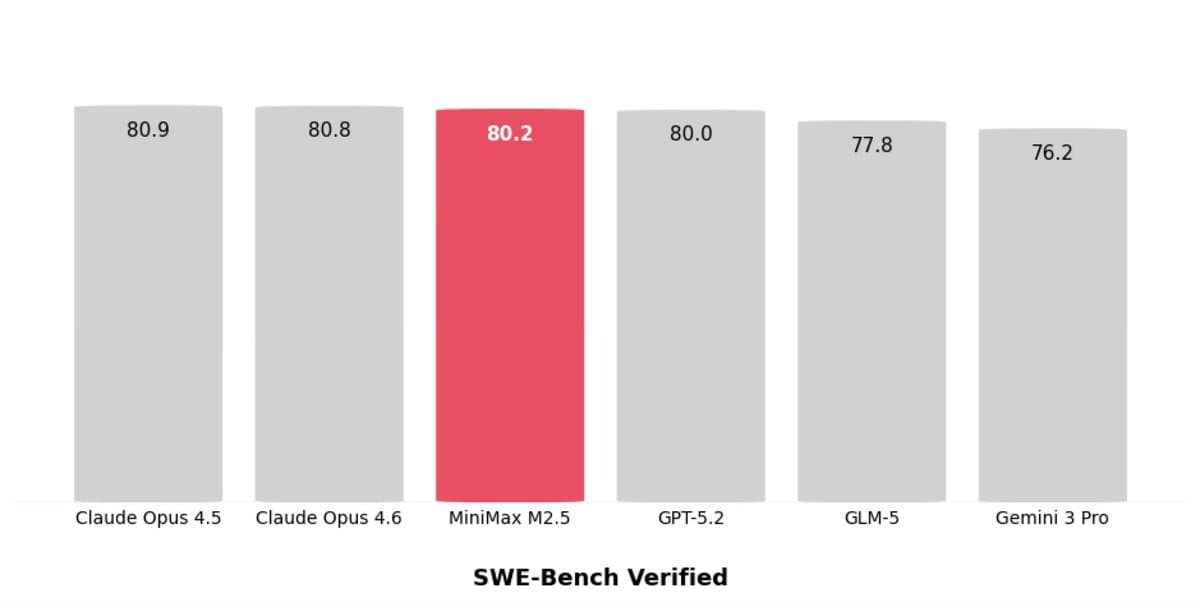

SWE‑Bench Verified Flawed Tests Reveal Data Leakage Issues

Am currently putting together an article, and yeah, the SWE-Bench Verified numbers are definitely a bit sus across all models -- the benchmark suggests they are more similar than they really are. So, I went down a rabbit hole looking into...

February's AI Explosion: Seven New Models Debut

February is one of those months...

- Moonshot AI's Kimi K2.5 (Feb 2)
- Z.ai GLM 5 (Feb 12)
- MiniMax M2.5 (Feb 12)
- ByteDance Seed-2.0 (Feb 13)
- Nanbeige 4.1 3B (Feb 13)
- Qwen 3.5 (Feb 15)
- Cohere's Tiny Aya (Feb 17)

(+Hopefully...

AI Should Free Experts, Not Add Extra Tasks

Yeah, in an ideal world, we would use AI to enable experts to do higher-quality work. But in the real world, they are also expected to take on a wide range of additional responsibilities that detract (& distract) from their core work.

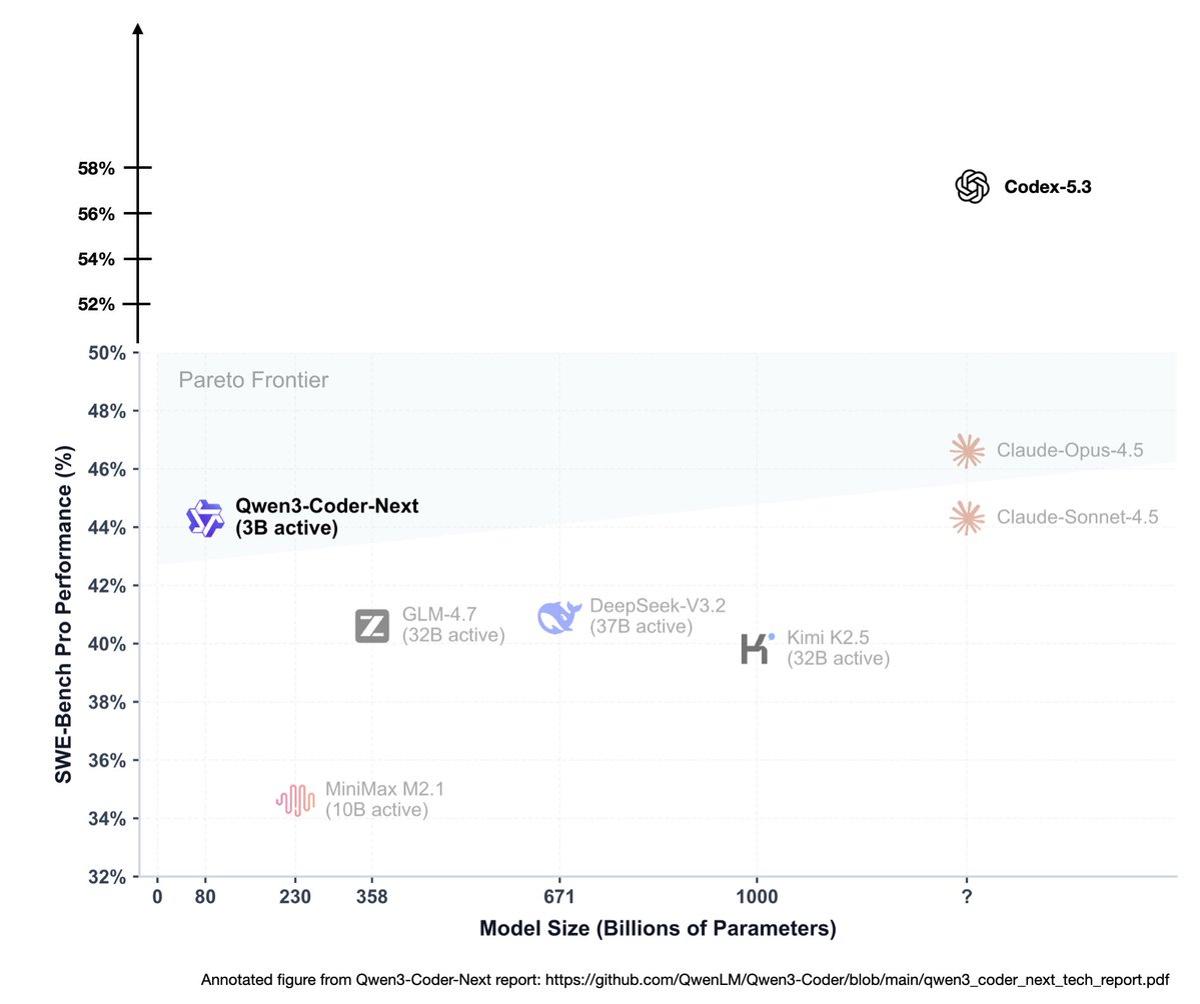

Half the Tokens, Same Performance: Efficiency Wins

> "Less than half the tokens of 5.2-Codex for same tasks"

That one line already says a lot. In 2026, nobody assumes anymore that compute or budget is infinite. But if you can get better modeling performance while using...

Moltbook Shows LLMs Remain Pure Next‑Token Predictors

Yes, Moltbook (by clawdbot) is still next-token prediction combined with some looping, orchestration, and recursion. And that is exactly what makes this so fascinating. (It is also why understanding how LLMs actually work really does pay off. Lets us see through the...
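Here is a deliberately tiny sketch of the "next-token prediction plus looping" point. A hypothetical lookup table stands in for the LLM. A real model conditions on the whole context, not just the last token. A plain `for` loop does greedy decoding until an end token appears. Orchestration layers essentially add more loops on top of this one, feeding outputs back in as new inputs.

```python
from typing import Dict, List

# Hypothetical toy "model": next-token probabilities given only the last token
TOY_MODEL: Dict[str, Dict[str, float]] = {
    "<start>": {"agents": 0.6, "models": 0.4},
    "agents": {"call": 0.7, "<end>": 0.3},
    "call": {"tools": 0.9, "<end>": 0.1},
    "tools": {"<end>": 1.0},
    "models": {"<end>": 1.0},
}

def next_token(context: List[str]) -> str:
    # Greedy decoding: pick the most probable next token
    probs = TOY_MODEL[context[-1]]
    return max(probs, key=probs.get)

def generate(max_new_tokens: int = 10) -> List[str]:
    tokens = ["<start>"]
    for _ in range(max_new_tokens):    # the loop around next-token prediction
        tok = next_token(tokens)
        if tok == "<end>":
            break
        tokens.append(tok)
    return tokens[1:]                  # drop the start marker
```

Here `generate()` walks `<start> -> agents -> call -> tools` and stops at `<end>`. A smaller `max_new_tokens` cuts the output short.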

LLM Future: Transformers Evolve, Inference Scaling Takes Spotlight

Had a fun chat with @mattturck the other day where we talked about a bunch of interesting LLM stuff... Basically everything from the future of the transformer architecture to inference-time scaling as a recent MVP of LLM performance:
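One concrete example of inference-time scaling is self-consistency (majority voting). The model samples several answers and keeps the most frequent one, trading extra inference compute for accuracy. In the sketch below, `sample_answer` is a hypothetical stand-in for a sampled LLM call plus answer extraction. This is only one of several inference-scaling techniques and not necessarily one discussed in the chat.

```python
from collections import Counter
from typing import Callable, List

def majority_vote(answers: List[str]) -> str:
    # most_common orders equal counts by first occurrence, so ties go to the earliest answer
    return Counter(answers).most_common(1)[0][0]

def self_consistency(sample_answer: Callable[[], str], n_samples: int) -> str:
    # Spend more compute at inference time: draw n samples, return the most frequent answer
    return majority_vote([sample_answer() for _ in range(n_samples)])
```

Raising `n_samples` is the scaling knob. More samples cost more tokens but make the vote less sensitive to any single wrong sample.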

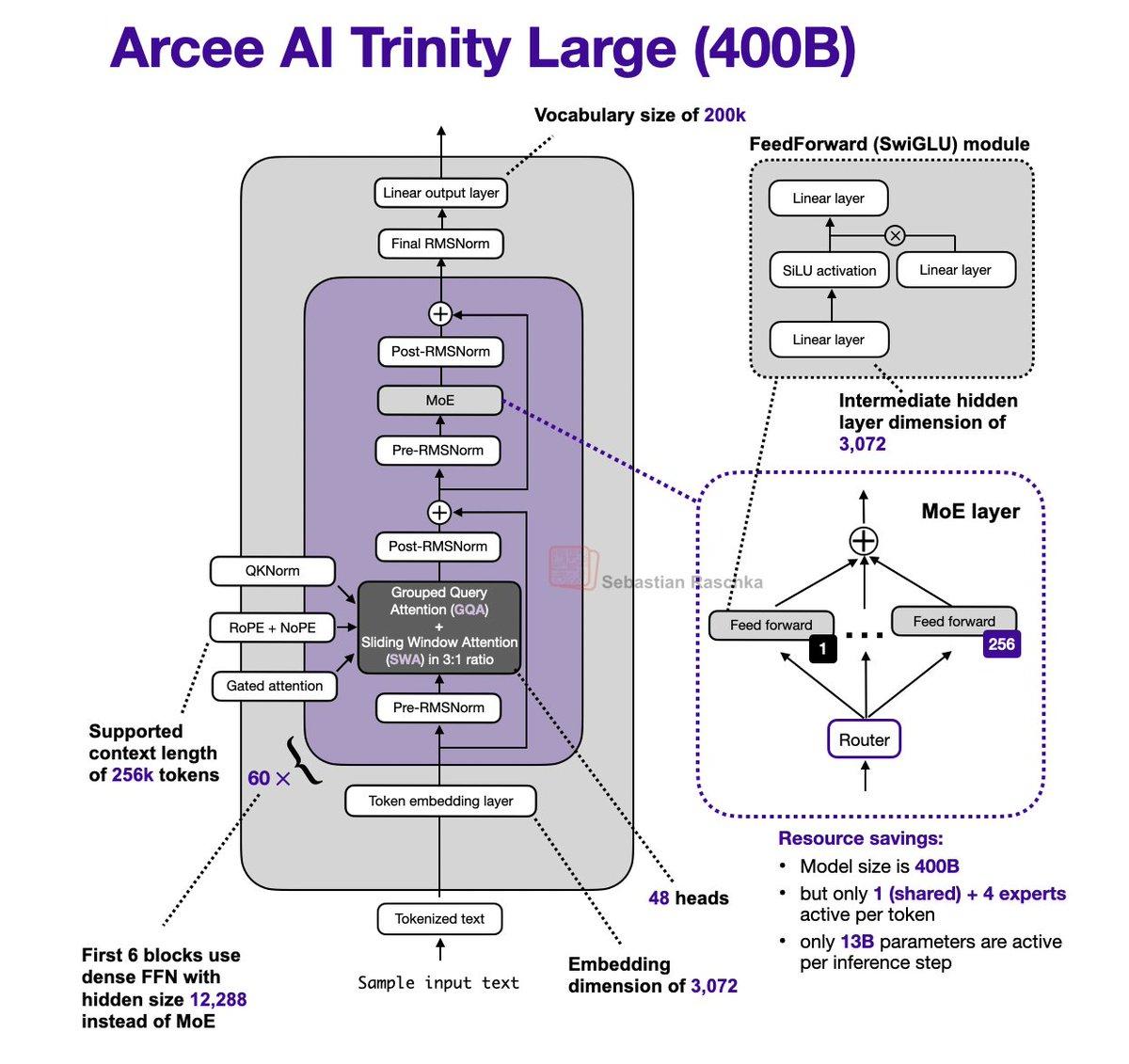

Arcee Trinity Large Merges MoE, Gated

It's been a while since I did an LLM architecture post. Just stumbled upon the Arcee AI Trinity Large release and technical report from yesterday and couldn't resist:

- 400B param MoE (13B active params)
- Base model performance similar to GLM...
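For readers new to MoE: "400B params, 13B active" means a router sends each token to only a few experts. As a result, only about 3% of the parameters (13/400 = 3.25%) are active per token. The sketch below shows top-k routing on a scalar input to keep it short. The router logits are passed in directly, whereas a real router computes them from the token's hidden state with a learned layer. The expert functions and the `top_k` value are hypothetical and do not reflect Trinity Large's configuration.

```python
import math
from typing import Callable, List

def softmax(xs: List[float]) -> List[float]:
    m = max(xs)                            # subtract max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x: float, router_logits: List[float],
                experts: List[Callable[[float], float]], top_k: int = 2) -> float:
    # Keep only the top_k experts by router score; the others are never evaluated
    top = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Renormalize the router scores over the selected experts only
    weights = softmax([router_logits[i] for i in top])
    return sum(w * experts[i](x) for w, i in zip(weights, top))
```

Models differ on whether the softmax is applied before or after top-k selection. This sketch renormalizes after selection.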