Why a 17-Year-Old Built an AI Model to Expose Deepfake Maps

December 16, 2025

Why It Matters

Geospatial deepfakes could distort disaster response, national security, and market decisions, eroding trust in critical data sources. Early detection and public awareness are essential to safeguard democratic processes and infrastructure.

Key Takeaways

- High‑schooler builds AI model to detect satellite deepfakes.

- Geospatial forgeries can mislead disaster response and security.

- GANs and diffusion models leave distinct detection fingerprints.

- Detection must evolve faster than emerging fake generation techniques.

- Education and ethics clubs raise awareness of geospatial manipulation.

Pulse Analysis

Satellite imagery underpins everything from humanitarian aid to national‑security planning, yet its authenticity is rarely questioned. Recent experiments have shown that generative AI can splice cityscapes or fabricate disaster scenes, creating what researchers call ‘deepfake geography.’ Because maps are perceived as objective truth, even subtle alterations can steer policy, trigger market moves, or conceal military assets. The academic community has produced only a handful of studies on this vector, leaving a critical blind spot as governments and corporations increasingly depend on real‑time geospatial feeds.

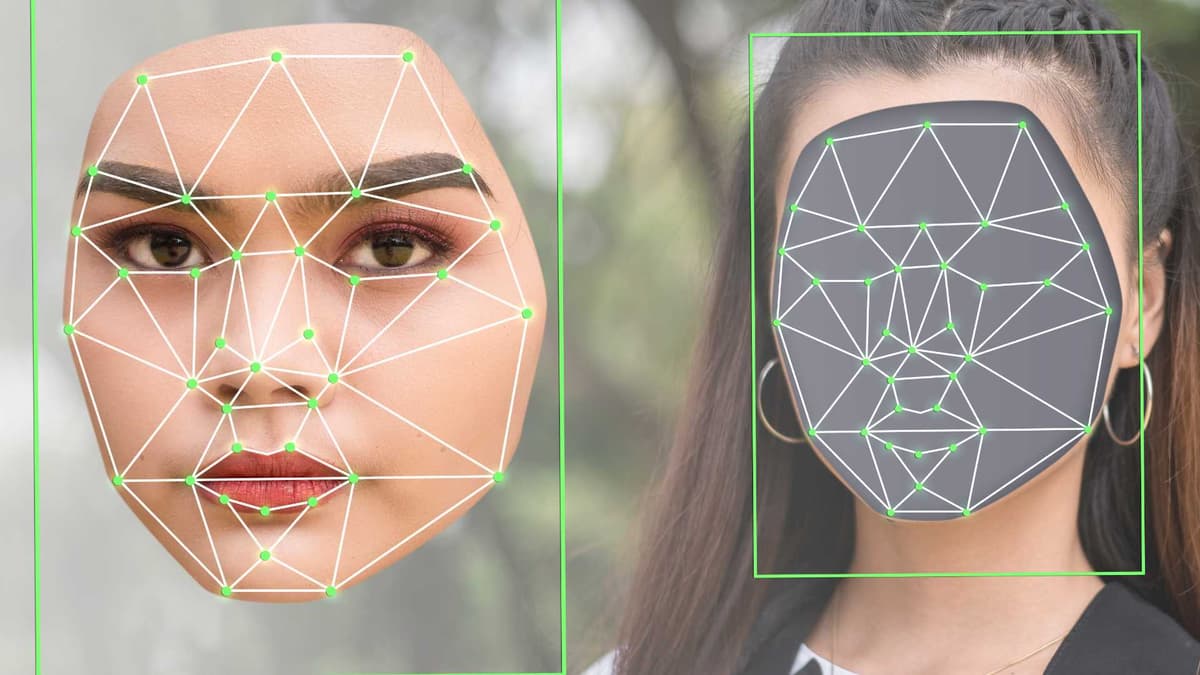

Vaishnav Anand, a California high‑school junior, turned a personal deepfake scare into a prototype detection system, which he presented at MIT’s IEEE Undergraduate Research Technology Conference. His approach isolates the statistical residues left by generative adversarial networks (GANs) and diffusion models, focusing on structural inconsistencies that survive compression and scaling. Because each family of generative models imprints its own measurable fingerprint, the method enables automated screening of massive image streams. However, new synthesis techniques emerge quickly, so detectors must be retrained continuously, which makes detection an ongoing arms race rather than a one‑off fix.
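The article does not describe how Anand's system is implemented, so the following is only an illustrative sketch of one generic idea behind "fingerprint" detection: synthetic images sometimes carry unusual high‑frequency energy, which shows up in the Fourier spectrum. The function name `high_frequency_energy_fraction`, the `cutoff` parameter, and the checkerboard test pattern are all assumptions made for this example, not details from his work, and a real detector would rely on a trained model rather than a single hand‑picked statistic.

```python
import numpy as np


def high_frequency_energy_fraction(image: np.ndarray, cutoff: float = 0.5) -> float:
    """Fraction of spectral energy above a normalized radial frequency.

    Hypothetical helper for illustration only. `image` is a 2-D grayscale
    array; `cutoff` is a radius where 1.0 corresponds to the Nyquist
    frequency along each axis.
    """
    img = image.astype(np.float64)
    img -= img.mean()  # remove the constant (DC) component

    # Power spectrum with the zero frequency shifted to the center.
    power = np.abs(np.fft.fftshift(np.fft.fft2(img))) ** 2

    # Normalized distance of every frequency bin from the center.
    h, w = img.shape
    y, x = np.indices((h, w))
    radius = np.hypot((y - h // 2) / (h / 2), (x - w // 2) / (w / 2))

    total = power.sum()
    if total == 0:
        return 0.0  # a perfectly flat image has no spectral energy
    return float(power[radius > cutoff].sum() / total)


# Synthetic demonstration: a smooth pattern versus the same pattern with a
# faint checkerboard added, a crude stand-in for upsampling artifacts.
size = 64
rows, cols = np.indices((size, size))
smooth = np.sin(2 * np.pi * 2 * cols / size)
checkerboard = 0.2 * (-1.0) ** (rows + cols)

print(high_frequency_energy_fraction(smooth))
print(high_frequency_energy_fraction(smooth + checkerboard))
```

In this toy setup the second image scores noticeably higher than the first, which is the kind of separable signal a classifier could learn from. Real satellite imagery contains plenty of legitimate high‑frequency detail, so any threshold would need to be learned from labeled authentic and synthetic examples.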

The broader lesson extends beyond a single algorithm. Industry stakeholders must embed verification pipelines into geospatial data workflows, adopt shared standards for provenance, and invest in open‑source tooling that can keep pace with AI advances. At the same time, education initiatives such as Anand’s Tech and Ethics club and his cybersecurity guide raise public literacy about visual manipulation, a prerequisite for democratic resilience. As young innovators like Anand demonstrate, curiosity‑driven research can surface hidden threats before they become systemic, which reinforces the need for cross‑disciplinary collaboration between AI scientists, policymakers, and civil‑society watchdogs.
