Optimizing the Wrong Part of the Testing Process

Key Takeaways

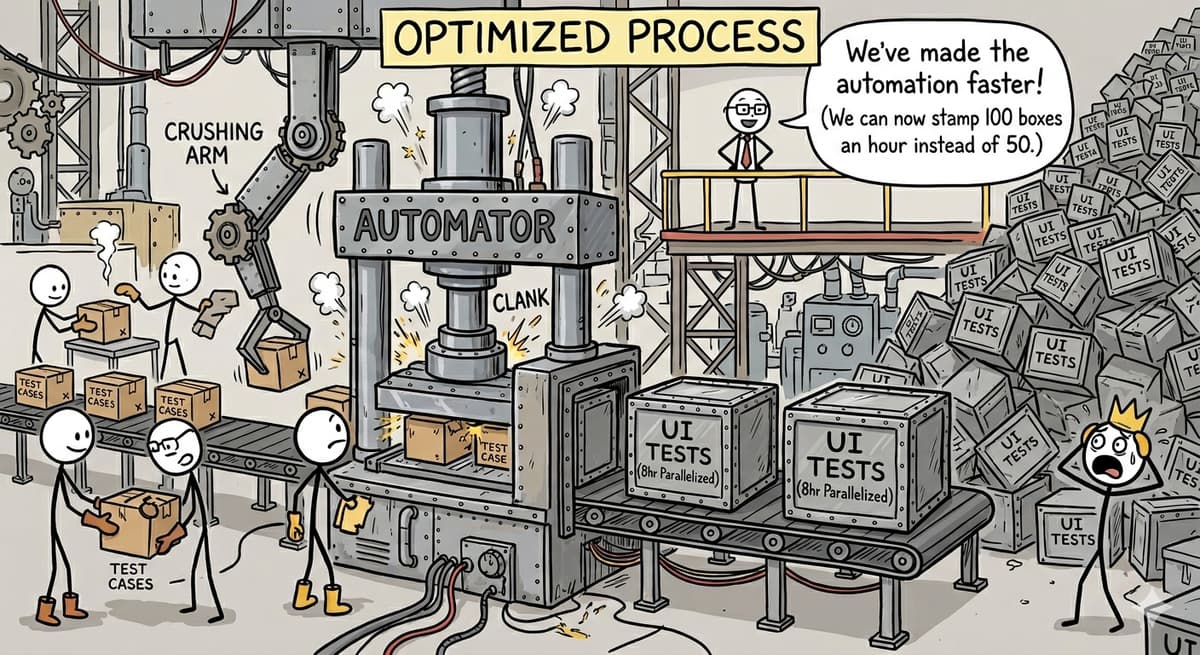

- 2,500 Cypress UI tests need 8 hours to run, even when parallelized

- Automation backlog includes 3,000 planned UI‑only tests

- Test case creation is automatically routed to the automation team

- Current focus is test artifacts, not product risk mitigation

- Introduce a justification step to prioritize API over UI tests

Pulse Analysis

The surge in UI‑centric test automation reflects a broader industry trend: teams equate test volume with quality. While tools like Cypress make it easy to script user flows, scaling to thousands of UI tests quickly overwhelms CI pipelines, leading to long feedback cycles that delay releases. In contrast, API‑level tests typically execute in seconds and exercise business logic more directly, yet they remain underutilized when organizations chase scenario counts rather than outcomes.

When a testing process is optimized for the number of test cases, the metric becomes a vanity indicator. Engineers spend weeks converting manual scenarios into brittle UI scripts, only to discover that the suite’s 8‑hour runtime fails to surface high‑impact defects promptly. This misalignment hampers risk‑based decision making, as teams lack timely signals about regressions that truly affect users. Moreover, the mandatory hand‑off from testers to SDETs creates a bottleneck, inflating the backlog with low‑value tests that add little to product stability.
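To make the runtime concern concrete, here is a rough back-of-the-envelope projection using the figures from the takeaways (2,500 tests, 8 hours, 3,000 more planned). It assumes runtime grows linearly with test count and that parallel capacity stays fixed; neither assumption comes from the source, and the function name is purely illustrative.

```python
# Figures taken from the takeaways above.
CURRENT_TESTS = 2_500
CURRENT_RUNTIME_HOURS = 8.0
BACKLOG_TESTS = 3_000


def projected_runtime_hours(current_tests: int, current_hours: float, added_tests: int) -> float:
    """Project suite runtime after adding tests.

    ASSUMPTION: runtime scales linearly with test count and the
    number of parallel workers does not change.
    """
    hours_per_test = current_hours / current_tests
    return (current_tests + added_tests) * hours_per_test


if __name__ == "__main__":
    projected = projected_runtime_hours(CURRENT_TESTS, CURRENT_RUNTIME_HOURS, BACKLOG_TESTS)
    print(f"Projected runtime with backlog automated: {projected:.1f} hours")
```

Under those assumptions, automating the whole backlog as UI tests would push the run to roughly 17.6 hours, more than double the current feedback cycle.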

A strategic pivot toward risk‑driven automation can reclaim developer productivity. By decoupling test case creation from automatic UI automation, teams can vet each proposed test against a risk matrix, favoring API contracts, data‑seeded scenarios, and targeted smoke checks. Instituting a justification step for UI tests ensures that only high‑risk user journeys consume precious execution time. Over time, this approach shrinks the suite, shortens feedback loops, and aligns testing investments with business outcomes, delivering measurable quality improvements without the overhead of an 8‑hour test run.
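One way such a justification step might look is sketched below. Everything here is hypothetical: the 1 to 5 likelihood and impact scales, the thresholds, and the names `ProposedTest`, `Level`, and `triage` are illustrative assumptions, not a process described in the source.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    """Where a proposed test ends up after triage (hypothetical categories)."""
    API = "api"
    UI = "ui"
    NOT_AUTOMATED = "not_automated"


@dataclass(frozen=True)
class ProposedTest:
    name: str
    likelihood: int            # 1 (rare) to 5 (frequent); assumed scale
    impact: int                # 1 (cosmetic) to 5 (critical); assumed scale
    api_coverable: bool        # can the behavior be verified below the UI?
    ui_justification: str = ""  # written reason why a browser is required


def triage(test: ProposedTest, min_risk: int = 6, ui_risk: int = 15) -> Level:
    """Route a proposed test using a simple likelihood x impact risk score.

    Thresholds are illustrative defaults, not recommendations.
    """
    risk = test.likelihood * test.impact
    if risk < min_risk:
        # Low-risk scenarios do not earn automation time at all.
        return Level.NOT_AUTOMATED
    if test.api_coverable:
        # Prefer the faster API level whenever it can cover the behavior.
        return Level.API
    if risk >= ui_risk and test.ui_justification.strip():
        # UI automation requires both high risk and a written justification.
        return Level.UI
    return Level.NOT_AUTOMATED


if __name__ == "__main__":
    proposals = [
        ProposedTest("discount calculation", 4, 4, api_coverable=True),
        ProposedTest("checkout journey", 4, 5, api_coverable=False,
                     ui_justification="Payment widget only renders in a browser"),
        ProposedTest("footer link color", 1, 1, api_coverable=False),
    ]
    for proposal in proposals:
        print(f"{proposal.name}: {triage(proposal).value}")
```

The point of the sketch is the ordering of the checks: risk is assessed first, the API level is the default, and a UI test is the exception that has to be argued for.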
