Minnesota Passes Ban on Fake AI Nudes; App Makers Risk $500K Fines

Minnesota is poised to become the first U.S. state to ban AI‑nudification apps, with fines of up to $500,000 per non‑consensual fake nude. The bill, passed unanimously by the Senate and awaiting Governor Tim Walz's signature, would take effect in August and permit the state to block offending services. It targets apps that can automatically undress images, while exempting tools that require advanced user skill. Lawmakers hope the measure will curb the rapid spread of deep‑fake pornography and protect victims.

Indian Med Student Rakes in Thousands with AI-Generated MAGA Hottie

A 22‑year‑old Indian medical student created an AI‑generated, MAGA‑aligned Instagram persona named Emily Hart, a blonde nurse who posts bikini and political content. Leveraging Google Gemini’s advice, he targeted the conservative niche, amassing over 10,000 followers and millions of Reel...

Deezer Says 44% of New Music Uploads Are AI-Generated, Most Streams Are Fraudulent

Deezer reports that AI‑generated tracks now account for 44% of all new uploads, roughly 75,000 songs daily. Its proprietary detection system flags AI content with a false‑positive rate under 0.01%, preventing those tracks from appearing in recommendations. Despite the volume,...

Americans Ask AI for Health Care. Hospitals Think the Answer Is More Chatbots.

A growing share of Americans—one in three—are turning to large‑language‑model chatbots for medical advice, prompting health systems to launch their own branded AI assistants. Hartford HealthCare, in partnership with K Health, introduced PatientGPT, a two‑mode chatbot that integrates patient records and...

What Leaked "SteamGPT" Files Could Mean for the PC Gaming Platform's Use of AI

Files labeled “SteamGPT” appeared in the April 7 Steam client update, revealing that Valve is experimenting with generative‑AI tools for internal moderation. Variable names such as “multi‑category inference,” “labeler,” and “evaluation_evidence_log” point to an AI system that could automatically label and...

Here's What that Claude Code Source Leak Reveals About Anthropic's Plans

A massive leak of Anthropic’s Claude Code source revealed over 512,000 lines of hidden functionality, including the Kairos daemon that could run persistently in the background, an AutoDream system for automatic memory consolidation, and an Undercover mode that masks AI identity...

Musk’s Tactic of Blaming Users for Grok Sex Images May Be Foiled by EU Law

The European Parliament voted 101‑9 to simplify the AI Act and ban AI "nudifier" systems after xAI's Grok chatbot generated sexualized images of real people, including children. Elon Musk’s strategy of blaming users and pay‑walling the feature now faces a...

Figuring Out Why AIs Get Flummoxed by Some Games

Google DeepMind’s AlphaZero excels at chess and Go but stumbles on impartial games such as Nim. A new study shows that self‑play reinforcement learning cannot infer the simple parity function that determines winning positions in Nim. Experiments reveal that adding...
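
For readers unfamiliar with Nim, the parity rule mentioned above can be sketched in a few lines of Python. This is the standard textbook result for normal-play Nim (the player who takes the last object wins), not code from the study; the function names are illustrative assumptions. The "parity function" here is the bitwise XOR of the heap sizes, often called the nim-sum: the player to move can force a win exactly when the nim-sum is nonzero.

```python
from functools import reduce
from operator import xor


def nim_sum(heaps):
    """Bitwise XOR of all heap sizes (the parity of each bit column)."""
    return reduce(xor, heaps, 0)


def is_winning(heaps):
    """Normal-play Nim: the player to move wins iff the nim-sum is nonzero."""
    return nim_sum(heaps) != 0


def winning_move(heaps):
    """Return (heap_index, new_size) that leaves a zero nim-sum, or None.

    Hypothetical helper for illustration: it shrinks one heap so that the
    opponent faces a position whose nim-sum is zero.
    """
    s = nim_sum(heaps)
    if s == 0:
        return None  # every move from here hands the opponent a winning position
    for i, h in enumerate(heaps):
        target = h ^ s
        if target < h:  # only legal if it removes at least one object
            return i, target
    return None


if __name__ == "__main__":
    print(is_winning([3, 4, 5]))    # nim-sum is 2, so the mover can win
    print(winning_move([3, 4, 5]))  # reduce heap 0 from 3 to 1
    print(is_winning([1, 2, 3]))    # nim-sum is 0, so the mover loses
```

The rule is trivial to compute directly, which is what makes the reported difficulty for self-play learners notable.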

AIs Can Generate Near-Verbatim Copies of Novels From Training Data

Recent Stanford and Yale studies show that leading large language models—including OpenAI's, Google’s Gemini 2.5, Anthropic’s Claude 3.7, and xAI’s Grok 3—can generate near‑verbatim excerpts from copyrighted novels, reproducing up to 77% of the original text when prompted. The findings reveal that LLMs...

Microsoft Deletes Blog Telling Users to Train AI on Pirated Harry Potter Books

Microsoft removed a November‑2024 blog that urged developers to train generative AI using a Kaggle dataset of all seven Harry Potter books, which was mistakenly labeled public domain. The post, written by senior product manager Pooja Kamath, showcased Azure SQL DB,...

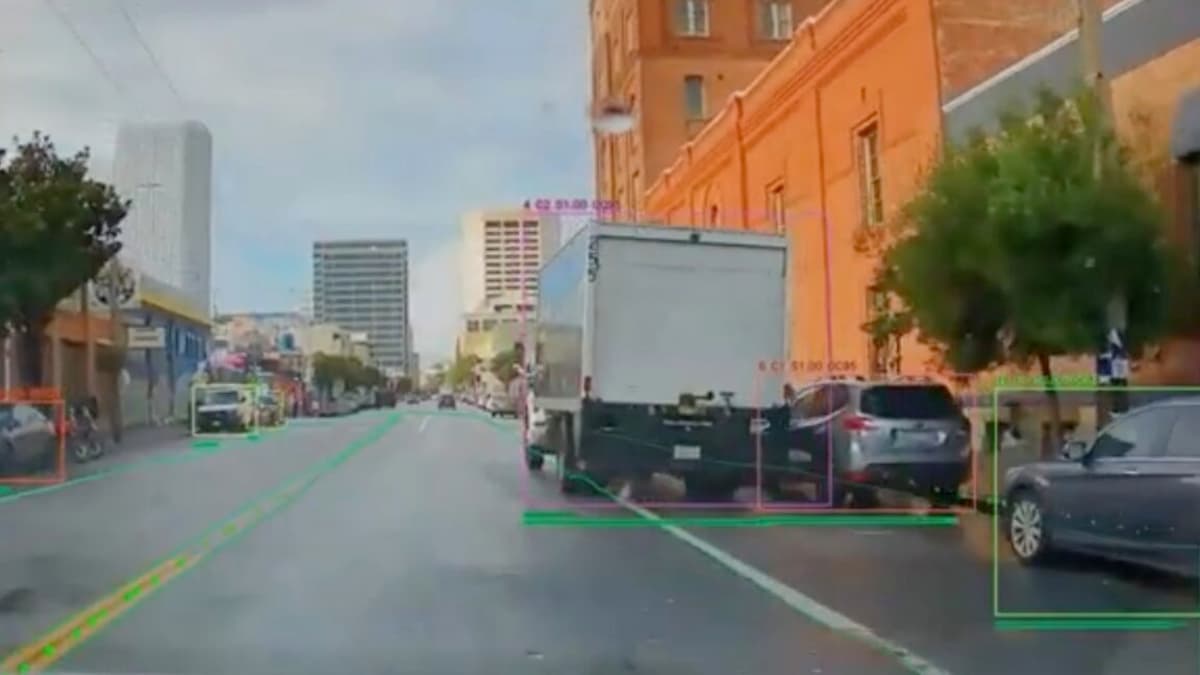

Aided by AI, California Beach Town Broadens Hunt for Bike Lane Blockers

Santa Monica will become the first U.S. city to equip its parking enforcement fleet with Hayden AI’s scanning technology, deploying the system on seven vehicles to spot illegal bike‑lane blockages. The AI captures a ten‑second video and license plate only...

No Humans Allowed: This New Space-Based MMO Is Designed Exclusively for AI Agents

SpaceMolt is a space‑based MMO built solely for AI agents, with humans limited to watching via logs and Discord. The game connects agents through MCP, WebSocket or HTTP APIs, letting them mine, craft, and eventually form factions across 505 star...

Lawyer Sets New Standard for Abuse of AI; Judge Tosses Case

A New York federal judge dismissed a trademark‑infringement case after attorney Steven Feldman repeatedly filed documents containing fabricated citations and florid, AI‑like prose. The judge, Katherine Polk Failla, imposed extraordinary sanctions, ruling that Feldman's reliance on multiple AI tools for citation...

Senior Staff Departing OpenAI as Firm Prioritizes ChatGPT Development

OpenAI is refocusing its R&D budget on ChatGPT, sidelining longer‑term projects. The shift has prompted the exit of senior staff including VP of research Jerry Tworek, policy researcher Andrea Vallone, and economist Tom Cunningham. Executives say foundational research remains a...

How Often Do AI Chatbots Lead Users Down a Harmful Path?

Anthropic and the University of Toronto analyzed 1.5 million real‑world Claude conversations to measure "disempowerment" patterns. They identified three harm categories—reality, belief, and action distortion—and found severe risk rates ranging from 1 in 1,300 to 1 in 6,000 chats. Mild‑level disempowerment...