Architecting Modern AI Systems

The panel discussion centered on architecting modern AI systems, using a recent mental‑health hackathon as a case study. Organizers leveraged a self‑service Kubernetes platform on BuzzHPC to evaluate over a thousand team submissions, enabling rapid scoring, leaderboards, and a surprise hidden dataset that spurred intense competition. Key insights included the value of sovereign, renewable‑powered GPU clusters that keep data within Canadian jurisdiction while scaling globally, and the strategic advantage of open‑source models hosted on Buzz to cut token expenses and gain fine‑grained control over outputs. Participants highlighted how agent harnesses allow seamless model swapping, reducing engineering overhead and supporting diverse use cases. Notable moments featured a viral AI‑generated painting controversy illustrating public bias against recognizable AI signatures, and a discussion of GPU pricing dynamics where older A100 units remain cost‑effective despite newer Blackwell GPUs offering performance gains. The conversation also underscored the importance of built‑in guardrails, such as Model Armor, to meet enterprise governance requirements. Overall, the dialogue emphasized that modern AI architecture must balance platform reliability, tokenomics, and hardware selection while ensuring data sovereignty and sustainable compute. Companies that adopt flexible, locally hosted infrastructures can accelerate innovation, control costs, and mitigate regulatory risks.
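The model-swapping point above can be illustrated with a minimal sketch. It assumes a harness that depends only on a small interface, so that a different model can be substituted without touching the agent logic. Every name here (`ChatModel`, `EchoModel`, `UppercaseModel`, `AgentHarness`) is hypothetical and was not described by the panel; the "models" are local stand-ins that make no network calls.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical interface: anything that turns a prompt into a reply."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in for one hosted model; simply echoes the prompt."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class UppercaseModel:
    """Stand-in for a second model with different output behaviour."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt.upper()}"


class AgentHarness:
    """Owns the prompt-building logic; the model behind it is replaceable."""

    def __init__(self, model: ChatModel) -> None:
        self.model = model

    def swap_model(self, model: ChatModel) -> None:
        # Only the backend changes; run() stays exactly the same.
        self.model = model

    def run(self, task: str) -> str:
        prompt = f"Task: {task}"
        return self.model.complete(prompt)


harness = AgentHarness(EchoModel("model-a"))
first = harness.run("summarize")

harness.swap_model(UppercaseModel("model-b"))
second = harness.run("summarize")

print(first)
print(second)
```

Because `run` only ever calls `complete`, adding another backend in this sketch means writing one more small class rather than changing the harness itself.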

What's Special About Meta's Multi-Agent Systems

Meta’s latest presentation detailed a multi‑agent system designed to police short‑form video at the scale of hundreds of millions of daily views. The talk highlighted two core threats: modality‑misalignment, where a video’s audio or text conflicts with visual content,...

The Latency Goldilocks Zone Explained

The video explores iFood’s new conversational agent, ILO, which aims to move recommendation engines from a reactive, click‑based model to a proactive, AI‑driven experience. Rafael, head of innovation, and Daniel, data‑science manager, explain how ILO combines a rich user profile...

Building MCP Before MCP Existed: Inside Despegar's Sofia Agent

Despegar’s new AI concierge, Sofia, is a home‑grown travel assistant that predates the industry‑wide MCP standard. The company built a multi‑agent platform where a core "brain" called Chappie powers category‑specific flows—flights, hotels, cars, activities—allowing any squad to create and customize...

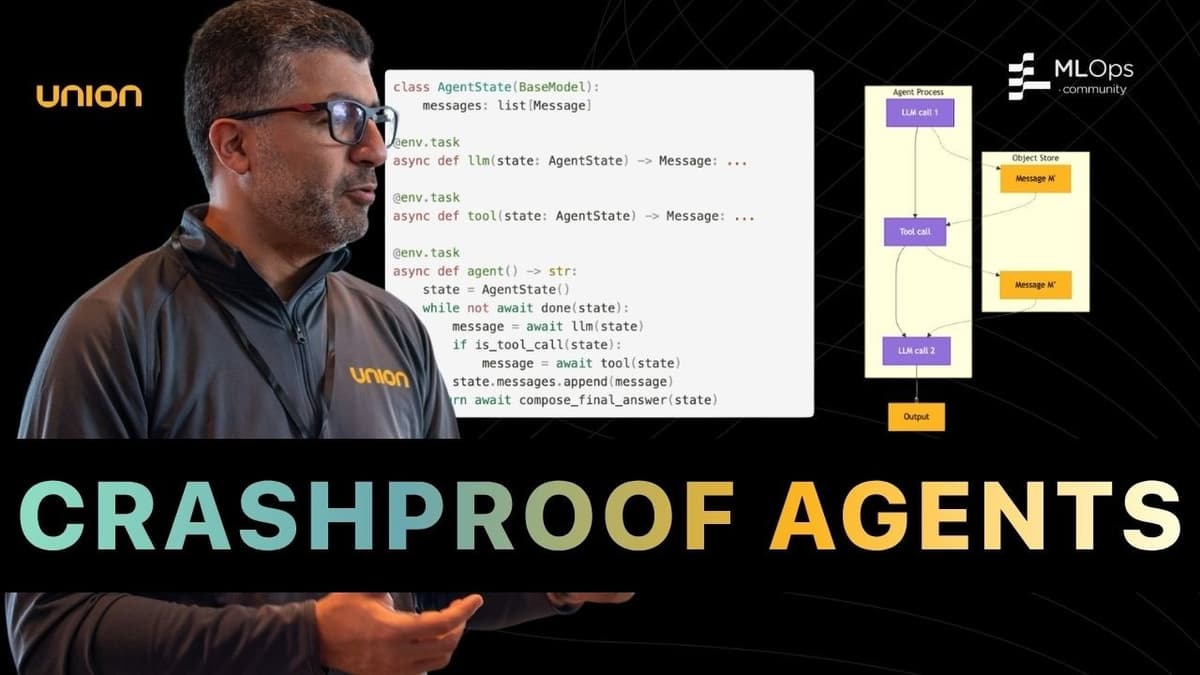

Building AI Agents That Survive Production

The Seattle AI agents conference opened with Demetrios Brinkman introducing Union AI CTO Hayam, who framed the session around building AI agents that can survive real‑world production. Hayam highlighted the gap between lab‑tested prototypes and the harsh realities of deployment—memory...

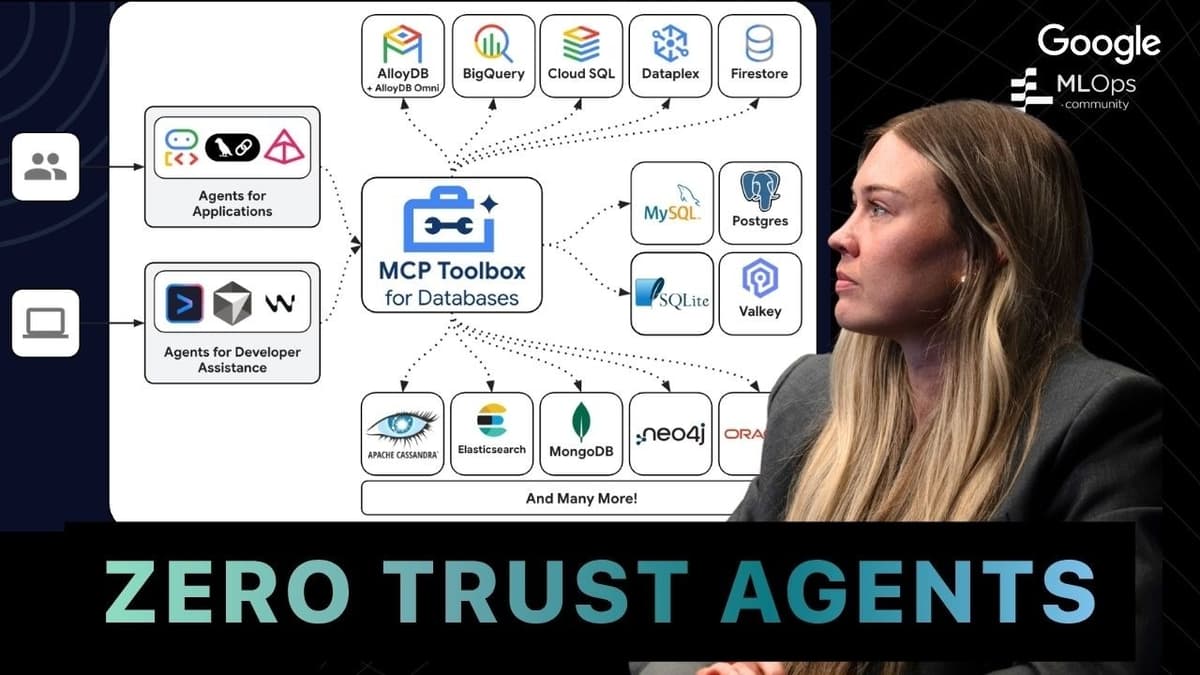

Stop AI Agents From SQL Injecting Your Database

Avery Kit, a Google staff engineer, explained how his team safeguards AI‑driven database access using the MCP Toolbox. The framework offers a self‑hosted, customizable layer that abstracts credentials, enforces connection pooling, and integrates with Google Cloud services, enabling developers to...

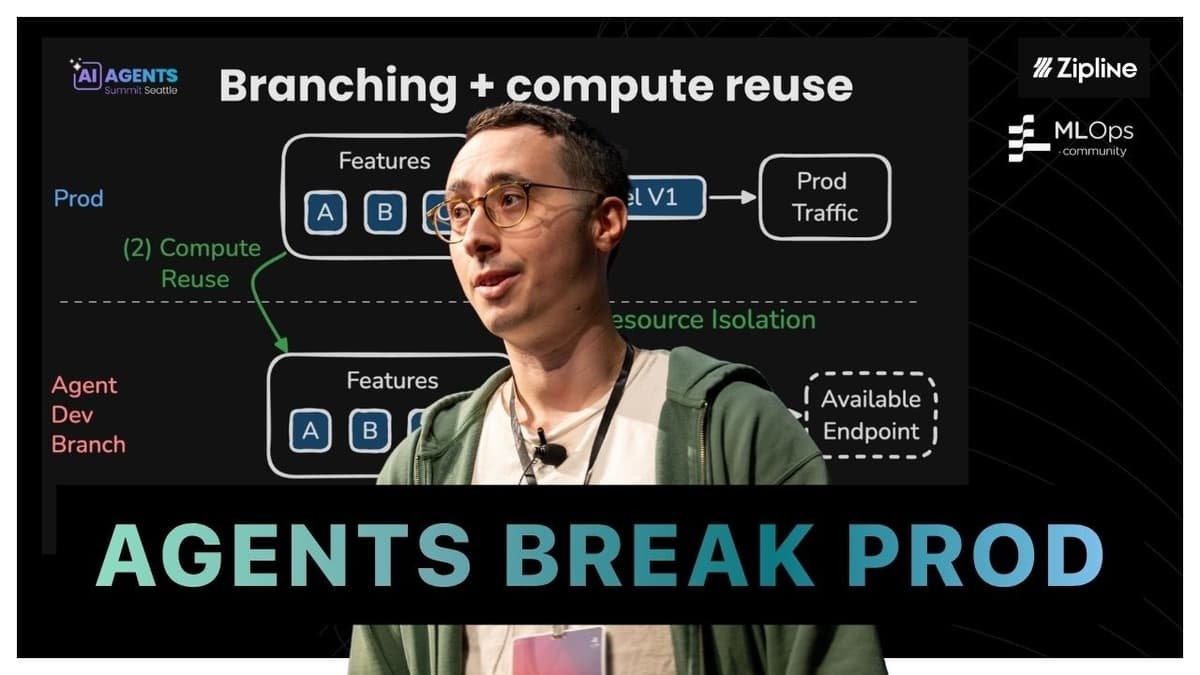

Why AI Agents Shouldn't Replace Your Fraud Models

The talk centered on why AI agents should augment, not replace, fraud detection and other high‑stakes ML systems. Ferran Sanoyan highlighted Chronon, an open‑source data‑foundation platform originally built at Airbnb to streamline feature engineering, and described how it now powers...

Getting Humans Out of the Way: How to Work with Teams of Agents

The conversation centers on how developers can "get humans out of the way" by building robust validation pipelines for AI‑driven coding agents. Rather than manually QA every feature, engineers define clear criteria—such as visual regression walk‑throughs and custom lint rules—so...

OpenXData Conference

OpenXData is hosting a full‑day conference on April 29, 2026 featuring two parallel tracks that explore AI‑native data platforms, data engineering for AI, and large‑scale cost‑performance optimization. Speakers include senior technologists from Uber, Snowflake, Microsoft, IBM, Anthropic, Walmart, Booking.com, and other industry...

A New Kind of Marketplace

The conversation between Pedro of OLX and Dona of Prosus centers on redesigning online classifieds, specifically real‑estate and automotive marketplaces, through AI‑driven agents. Rather than a pure chat interface, they champion a hybrid UI that blends conversational prompts with clickable shortcuts, allowing...

The Modern Software Engineer

The discussion centers on how AI‑driven coding agents are reshaping the role of the modern software engineer. By automating routine implementation and offering instant learning pathways, these agents enable developers to acquire front‑end or back‑end skills in weeks rather than...

AI Agents Summit Seattle

AI Agents Summit Seattle promises practical, hands‑on guidance for firms grappling with the growing gap between AI agent capabilities and operational stability. Speakers, including industry leaders from 47Billion and Yi Zhou, warned that the rapid rollout of agents in 2025...

Production Sub-Agents for LLM Post Training

The talk introduced a new production workflow for post‑training large language models, championed by Pinterest’s growth AI lead. Traditional pipelines required a linear, manual sequence—data cleaning, model selection, hyper‑parameter tuning, evaluation loops, and reinforcement learning—taking four to six weeks. By...

Fixing GPU Starvation in Large-Scale Distributed Training

The video examines a pervasive problem in large‑scale distributed machine‑learning: GPUs sit idle because the data pipeline cannot feed them fast enough. Engineers at Uber and former Google staff explain that the bottleneck is not model architecture or quantization,...

Practical Security for AI-Generated Code

Milan Williams, product manager at Semgrep, opened the session by warning that AI‑driven code generators are no longer limited to single‑line suggestions; they now produce thousands of lines of code and execute shell commands with elevated credentials. He framed the...