![MCP Dev Summit [Day 1] Ft. Anthropic, Hugging Face, Open AI & Microsoft](/cdn-cgi/image/width=1200,quality=75,format=auto,fit=cover/https://i.ytimg.com/vi/jfd1UBEYJs0/maxresdefault.jpg)

MCP Dev Summit [Day 1] Ft. Anthropic, Hugging Face, Open AI & Microsoft

The third MCP Dev Summit kicked off in New York, showcasing the rapid maturation of the Agentic AI Foundation (AAIF) and its flagship Model Context Protocol (MCP). Organizers highlighted a surge in community participation, a slate of global events, and a leadership transition that positions the foundation for sustained growth. Within just four months, AAIF’s membership grew to 170 organizations, more than double CNCF’s early‑stage numbers, while sponsors ranging from Anthropic to Google Cloud underscored industry confidence. The summit also unveiled a worldwide tour covering seven cities and two flagship conferences (Agent Con and MCPCon), and announced Masin Gilbert, a PhD‑trained AI researcher with an MBA from Wharton, as the new executive director.

MCP’s adoption metrics were a focal point: over 110 million SDK downloads per month, a pace that React took three years to achieve, illustrating clear market demand for a universal agentic‑AI interface. Speakers emphasized the importance of open‑source interoperability, noting that the foundation has instituted a formal project life cycle (growth, impact, emeritus) to streamline contributions and governance.

The rapid expansion signals that MCP is becoming the de facto standard for connecting AI agents to data, services, and legacy systems. For enterprises, this means faster integration, reduced duplication of effort, and a clear roadmap for participating in an open‑source ecosystem that could shape the next generation of autonomous applications.

Choosing the Right Model Is Hard. Maintaining Accuracy Is Harder.

Ash Lewis, founder and CEO of Fast Labs, opened the session by highlighting a growing pain point for AI product teams: picking the right large‑language model (LLM) and keeping its performance steady once it’s in production. He noted that the...

Stop Shipping on Vibes — How to Build Real Evals for Coding Agents

At the Coding Agents Conference, Braintrust’s developer advocate Jessica Wang warned that many AI coding teams are “shipping on vibes,” deploying agents without solid evaluation frameworks. She emphasized that without real eval datasets, scoring systems, and controlled experiments, organizations are...

Decomposing the Agent Orchestration System: Lessons Learned

At the Coding Agents Conference, Union.ai’s chief ML engineer Niels Bantilan warned that building agents is less about novel features and more about resilient infrastructure. He emphasized that durable, self‑healing, and easily debuggable systems prevent costly downtime. Bantilan highlighted Flyte’s...

How to Make a Coding Agent a General Purpose Agent - Harrison Chase

At the Coding Agents Conference on March 3, 2026, LangChain CEO Harrison Chase and Arcade AI CTO Sam Partee delivered a keynote arguing that the real barrier to scaling AI agents is not model intelligence but foundational infrastructure. They highlighted...

How AI Agents Store Memories

The video explores how artificial‑intelligence agents manage memory, contrasting traditional file‑system storage with newer, more dynamic approaches. It highlights the distinction between personalization memory and task‑execution memory, and why the choice of storage architecture matters for different agent designs. For agents...

Your Code Remembers Where It Broke

The video introduces Temporal’s ability to remember exactly where a piece of code failed and resume execution once the error is fixed. This feature eliminates the traditional need to restart a server or rewrite logic after a syntax or runtime...

Lessons From 25 Trillion Tokens — Scaling AI-Assisted Development at Kilo

Kilo’s co‑founder and CEO Scott outlined how the company processed more than 25 trillion tokens since its May launch and used that data to reshape software engineering. By treating 2027‑level AI tools as core collaborators, Kilo shifted developers from manual coders...

Performance Optimization and Software/Hardware Co-Design Across PyTorch, CUDA, and NVIDIA GPUs

The conversation centers on performance optimization and software‑hardware co‑design spanning PyTorch, CUDA, and NVIDIA GPUs, highlighted by the launch of SageMaker HyperPod—a service that keeps GPUs pre‑warmed for instant swapping. The speaker also promotes his new O'Reilly book that stitches...

Explaining Durable Execution

The video explains Temporal’s durable execution model, emphasizing that workflow code must be deterministic. By restricting programs to repeatable logic—no random number generators or external nondeterministic calls—Temporal ensures that rerunning a workflow with identical inputs yields the same results. Key insights...
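To make the determinism requirement concrete, here is a minimal sketch of replay in plain Python. It does not use the Temporal SDK, and `History`, `side_effect`, and `workflow` are hypothetical names invented for illustration. The idea it shows, under those assumptions, is that a nondeterministic value is recorded once in an event history and read back on replay, so rerunning the workflow with the same inputs yields the same result.

```python
import random


class History:
    """Hypothetical event log: records side-effect results and replays them in order."""

    def __init__(self, events=None):
        self.events = list(events or [])
        self.cursor = 0

    def side_effect(self, fn):
        # On replay, return the recorded value instead of calling fn again.
        if self.cursor < len(self.events):
            value = self.events[self.cursor]
        else:
            value = fn()
            self.events.append(value)
        self.cursor += 1
        return value


def workflow(history, order_total):
    # The only nondeterministic step is routed through the history.
    discount = history.side_effect(lambda: random.choice([0, 5, 10]))
    return order_total - discount


# First execution: the random discount is generated and recorded.
first_run = History()
result = workflow(first_run, 100)

# Replay from the recorded events: same inputs, same result.
replayed = workflow(History(first_run.events), 100)
assert replayed == result
```

Calling `random.choice` directly inside `workflow` would break this property, because the replay could draw a different discount than the original run.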

The Promise of Serverless

The video revisits the original promise of serverless computing, explaining how the term emerged organically as developers imagined writing code, uploading it to a massive cloud, and letting the platform handle execution without manual server management. It highlights key attributes such...

The Hard Truth About Building AI Agents

The speaker warns that building agentic AI requires careful, selective instruction design because developers cannot embed every rule or constraint into prompts. Even with very large context windows, empirical limits mean only a fraction of tokens should be used effectively,...

“I’ll Burn Out 2% in Minutes” The Brutal Reality of GPU Clusters

The speaker describes stress-testing new GPU clusters by immediately pushing them to maximum load and routinely causing about 2% of units to fail within minutes because many accelerators are engineered to run extremely hot and rely on substantial cooling. He...

Write Reliable Software with Temporal

The video introduces Temporal’s durable execution model as a way to boost developer productivity when building agentic systems. It explains how Temporal abstracts reliability concerns, allowing developers to write ordinary code that runs to completion despite cloud‑scale failures, flaky services,...

MLOps Coding Skills: Bridging the Gap Between Specs and Agents

The article introduces Agent Skills, a lightweight markdown‑based tool that injects organization‑specific engineering standards into AI coding agents. By converting sections of the MLOps Coding Course into SKILL.md files, the author shows how agents can automatically apply preferred tools such...

Using Agents in Production: Past Present and Future // Euro Beinat

Prosus announced it has shipped nearly 8,000 AI agents, with only 15% achieving production status while the remainder function as learning experiments. The data was presented at the Computer History Museum’s Coding Agents virtual conference on March 3, where industry...

Context Engineering 2.0: MCP, Agentic RAG & Memory // Simba Khadder

Simba Khadder unveiled Redis Context Engine at the Coding Agents Conference, positioning it as "Context Engineering 2.0" that merges retrieval, tool invocation, and memory into a single MCP‑native surface. The platform treats documents, databases, events, and live APIs as addressable resources via...

Enterprise-Ready MCP // Jiquan Ngiam

The Coding Agents Conference on March 3 will feature Jiquan Ngiam discussing the rapid enterprise adoption of agents and the Model Context Protocol (MCP). Over 80% of professional developers now use AI tools daily, and agentic coding platforms such as Claude Code...

MLflow Leading Open Source

Databricks’ leaders Corey Zumar, Jules Damji, and Danny Chiao discussed the latest evolution of MLflow on the MLOps Podcast. The open‑source platform is being rebuilt to handle generative AI, agent workloads, and production‑grade governance, moving beyond its original data‑science‑only focus....

Simulate to Scale: How Realistic Simulations Power Reliable Agents in Production // Sachi Shah

At the Computer History Museum’s Coding Agents Conference, Sachi Shah presented how realistic, scalable simulations are essential for deploying reliable AI agents in production. She explained that simulations can mirror messy real‑world interactions—including multilingual dialogue, emotional states, background noise, and...

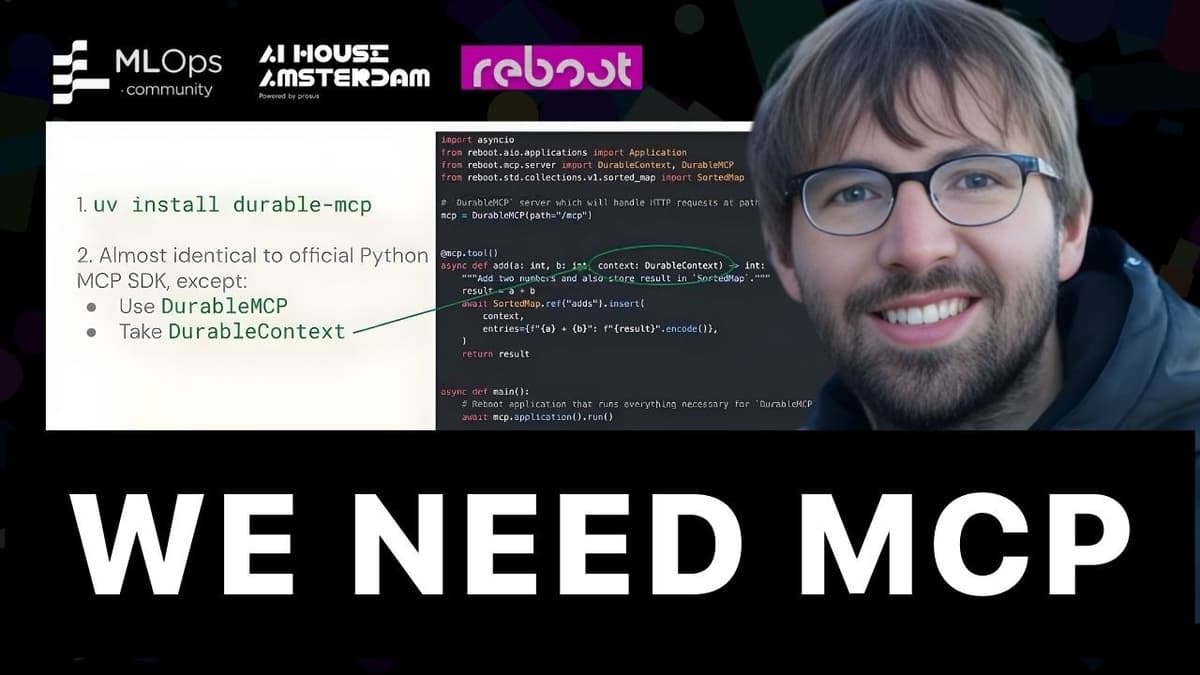

Yes, We Do Need MCP

The upcoming Coding Agents Conference will feature a deep‑dive into MCP, a stateful communication protocol designed for AI agents. Organizers argue that MCP’s built‑in statefulness differentiates it from gRPC and HTTP, enabling conversations to resume after interruptions. The talk will...

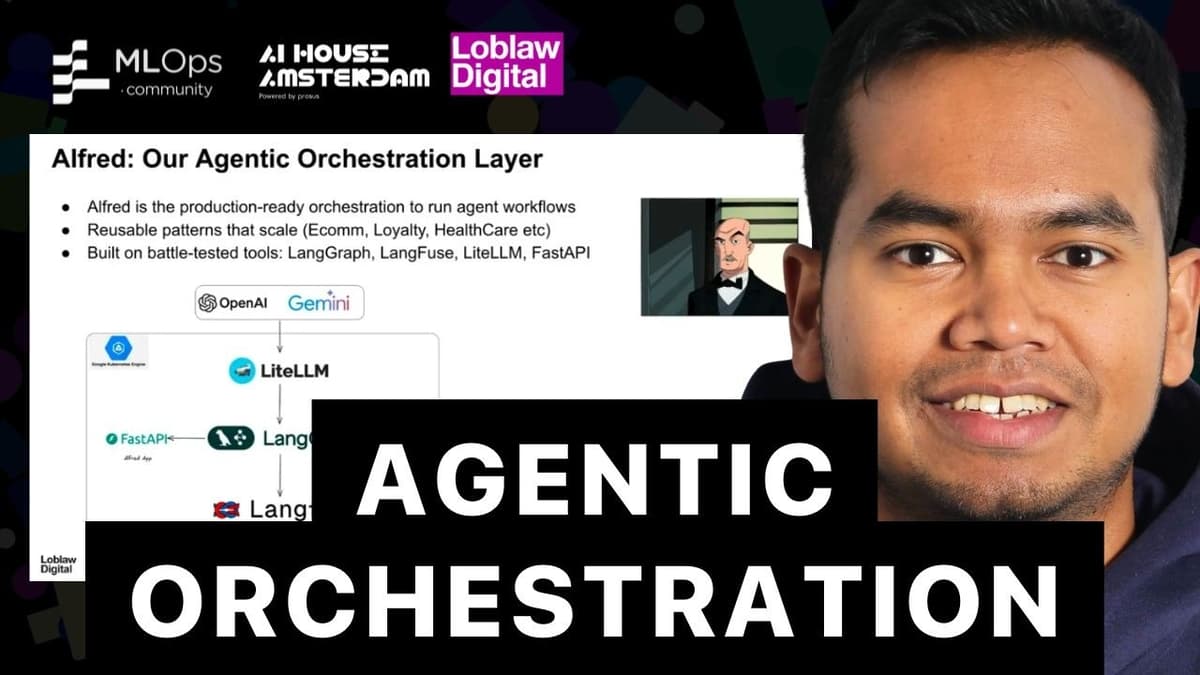

Building an Orchestration Layer for Agentic Commerce at Loblaws

The talk introduced Alfred, Loblaws’ production‑grade orchestration layer designed to power agentic commerce across its massive retail ecosystem. Built on Google Kubernetes Engine with a FastAPI gateway, Alfred abstracts LLM providers, leverages LangChain‑style execution graphs, and connects to over fifty...

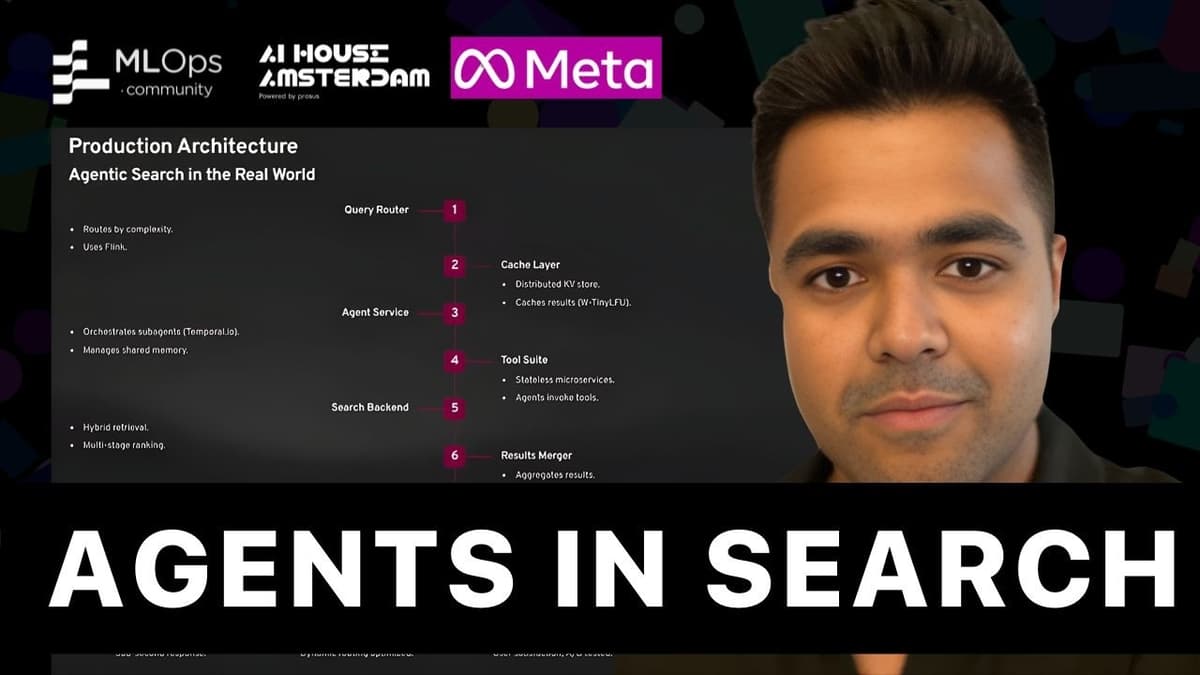

Agents as Search Engineers // Santoshkalyan Rayadhurgam

Santoshkalyan Rayadhurgam argues that the foundational assumption of classic retrieval—users supply fully formed intent—is collapsing, prompting a transition from deterministic, stateless pipelines to agentic, stateful search systems that reason across turns. He contrasts three generations: lexical BM25 pipelines, vector‑based RAG models,...

How AI Covered a Human’s Paternity Leave // Quinten Rosseel

During a head of data’s paternity leave, a logistics SaaS firm relied on an AI analyst named “Wobby” to handle incoming data questions. The agent answered roughly 60% of queries, demonstrating that a well‑engineered AI can fill staffing gaps without...

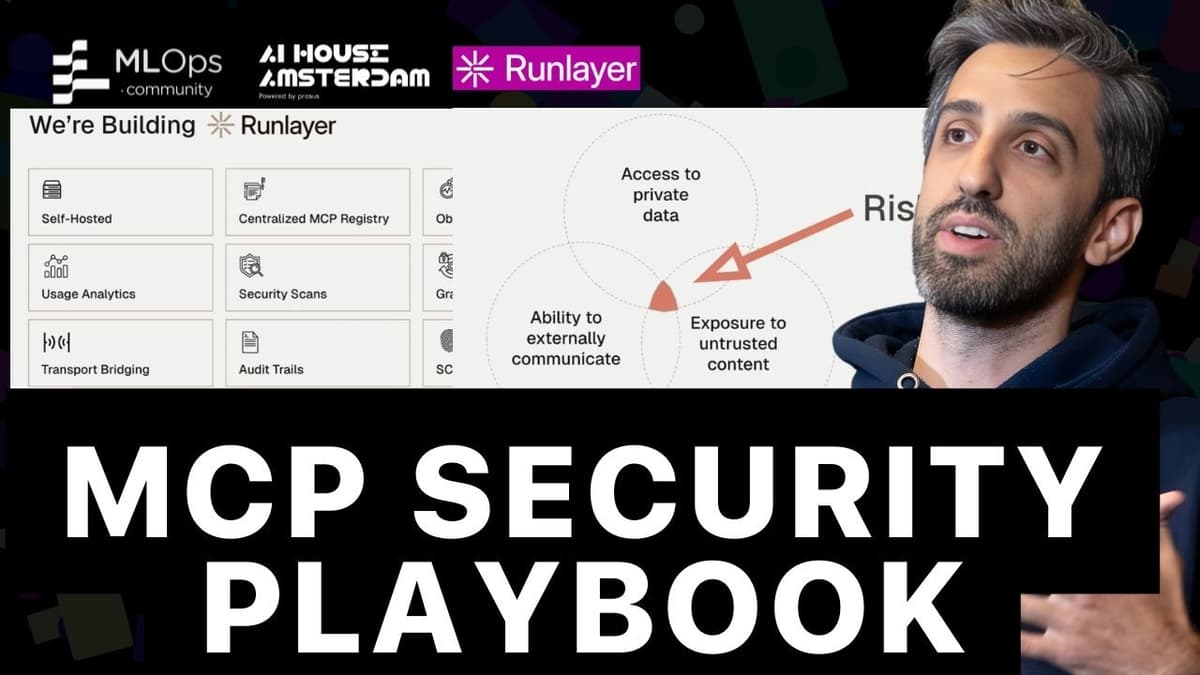

MCP Security: The Exploit Playbook (And How to Stop Them)

The video spotlights the rapid rise of the Model Context Protocol (MCP) standard since its November 2024 launch and the stark security lag that now threatens its expanding ecosystem. While major platforms are racing to support MCP, developers are left scrambling to...

The Future of Coding: AI Agents & the Next Tech Revolution // Ricky Doar

The conversation centers on Cursor, an AI‑driven coding assistant, and how developers are adapting to a new paradigm where large language models act as pair programmers or autonomous agents. Ricky Doar and his guest discuss the rapid adoption of Cursor...

Fast & Asynchronous: Drift Your AI, Not Your GPU Bill // Artem Yushkovskiy

The talk introduced ASEA, an open‑source asynchronous‑actor framework designed to replace traditional batch pipelines for generative AI workloads. By decoupling each processing step into self‑hosted GPU actors that communicate via message queues, the team at a global food‑delivery platform eliminated...
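The actor-and-queue pattern described here can be sketched with the standard library alone. This is a minimal illustration, not the framework's API: `actor`, `run_pipeline`, and the two stand-in handlers are hypothetical, and in-process `asyncio.Queue` objects take the place of a real message broker and GPU workers.

```python
import asyncio


async def actor(handler, inbox, outbox):
    """Hypothetical actor: pull a message, process it, forward the result."""
    while True:
        message = await inbox.get()
        if message is None:  # sentinel: shut down and propagate downstream
            await outbox.put(None)
            break
        await outbox.put(handler(message))


async def run_pipeline(prompts):
    q_in, q_mid, q_out = asyncio.Queue(), asyncio.Queue(), asyncio.Queue()
    # Stand-in handlers; a real step would call a model on a GPU.
    tasks = [
        asyncio.create_task(actor(str.strip, q_in, q_mid)),
        asyncio.create_task(actor(str.upper, q_mid, q_out)),
    ]
    for prompt in prompts:
        await q_in.put(prompt)
    await q_in.put(None)

    # Drain the final queue until the sentinel arrives.
    results = []
    while (item := await q_out.get()) is not None:
        results.append(item)
    await asyncio.gather(*tasks)
    return results


results = asyncio.run(run_pipeline(["  hello ", " world  "]))
```

Because each step only reads from its inbox and writes to its outbox, a step can be scaled or replaced without touching the others, which is the decoupling the talk attributes to the actor approach.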

Beyond the Gold Standard: Evaluating and Trusting Agents in the Wild // Sanjana Sharma

AI agents look impressive in demos, but production reliability hinges on context, evaluation, and trust. Sanjana Sharma argues enterprises must shift from model‑first to system‑first thinking, embedding explicit business rules, subject‑matter‑expert (SME) heuristics, and versioned context layers. The talk outlines three...

Speed and Scale: How Today's AI Datacenters Are Operating Through Hypergrowth

The video, hosted by Chris, co‑founder and CEO of NetBox Labs, examines the unprecedented speed and scale of today’s AI datacenter construction. He frames NetBox as the de facto system of record that tracks everything from power and cooling to rack‑level configurations, giving...