Can AI Do Neuroscience without Understanding?

Why It Matters

Predictive AI can accelerate applications, but prediction without understanding limits generalization, cross‑domain insight, and long‑term scientific value.

Key Takeaways

- AlphaFold predicts protein structures but offers no human‑readable theory.

- Transformers model neural recordings, yet struggle to reveal circuit computations.

- Mechanistic interpretability seeks human‑interpretable features inside AI models.

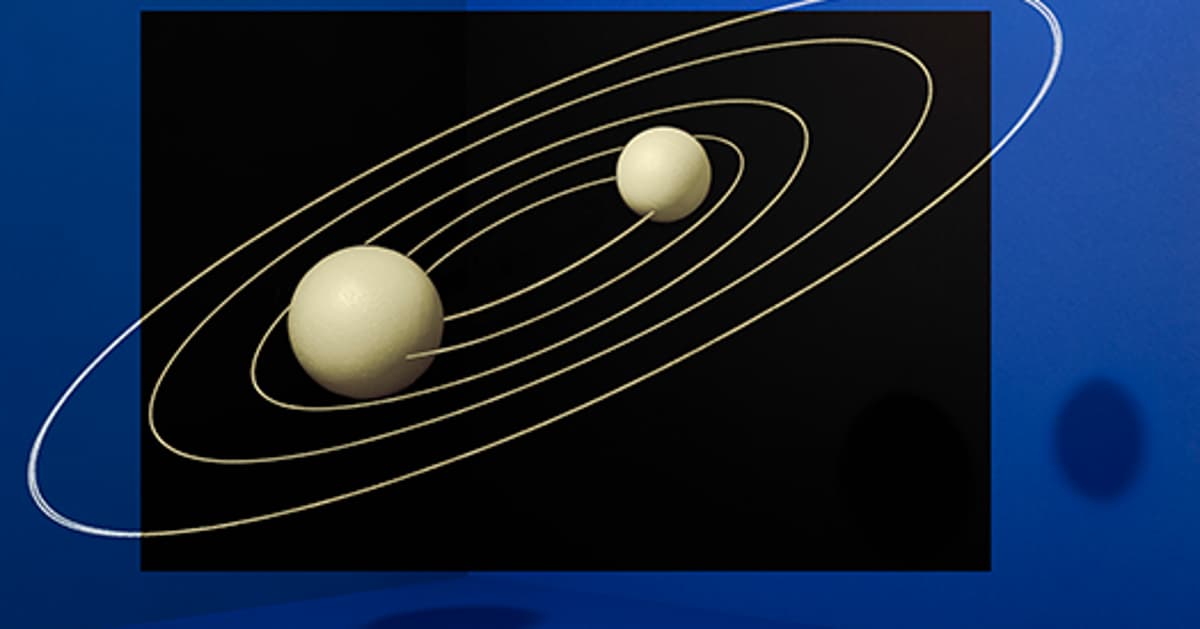

- Vafa's transformer predicted orbits but could not infer the law of gravity.

- Compression yields portable theories that generalize beyond raw predictions.

Pulse Analysis

The rise of AI‑driven prediction has reshaped how scientists approach discovery. Tools like AlphaFold predict protein structures with near‑experimental accuracy, yet they provide no explanatory framework that researchers can internalize. Historically, breakthroughs such as the Hodgkin‑Huxley model combined precise prediction with a compact, mechanistic description, enabling mental simulation and further hypothesis generation. Today’s deep‑learning systems sidestep that compression step, delivering answers that are difficult for humans to parse, which raises questions about the role of understanding in modern science.

In neuroscience, transformer architectures now ingest massive Neuropixels and calcium‑imaging datasets, reproducing firing patterns with impressive fidelity. However, without interpretability, these models act as black boxes, offering little insight into the computations performed by cortical circuits. The emerging field of mechanistic interpretability attempts to reverse‑engineer these networks, extracting human‑readable features. A recent paper by Keyon Vafa and colleagues illustrates the limitation: a transformer trained on millions of simulated planetary orbits predicted positions accurately but could not recover Newton's law of universal gravitation, underscoring that high‑dimensional prediction does not guarantee discovery of underlying principles.
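To make the contrast between prediction and a compact law concrete, here is a toy sketch in Python. It is not the method from the paper described above: it assumes noise‑free synthetic data, units in which G·M = 1, and a power‑law form chosen in advance, and the names `acceleration` and `fit_power_law` are hypothetical. Under those assumptions, a log‑log least‑squares fit compresses the observations into two numbers, a constant and an exponent.

```python
import math

# Assumption for illustration only: units chosen so that G * M = 1.
G_TIMES_M = 1.0


def acceleration(r):
    """Gravitational acceleration magnitude at distance r (inverse-square law)."""
    return G_TIMES_M / r**2


def fit_power_law(rs, accs):
    """Least-squares fit of log(a) = log(k) + p * log(r); returns (k, p)."""
    xs = [math.log(r) for r in rs]
    ys = [math.log(a) for a in accs]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    p = sxy / sxx                      # fitted exponent
    k = math.exp(mean_y - p * mean_x)  # fitted constant
    return k, p


# Synthetic "observations" at distances between 0.5 and 5.25.
radii = [0.5 + 0.25 * i for i in range(20)]
observed = [acceleration(r) for r in radii]

k, p = fit_power_law(radii, observed)
print(f"fitted law: a = {k:.3f} * r^{p:.3f}")

# The compact law extrapolates far outside the range it was fitted on.
far_prediction = k * 100.0**p
print(f"predicted acceleration at r = 100: {far_prediction:.6f}")
```

The fitted exponent comes out close to -2, and the two‑parameter law can be carried to distances far outside the fitted range. That is the sense in which a compressed theory is portable, whereas a purely predictive model gives no such guarantee beyond its training data.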

The practical implications are profound. Industries betting on AI‑generated drug candidates or neuro‑stimulation protocols may reap short‑term gains, yet the lack of explanatory models hampers regulatory approval, safety validation, and transfer of knowledge across domains. Funding agencies continue to prioritize outcomes over theory, but the long‑term health of the scientific enterprise depends on compressing data into portable, testable theories. Investing in interpretability research and hybrid approaches that blend predictive power with mechanistic insight will help ensure that AI augments, rather than replaces, the human drive to understand.
