Why It Matters

Gödel’s incompleteness theorems reveal fundamental limits on formal proof and automated reasoning, with consequences for fields from software verification to AI alignment.

Key Takeaways

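- Gödel proved that no consistent, effectively axiomatized system capable of expressing basic arithmetic can prove all true arithmetic statements.

- Hilbert’s quest for a complete, provably consistent axiomatic foundation could not be carried out in its original form.

- Cryptographic hardness assumptions are unproven conjectures about computational complexity; their link to incompleteness is an analogy, not a derivation.

- The theorems inform AI safety debates by exposing limits of formal verification.

- Gödel’s work helped spark computability theory and Turing’s analysis of machines.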

Pulse Analysis

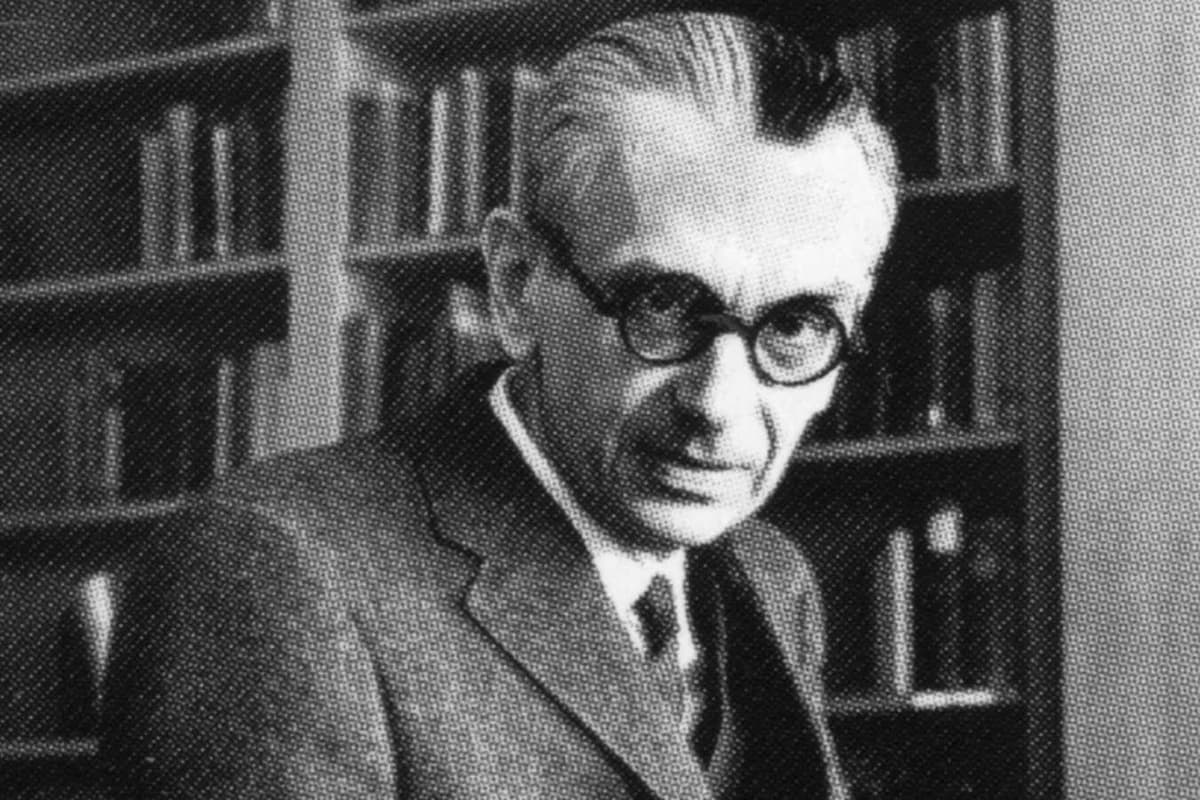

The early 20th‑century mathematical community rallied around David Hilbert’s bold proposal to formalize all of mathematics on a finite, complete set of axioms and to prove that system consistent. Kurt Gödel, an Austrian logician born in 1906, entered this debate as a young researcher at the University of Vienna; he joined the Institute for Advanced Study only later. In 1931, his groundbreaking paper “Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I” introduced the incompleteness theorems, undercutting the prevailing optimism. By demonstrating that any consistent, effectively axiomatized system capable of expressing basic arithmetic contains true statements it cannot prove, Gödel forced mathematicians to accept an intrinsic ceiling on formal deduction.

The result comes in two parts: first, no consistent, effectively generated theory that can express basic arithmetic proves every arithmetic truth; second, such a theory cannot prove its own consistency. This insight laid the groundwork for Alan Turing’s 1936 work on computability, which tied logical limits to the concept of a universal machine and showed that the halting problem is undecidable. In practical terms, these closely related undecidability results mean that no software verifier can automatically decide correctness for all possible programs, a reality that shapes modern formal methods: verification tools target restricted classes of programs, accept inconclusive answers, or rely on human-supplied proofs.
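The limit on universal verifiers can be illustrated with a small Python sketch of the diagonal argument behind the halting problem. This is an illustration under stated assumptions, not a proof: `claimed_halts`, `make_contrarian`, and `refute` are hypothetical names introduced here, and the candidate decider is assumed to be deterministic and to always return an answer.

```python
def make_contrarian(claimed_halts):
    """Build a program that does the opposite of what the decider predicts for it."""
    def contrarian():
        if claimed_halts(contrarian):
            while True:  # decider said "halts", so loop forever
                pass
        return None      # decider said "loops", so halt immediately
    return contrarian


def refute(claimed_halts):
    """Show how a candidate halting decider is wrong about its own contrarian.

    Assumes claimed_halts is deterministic and always returns a bool.
    """
    contrarian = make_contrarian(claimed_halts)
    if claimed_halts(contrarian):
        # We do not run contrarian here: by construction it would never return.
        return "predicted halts, but the program loops forever"
    contrarian()  # safe to run: the decider said "loops", so it returns at once
    return "predicted loops, but the program halted"


# Two naive candidate deciders, both refuted by their own contrarian.
print(refute(lambda program: True))
print(refute(lambda program: False))
```

Whatever answer a candidate decider gives about its own contrarian program, the program is built to do the opposite, so no deterministic decider of this kind can be right on every input.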

For today’s technology firms, Gödel’s legacy is a double‑edged sword. Cryptographic protocols rely on problems that are conjectured, not proven, to be computationally hard; those assumptions come from complexity theory rather than from incompleteness itself, though both fields study the limits of what formal methods can establish. At the same time, AI developers cite the theorems when arguing that fully autonomous, self‑verifying systems may remain out of reach, prompting a focus on layered safety frameworks rather than absolute guarantees. Understanding these limits helps executives allocate resources to risk mitigation, research, and regulatory compliance in an era where algorithmic decision‑making is ubiquitous.

The man who ruined mathematics
