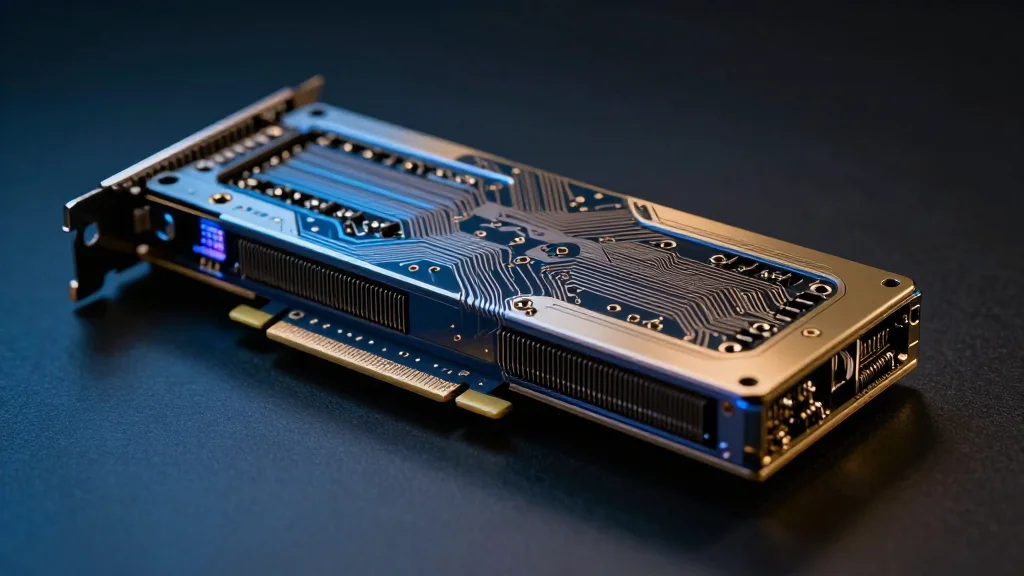

Nvidia Projects $1 Trillion in GPU Orders by 2027, Spurring B2B AI Demand

Why It Matters

The $1 trillion GPU outlook redefines the scale of enterprise AI investment, turning data‑center capacity into a strategic commodity for every major industry. Companies that secure access to Nvidia’s hardware will be able to accelerate product development, cut time‑to‑market for AI‑driven services, and lock in long‑term revenue streams from cloud and on‑premise deployments. At the same time, the forecast highlights the geopolitical stakes of AI hardware. Tighter export controls and the U.S.–China rivalry could constrain supply, prompting firms to diversify across vendors or invest in domestic chip programs. For investors and B2B decision‑makers, understanding how Nvidia’s roadmap aligns with regulatory trends will be critical to managing risk while capitalizing on the AI boom.

Key Takeaways

- Nvidia projects >$1 trillion in data‑center compute and networking revenue through 2027, up $500 billion from its prior outlook.

- Goldman Sachs maintains a $250 price target for Nvidia, implying a 36% upside from current levels.

- Dan Ives (Wedbush) said Nvidia is "alone at the top of the AI mountain" after the GTC keynote.

- Anthropic CEO Dario Amodei warned against shipping Nvidia AI chips to China, likening it to nuclear proliferation.

- Big‑tech firms are investing billions in AI‑focused data centers, with Nvidia GPUs powering the majority of new capacity.

Pulse Analysis

Nvidia’s trillion‑dollar forecast is less a surprise than a confirmation of a structural shift in how enterprises consume compute. Ten years ago, GPU spend was a niche expense for graphics rendering; today it underpins everything from large‑language‑model training to real‑time inference in finance, manufacturing, and healthcare. The guidance effectively quantifies AI as a utility, and the market is responding by treating GPU capacity as a core operating expense rather than a discretionary capex line item.

Historically, hardware cycles have been punctuated by incremental upgrades, but the Blackwell and Rubin platforms represent a generational leap in performance‑per‑watt and integration with networking fabrics. By bundling compute, storage, and high‑speed interconnects, Nvidia is creating a lock‑in effect that forces B2B customers to align their roadmaps with Nvidia’s product cadence. This dynamic gives Nvidia pricing power and a defensive moat against rival accelerators such as AMD’s MI300X or China’s home‑grown AI chips, which still lag in ecosystem maturity.

The geopolitical backdrop adds a layer of complexity. The Super Micro indictment illustrates how valuable these chips have become as strategic assets, prompting tighter export controls that could fragment the global supply chain. Companies that rely heavily on Nvidia may need to hedge against potential disruptions by diversifying suppliers or co‑investing in domestic fabs. For investors, the key risk is not demand erosion but supply constraints that could throttle the very growth Nvidia is forecasting. Monitoring regulatory developments and the pace of alternative chip development will be essential for anyone betting on the AI hardware playbook.
