Recent Posts

Social•Feb 22, 2026

Distilled Small Models Power Local AI Writing Assistants

MacBooks run AI writing assistants locally. No internet needed. That's what small language models make possible. Big AI models are powerful. But they’re also expensive to run. As AI moves into real products, that becomes a problem. SLMs are built to do specific tasks well. They’re faster, cheaper, and can even run locally. But how do small models stay capable? That's where distillation comes in. A large model teaches a smaller one how to behave and make decisions without copying everything. Swipe through the carousel to understand: Small Language Models (SLMs) and Distillation. This is part of Introduction to AI in 42 Terms (we’re now at 25/42). If this helps, give it a Like 👍. For deeper explanations, check the comments 👇. Follow me to understand the actual tech behind AI.
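To make "a large model teaches a smaller one" a bit more concrete, here is a minimal sketch of one common way to do it: soft-label distillation, where the student is trained to match the teacher's temperature-softened output probabilities. The post does not say which distillation method it has in mind, so the technique, the function names, the temperature value, and the logits below are all illustrative assumptions.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature softens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    Assumption: this is the classic soft-label objective, not necessarily
    the method the post refers to.
    """
    p = softmax(teacher_logits, temperature)  # teacher "soft labels"
    q = softmax(student_logits, temperature)  # student predictions
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical logits over a three-token vocabulary.
teacher = [4.0, 1.0, 0.5]
close_student = [3.5, 1.2, 0.4]  # roughly agrees with the teacher
far_student = [0.5, 1.0, 4.0]    # prefers a different token

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

The loss is zero when the student's distribution matches the teacher's and grows as they diverge, so minimizing it pushes the small model toward the large model's behavior without copying its weights.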

By Louis Bouchard

Social•Feb 19, 2026

Show Process, Not Perfection, in AI Take‑Home Interviews

A question I get all the time: “How do I prepare for AI engineering interviews? Especially now that companies give 24-hour take-homes?” Here’s the uncomfortable answer. Most people prepare for interviews as if they were trivia games. They memorize definitions. They collect question lists. They grind...

By Louis Bouchard

Video•Feb 17, 2026

The AI Engineer's Dilemma - Choose the Right AI System

The video centers on a pivotal design choice for AI engineers: whether to build a predictable, step‑by‑step workflow or an autonomous LLM‑driven agent. This decision shapes development speed, operational expenses, reliability, and the end‑user experience, and the speaker warns that...

By Louis Bouchard

Video•Feb 17, 2026

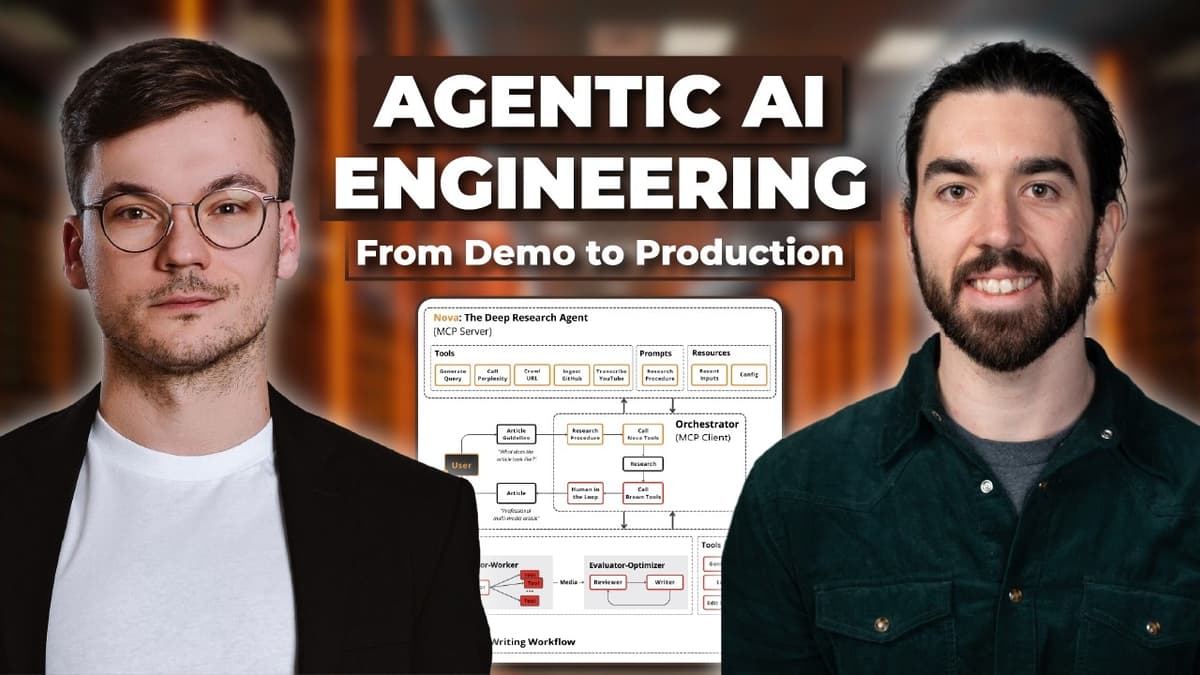

Our New Agentic AI Engineering Course!

The video announces a new Agentic AI Engineering course designed to turn Python‑savvy developers into production‑ready AI engineers capable of building trustworthy, multi‑agent systems. It highlights the industry gap between flashy demos and deployable agents, emphasizing that real‑world agents must handle...

By Louis Bouchard

Social•Feb 17, 2026

Own Your Data, Not Just Model Tuning

AI teams love tuning models. But they ignore the bike chain: data. Outsourcing labeling to people who care much less about the app’s success. Messy internal docs. No structured knowledge base. No call transcripts. No clean SOPs. Then they ask: “Why isn’t the model improving?” The highest ROI in...

By Louis Bouchard

Social•Feb 15, 2026

Synthetic Data Ceiling Threatens Future AI Progress

Stack Overflow raised this generation of AI. ChatGPT, Claude, Gemini… they all grew up on human-written code, answers, debates, and mistakes. That human data was the fuel. Now the weird part: More and more content online is generated by models. Future models will increasingly train...

By Louis Bouchard

Social•Feb 13, 2026

Friction, Not AI, Stops Non‑Technical Teams From Adopting

Non-technical teams aren't afraid of AI. They’re afraid of...

- Clunky setup processes
- Gatekept technical complexity
- Theory they can't act on immediately
- Having to master abstractions before seeing results
- Learning Python just to "get started."
- Outdated training models

Teams checking out of AI...

By Louis Bouchard

Social•Feb 12, 2026

Teaching What You Learn Turns Struggle Into Mastery

I failed to complete my PhD. But I discovered something 10x more valuable along the way... In 2019, I was a Systems Engineering student who loved math. And I fit the typical stereotype for people in my area of study: - Quiet - Nerdy - Introverted -...

By Louis Bouchard

Blog•Feb 10, 2026

42 AI Concepts You Actually Need to Understand LLMs

The episode breaks down the 42 essential concepts that underpin large language models (LLMs) and generative AI, covering topics such as tokens, context windows, hallucinations, embeddings, retrieval‑augmented generation, agents, alignment, and evaluation. By presenting these ideas in plain English without...

By Louis Bouchard

Social•Feb 4, 2026

Ask 13 Critical Questions Before Building AI Projects

Most AI projects fail before you write a single line of code. Not because the tech is hard. Because you skipped the questions that actually matter. Most teams choose tools based on hype, not requirements. They pick frameworks before understanding scope. And start building before...

By Louis Bouchard

Social•Feb 3, 2026

Agentic AI: Self‑Directed Planning vs Fixed Workflows

There are two ways to build AI systems. One follows predefined steps. The other decides its own next move. That’s the difference between workflows and agents. And when an AI can plan, choose tools, and act toward a goal on its own — that’s agentic AI. Swipe...

By Louis Bouchard