AI Is a Tool, Not a Replacement for Learning

I sincerely hope the first take in this post is trolling. Yes, school should evolve. Of course it should. AI changes what we do and how we do it. But the point of education was never just memorizing facts or producing perfectly formatted essays. It is learning how to think, how to question, how to analyze, how to work with others, how to struggle through a hard problem, and how to grow. Calculators did not make math useless. Computers did not make learning obsolete. And AI will not make education irrelevant. What we teach will keep changing. It has to. But learning itself still matters. A lot. So do attention, discipline, curiosity, friendship, and the ability to build your own mind before outsourcing everything to a machine. Use AI in school, absolutely. But do not confuse using a tool with becoming educated. I’m Louis-François, PhD dropout, now CTO & co-founder at Towards AI. Follow me for tomorrow’s no-BS AI roundup 🚀

Preserve Whole Tables in RAG to Stop Hallucinations

Your RAG pipeline answers everything correctly. Except anything from a table. Pricing data. Comparison charts. Structured specs. Ask about any of these and the answer is either wrong or completely made up. The model isn't hallucinating because it's bad. It's hallucinating because it never...
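One way to act on the title's advice is to chunk documents so that a table is never split mid-row. The sketch below is illustrative only: it assumes Markdown input where table rows start with `|`, and the function name `chunk_preserving_tables` and the `max_chars` limit are hypothetical, not taken from the post.

```python
def chunk_preserving_tables(markdown: str, max_chars: int = 200) -> list[str]:
    """Split text into chunks, keeping each Markdown table in a single chunk.

    Assumption: a table is a run of consecutive lines starting with '|'.
    """
    blocks: list[tuple[str, bool]] = []  # (text, is_table)
    current: list[str] = []
    current_is_table = False

    for line in markdown.splitlines():
        is_row = line.lstrip().startswith("|")
        # Close the current block on a blank line or a prose/table switch.
        boundary = not line.strip() or (current and is_row != current_is_table)
        if boundary and current:
            blocks.append(("\n".join(current), current_is_table))
            current = []
        if line.strip():
            current.append(line)
            current_is_table = is_row
    if current:
        blocks.append(("\n".join(current), current_is_table))

    chunks: list[str] = []
    for text, is_table in blocks:
        if is_table or len(text) <= max_chars:
            # Tables stay whole even if they exceed max_chars.
            chunks.append(text)
        else:
            # Prose is split naively by character count.
            chunks.extend(text[i:i + max_chars] for i in range(0, len(text), max_chars))
    return chunks
```

The design choice here is that an oversized table still becomes one chunk, trading a larger chunk for rows that keep their header.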

Context or RAG Isn’t Training; only Fine‑tuning Changes the Model

If you paste your company data into ChatGPT, you did NOT just train it. ❌ I keep getting different versions of this same question: → Can I inject knowledge directly into the model? → Does adding data through RAG actually change how the...

Why the U.S. Government Turned on Anthropic

The video unpacks the Pentagon’s showdown with AI startup Anthropic, focusing on the February 2026 episode where the U.S. government threatened to bar the company unless it stripped two controversial safeguards from its cloud‑based models. Anthropic had already been supplying classified‑level...

Latent Space Isn't a Modifiable Database—Use Prompting or RAG

The latent space is not a database inside your model. You can't open it. You can't edit it. You can't inject facts into it. When words enter an LLM, they become vectors. These vectors get transformed again and again across many layers. All those...

Human Learning Is Process‑based; LLMs Learn only From Outcomes

Both humans and LLMs use reinforcement to learn. But the mechanism is not the same. When you learn a climbing move, the feedback is continuous. You fall, adjust your grip, shift weight, feel tension through your body. Your internal model updates in...

Unchecked Tool Calls Turn Errors and Costs Into System Crises

What actually happens when you add a 'tool' to a multi-agent system? In Part 1, we covered 3 hidden complexities. Here are 3 more that we've seen show up only in production. 4️⃣ Tool Outputs Become a Single Point of Failure Your agent picked...

AI Engineering Cheatsheets: Instant Decision Guides for Production

I just put together a repo with all my AI engineering cheatsheets as markdown files (because sometimes all you need is the right reference at the right time). Cheatsheets are really powerful for making quick engineering decisions. You don't have to...

Stop Preparing for AI Interviews the Wrong Way

The video addresses a common question—how to prepare for AI engineering interviews, especially the growing prevalence of 24‑hour take‑home assignments. Unlike traditional white‑board coding, these projects evaluate a candidate’s end‑to‑end thinking rather than rote knowledge. The speaker argues that memorizing definitions...

Clear Prompts, Not Models, End AI Writing Slop

I made a free Anti-Slop Prompt Template that fixes the #1 problem with AI writing. The problem isn't the model. It's the instructions. Vague instructions give the AI room to be generic. These rules fix that. Here's what's inside: - A 7-section template...

No, Pasting Data Into ChatGPT Does Not Train It

The video tackles a common misconception: pasting company documents into ChatGPT does not train the model. It clarifies the difference between simple prompting, Retrieval‑Augmented Generation (RAG), and genuine model fine‑tuning, emphasizing that only weight adjustments constitute real learning. Key insights include...

Revenue Follows Quality, Not the Other Way Around

I realized I don't care about my revenue now.💸 Not because money doesn't matter. But because revenue is just a consequence. What I actually care about: - Did our students get a job after the course? - Did they build something real? - Did they message...

Master LLM Engineering at UphillConf 2026 Workshop

I've spent years teaching AI engineering (mostly online). This May, I'm taking it to the stage. I'll be at @uphillconf 2026 in Bern, Switzerland. A full-day workshop on May 7 and a conference talk on May 8. → 1/Workshop - May 7 "AI Engineering...

Most People Prepare Wrong for AI Engineering Interviews

The post argues that most candidates prepare incorrectly for AI engineering interviews, focusing on perfect answers or flashy demos instead of the underlying problem‑solving process. Interviewers are more interested in how candidates approach ambiguity, make trade‑offs, evaluate their work, and...

What I Look For When Hiring AI Engineers

In the video, Louis Bouchard outlines the core attributes he seeks when hiring AI engineers, emphasizing a blend of solid theoretical knowledge, practical implementation skills, and the ability to translate research into production. He highlights the importance of problem‑solving mindset,...

Build Real AI Projects, Not Prompt Tricks, for Interviews

You don’t prepare for AI engineering interviews by collecting prompt tricks or LeetCode. You prepare by building something real. Because interviews don’t reward: “I know this technique.” They reward: “I built this. Here’s how it works. Here’s what failed. Here’s what I measured. Here’s what...

Scale AI Training: Replace Human Feedback with AI Evaluations

Training AI with human feedback works well. But humans are slow, expensive, and inconsistent. So how do you scale feedback to millions of examples? You replace the human with AI — or remove opinions entirely. That's RLAIF and RLVR. Swipe through the carousel to understand: RLAIF...

Ask Three Questions Before Building or Using APIs

A client messaged me frustrated last month. They'd spent 3 months building something from scratch... that an API could have handled in a week. I've seen the opposite too. Teams so dependent on third-party APIs that one pricing change broke their entire business model. Both...

Boring, Well-Documented Work Beats Flashy AI Projects

After 100+ AI engineering interviews, here’s the pattern I didn’t expect: The strongest candidates are not the ones with the coolest projects. They’re the ones who care enough to do the boring work. Boring is good. Boring means: clear scope, clear outputs, clear measurement, clear tradeoffs, clear documentation. One...

How AI Agents Actually Work: ReAct vs Plan-and-Execute

The video explains two agentic AI patterns—ReAct (Reason+Act) and Plan-and-Execute—that enable large language models to plan, use tools, verify results, and adapt mid-task instead of producing single-pass, unverified outputs. ReAct interleaves thought, action, and observation in loops, allowing dynamic recovery...
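The thought, action, observation loop described for ReAct can be sketched roughly as follows. This is a toy illustration, not the video's implementation: `scripted_model` stands in for a real LLM call, and the `add` tool, the `Action:`/`Final:` line format, and `max_steps` are all assumptions made for the example.

```python
from typing import Callable, Optional


def add_tool(args: list[str]) -> str:
    """Hypothetical tool: adds integer arguments."""
    return str(sum(int(a) for a in args))


TOOLS: dict[str, Callable[[list[str]], str]] = {"add": add_tool}


def scripted_model(history: list[str]) -> str:
    """Stand-in for an LLM: act first, then answer from the observation."""
    observations = [h for h in history if h.startswith("Observation:")]
    if not observations:
        return "Action: add 2 3"
    return "Final: " + observations[-1].removeprefix("Observation:").strip()


def react_loop(question: str,
               model: Callable[[list[str]], str],
               max_steps: int = 5) -> Optional[str]:
    """Alternate model steps and tool calls until a final answer appears."""
    history = [f"Question: {question}"]
    for _ in range(max_steps):
        step = model(history)
        history.append(step)
        if step.startswith("Final:"):
            return step.removeprefix("Final:").strip()
        if step.startswith("Action:"):
            name, *args = step.removeprefix("Action:").split()
            tool = TOOLS.get(name)
            observation = tool(args) if tool else f"unknown tool {name}"
            # The observation is fed back so the next step can adapt.
            history.append(f"Observation: {observation}")
    return None  # step budget exhausted without a final answer
```

Calling `react_loop("What is 2 + 3?", scripted_model)` returns `"5"`. The `max_steps` cap is one simple guard against a loop that never reaches a final answer.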

Quantify AI Evaluations: Simple Accuracy Beats Intuition

Quick question for AI engineers (and yes, it’s uncomfortable): If your evaluation is “it looks right”… what happens when it looks right and is wrong? With these kinds of evaluations, you probably won't pass the first interview. This is why I push...
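As a rough illustration of replacing "it looks right" with a number, here is a minimal exact-match accuracy check. The function name `accuracy` and the sample data are hypothetical, and exact string match is only one possible scoring rule.

```python
def accuracy(predictions: list[str], expected: list[str]) -> float:
    """Fraction of predictions that exactly match the expected answers."""
    if len(predictions) != len(expected):
        raise ValueError("predictions and expected must have the same length")
    if not expected:
        raise ValueError("evaluation set is empty")
    correct = sum(p == e for p, e in zip(predictions, expected))
    return correct / len(expected)


# Illustrative data only: three of four outputs match the labels.
sample_predictions = ["42", "Paris", "blue", "7"]
sample_expected = ["42", "Paris", "green", "7"]
sample_score = accuracy(sample_predictions, sample_expected)  # 0.75
```

Even a small labeled set scored this way makes regressions visible between runs, which a visual check cannot do.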

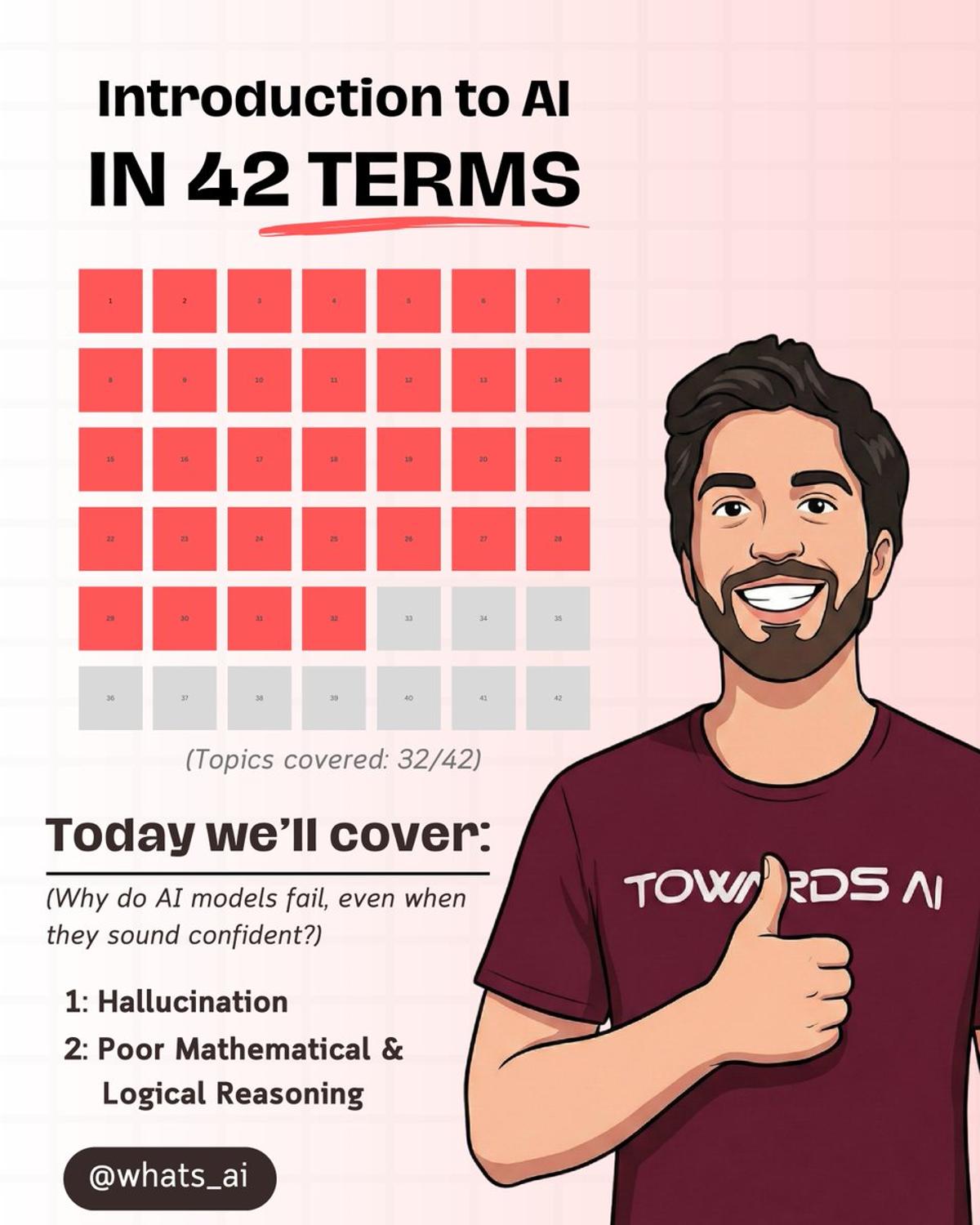

LLMs Hallucinate; They’re Pattern Matchers, Not Calculators

You asked ChatGPT a question. It answered confidently. It was completely wrong. LLMs don't check facts. They predict the next likely word. A confident but wrong answer like that is called a hallucination. LLMs also have poor mathematical and logical reasoning. They are not calculators. They are pattern-matchers. Swipe through the carousel to understand: Hallucination...

Reproducibility Beats Impressiveness in AI Take‑Home Submissions

I have a simple take-home rule for our AI engineering interviews: If I can’t run your project in a fresh environment quickly, the project isn’t done. Not because I’m strict. Because that’s what working in a team feels like. A strong README doesn’t read...

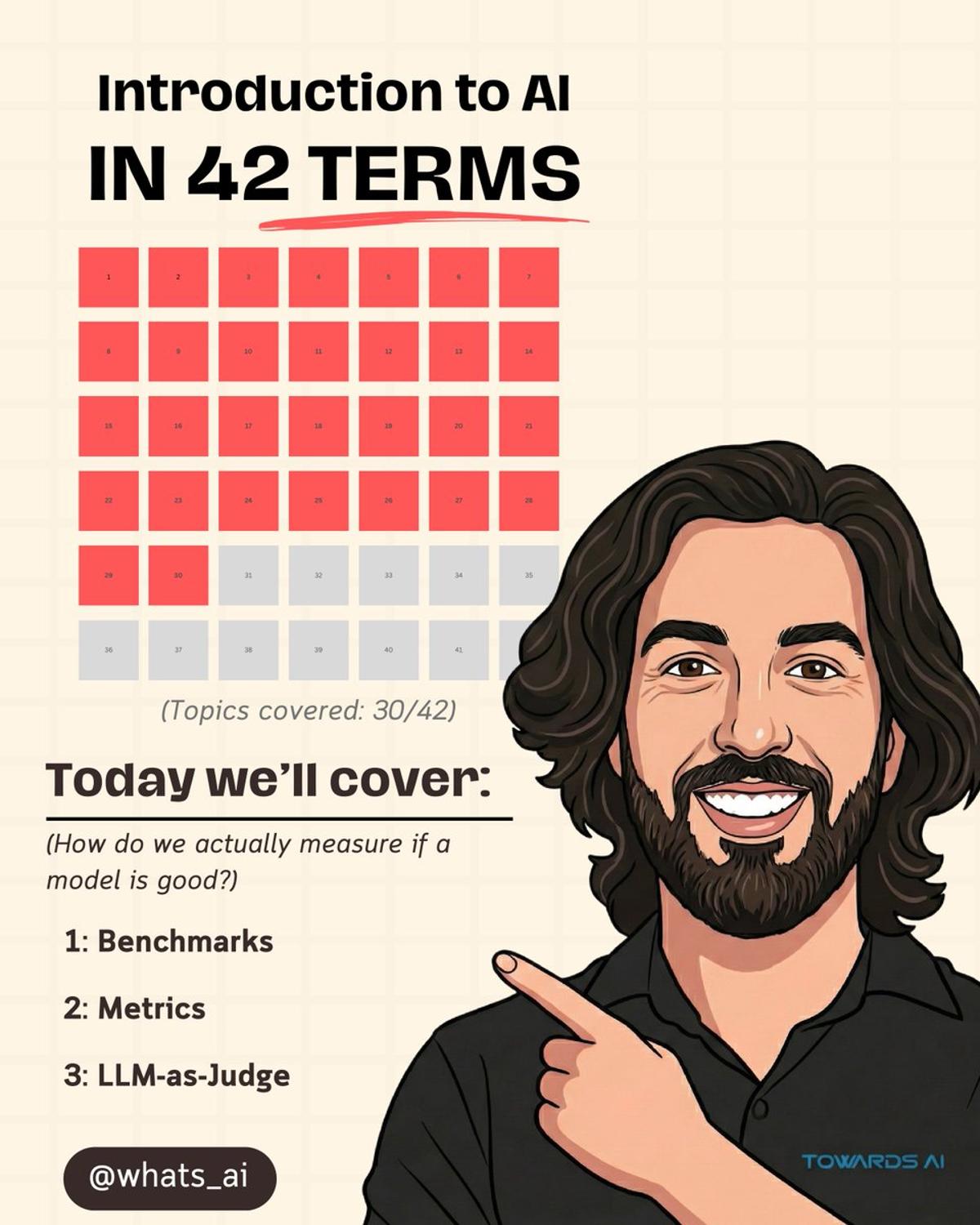

Benchmarks Aren’t Enough: Use Metrics for Real AI Performance

How do you know which model is actually better? For that, we use benchmarks. Benchmarks measure raw capability. But you can't rely only on benchmarks. A model can score high on tests and still give terrible answers in real use. That's why we also measure...

Build a Tiny End‑to‑end Pipeline, Then Ship, Measure, Explain

If you want practice that actually maps to modern AI engineering take-homes, do this. Not “study more questions”. Build a small pipeline and prove it works. A realistic weekend plan: Hour 0 to 1: pick 10 messy documents (invoices are perfect) Hour 1 to 3:...

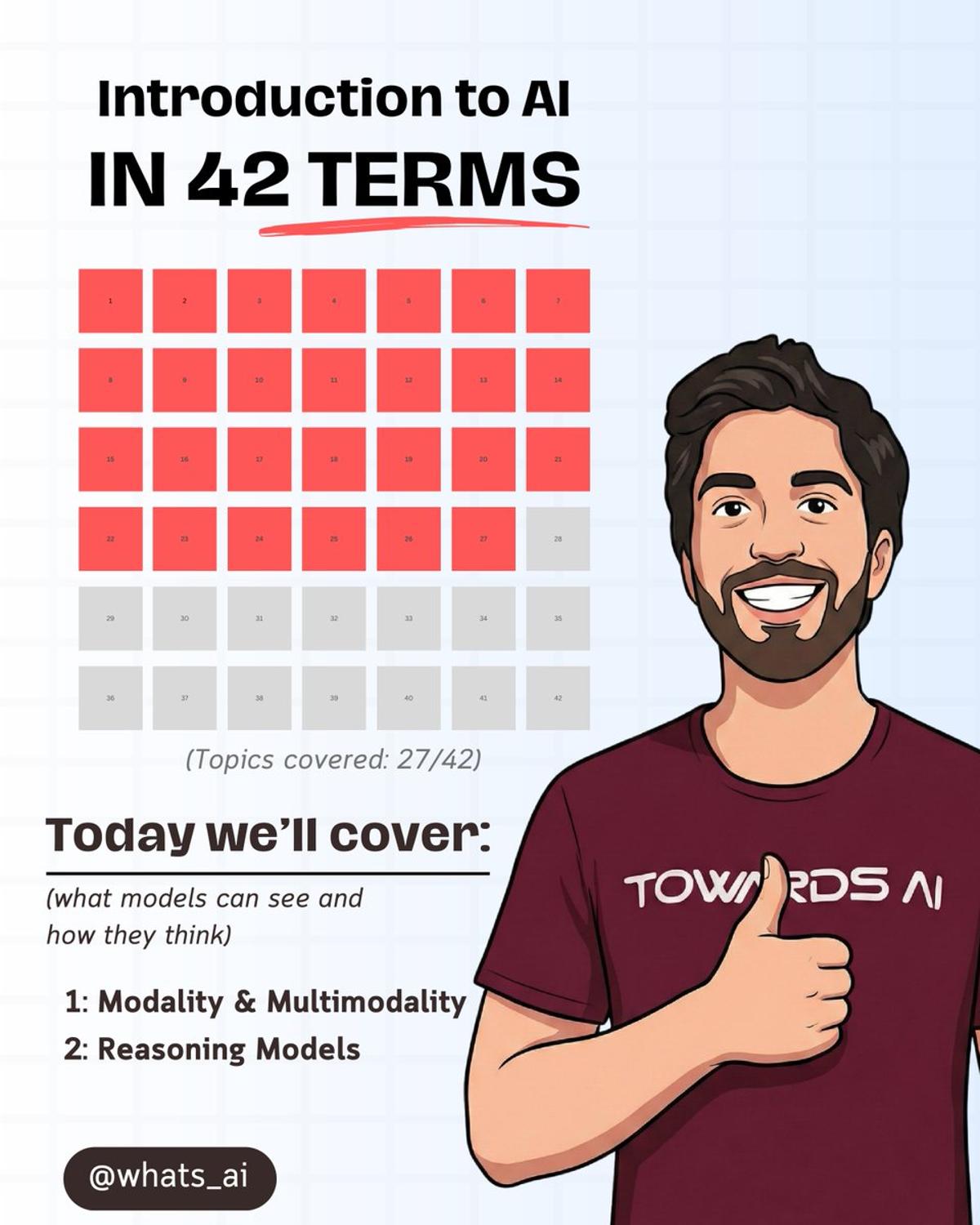

AI Now Handles Text, Images, and Audio Simultaneously

Early AI models could only handle one type of input. Now they can process text, images, and audio at the same time. That's multimodality. Reasoning models work differently. They break problems into steps first. Like a built-in "let's think step by step." Swipe through the carousel...

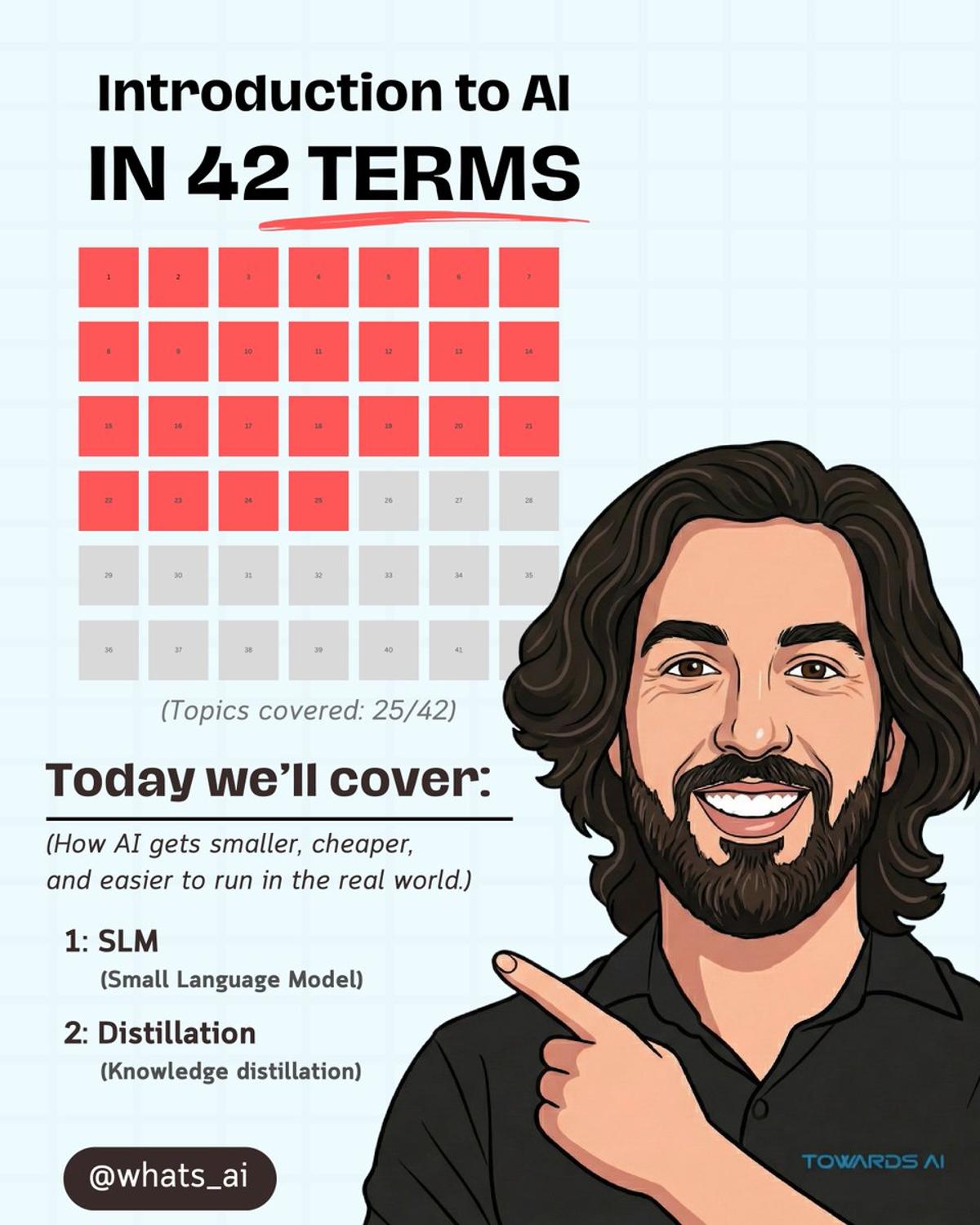

Distilled Small Models Power Local AI Writing Assistants

MacBooks run AI writing assistants locally. No internet needed. That's what small language models make possible. Big AI models are powerful. But they’re also expensive to run. As AI moves into real products, that becomes a problem. SLMs are built to do specific tasks well. They’re faster,...

Show Process, Not Perfection, in AI Take‑Home Interviews

A question I get all the time: “How do I prepare for AI engineering interviews? Especially now that companies give 24-hour take-homes?” Here’s the uncomfortable answer. Most people prepare for interviews as if they were trivia games. They memorize definitions. They collect question lists. They grind...

The AI Engineer's Dilemma - Choose the Right AI System

The video centers on a pivotal design choice for AI engineers: whether to build a predictable, step‑by‑step workflow or an autonomous LLM‑driven agent. This decision shapes development speed, operational expenses, reliability, and the end‑user experience, and the speaker warns that...

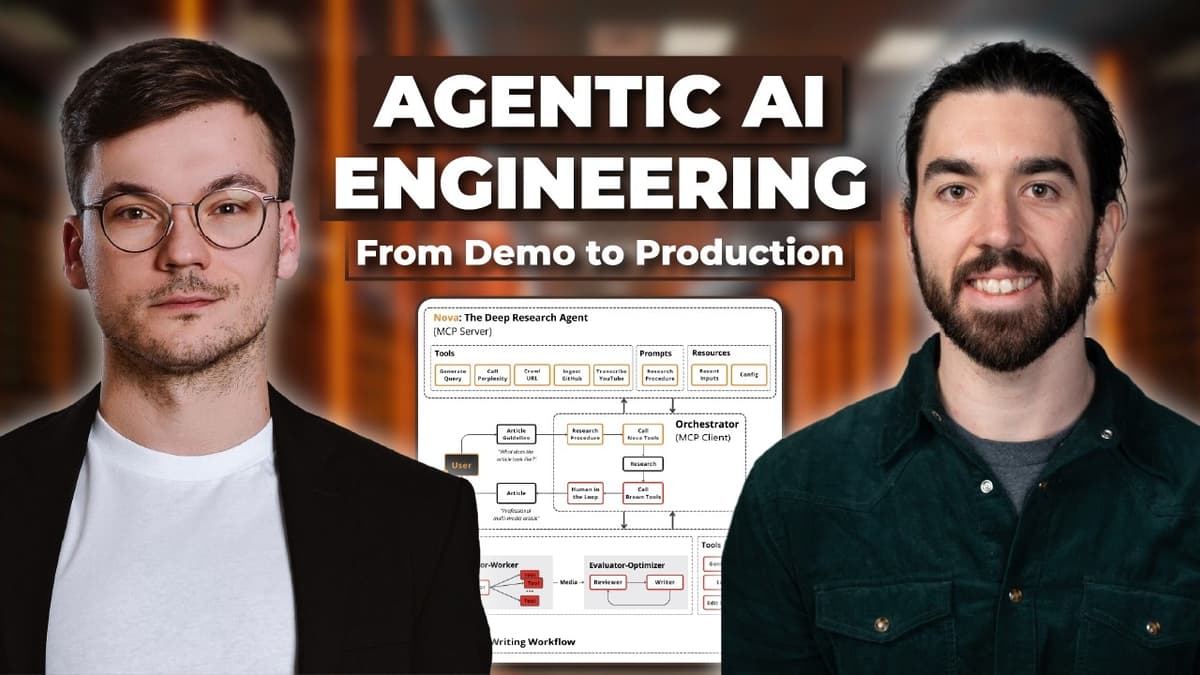

Our New Agentic AI Engineering Course!

The video announces a new Agentic AI Engineering course designed to turn Python‑savvy developers into production‑ready AI engineers capable of building trustworthy, multi‑agent systems. It highlights the industry gap between flashy demos and deployable agents, emphasizing that real‑world agents must handle...

Own Your Data, Not Just Model Tuning

AI teams love tuning models. But they ignore the bike chain: data. Outsourcing labeling to people who care much less about the app’s success. Messy internal docs. No structured knowledge base. No call transcripts. No clean SOPs. Then they ask: “Why isn’t the model improving?” The highest ROI in...

Synthetic Data Ceiling Threatens Future AI Progress

Stack Overflow raised this generation of AI. ChatGPT, Claude, Gemini… they all grew up on human-written code, answers, debates, and mistakes. That human data was the fuel. Now the weird part: More and more content online is generated by models. Future models will increasingly train...

Friction, Not AI, Stops Non‑Technical Teams From Adopting

Non-technical teams aren't afraid of AI. They’re afraid of... - Clunky setup processes - Gatekept technical complexity - Theory they can't act on immediately - Having to master abstractions before seeing results - Learning Python just to "get started." - Outdated training models Teams checking out of AI...

Teaching What You Learn Turns Struggle Into Mastery

I failed to complete my PhD. But I discovered something 10x more valuable along the way... In 2019, I was a Systems Engineering student who loved math. And I fit the typical stereotype for people in my area of study: - Quiet - Nerdy - Introverted -...

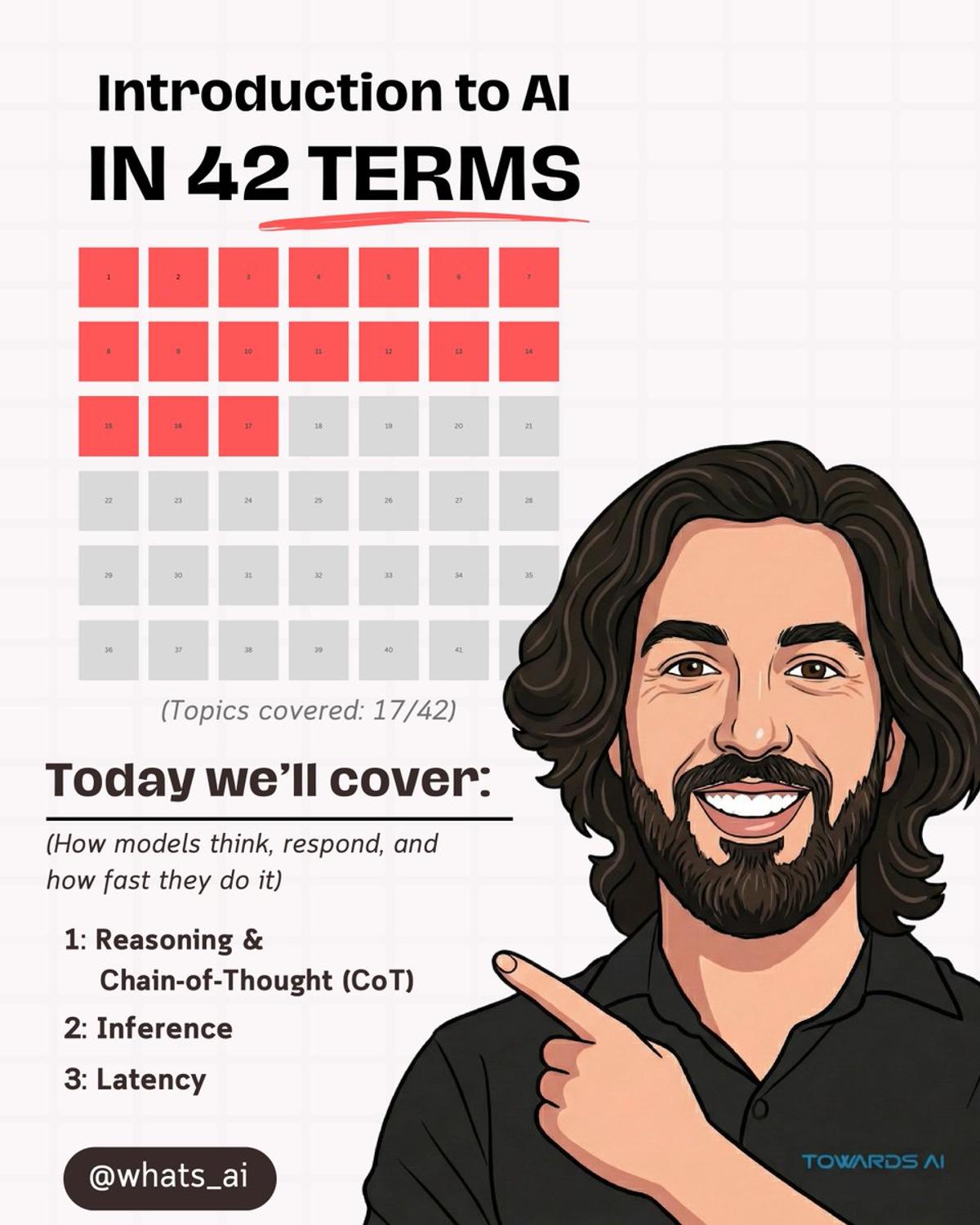

42 AI Concepts You Actually Need to Understand LLMs

The episode breaks down the 42 essential concepts that underpin large language models (LLMs) and generative AI, covering topics such as tokens, context windows, hallucinations, embeddings, retrieval‑augmented generation, agents, alignment, and evaluation. By presenting these ideas in plain English without...

Ask 13 Critical Questions Before Building AI Projects

Most AI projects fail before you write a single line of code. Not because the tech is hard. Because you skipped the questions that actually matter. Most teams choose tools based on hype, not requirements. They pick frameworks before understanding scope. And start building before...

Agentic AI: Self‑Directed Planning vs Fixed Workflows

There are two ways to build AI systems. One follows predefined steps. The other decides its own next move. That’s the difference between workflows and agents. And when an AI can plan, choose tools, and act toward a goal on its own — that’s agentic AI. Swipe...

LLMs Don’t Think Like Humans (Here’s Why)

The video argues that large language models (LLMs) do not think like humans; they are trained to predict the next token in a sequence, not to understand meaning or intent. Louis-François explains that while both humans and machines learn...

Everything You Need to Know About LLMs

The video explains that large language models (LLMs) are inherently limited—hallucinating facts, faltering on complex reasoning, inheriting biases, and being bound by a static knowledge cutoff. It argues that recognizing these constraints is the first step toward building dependable AI...

Unseen AI Agents Pose Hidden Risks without Human Oversight

You're probably already using AI agents without realizing they're making decisions you can't trace back. And if something goes wrong, you won't know until it's too late. AI agents aren't chatbots waiting for prompts. They set goals, plan multiple steps ahead, and take...

How AI Systems Reduce Mistakes

The video explains how AI developers use model ensembles—multiple models or versions working together—to cut errors that single models inevitably make. By aggregating diverse outputs and merging them intelligently, teams can achieve more reliable, stable results in high‑stakes environments. Three primary...

Vibe Coding Lets Non‑experts Build AI Apps Fast

Something funny happened during our recent AI training at NYPL. We had ~30 professionals in the room. Developers, IT folks, managers. Different stacks. Different backgrounds. And suddenly… they were all vibe coding. People who: didn’t have Python installed a week ago hadn’t touched frontend in years were used to...

How AI Double-Checks Itself

The video introduces self-consistency, a technique that transforms the inherent randomness of large language models into a reliability boost by generating several independent answers and aggregating them. Instead of forcing a single deterministic response, the model is run multiple times...
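The sample-then-aggregate idea can be sketched with a simple majority vote. This is a minimal illustration under stated assumptions: `sample_answer` is a hypothetical callable wrapping a model call with sampling enabled, and majority voting is one common aggregation choice, not necessarily the one used in the video.

```python
from collections import Counter
from typing import Callable


def self_consistent_answer(sample_answer: Callable[[str], str],
                           question: str,
                           n_samples: int = 5) -> tuple[str, float]:
    """Sample several answers and return the most common one with its vote share."""
    answers = [sample_answer(question) for _ in range(n_samples)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / n_samples


def make_scripted_sampler(outputs: list[str]) -> Callable[[str], str]:
    """Test stand-in for a stochastic model: replays fixed outputs in order."""
    iterator = iter(outputs)
    return lambda question: next(iterator)
```

For example, with a sampler that yields `"12", "12", "15", "12", "12"`, the function returns `("12", 0.8)`. The vote share doubles as a rough confidence signal: a low share suggests the samples disagree and the answer deserves a closer look.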

AI Projects Fail From Bad Decisions, Not Bad Models

Most AI projects don’t fail because of bad models. They fail because the wrong decisions are made before implementation even begins. Here are 12 questions we always ask new clients about our AI projects before we even begin work, so you don't...

When Facts Beat Preferences

The video introduces Reinforcement Learning from Verifiable Rewards (RLVR), a framework that replaces human or model‑based preference judgments with an automated verifier that checks factual correctness. By tying rewards directly to objective outcomes—such as passing unit tests, solving equations, or...
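To make the "passing unit tests" case concrete, here is a minimal sketch of a verifier-based reward. Everything in it is an assumption for illustration: the function name `verifiable_reward`, the binary 0/1 reward, and the convention that the candidate defines a function called `solve`. It also runs candidate code with `exec`, which would need proper sandboxing in any real training setup.

```python
from typing import Any


def verifiable_reward(candidate_source: str,
                      test_cases: list[tuple[tuple[Any, ...], Any]],
                      func_name: str = "solve") -> float:
    """Return 1.0 if the candidate passes every test case, else 0.0.

    No human or model judges the output; the tests are the verifier.
    """
    namespace: dict[str, Any] = {}
    try:
        # WARNING: exec on untrusted code is unsafe outside a sandbox.
        exec(candidate_source, namespace)
        func = namespace[func_name]
        passed = all(func(*args) == expected for args, expected in test_cases)
    except Exception:
        # Syntax errors, missing functions, and runtime errors all earn zero.
        return 0.0
    return 1.0 if passed else 0.0


# Illustrative test cases for an addition task.
ADD_TESTS = [((2, 3), 5), ((0, 0), 0)]
```

A candidate such as `def solve(a, b): return a + b` scores 1.0 on `ADD_TESTS`, while one that multiplies instead scores 0.0.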

RLAIF Explained Simply

The video introduces Reinforcement Learning from AI Feedback (RLAIF), a method that replaces costly human reviewers with an AI “judge” to evaluate and rank model outputs, enabling small teams to scale alignment work. Human feedback is slow, expensive, and inconsistent, limiting...

Preference Tuning Explained

The video introduces preference tuning as the next step after instruction‑following models, focusing on shaping responses to sound helpful, clear, and human‑like. Rather than merely judging right or wrong answers, developers present paired outputs and label the one people prefer,...

Hacking AI without Code

The video explains that large language models (LLMs) are vulnerable to two distinct attack vectors—prompt injection and prompt hacking—where malicious text can override system instructions or bypass safety filters. Prompt injection occurs when an LLM consumes external content, such as a...

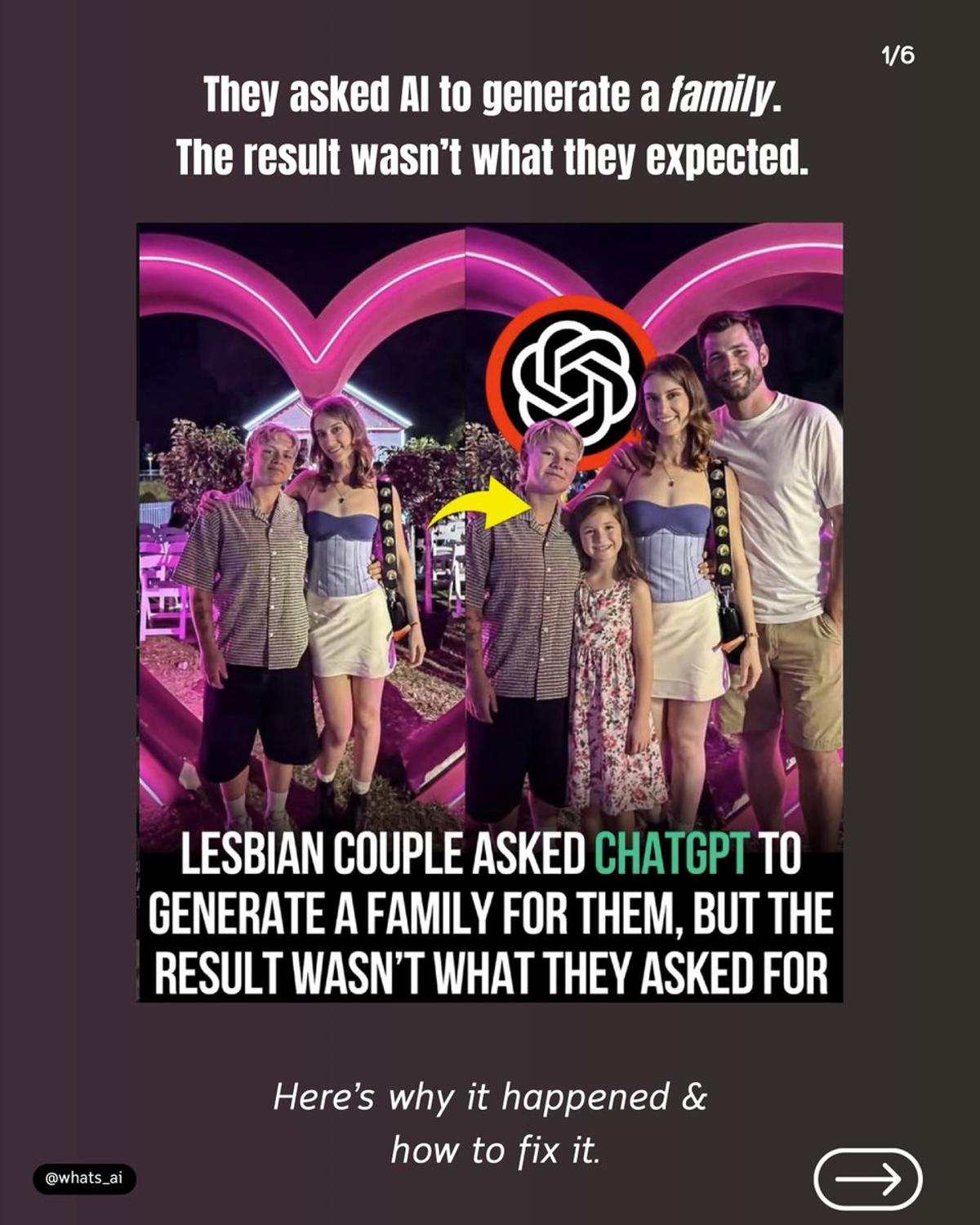

AI Defaults to Common Patterns; Prompt Carefully

This couple asked AI to generate a family for them. The result wasn’t what they asked for... This wasn’t AI judging them. It wasn’t choosing values. And it wasn’t preferring one family over another. It happened because of what AI is trained on. Image models learn...

Chain‑of‑Thought Reasoning Reduces AI Mistakes

Some questions are easy. Others need real reasoning. If a model jumps straight to the final answer, it can easily make mistakes. That’s where reasoning and chain-of-thought matter. Instead of guessing, the model breaks a problem into steps before reaching a conclusion. Once the prompt is set,...