Stop Overengineering: Workflows vs AI Agents Explained

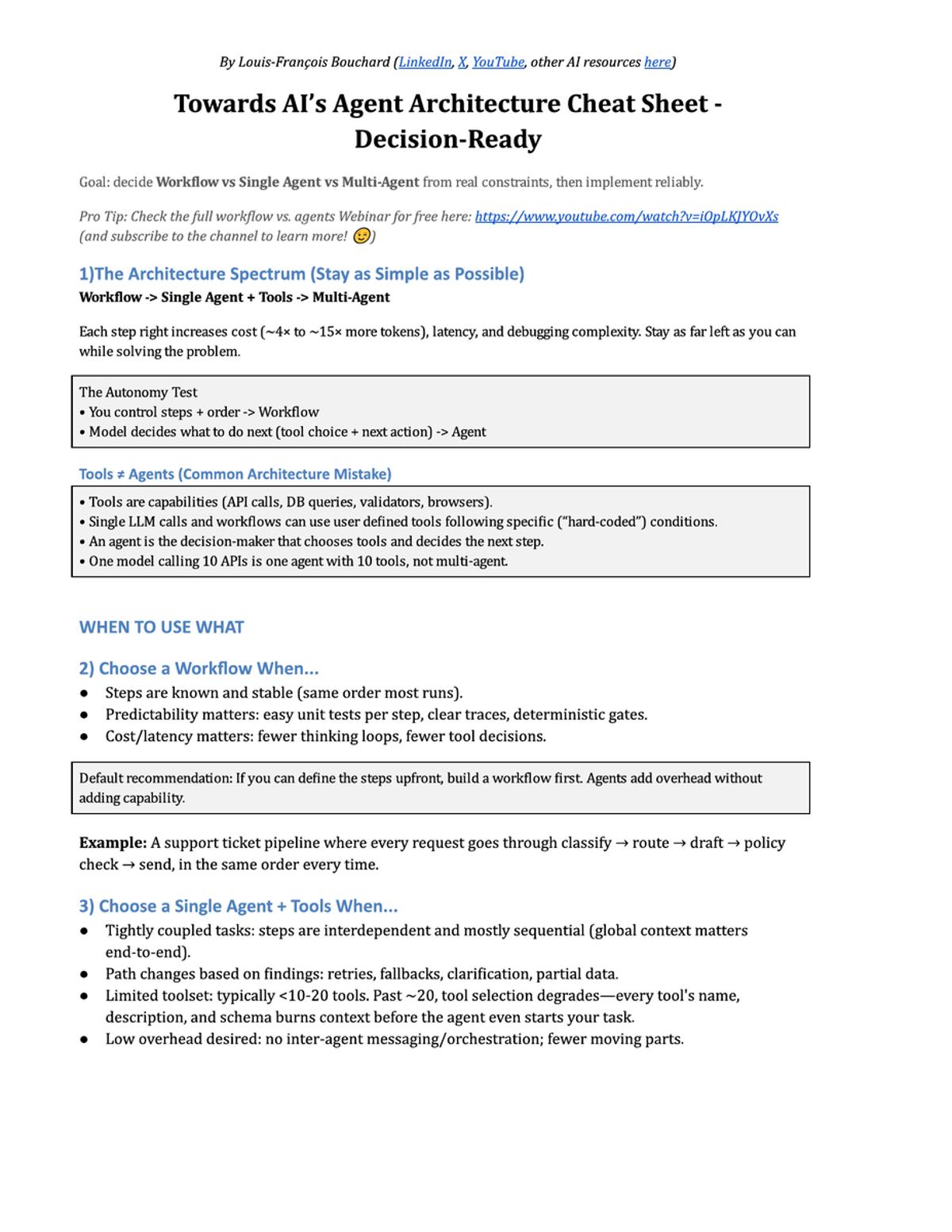

The video clarifies the often‑confused terminology around AI‑driven workflows, agents, tools and multi‑agent systems, warning that many clients overengineer solutions by mis‑labeling simple pipelines as complex agents. The presenter draws a clear line: workflows are deterministic sequences you predefine, while agents retain autonomy, deciding the next step based on a goal. Tools are merely functional capabilities; an agent selects among them. A three‑level spectrum—workflow, single‑agent with tools, multi‑agent—guides architecture choices, with each step rightward increasing token cost, latency and debugging difficulty. Concrete cases illustrate the principle: a support‑ticket routing pipeline works best as a workflow; a marketing‑content generator benefits from a single agent equipped with specialized tools; an article‑generation project required two agents—research and writing—because the research phase is exploratory and the writing phase is deterministic. The orchestrator‑worker pattern is recommended for larger multi‑agent setups to avoid information silos. The takeaway for AI product teams is to start with the simplest design that meets the requirement, only advancing to agents or multi‑agents when the problem’s flow is truly dynamic or when tool‑set size exceeds roughly twenty items. Following this discipline reduces cloud spend, improves reliability and accelerates time‑to‑market, and the speaker offers a free cheat‑sheet to aid decision‑making.
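
The spectrum above can be read as a small decision rule. The sketch below is one possible reading of the summary, not the presenter's own method: the names `Architecture`, `TOOL_LIMIT` and `choose_architecture` are hypothetical, and the cutoff of 20 simply encodes the "roughly twenty" tools figure as a soft threshold.

```python
from enum import Enum


class Architecture(Enum):
    WORKFLOW = "workflow"
    SINGLE_AGENT = "single agent with tools"
    MULTI_AGENT = "multi-agent"


# Assumption: the "roughly twenty" tools figure, treated as a soft cutoff.
TOOL_LIMIT = 20


def choose_architecture(flow_is_dynamic: bool, tool_count: int) -> Architecture:
    """Pick the simplest level of the spectrum that fits the problem."""
    if not flow_is_dynamic:
        # Steps are known in advance, so a predefined pipeline is enough.
        return Architecture.WORKFLOW
    if tool_count <= TOOL_LIMIT:
        # The next step depends on the goal, but one agent can hold the tools.
        return Architecture.SINGLE_AGENT
    # Dynamic flow and a large tool set: split the work across agents.
    return Architecture.MULTI_AGENT


print(choose_architecture(False, 3).value)
print(choose_architecture(True, 8).value)
print(choose_architecture(True, 35).value)
```

A rule this small leaves out cases like the article-generation project, where the split into two agents came from mixing an exploratory phase with a deterministic one rather than from tool count.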

AI Forgetfulness Explained: Context Windows Limit Memory

Ever noticed how an AI suddenly forgets what you were talking about? That’s not a mistake. It’s the context window. A model can only see a limited amount of text at once. Once that window fills up, older context drops out. Inside that window, prompting decides...
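
A rough illustration of older context dropping out, assuming a chat client that keeps only the most recent messages that fit a token budget. The helper names are hypothetical, and counting whitespace-separated words is a stand-in for a real tokenizer.

```python
def count_tokens(text: str) -> int:
    # Assumption: whitespace-separated words stand in for real tokenizer tokens.
    return len(text.split())


def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the newest messages whose combined size fits in the window."""
    kept: list[str] = []
    used = 0
    # Walk from newest to oldest so the most recent turns survive.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # everything older than this point falls out of view
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = ["my name is Ada", "what is the weather today", "and tomorrow"]
print(fit_to_window(history, max_tokens=7))
```

With a budget of 7, the first message no longer fits, so the model would never see the user's name.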

LLMs Aren’t Bulletproof Superhumans, They Have Vulnerabilities

Please stop treating LLM-based systems as bulletproof superhumans. They are powerful, but they have as many vulnerabilities as any one individual, if not more. https://t.co/sYCgwmBDUO

How AI Evaluates Other AI

The video explains a growing solution to a fundamental bottleneck in AI development: evaluating model outputs at scale. Traditional human review of thousands of conversational turns is impossible, so researchers are turning to a technique called “LLM-as-judge,” where a state‑of‑the‑art...

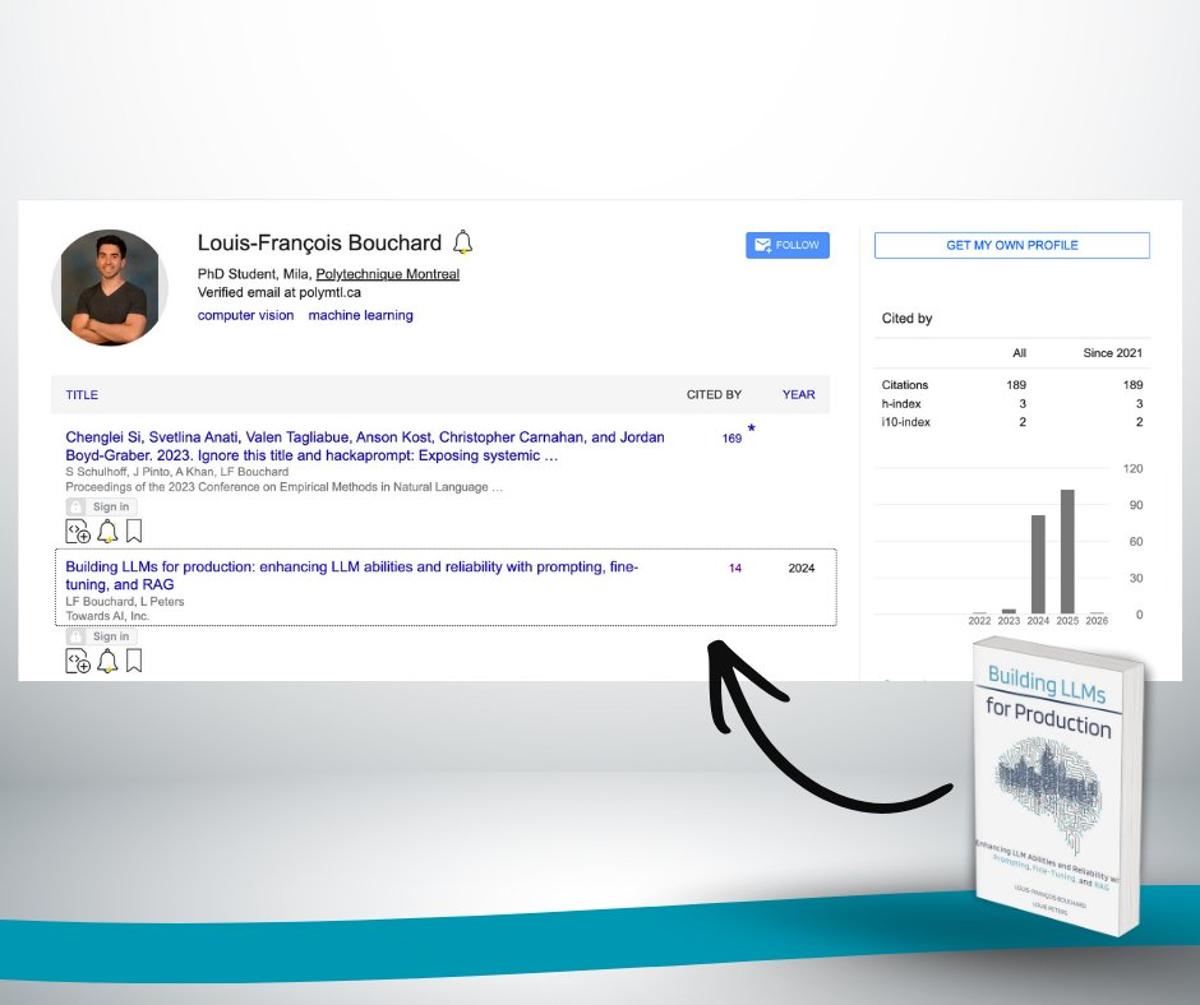

Practical LLM Production Guide Gains Academic Citations

Our book Building LLMs for Production is now being cited by research papers.📚✨ This book came from real work - building systems, fixing failures, rewriting chapters and learning what actually matters in production. It covers how to design, evaluate, and deploy reliable LLM...

Model Scores vs Real Performance

When choosing between LLMs such as GPT‑5, LLaMA or Claude, the video stresses that objective comparison hinges on benchmarks—standardized tests that quantify raw capabilities across diverse tasks. By applying the same evaluation suite, practitioners can rank models and pinpoint strengths...

Choosing the Right Model Type

The video explains how to choose between reasoning models and compact instruct models, emphasizing that architectural labels alone don’t guarantee suitability. Reasoning models are a newer class of large language models built to handle multi‑step problem solving by taking a...

Daily AI Term Videos: 42 Concepts, Halfway Done

For the past 29 days, I’ve been posting one short video every day explaining an AI term🎯 It’s part of a series I’m calling: “Introduction to AI in 42 Terms.” Each video explains one AI concept in simple language - no jargon, no hype,...

What Multimodal Really Means

The video explains that most existing AI systems are limited to a single modality—typically text—meaning they cannot directly interpret images or audio. This constraint hampers their usefulness when users pose questions that involve visual or auditory data, such as asking...

Make AI Drafts Sound Like Your Own Voice

Let's finally make LLMs work for you instead of against you, so your drafts stop sounding generic and start sounding like you. We’ll break down how to spot and remove “AI slop,” fix the generated-looking structure that gives it away, and...

5 Edits That Instantly Make AI Text Sound Human

The video explains how to edit AI‑generated text so it reads like a human author rather than a generic LLM output. Drawing on two years of experience at TORZI, the presenter outlines concrete techniques and a prompt template that keep...

Context, Not Prompts, Drives Consistent AI Answers

Your Prompts Aren’t the Problem❌ A full guide to getting consistently better answers from AI👇 When you use an AI assistant and think “Why is it suddenly confused?” Or “Why did this work 5 minutes ago but not now?” It’s rarely about wording. It’s about context. What the...

How Small Models Stay Smart

Distillation is the core method for turning massive, high‑performing AI models into compact, fast‑running versions without sacrificing much capability. By treating a large pretrained model as a teacher and a smaller model as a student, developers let the student mimic...

Multiple APIs ≠ Multi‑Agent: Use One Agent Wisely

One model calling 10 APIs is NOT a multi-agent system.❌ This is one of the most common mistakes I see. Tools are capabilities. Agents are decision-makers. If one model decides what to do next and calls multiple tools, you still have one agent, not many. Misunderstanding this...
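
A minimal sketch of the distinction, under stated assumptions: the tool names and the `decide` rule are hypothetical, and `decide` stands in for the single model that would make the choice in a real system.

```python
from typing import Callable

# Hypothetical tools: plain functions with no decision-making of their own.
TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda query: f"results for {query}",
    "calculator": lambda expr: str(sum(int(n) for n in expr.split("+"))),
}


def decide(task: str) -> str:
    # Stand-in for the model's choice; a real agent would ask an LLM here.
    return "calculator" if "+" in task else "search"


def run_agent(task: str) -> str:
    tool_name = decide(task)       # the only decision-maker in the system
    return TOOLS[tool_name](task)  # the tool just executes what it is given


print(run_agent("2+3"))
print(run_agent("context windows"))
```

Adding more entries to `TOOLS` adds capabilities, but there is still exactly one `decide` step, so this remains a single agent.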

Fine‑tuning Improves Feel, Alignment Ensures Safe Behavior

Do you know why a model feels “better” after fine-tuning? And why a very smart model can still give unsafe or confusing answers? In this post, we break down Fine-tuning and Alignment. This is part of Introduction to AI in 42 terms (we’ve covered...

What an API Actually Does

The video explains that an application programming interface (API) is the conduit through which software interacts with large language models, whether the model is proprietary, open‑weight, or open‑source. When a developer sends a prompt, the API forwards it to the provider’s...

The Future and Risk of Agents

The video examines how developers must decide which class of large‑language model to adopt when moving from experimentation to production. It outlines three categories—proprietary models such as OpenAI’s GPT‑5 or Google’s Gemini, open‑weight models like Meta’s Llama 3.1, Mistral, and Google’s Gemma,...

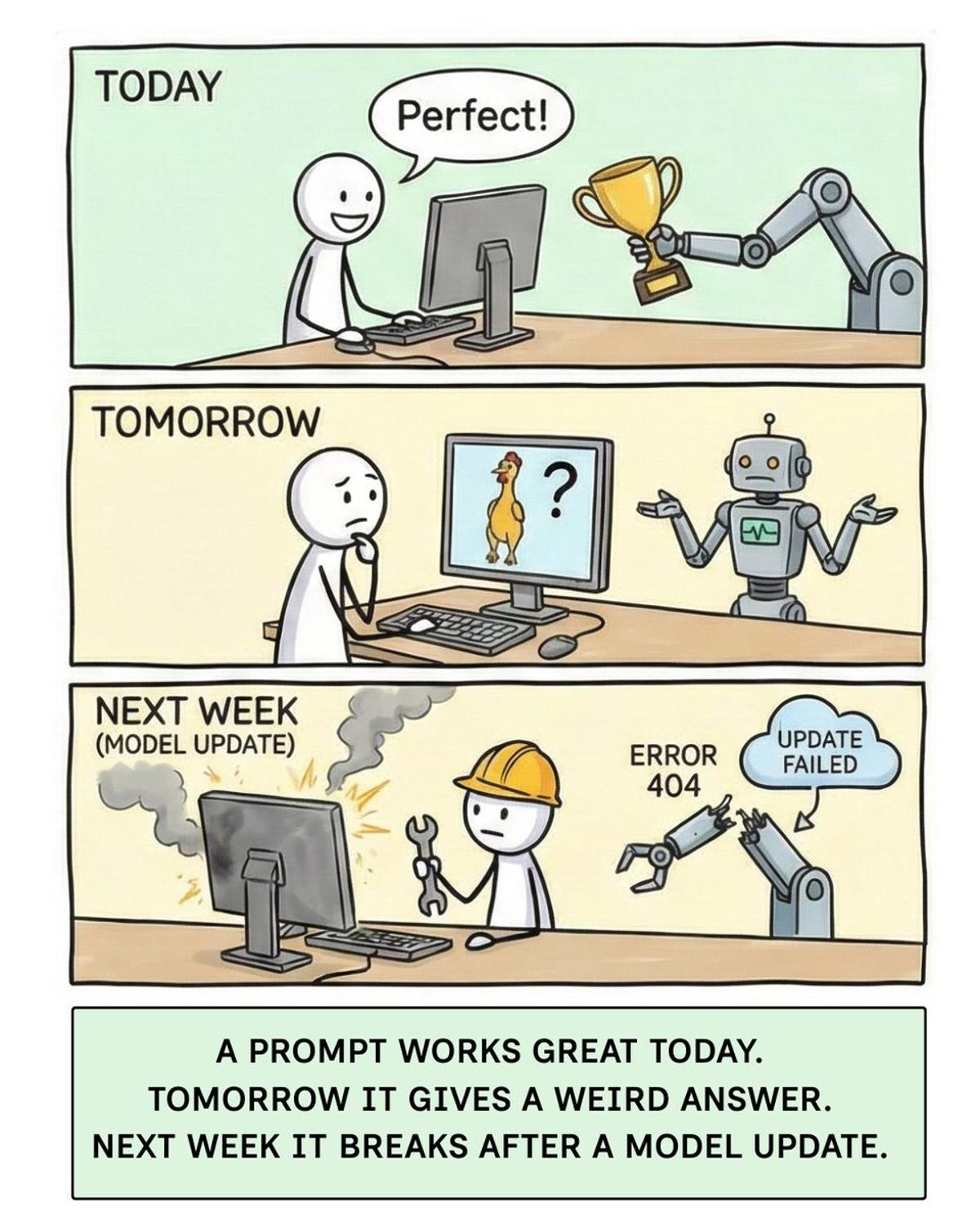

Make Prompts Reliable Through Systematic Testing, Not Tricks

If you’re a student or a professional using AI daily, you’ve seen this happen. A prompt works great today. Tomorrow it gives a weird answer. Next week it breaks after a model update 😅 A prompt that works once for one model isn’t reliable. A...

When NOT to Use Agents

The video examines the emerging class of agentic AI systems and warns against indiscriminate deployment. Unlike traditional reactive chatbots that wait for a prompt and return a single answer, agentic models can formulate plans, execute multiple actions, and deliver complex...

LLMs Learn by Predicting Tokens, Then Get Instructed

LLMs don’t wake up smart. They’re trained into it. Before a model can answer questions, follow instructions, or sound helpful, it goes through a long phase called pre-training. This is where: • random parameters • massive amounts of text and code • and one simple task come...

Free Cheatsheet Guides Practical Agent Architecture Decisions

We created a free Agents Architecture Cheatsheet. Here’s why 👇 A lot of people are building agent systems without a clear reason to do so. They mix tools with agents, over-complicate architectures, and struggle to move from demos to production. This cheatsheet is designed to be...

Why AI Makes Things Up

The video explains grounding – the practice of constraining large language model (LLM) responses to information drawn from verifiable external sources – as a core strategy to curb hallucinations. By forcing the model to rely on trusted data rather than...

This Setting Controls Randomness

The video explains how the temperature parameter governs the randomness of token selection in large language models, shaping whether outputs are deterministic or stochastic. A temperature of zero forces the model to pick the single most probable token, producing identical responses...
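
To make the effect concrete, here is a minimal sketch of temperature-scaled sampling over a toy set of scores. The function name, the token scores and the explicit `rng` argument are assumptions for illustration; real inference libraries apply the same idea to the model's full vocabulary.

```python
import math
import random


def sample_token(logits: dict[str, float], temperature: float, rng: random.Random) -> str:
    """Pick the next token from raw scores, scaled by temperature."""
    if temperature == 0:
        # Greedy choice: always the single highest-scoring token.
        return max(logits, key=logits.get)
    # Dividing by the temperature sharpens (<1) or flattens (>1) the distribution.
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    weights = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    tokens = list(weights)
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]


toy_logits = {"cat": 1.0, "dog": 3.0, "fish": 2.0}  # hypothetical scores
rng = random.Random(0)
print(sample_token(toy_logits, 0, rng))
print([sample_token(toy_logits, 1.5, rng) for _ in range(5)])
```

At temperature zero the call returns `dog` every time; at higher values the lower-scoring tokens start to appear in the samples.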

Learn AI in Months, Job-Ready in 1‑2 Years

I’ve done quite a few AI workshops recently, and I keep getting the same questions 👇 “Where do I start?” “How long does it really take to learn AI?” “Can I actually become job-ready?” So to clear the confusion, I put all the resources...

From Workflows to Multi-Agent Systems: How to Choose

In this talk Luis Franis, CTO of TORZI, explains how AI engineers decide between workflows, single agents, and multi‑agent systems when building client solutions. He frames AI engineering as a bridge between model development and product integration, emphasizing constraints such...

LLMs Predict Tokens, Humans Predict Meaning

People say “LLMs learn like humans, we both copy patterns.” Sounds right. It’s also misleading. LLMs don’t learn language to understand meaning. They learn to predict the next token. Not the next word. Tokens. IDs. Math. Over and over, trillions of times,...

How LLMs Think Step by Step & Why AI Reasoning Fails

The video explains how large language models (LLMs) often stumble on multi‑step questions because they attempt to jump straight to a final answer, leading to logical slips and hallucinations. To mitigate this, practitioners employ a prompt‑engineering technique called chain‑of‑thought (CoT),...

2025: Massive Growth, New Courses, and Personal Milestones

My 2025 wrapped: - released our first ever course & product 🚀 - followed up with 3 more courses (and a 4th coming soon with a great friend, @pauliusztin_) - invited to NVIDIA GTC and briefly met Jensen + many amazing people - landed...

The Easiest Way to Improve Prompts

The video explains two foundational prompting strategies—zero-shot and few-shot learning—used to shape large language model outputs. Zero-shot prompting presents a plain instruction without any exemplars, trusting the model’s pre‑trained knowledge to generate an answer, such as asking a general‑purpose assistant...
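
The difference between the two strategies shows up in the prompt text itself. This sketch assumes a simple `Input:` / `Output:` layout and a hypothetical `build_prompt` helper; neither comes from the video.

```python
def build_prompt(instruction: str, query: str, examples=()) -> str:
    """Build a zero-shot prompt (no examples) or a few-shot prompt (with examples)."""
    lines = [instruction]
    # Each exemplar shows the model the expected input/output pattern.
    for example_input, example_output in examples:
        lines.append(f"Input: {example_input}")
        lines.append(f"Output: {example_output}")
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


zero_shot = build_prompt("Classify the sentiment.", "I loved it")
few_shot = build_prompt(
    "Classify the sentiment.",
    "I loved it",
    examples=[("Terrible service", "negative"), ("Great value", "positive")],
)
print(zero_shot)
print("---")
print(few_shot)
```

The instruction and the query are identical in both prompts; only the worked examples in between change.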

Accuracy Isn’t Enough: Prioritize Relevance, Grounding, Faithfulness

Accuracy is a terrible metric for LLMs. And it’s the reason many AI demos look great but fall apart in real usage. LLMs don’t usually fail by being wrong. They fail by being irrelevant, ungrounded, or confidently misleading. An answer can be “accurate” in isolation and still be useless...

This Is How Much AI Can Remember

The video explains that a language model’s ability to remember is bounded by its context window – the maximum number of tokens it can see at once. The window comprises the system prompt, the full dialogue history, and any tokens the...

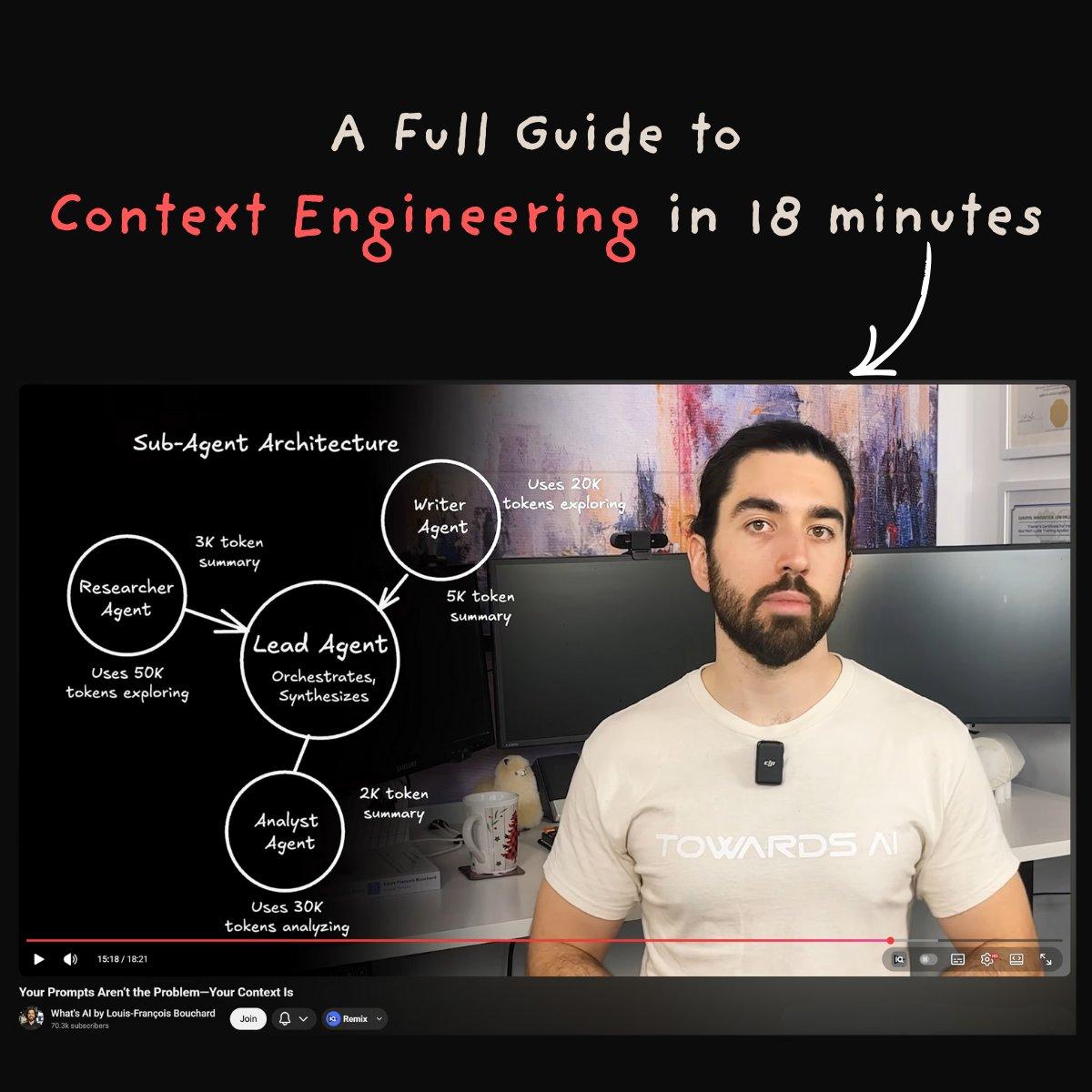

Context Rot Causes AI Failures; Engineer Memory

Most AI failures don’t come from bad prompts or weak models. They come from bad context. As tasks get longer and agents take more steps, important information gets buried, forgotten, or drowned in noise, something we call “context rot.” The result looks...

Your Prompts Aren’t the Problem—Your Context Is

The video argues that the real bottleneck in AI assistants isn’t how you phrase a question but what information the model actually sees when it generates a reply. While traditional prompt engineering tweaks wording to coax better answers, "context engineering"...

Why Prompts Actually Work

The video breaks down why prompts work, defining a prompt as the full set of instructions and context sent to an LLM. It distinguishes two parts: a system prompt that establishes the model’s role and constraints, and a user prompt...

Constraints, Not Model Choice, Drive Real-World AI Success

Do you still care about picking the right model? GPT. Gemini. Claude. Bigger models. Bigger context windows. But when you actually work on real projects, you quickly realize something else. Most decisions aren’t driven by models. They’re governed by constraints: cost, latency, quality, and data privacy. Every model call has a...

RLHF Explained Simply

RLHF, or reinforcement learning from human feedback, is the technique powering modern large‑language‑model alignment. Rather than relying solely on static text corpora, developers augment training with human‑generated preference data, teaching models what constitutes a helpful, safe response. The workflow begins with...

Apply an Autonomy Slider:

Recently, a close friend of mine, @pauliusztin_, launched a free 9-lesson course on AI agent foundations, and I went through it. It’s short (around 1.5 hours total) and focuses purely on end-to-end fundamentals - no tools, no frameworks, just the core...

LLMs Turn Words Into Numbers Before Understanding Meaning

Your model doesn’t understand words. It understands numbers. That single fact explains a lot of confusing LLM behavior. Before an LLM can answer anything, your text goes through two quiet steps most people never see: Tokens: your sentence is broken into small pieces and...

How AI Gets Specialized (Fine-Tuning Explained)

The video demystifies fine‑tuning, the technique of taking a pre‑trained large language model and further training it on a narrow, high‑quality dataset to make it proficient at a specific task. Unlike the massive, generic corpus used for pre‑training, fine‑tuning relies on...

Base vs Instruct Models Explained

The video explains the fundamental distinction between base models and instruct models in modern AI development. A base model is the product of large‑scale pre‑training; it stores vast factual information but is not optimized for following user instructions or sustaining...

This Is How GPT Gets Built

The video walks through the foundational phase that turns a random‑parameter network into a functional language model, known as pre‑training. It describes how the model is fed an enormous corpus of text and code from the internet and tasked with...

Learn Python for AI by Building Real Projects

One of the best feelings in teaching AI? When a student describes your course exactly the way you hoped it would work. We just received a new review for our Beginner Python for AI Engineering course, and the part that hit me...

2026 Will Transform Creators After 2025 Image Boom

If you’re a creator, marketer, or video editor… 2026 is going to be very different.👇 2025 was dominated by image generation. Google’s Nano Banana Pro changed how we control style and lighting. ChatGPT made image consistency crazy. Images finally started doing what we asked fo...

Day 4/42: How AI Understands Meaning

The video explains how modern language models move beyond simple token IDs toward semantic representations called embeddings. While tokenization converts user input into arbitrary numeric identifiers, those IDs carry no information about word meaning or relationships, preventing the model from...

Prediction Isn’t Understanding and That Difference Matters

The video tackles a common misconception that large language models (LLMs) learn in the same way humans do, arguing that the similarity ends at a superficial level of pattern imitation. It breaks the discussion into three parts – pre‑training, fine‑tuning/reinforcement...

ChatGPT Doesn’t “Know” Anything. This Is Why

The video demystifies large language models (LLMs) by framing them as sophisticated autocomplete engines. It explains that an LLM’s core task is to predict the most probable next token—whether a whole word, a sub‑word fragment, or punctuation—based on the preceding...

Six Years, 70k Subs: Persistence Beats Early Momentum

70k YouTube subscribers after 6 years. Sounds simple on paper. It wasn’t. For the first few years, everything felt easy. I was covering AI research papers, I loved it, and people loved it too. Consistency wasn’t a struggle because the content was...

Day 1/42: What Is Generative AI?

The video introduces a new daily short‑form series aimed at demystifying generative AI for a broad audience. It opens by acknowledging the common frustration of receiving slow, vague, or inaccurate answers from tools like ChatGPT, Gemini, or Google Cloud, and...

42 Days of No‑Hype AI Concept Videos

I’m publishing one AI video every day for the next 42 days. No math. No code. No hype. Just the concepts you actually need to understand LLMs. YouTube is where everything started for me. And honestly, I miss it. So on Monday, I’m coming back...

LLMs Trained on Reddit, Wikipedia, Now Eroding Them

It’s funny how LLMs depend on Reddit and Wikipedia content for their training, yet at the same time they are killing both… https://t.co/MhE4knbw0t