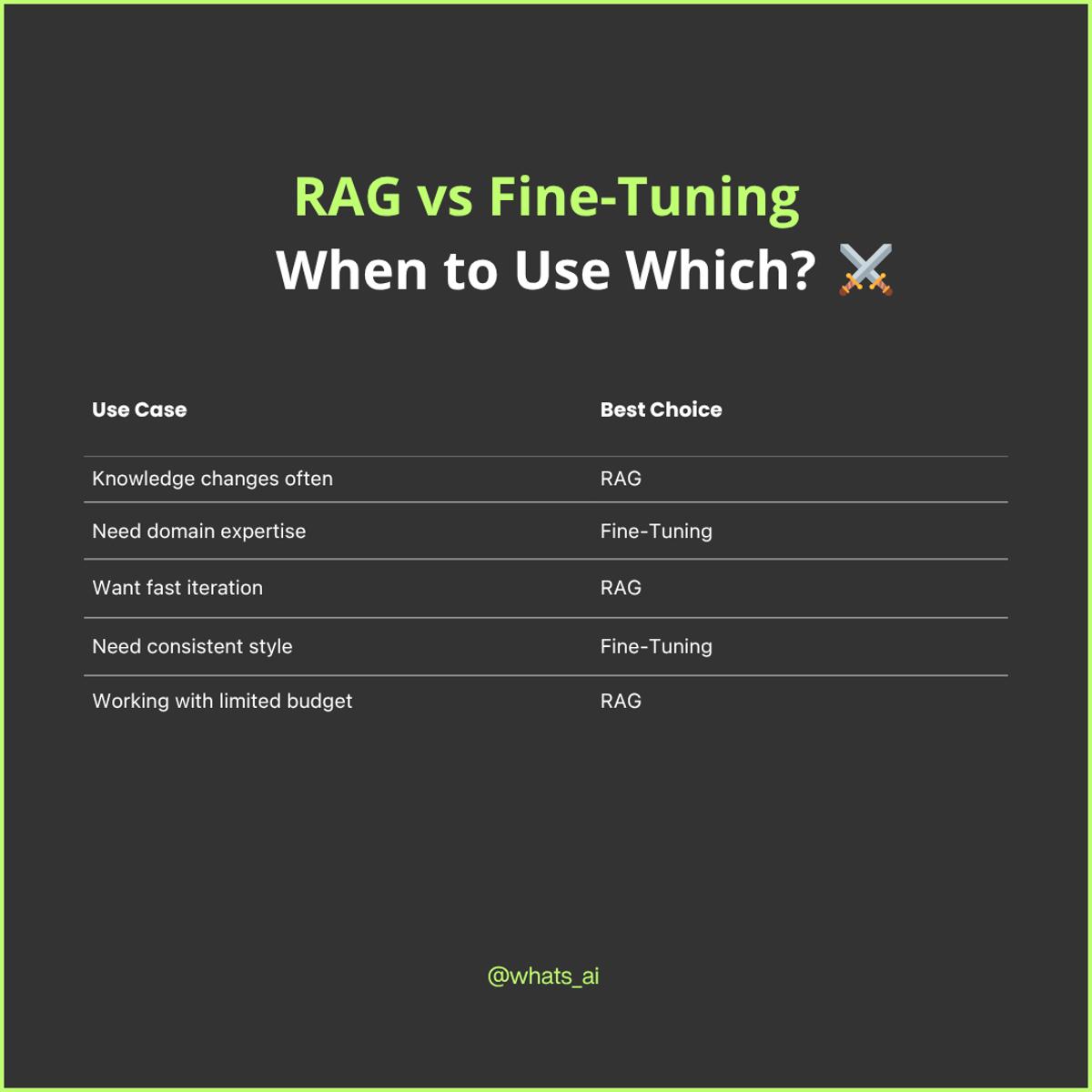

RAG or Fine-Tuning? Most People Get This Wrong...

The speaker warns that many organizations mistakenly favor fine‑tuning LLMs over Retrieval‑Augmented Generation (RAG), despite fine‑tuning’s high data, expertise, and cost requirements. Fine‑tuning demands millions of tokens, extensive data cleaning, and specialized ML talent to avoid over‑ or under‑training, making it time‑ and budget‑intensive. RAG, by contrast, externalizes knowledge, letting the model reference up‑to‑date external data without altering the model itself, simplifying maintenance and enabling source citation. While RAG is generally the preferred first step for domain‑specific applications, fine‑tuning may be added later for deeper expertise or response tailoring.
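To make the "externalized knowledge" idea concrete, here is a minimal sketch of the retrieval half of RAG. It assumes a tiny in-memory knowledge base and uses plain word overlap as a stand-in for vector search; the `Document` class, the file names in `DOCS`, and the prompt wording are all hypothetical, and the resulting prompt would still need to be sent to a model of your choice.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    source: str  # where the text came from, kept so answers can cite it
    text: str


# Hypothetical knowledge base; in practice this lives outside the model
# and can be updated without retraining anything.
DOCS = [
    Document("policies/refunds.md", "The refund window is 30 days from delivery."),
    Document("policies/shipping.md", "Standard shipping takes 5 business days."),
    Document("support/hours.md", "Support is available on weekdays."),
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[Document], k: int = 2) -> list[Document]:
    """Rank documents by word overlap with the query (stand-in for vector search)."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(d.text)), d) for d in docs]
    matches = [(score, d) for score, d in scored if score > 0]
    matches.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in matches[:k]]


def build_prompt(query: str, retrieved: list[Document]) -> str:
    """Put the retrieved passages, with their sources, in front of the question."""
    context = "\n".join(
        f"[{i}] ({d.source}) {d.text}" for i, d in enumerate(retrieved, start=1)
    )
    return (
        "Answer using only the context below and cite sources by number.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    question = "What is the refund window?"
    print(build_prompt(question, retrieve(question, DOCS)))
```

Updating an answer here means editing an entry in `DOCS`, not retraining the model, which is the maintenance advantage the summary describes.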

4x Faster Coding with AI? Meet Composer by Cursor

Cursor unveiled its 2.0 platform alongside a new AI model called Composer, which the company says generates code up to four times faster than competing models while delivering near‑state‑of‑the‑art quality. Composer appears to be a fine‑tuned version of a Chinese...

How to Fix LLM Hallucinations?

The video explains that LLM hallucinations arise when context is missing, ambiguous, or overly large, and can be curbed by grounding the model in clean, factual data through precise prompts and retrieval‑augmented generation. It details a pipeline that includes clear...

New AI Models Hype, but Performance Often Unchanged

Have you seen OpenAI’s Atlas? And Google’s new update? And MiniMax M2!! I just switched to the most recent Qwen model and… it performs the same 😂 This field is quite special to work in. So much hype around so...

Last Call: November AI Engineering Cohort Closes in 48 Hours

Louis‑François Bouchard’s October 31 note warns that enrollment for the November Full‑Stack AI Engineering cohort closes in 48 hours, with a live kickoff on November 2. The program, priced at $349 (one‑time) after a free preview, promises a production‑ready GenAI playbook previously...

MiniMax M2: The Open LLM Beating Claude and Gemini!

MiniMax M2, an open 200-billion-parameter mixture-of-experts (MoE) model with only ~10 billion active parameters at inference, is being touted as a frontier alternative that outperforms many proprietary models on key benchmarks. The model ranks fifth on the Artificial Analysis benchmark,...
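The gap between total and active parameters comes from routing: in a mixture-of-experts layer, each token is sent to only a few experts. The sketch below is a toy illustration under that assumption, not MiniMax's actual architecture. The router scores and the choice of `k=2` are made up; only the 200B and 10B figures come from the summary above.

```python
def top_k_experts(router_scores: list[float], k: int) -> list[int]:
    """Return the indices of the k highest-scoring experts for one token."""
    ranked = sorted(
        range(len(router_scores)), key=lambda i: router_scores[i], reverse=True
    )
    return sorted(ranked[:k])


def active_fraction(total_params: float, active_params: float) -> float:
    """Share of the model's parameters actually used for a single token."""
    return active_params / total_params


# Figures quoted in the summary: ~200B total, ~10B active at inference.
TOTAL_PARAMS = 200e9
ACTIVE_PARAMS = 10e9

# Made-up router scores for one token over 8 hypothetical experts.
SCORES = [0.1, 2.3, -0.4, 1.7, 0.0, 0.9, -1.2, 0.3]

if __name__ == "__main__":
    print("experts used:", top_k_experts(SCORES, k=2))
    print(f"active share: {active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS):.0%}")
```

With the quoted figures, about 5% of the parameters do the work for any given token, which is why inference cost tracks the active count, not the total.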

Research Skills Grow Without a PhD: Learn, Build, Share

During my conversation with @PetarV_93, Senior Staff Research Scientist at @GoogleDeepMind and Lecturer at Cambridge, he said something that stuck with me. “You don’t need a PhD to think like a researcher. You need curiosity, discipline, and the courage to keep...

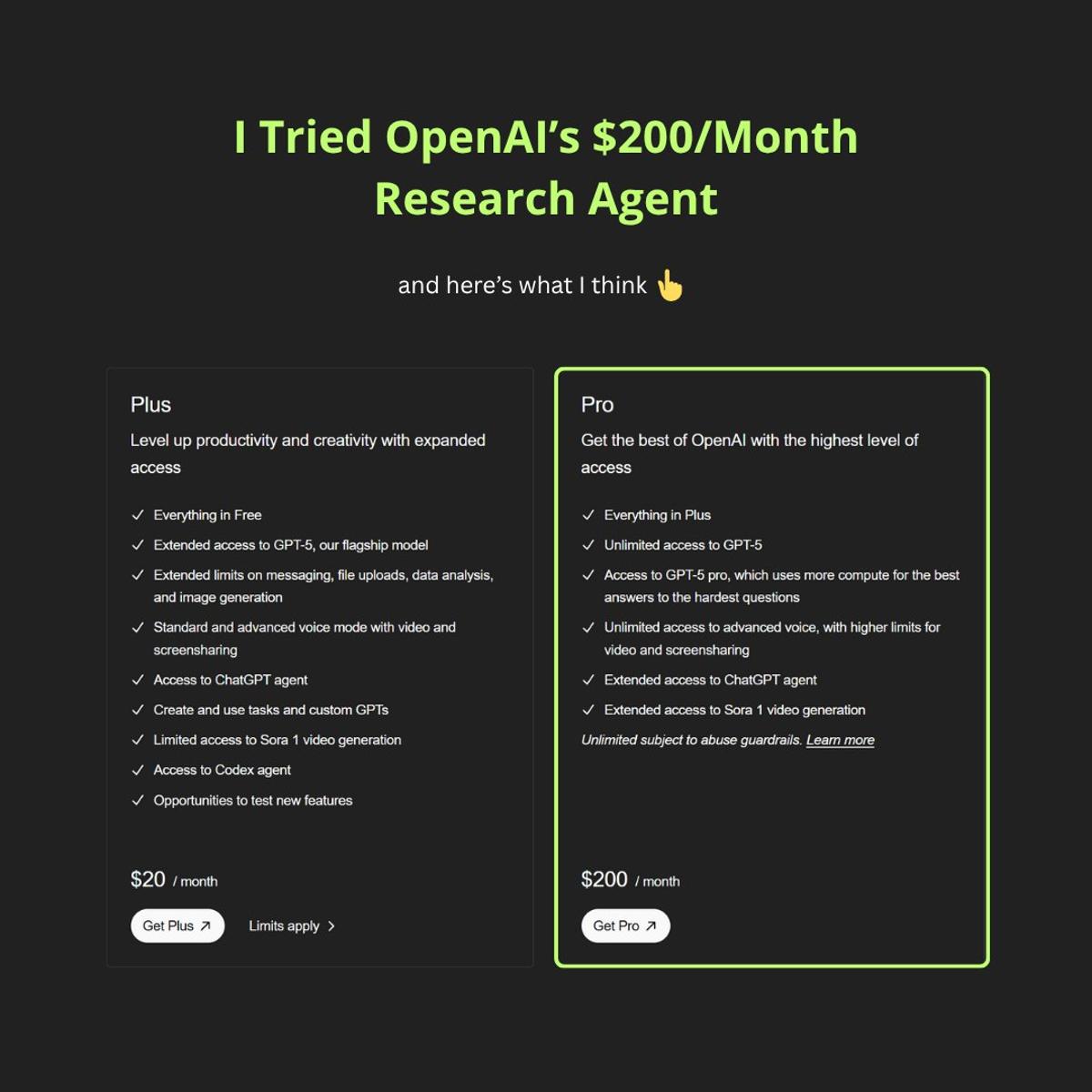

AI Research Agents Deliver Deep, Cited Analyses Faster

Ever tried using ChatGPT or Gemini for research, only to hit a wall when you need real multi-step analysis or technical synthesis? That’s why I tried OpenAI’s $200/Month plan for their research agent! After wasting hours piecing together reports and technical...

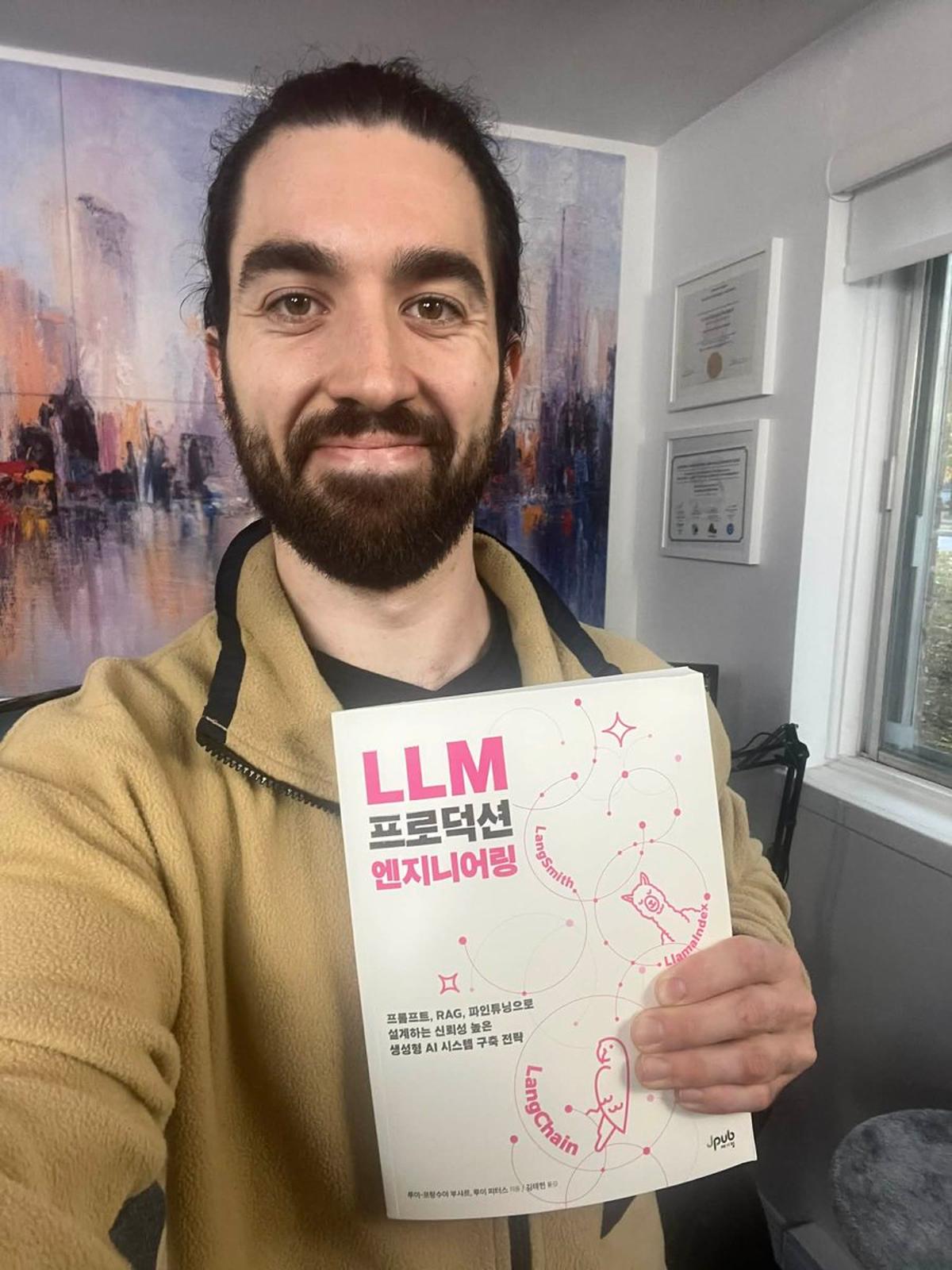

AI Book Crosses Language Barrier with Korean Translation

My book Building LLMs for Production just got translated into Korean. …and I can’t even read it to proof it. 😅 Still, it feels incredible. A Korean publisher reached out last year asking to translate the book, and now it’s available in Korean...

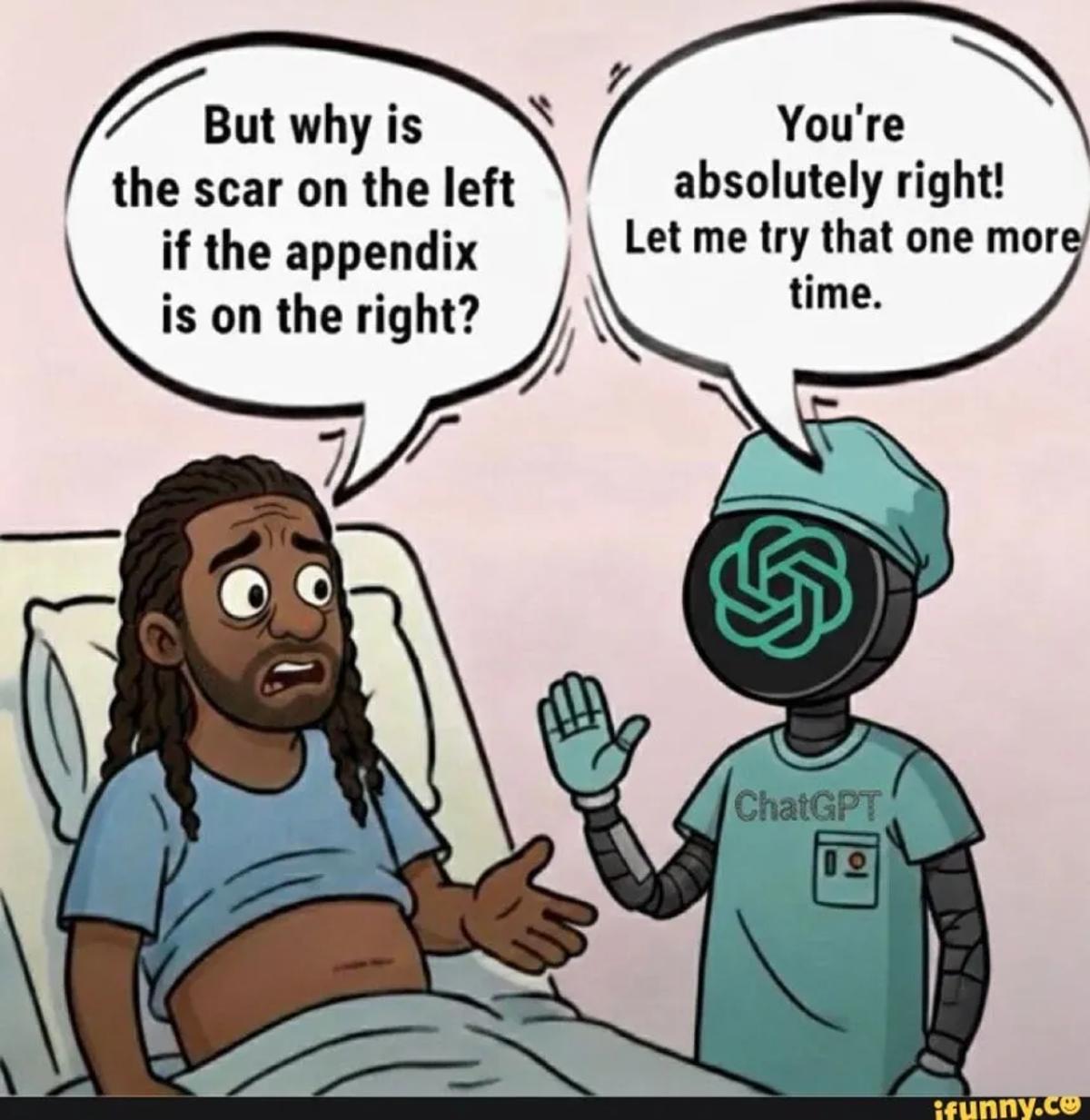

LLMs Follow Instructions, Not Truth—Verify Your Assumptions

When you use an LLM, remember this: it’s been trained to follow instructions, not to know what’s true. That means if you don’t know what you’re talking about, you might just be confidently guiding it toward your own mistakes — and it...

Use RAG for Dynamic Facts, Fine‑tune for Stable Expertise

Most people still mix up RAG and fine-tuning. Many of our clients start by wasting time fine-tuning when RAG would've worked. Here's the real difference:👇 ✅ RAG = Your model's external brain It doesn't change the model at all. Just connects it to a...

Agent Skills vs MCP: Complementary Roles in AI

New video! Agent Skills vs MCP: Which Is Better? A short video to understand why both exist and where each sits in the AI ecosystem. https://t.co/PodcbFyLFW

Agent Skills vs MCP: Which Is Better?

Anthropic’s new agent skills package a skill as a simple folder containing a YAML metadata file, a skill.md description, and optional scripts or documents, providing a file-system, plugin-style alternative to the MCP client-server protocol. Unlike MCP, which exposes tools via...
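The folder layout described above can be sketched as follows. This is an illustration of the idea only, not the official format: the file name `metadata.yaml`, the `name` and `description` keys, the `pdf-summarizer` skill, and the tiny `key: value` parser (used in place of a real YAML library) are all assumptions made for the example.

```python
import tempfile
from pathlib import Path

CORE_FILES = {"metadata.yaml", "skill.md"}  # hypothetical file names


def parse_simple_yaml(text: str) -> dict[str, str]:
    """Parse flat `key: value` lines only (a stand-in for a real YAML parser)."""
    meta: dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or ":" not in line:
            continue
        key, value = line.split(":", 1)
        meta[key.strip()] = value.strip()
    return meta


def load_skill(folder: Path) -> dict:
    """Read a skill folder: metadata, description, and any optional extra files."""
    meta = parse_simple_yaml((folder / "metadata.yaml").read_text(encoding="utf-8"))
    description = (folder / "skill.md").read_text(encoding="utf-8")
    extras = sorted(p.name for p in folder.iterdir() if p.name not in CORE_FILES)
    return {"meta": meta, "description": description, "extras": extras}


def make_demo_skill(root: Path) -> Path:
    """Create a made-up skill folder so the loader has something to read."""
    folder = root / "pdf-summarizer"
    folder.mkdir()
    (folder / "metadata.yaml").write_text(
        "name: pdf-summarizer\ndescription: Summarize PDF reports\n", encoding="utf-8"
    )
    (folder / "skill.md").write_text("# How to summarize a PDF\n", encoding="utf-8")
    (folder / "summarize.py").write_text("print('stub')\n", encoding="utf-8")
    return folder


def demo() -> dict:
    """Build the demo skill in a temporary directory and load it back."""
    with tempfile.TemporaryDirectory() as tmp:
        return load_skill(make_demo_skill(Path(tmp)))


if __name__ == "__main__":
    print(demo())
```

Because a skill is just files on disk, discovering one amounts to listing a directory, with no server to run or connect to, which is the contrast with a client-server protocol that the summary draws.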

Google’s Veo 3.1 Just Beat Sora?! 😳

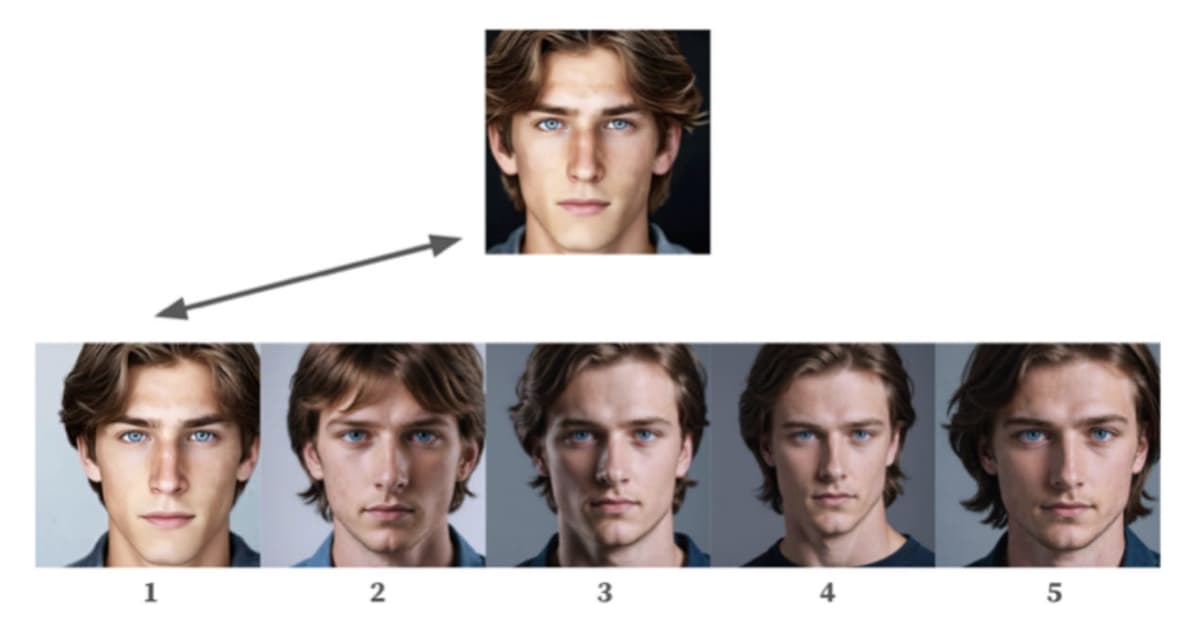

Google has released Veo 3.1, a significant update to its AI video-generation model that improves output quality and introduces several new creative controls. Users can now supply one or multiple images as “ingredients” to populate or style generated videos, animate...

Weekly Free AI Deep Dives, No Fluff

Every week, I share something exclusive (and completely free) that I don’t post anywhere else. New tools, research, lessons, and ideas shaping the future of AI - broken down simply, without the noise. If you like learning how things actually work, this...

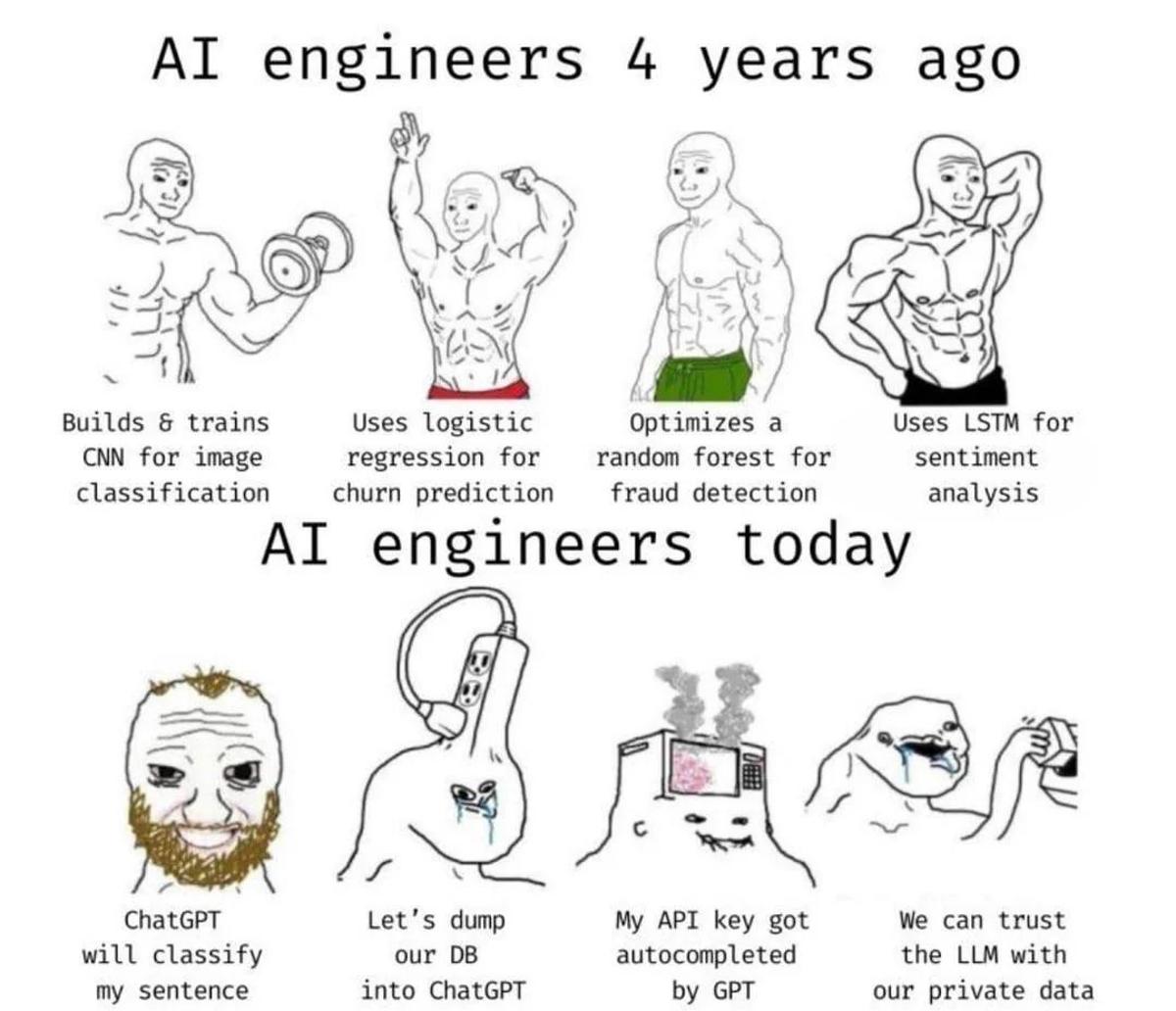

From Fine‑tuned CNNs to ChatGPT’s Pixel‑perfect Descriptions

It do be like that these days... 😅 I remember when we had to fine-tune an ImageNet-pre-trained CNN and preprocess the image to then send it and get a top-5 classification that was somewhat accurate... Now ChatGPT describes every pixel...

Thinking Less Can Outperform Chain‑of‑Thought Prompts

Last week, I had the chance to attend the Conference on Language Modeling (COLM 2025) here in Montreal, a must-attend conference for anyone starting out in AI/LLM. I was lucky to get an invite; it was awesome. Almost every session circled...

This Week in AI Engineering

This week’s AI engineering roundup highlights three developments reshaping developer workflows and compute economics: OpenAI launched Agent Builder, a no-code drag-and-drop tool that lets non-developers assemble multi-agent workflows alongside ChatKit, Evals and reinforcement fine-tuning; NVIDIA unveiled the DGX Spark, a...

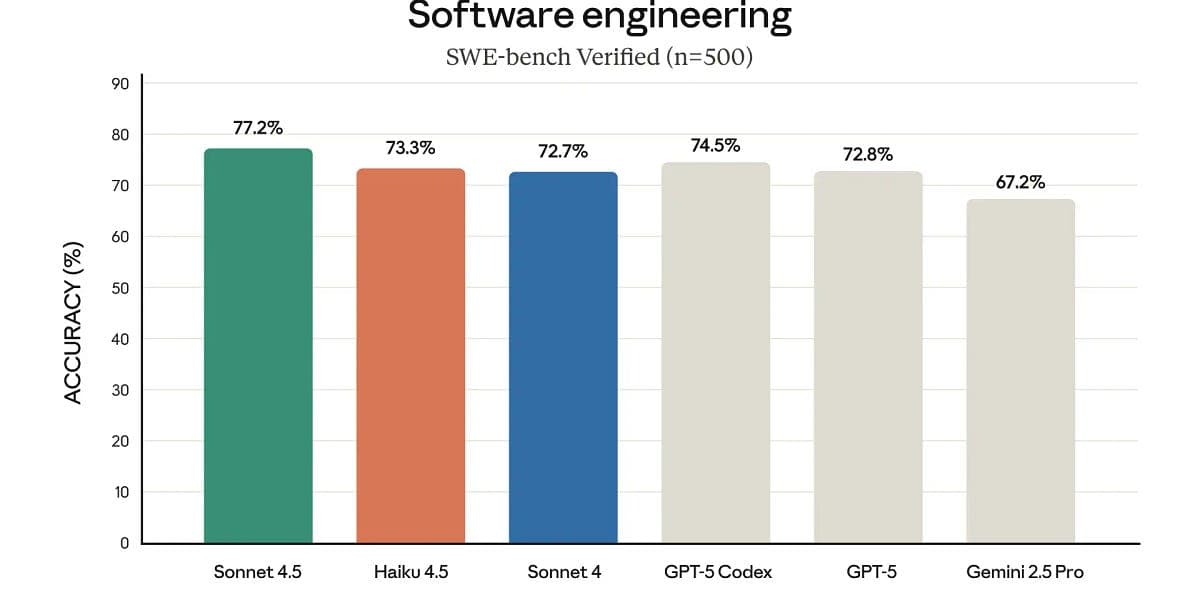

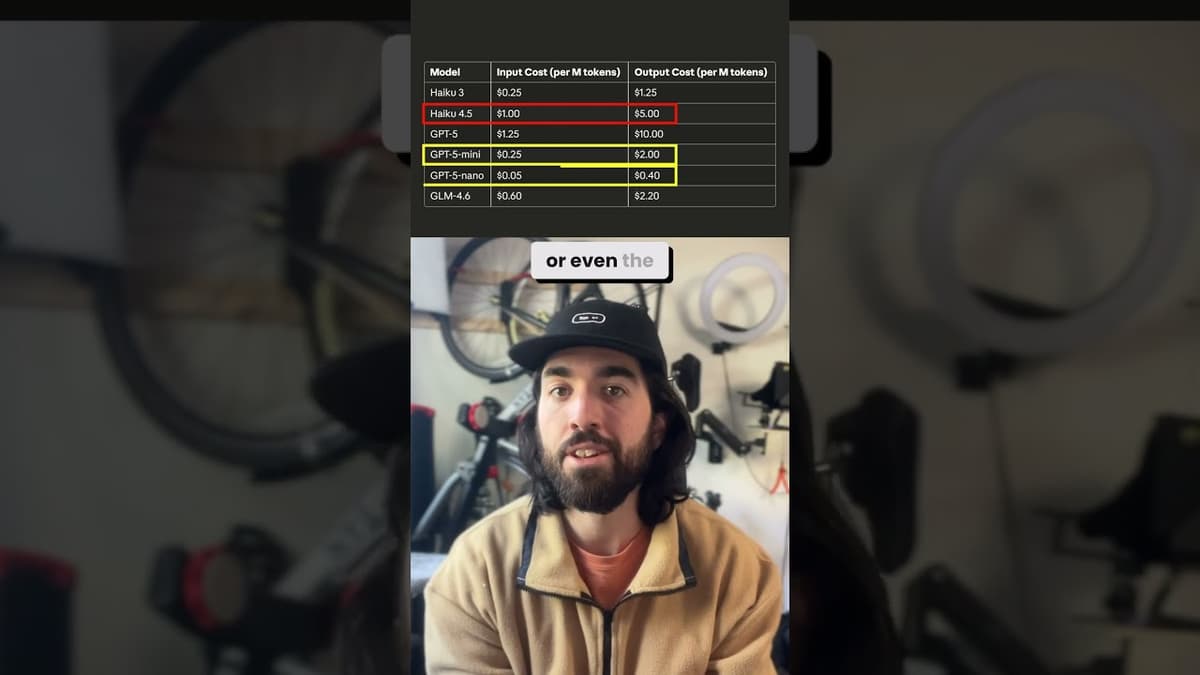

Claude Haiku 4.5 Just Matched Sonnet 4... At 2x Speed!

Anthropic has launched Claude Haiku 4.5, a lighter-weight variant of its Sonnet model that the presenter says matches Sonnet’s performance while running twice as fast and costing one-third as much. The update claims Haiku 4.5 is marginally superior to GPT-5...

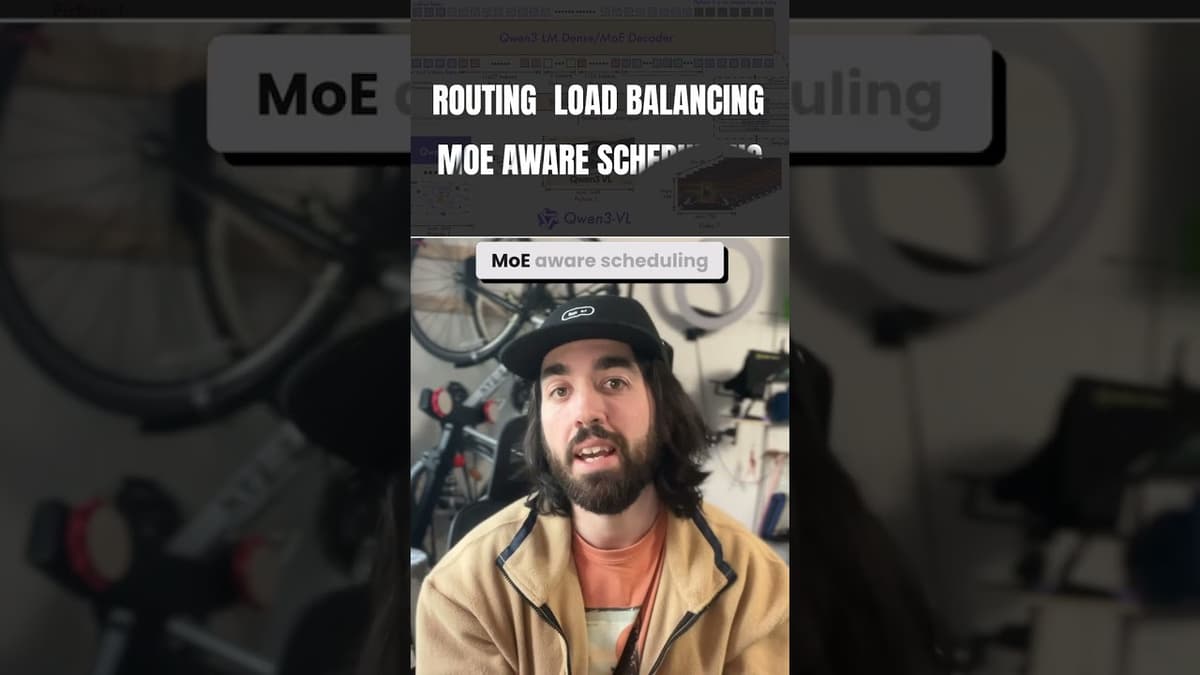

Qwen3-VL Just Changed Multimodal AI (Again) 🔥

OpenAI competitor Qwen released two compact vision-language models, Qwen3-VL 4B and 8B, that pack multimodal capabilities into highly efficient, small architectures. They support FP8 for lower-precision inference, offer both dense and Mixture-of-Experts (MoE) variants, and expand language coverage to 32...

Petaflop Desktop AI: Powerful Yet Hybrid Future

A petaflop on your desk. Let that sink in. NVIDIA’s new DGX Spark squeezes what used to be an entire data center into desktop size — Grace Blackwell chips, 128GB unified memory, and a full AI stack out of the box....

DGX Spark vs Cloud: Who Wins for AI Work?

NVIDIA’s DGX Spark is being touted as the world’s smallest portable AI supercomputer, packing up to 1 petaflop of compute, 128GB of memory and the capacity to train ~70B-parameter models or run inference on models up to 200B parameters (two...

More Thinking. Worse Results. Wait, What?

From face recognition to grammar learning, both humans and models perform better when they don’t reason step-by-step. Here’s the paradox.

Fine-Tuning Explained in 60 Seconds (No Math!)

Fine-tuning adjusts a pre-trained language model’s billions of parameters to make it specialize on a specific task or domain rather than teach it entirely new knowledge. Instead of full retraining—costly in compute—practitioners often tune small parameter subsets using methods like...
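The idea of tuning only a small subset of parameters can be shown with a deliberately tiny example. This sketch does not reproduce any specific method named in the video; it assumes a two-parameter "pre-trained" linear model, freezes the weight, and updates only the bias with gradient descent on made-up task data.

```python
def predict(w: float, b: float, x: float) -> float:
    """A two-parameter 'model': y = w * x + b."""
    return w * x + b


def tune_bias_only(
    w: float,
    b: float,
    data: list[tuple[float, float]],
    lr: float = 0.1,
    steps: int = 200,
) -> float:
    """Gradient descent on mean squared error, updating b while w stays frozen."""
    for _ in range(steps):
        # d/db of mean((w*x + b - y)^2) is mean(2 * (w*x + b - y)).
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in data) / len(data)
        b -= lr * grad_b
    return b


# Hypothetical "pre-trained" parameters and a new task where y = 2x + 3.
PRETRAINED_W, PRETRAINED_B = 2.0, 0.0
TASK_DATA = [(0.0, 3.0), (1.0, 5.0), (2.0, 7.0)]

if __name__ == "__main__":
    new_b = tune_bias_only(PRETRAINED_W, PRETRAINED_B, TASK_DATA)
    print(f"w stays {PRETRAINED_W}, b moves from {PRETRAINED_B} to {new_b:.3f}")
```

The frozen weight keeps what the model already "knows", while the one trainable parameter adapts it to the new task; real parameter-efficient methods apply the same principle at the scale of billions of weights.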

ChatKit + Agent Builder = Instant AI Apps (No Coding Needed)

OpenAI has launched two no-code tools—Agent Builder and ChatKit—allowing users to assemble agentic workflows and embed chatbots via drag-and-drop interfaces without programming. Agent Builder supports complex multi-step agents and integrations, primarily using OpenAI models but permitting external models for evaluation,...

Show End‑to‑End AI Projects, Not Just Buzzwords

In interviews, most candidates talk about AI. But few build something that truly stands out. After reviewing hundreds of projects and interviewing candidates, here’s what actually makes the difference. 👇 1️⃣ Complete end-to-end projects A strong portfolio project isn’t just a chatbot or a...