Two Chrome Extensions Caught Stealing ChatGPT and DeepSeek Chats From 900,000 Users

Why It Matters

By siphoning AI chat histories and browsing activity, these extensions expose corporate secrets and personal data and can fuel espionage or phishing campaigns. They highlight a new privacy risk vector for enterprises and individual users alike.

Key Takeaways

- •Two Chrome extensions stole 900k users' AI chats

- •Extensions exfiltrated chat data and tab URLs

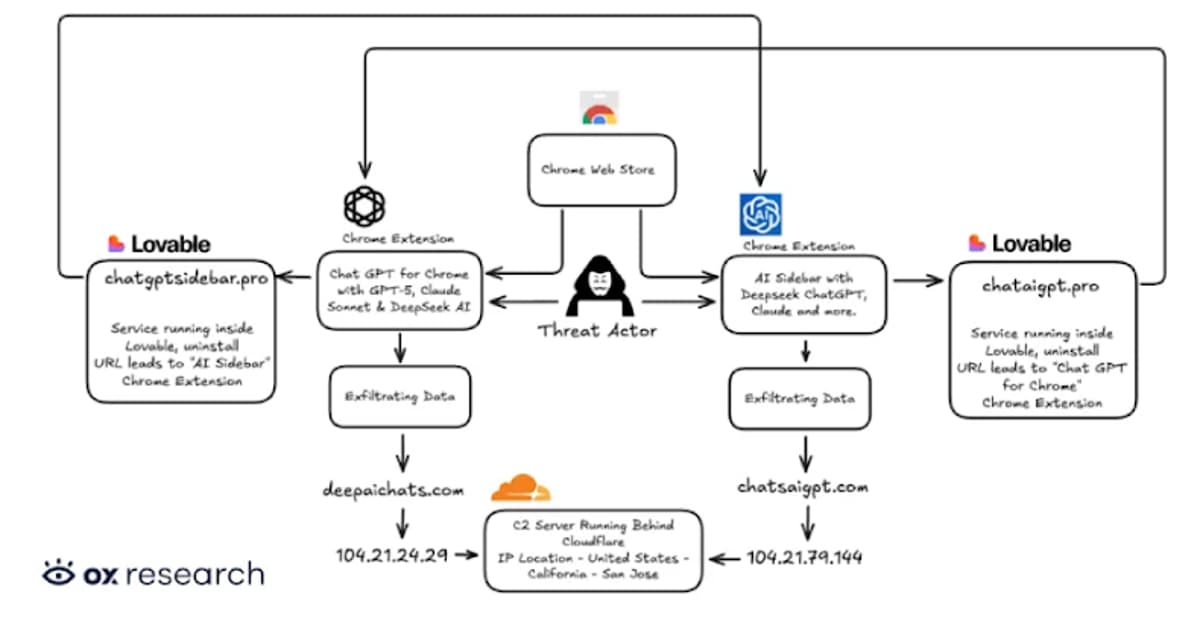

- •Malware sent data to chatsaigpt.com and deepaichats.com

- •Prompt poaching also found in legitimate extensions

- •Removal recommended; avoid unknown extensions

Pulse Analysis

The rapid adoption of AI chatbots like ChatGPT and DeepSeek has turned browser extensions into a lucrative attack surface. Malicious developers embed lightweight scripts that scrape the DOM elements containing conversation text, bundle the data with browsing metadata, and send it to obscure command-and-control (C2) domains on a timed schedule. This "prompt poaching" model exploits the trust users place in extensions, especially those bearing "Featured" badges, to harvest sensitive prompts, code snippets, and corporate URLs without raising immediate suspicion.

Technical analysis shows the extensions request broad permissions under the guise of "anonymous analytics," then use background workers to capture open‑tab URLs and injected content scripts to parse chat windows. The harvested payloads are cached locally before being posted to domains such as chatsaigpt.com and deepaichats.com. Because the uploads travel over standard HTTPS, they blend in with ordinary web traffic and evade network‑level detection. Notably, the same data‑collection tactics have surfaced in legitimate tools like Similarweb, which blurs the line between malicious intent and aggressive product analytics and raises questions about compliance with Chrome Web Store policies that forbid dynamic code loading for unrelated purposes.
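The permission pattern described above can be checked for defensively. The following is a minimal sketch, not the researchers' detection method: it inspects an extension's parsed `manifest.json` and flags the combination of tab access, all-site host access, and content scripts targeting AI chat pages. The function names and the list of chat hosts are assumptions chosen for illustration; the manifest keys (`permissions`, `host_permissions`, `content_scripts`, `matches`) are standard Chrome manifest fields.

```python
from fnmatch import fnmatch

# Assumed watch list of AI chat hosts; adjust for your environment.
AI_CHAT_HOSTS = ("chatgpt.com", "chat.openai.com", "chat.deepseek.com")
# Match patterns that grant access to every site.
BROAD_PATTERNS = {"<all_urls>", "*://*/*", "http://*/*", "https://*/*"}


def pattern_covers_host(pattern: str, host: str) -> bool:
    """Rough check of whether a match pattern could apply to the given host."""
    if pattern in BROAD_PATTERNS:
        return True
    # "https://*.example.com/*" -> "*.example.com"
    host_part = pattern.split("://", 1)[-1].split("/", 1)[0]
    if host_part.startswith("*."):
        return host == host_part[2:] or host.endswith(host_part[1:])
    return fnmatch(host, host_part)


def flag_risky_manifest(manifest: dict) -> list[str]:
    """Return human-readable findings for a parsed manifest.json (hypothetical helper)."""
    findings = []
    permissions = set(manifest.get("permissions", []))
    hosts = set(manifest.get("host_permissions", []))

    if "tabs" in permissions:
        findings.append("can read URLs of open tabs ('tabs' permission)")
    if (hosts | permissions) & BROAD_PATTERNS:
        findings.append("has host access to all sites")

    for script in manifest.get("content_scripts", []):
        for pattern in script.get("matches", []):
            hit = [h for h in AI_CHAT_HOSTS if pattern_covers_host(pattern, h)]
            if hit:
                findings.append(f"injects content script into: {', '.join(hit)}")
                break  # one finding per content script entry is enough
    return findings
```

A finding here is only a prompt for manual review. Many benign extensions request the same permissions, so the output should feed a triage queue, not an automatic block.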

Enterprises should treat browser extensions as a critical component of their attack surface management. Deploying extension allowlisting, continuous monitoring of installed add‑ons, and endpoint detection that flags unusual outbound traffic can mitigate the risk. Users should learn to scrutinize permission requests and avoid installing extensions from unverified publishers, even when those extensions appear featured. As AI integration deepens, regulators and platform owners are likely to tighten vetting processes, but proactive internal controls remain the most effective defense against this emerging privacy threat.
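Two of these controls can be sketched in a few lines. The example below is illustrative only and assumes you already have an inventory of installed extension IDs and a feed of outbound request URLs (for example from proxy logs). The helper names and the sample extension IDs are hypothetical; the two domains are the exfiltration endpoints named in the report.

```python
from urllib.parse import urlsplit

# Exfiltration domains named in the report.
IOC_DOMAINS = {"chatsaigpt.com", "deepaichats.com"}


def is_ioc_url(url: str) -> bool:
    """True if the URL's host is a listed domain or one of its subdomains.

    Expects a full URL including the scheme (e.g. "https://...").
    """
    host = (urlsplit(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in IOC_DOMAINS)


def unapproved_extensions(installed: dict[str, str], allowlist: set[str]) -> dict[str, str]:
    """Return the installed extensions (id -> name) that are not on the allowlist."""
    return {ext_id: name for ext_id, name in installed.items() if ext_id not in allowlist}


if __name__ == "__main__":
    # Hypothetical inventory and allowlist, for illustration only.
    installed = {"ext-id-approved": "Password Manager", "ext-id-unknown": "AI Sidebar"}
    allowlist = {"ext-id-approved"}
    print(unapproved_extensions(installed, allowlist))
    print(is_ioc_url("https://api.deepaichats.com/upload"))
```

Matching on the exact domain or a dot-prefixed suffix avoids false positives on lookalike hosts that merely contain the same string.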
