New Method Measures Energy Dissipation in the Smallest Devices

February 9, 2026

Why It Matters

It gives engineers a measurable metric for energy cost and speed limits in emerging nano‑electronics, accelerating the design of more efficient, faster hardware. It also narrows the gap between theoretical thermodynamics and practical nanoscale measurement.

Key Takeaways

- Quantum dots used to measure entropy production directly

- Machine learning optimized physics model for non‑equilibrium analysis

- Technique reveals speed and efficiency limits for nanoscale devices

- Bridges gap between theoretical thermodynamics and experimental nanoscale measurement

- Could guide design of lower‑energy, faster computing hardware

Pulse Analysis

Understanding how energy dissipates at the nanoscale has become a critical bottleneck for next‑generation computing. Traditional thermodynamic tools work well for macroscopic engines, but they break down when fluctuations dominate and systems never reach equilibrium. Researchers therefore need a way to capture the hidden costs of information loss and irreversible processes that occur in quantum‑confined structures such as quantum dots. This new measurement paradigm provides that missing link, translating abstract entropy concepts into concrete, experimentally accessible data.

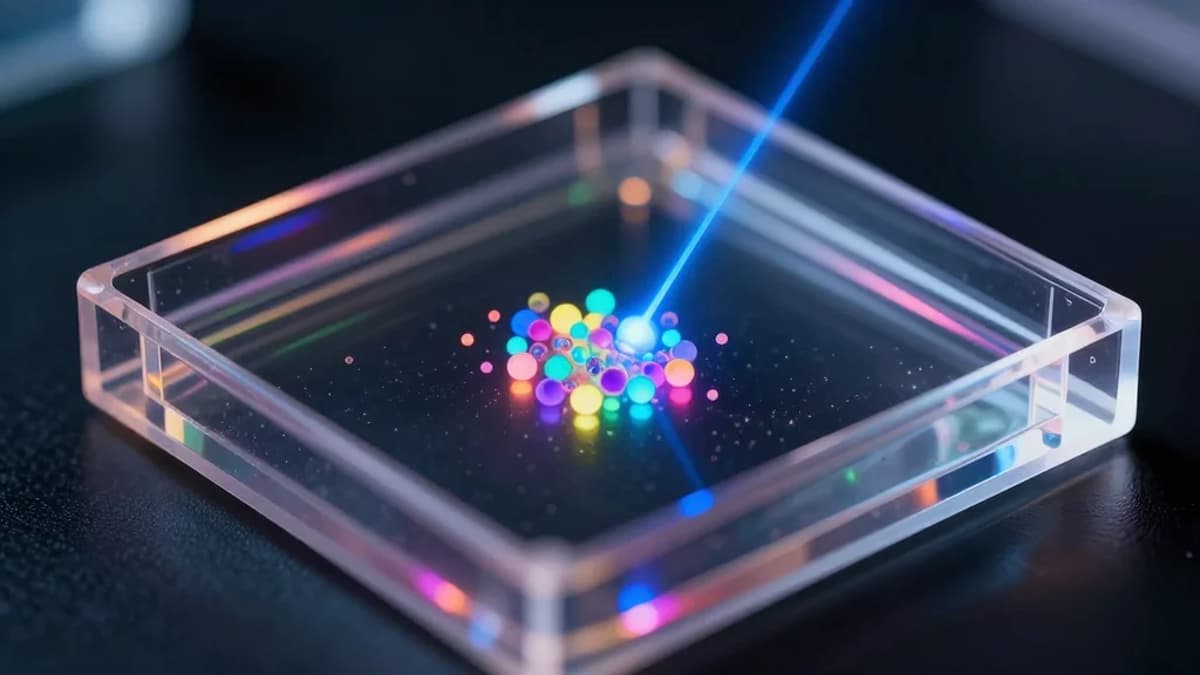

The Stanford team created a controlled non‑equilibrium environment by toggling a laser field on and off, which forces quantum dots to switch between distinct blinking statistics. High‑speed imaging captured these stochastic light emissions, and a custom machine‑learning pipeline tuned a physics‑based model to the noisy data. By fitting the model, the scientists extracted the entropy production rate, a direct indicator of how much energy is irreversibly spent during each transition. The approach combines quantum optics, statistical mechanics, and modern data science, achieving sensitivity levels that were previously thought unattainable.
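To make "entropy production rate" concrete, here is a minimal sketch in Python. It is not the team's model or pipeline: it assumes a hypothetical two‑state (dark/bright) blinking model whose switching rates `k_on` and `k_off` change when the drive is toggled, and it uses the standard textbook expression for the entropy production rate of a Markov jump process (net probability current times the log ratio of forward to backward flux). All rate values are illustrative, not measured.

```python
import math


def stationary_on_probability(k_on, k_off):
    """Stationary probability of the bright state in a two-state on/off model."""
    return k_on / (k_on + k_off)


def entropy_production_rate(p_on, k_on, k_off):
    """Instantaneous entropy production rate, in units of k_B per unit time.

    forward  = p_off * k_on   (probability flux dark -> bright)
    backward = p_on  * k_off  (probability flux bright -> dark)
    rate     = (forward - backward) * ln(forward / backward) >= 0
    Requires 0 < p_on < 1 and positive rates.
    """
    forward = (1.0 - p_on) * k_on
    backward = p_on * k_off
    if forward == backward:
        return 0.0
    return (forward - backward) * math.log(forward / backward)


def relax_after_toggle(p_on_start, k_on, k_off, dt=1e-4, t_end=10.0):
    """Euler-integrate the two-state master equation after the drive is toggled.

    Returns (final p_on, total entropy produced in units of k_B).
    """
    p_on = p_on_start
    total = 0.0
    for _ in range(int(round(t_end / dt))):
        total += entropy_production_rate(p_on, k_on, k_off) * dt
        p_on += ((1.0 - p_on) * k_on - p_on * k_off) * dt
    return p_on, total


# Illustrative (assumed) rates: drive off, then drive switched on.
p_start = stationary_on_probability(k_on=0.5, k_off=2.0)
p_final, produced = relax_after_toggle(p_start, k_on=3.0, k_off=1.0)
print(f"p_on: {p_start:.3f} -> {p_final:.3f}, entropy produced: {produced:.3f} k_B")
```

In this toy model the rate is positive only while the populations are relaxing after a toggle and falls to zero once the new steady state is reached. For this simple relaxation, the total entropy produced should match the Kullback-Leibler divergence between the starting distribution and the new stationary one, which gives a quick sanity check on the integration.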

Beyond the laboratory, this capability promises to reshape hardware design across the semiconductor industry. With a reliable metric for dissipation, engineers can benchmark the ultimate speed‑efficiency frontier of memory cells, processors, and photonic components, guiding material choices and architectural trade‑offs. The technique also opens avenues for exploring energy‑optimal pathways in other driven systems, from phase‑change memories to neuromorphic circuits. As machine‑learning tools and quantum‑measurement technologies continue to mature, the method could become a standard diagnostic for low‑power, high‑performance device development.
