Bipolar Switching and Synaptic Behaviors Observed in Titanium‐Constrained Phase‐Change Heterostructures

February 6, 2026

Why It Matters

The breakthrough enables stable, low‑voltage bipolar switching, simplifying circuit design for neuromorphic hardware and advancing scalable AI accelerators.

Key Takeaways

- Titanium interlayer limits atomic diffusion.

- Bipolar operation stabilizes switching voltages near ±0.6 V.

- Endurance exceeds 80,000 switching cycles.

- Demonstrates potentiation, depression, and STDP synaptic functions.

- Achieves 88% accuracy on an MNIST-like benchmark in simulation.

Pulse Analysis

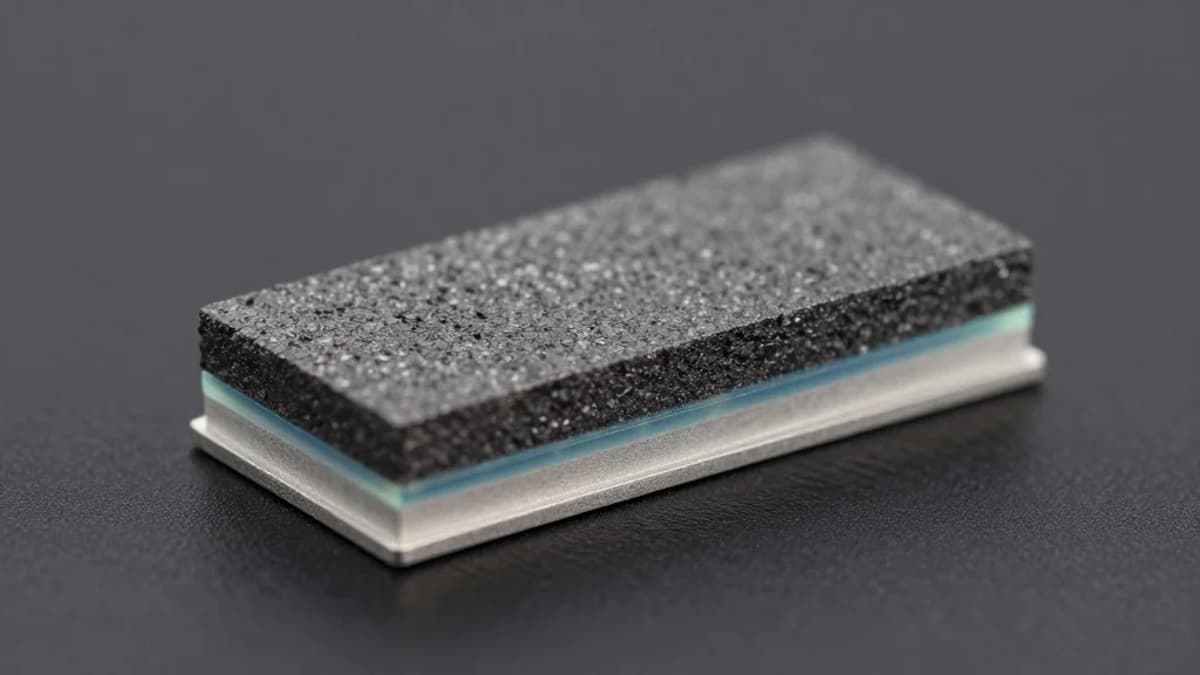

Phase‑change random‑access memory (PCRAM) has long been valued for its high on/off ratio and straightforward fabrication, yet most implementations rely on unipolar switching, in which SET and RESET pulses share the same polarity and differ in amplitude and duration. Because a unipolar cell cannot be nudged up or down simply by reversing pulse polarity, emulating the bidirectional conductance changes needed for brain‑inspired computing demands complex peripheral circuitry, which limits scalability. By inserting an ultra‑thin titanium atomic barrier between the SbTe active layer and the electrodes, researchers have throttled long‑range atomic diffusion, a primary cause of voltage drift and device fatigue. This material‑level change stabilizes the switching voltages near ±0.6 V, a range compatible with low‑power logic and neuromorphic arrays, and extends endurance beyond 80,000 cycles, figures comparable to conventional flash memory.
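
To make the bipolar idea concrete, here is a minimal Python sketch of a toy synaptic cell in which the polarity of a programming pulse alone selects the direction of conductance change. It is an illustration under stated assumptions, not the researchers' device model: the threshold simply reuses the reported ±0.6 V figure, while the conductance bounds, the saturating step size, the mapping of positive pulses to potentiation, and the names `BipolarCell`, `V_SWITCH`, `G_MIN`, `G_MAX`, and `ALPHA` are all hypothetical.

```python
from dataclasses import dataclass

# All parameters below are hypothetical, chosen only for illustration.
V_SWITCH = 0.6   # switching threshold magnitude (V), reusing the reported ±0.6 V figure
G_MIN = 1e-6     # assumed minimum conductance (S)
G_MAX = 1e-4     # assumed maximum conductance (S)
ALPHA = 0.1      # assumed fraction of the remaining range covered by one pulse


@dataclass
class BipolarCell:
    """Toy bipolar cell: the sign of a super-threshold pulse sets the direction."""
    g: float = G_MIN

    def apply_pulse(self, v: float) -> float:
        if v >= V_SWITCH:
            # Positive pulse (assumed): potentiate, saturating toward G_MAX
            self.g += ALPHA * (G_MAX - self.g)
        elif v <= -V_SWITCH:
            # Negative pulse (assumed): depress, saturating toward G_MIN
            self.g -= ALPHA * (self.g - G_MIN)
        # Sub-threshold pulses act as non-destructive reads: no change
        return self.g


if __name__ == "__main__":
    cell = BipolarCell()
    potentiation = [cell.apply_pulse(+0.7) for _ in range(20)]
    depression = [cell.apply_pulse(-0.7) for _ in range(20)]
    print(f"after 20 positive pulses: {potentiation[-1]:.3e} S")
    print(f"after 20 negative pulses: {depression[-1]:.3e} S")
```

The saturating step, where each pulse covers a fixed fraction of the remaining range, is one common way to model the nonlinear potentiation and depression curves of analog synaptic devices. A unipolar cell, by contrast, would need same-polarity pulses of different amplitude or width to move in each direction, which is where the extra peripheral circuitry comes from.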

Beyond raw endurance, the titanium‑constrained heterostructure exhibits synaptic plasticity. Programming pulses can incrementally increase (potentiation) or decrease (depression) its conductance, and the device responds to the relative timing of voltage spikes, mimicking spike‑timing‑dependent plasticity (STDP). These behaviors are essential for on‑chip learning algorithms whose weight updates mirror those of biological synapses. Experimental pulse trains produced repeatable conductance modulation, and when the measured conductance states were fed into a simulated neural network, the system classified handwritten‑digit images from the Modified NIST (MNIST) dataset with 88% accuracy, a strong result for a prototype characterized at the single‑device level.
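
The two ingredients described above, timing‑dependent weight updates and a finite set of measured conductance states, can be illustrated with the textbook pair‑based exponential STDP rule plus a helper that snaps each updated weight to the nearest available device level. This is a minimal sketch under stated assumptions: the amplitudes, time constants, level list, and the helper names `stdp_delta_w` and `snap_to_levels` are hypothetical, and the study's measured STDP curve and network‑mapping procedure may differ.

```python
import math
from bisect import bisect_left

# Hypothetical STDP parameters, chosen for illustration only.
A_PLUS = 0.05     # assumed maximum potentiation step (normalized weight units)
A_MINUS = 0.055   # assumed maximum depression step
TAU_PLUS = 20.0   # assumed potentiation time constant (ms)
TAU_MINUS = 20.0  # assumed depression time constant (ms)


def stdp_delta_w(dt_ms: float) -> float:
    """Pair-based exponential STDP, with dt_ms = t_post - t_pre."""
    if dt_ms > 0:
        # Pre-synaptic spike precedes post-synaptic spike: potentiation
        return A_PLUS * math.exp(-dt_ms / TAU_PLUS)
    if dt_ms < 0:
        # Post-synaptic spike precedes pre-synaptic spike: depression
        return -A_MINUS * math.exp(dt_ms / TAU_MINUS)
    return 0.0


def snap_to_levels(w: float, levels: list[float]) -> float:
    """Return the nearest available level; `levels` must be sorted ascending."""
    i = bisect_left(levels, w)
    if i == 0:
        return levels[0]
    if i == len(levels):
        return levels[-1]
    lo, hi = levels[i - 1], levels[i]
    return lo if w - lo <= hi - w else hi


if __name__ == "__main__":
    # Hypothetical normalized levels with saturating spacing, loosely
    # resembling a potentiation curve; not measured data.
    levels = [0.0, 0.30, 0.50, 0.65, 0.77, 0.86, 0.93, 1.0]
    w = 0.50
    for dt in (5.0, 5.0, 5.0, -15.0):
        w_ideal = min(1.0, max(0.0, w + stdp_delta_w(dt)))
        w = snap_to_levels(w_ideal, levels)
        print(f"dt={dt:+.0f} ms -> ideal {w_ideal:.3f}, stored {w:.2f}")
```

In this sketch an update smaller than half the gap to the neighboring level is lost after snapping, which is one reason the linearity and number of distinguishable conductance states matter for the accuracy a simulated network can reach.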

The implications for the broader AI hardware ecosystem are significant. A bipolar PCRAM that combines non‑volatile storage, high endurance, and intrinsic learning capabilities reduces the need for separate memory and compute layers, paving the way for densely packed neuromorphic processors. Moreover, the titanium interlayer approach is compatible with existing CMOS back‑end‑of‑line processes, suggesting a clear path toward mass production. As edge AI demands grow, such energy‑efficient, scalable memory‑compute hybrids could become foundational building blocks for next‑generation intelligent devices.
