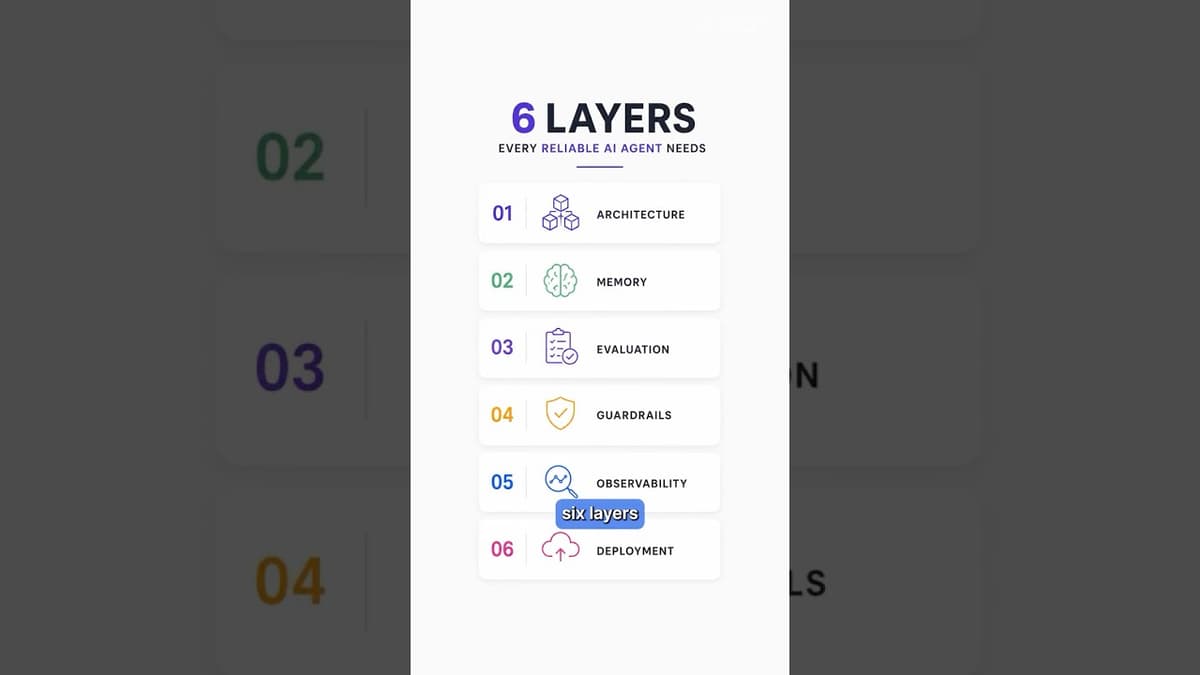

AI Agents Break in Production - Fix It With These 6 Layers

The video warns that AI agents that work in demos often collapse once deployed, and it outlines a six‑layer framework for production‑grade reliability. First, a proper architecture cycles through perception, reasoning, action, and observation, with frameworks like LangGraph providing memory‑aware routing. Second, memory must span short‑term context and long‑term knowledge held in vector stores such as FAISS. Third, systematic evaluations that test for hallucinations, retrieval quality, and relevance can be automated with tools like DeepEval to halt faulty releases. Fourth, guardrails (e.g., Llama Guard, NeMo Guardrails, PII redaction) enforce safety and compliance. Fifth, observability platforms such as LangSmith record every tool call and decision point, enabling rapid debugging. Finally, deployment demands robust APIs, secret management, graceful fallbacks, and transparent reasoning rather than a static chatbot screenshot.

The presenter cites concrete examples: LangGraph’s multi‑step decision loops, FAISS for long‑term vector storage, DeepEval’s performance‑based fail‑over, and LangSmith’s trace visualizations. He also highlights an eight‑hour hands‑on workshop at the DataHack Summit 2026 in Bengaluru, where participants will build and deploy such agents using open‑source stacks.

For businesses, adopting this layered approach turns AI agents from fragile prototypes into dependable services, reducing downtime, compliance risk, and hidden costs while enabling scalable automation across products.
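To make the loop concrete, here is a minimal sketch of the perception, reasoning, action, and observation cycle, with a trace of every step and a graceful fallback when a tool fails. It is an illustration only: it does not use the LangGraph or LangSmith APIs, and every name in it (`Trace`, `reason`, `run_agent`, `FALLBACK`, the `weather` tool) is hypothetical. The keyword-based `reason` function stands in for a real model call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical fallback message returned instead of crashing.
FALLBACK = "Sorry, I could not complete that request."


@dataclass
class Trace:
    """Records each step so a failed run can be inspected afterwards."""
    steps: List[dict] = field(default_factory=list)

    def log(self, stage: str, detail: str) -> None:
        self.steps.append({"stage": stage, "detail": detail})


def reason(observation: str) -> str:
    """Stand-in for an LLM call: picks the next action by simple rules."""
    if observation.startswith("RESULT:"):
        return "finish"  # a tool result is already available
    if "weather" in observation.lower():
        return "weather"
    return "finish"


def run_agent(
    query: str,
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 3,
) -> Tuple[str, Trace]:
    trace = Trace()
    observation = query  # perception: the raw user input
    trace.log("perceive", observation)
    for _ in range(max_steps):
        action = reason(observation)  # reasoning
        trace.log("reason", action)
        if action == "finish":
            return observation, trace
        try:
            result = tools[action](query)  # action: call the chosen tool
        except Exception as exc:
            # Graceful fallback: record the failure, return a safe answer.
            trace.log("error", f"{action}: {exc}")
            return FALLBACK, trace
        trace.log("act", action)
        observation = f"RESULT: {result}"  # observation feeds the next step
        trace.log("observe", observation)
    trace.log("error", "step budget exhausted")
    return FALLBACK, trace


if __name__ == "__main__":
    answer, trace = run_agent("What is the weather?", {"weather": lambda q: "sunny"})
    print(answer)
    for step in trace.steps:
        print(step)
```

The step budget (`max_steps`) and the `try`/`except` around the tool call are the two places where this sketch refuses to fail silently. In a real system the trace would be shipped to an observability backend, not kept in memory.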

Pinecone vs Chroma vs Weaviate: Which Vector DB Should You Ship to Production?

The video dissects three leading vector databases—Pinecone, Chroma, and Weaviate—to help engineers decide which to ship to production for Retrieval‑Augmented Generation (RAG) workloads. It explains that beyond storing high‑dimensional vectors, the critical differentiators are the ANN index (usually HNSW), the filtering...

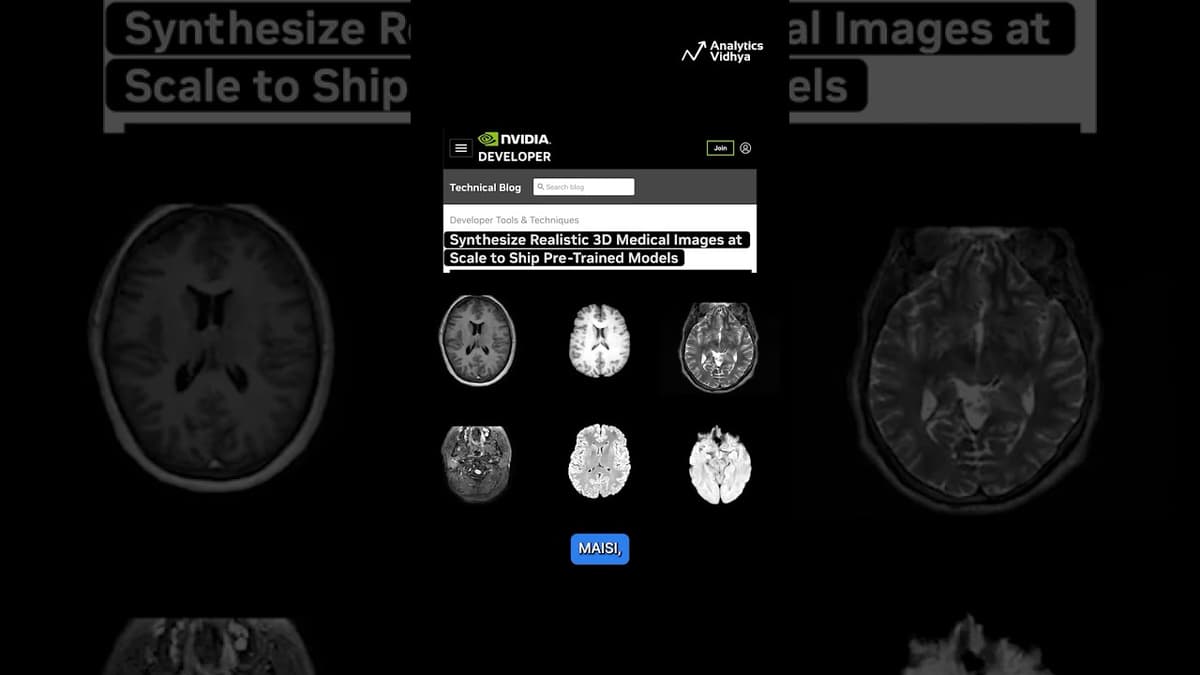

NVIDIA Just Solved the Biggest Data Problem in Medical AI

NVIDIA announced a breakthrough in medical artificial intelligence by unveiling MedSynth, a system that generates fully synthetic yet highly realistic three‑dimensional medical scans. The platform creates CT, MRI, and specialized brain MRI images from scratch, embedding pixel‑by‑pixel anatomical labels that...

GitHub Just Launched a Free AI Agent Certification

GitHub announced a free certification titled “Developing in Agentic AI Systems,” aimed at teaching developers how to incorporate AI agents into real‑world software development workflows. The curriculum covers practical skills such as configuring agents to review pull requests, flag code defects,...

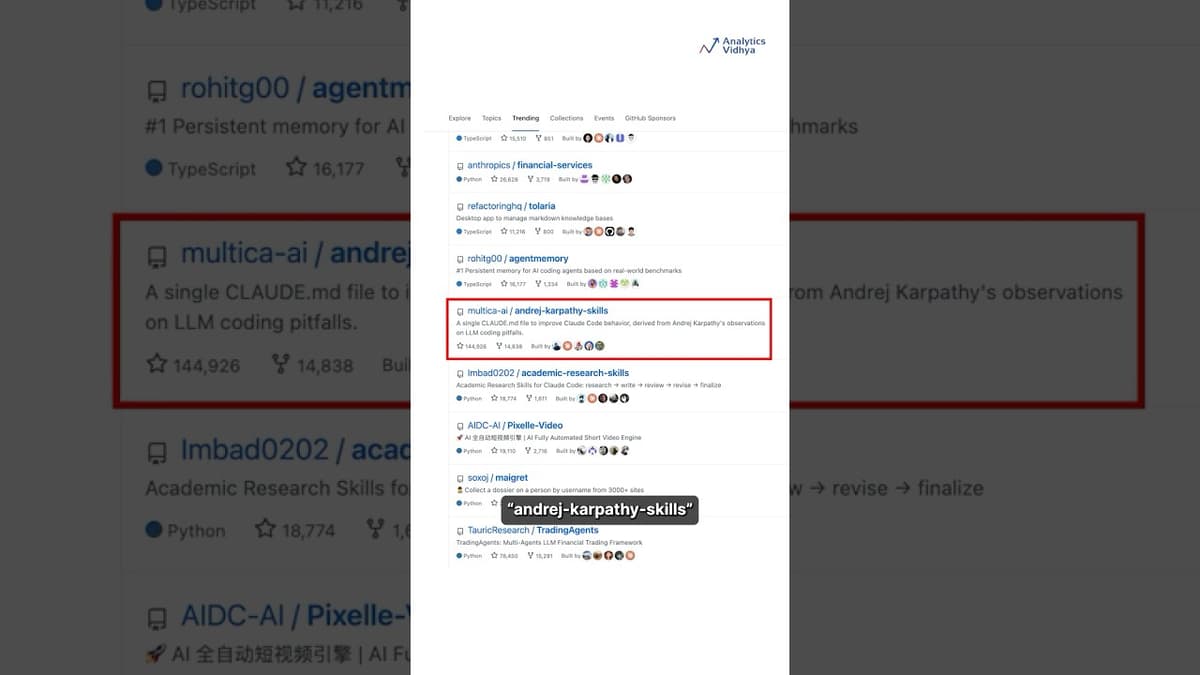

Andrej Karpathy's AI Coding Setup Just Went Viral

Andrej Karpathy, former OpenAI founding member and ex‑Tesla AI director, has sparked a wave of interest with his new AI‑coding workflow, now hosted in a public GitHub repository called “andrej‑karpathy‑skills.” The repo packages a system‑prompt strategy that treats large‑language‑model assistants...

What Is FastAPI & Why It's Perfect for AI Backends

The video introduces FastAPI as the modern Python framework tailored for generative‑AI backends, explaining its role as the thin layer that exposes, serves, and hardens AI logic for production use. It highlights FastAPI’s foundation on Starlette for routing and async...

Qwen 3.7 Max: Why Claude Should Start Worrying

The video announces Qwen 3.7 Max as the first AI model to rival Anthropic’s Claude across the full spectrum of professional workloads. In developer tests, Qwen 3.7 Max scores higher than Claude on SWE Pro, SWE Multilingual Terminal, and Cycode benchmarks, and it exceeds Claude in MCP...

Scaling AI Employees: Troubleshooting, Optimization & AIOps

The video walks executives through moving an AI employee from a prototype to a production‑grade system, emphasizing that reliability, predictability, and scalability are essential once the basic “functional” bot is built. It introduces a diagnostic framework built on five independent failure...

X Revealed Their Secret Algorithm on GitHub #algorithm #twitter #tech

The video announces that xAI has published the full source code for the algorithm that curates the X (formerly Twitter) home feed, making the previously proprietary recommendation engine publicly available on GitHub. The system draws content from two distinct sources –...

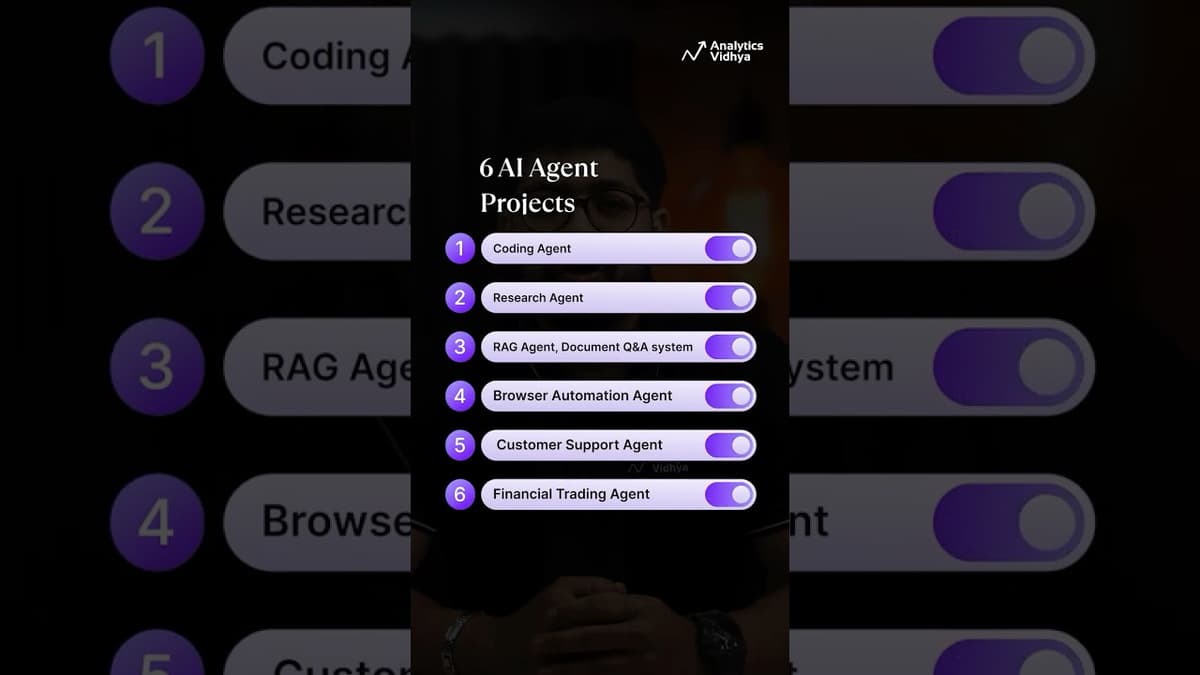

6 AI Agents Projects Every AI Engineer Must Build

The video targets aspiring AI engineers, urging them to move beyond tutorials and build six concrete AI agent projects that showcase end‑to‑end capabilities. It walks through each agent – a coding assistant that navigates repositories, spots bugs and runs tests (mirroring...

Kimi WebBridge: The Local AI Agent That Keeps Your Data Private

The video introduces Kimi WebBridge, a Chrome extension from Moonshot AI that gives AI agents direct access to a user's browser while keeping all data on the local machine. Unlike most AI‑powered web tools that route clicks, form entries, and...

Hermes Agent Full Tutorial: Install, Build and Run an AI Agent With Memory

The video introduces Hermes Agent, an open‑source AI agent runtime from Nous Research that upgrades the OpenClaw experience with built‑in, persistent memory and a self‑improving loop. Unlike typical prompt wrappers, Hermes offers a CLI, API server, and messaging...

Harness Engineering: The Most Important New Skill in Enterprise AI

The video introduces “harness engineering” as the emerging discipline that underpins reliable enterprise AI agents. It likens the harness to an operating system that surrounds a model, controlling how the agent interacts with tools, data, and business processes. The concept...

Unsloth Joins the PyTorch Ecosystem: A Game-Changer for LLM Fine-Tuning and Training 🚀

Unsloth, an open‑source library for fine‑tuning large language models, has officially become part of the PyTorch ecosystem, joining heavyweight projects such as Hugging Face Transformers, vLLM and SGLang. The integration brings Unsloth’s custom Triton kernel, which accelerates training by 2.8× and...

How to Become an AI Agent Developer in 2026: A Step-by-Step Roadmap

In 2026, data‑science roles are shifting from writing SQL/Python scripts to designing AI agents that autonomously clean data, run analyses, and execute workflows. The video outlines a six‑phase roadmap for anyone—from novices to seasoned analysts—to transition into this emerging “AI...