Microsoft Fara Tutorial: Run a Browser-Use Agent in Google Colab with a Mock OpenAI-Compatible Endpoint

The MarkTechPost tutorial walks users through installing Microsoft Fara in Google Colab and running a browser‑use agent via a lightweight mock OpenAI‑compatible endpoint. It demonstrates cloning the repo, installing dependencies, configuring Playwright Firefox, and executing the agent loop without a GPU‑intensive Fara‑7B model. The mock server returns valid browser actions, allowing end‑to‑end testing before swapping to a real endpoint. Detailed instructions show how to switch the notebook to Azure Foundry, vLLM, LM Studio, or Ollama for production deployments.
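To make the mock-endpoint idea concrete, here is a minimal standard-library sketch of a server that answers `POST /v1/chat/completions` with a response shaped like OpenAI's chat-completion object. The `MOCK_ACTION` payload, the port, and the function names are illustrative assumptions. Fara's actual action format and the tutorial's own mock implementation may differ.

```python
import json
import time
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical placeholder action; NOT Fara's real action schema.
MOCK_ACTION = {"action": "visit_url", "url": "https://www.bing.com"}


def build_completion(model: str) -> dict:
    """Return a dict shaped like an OpenAI /v1/chat/completions reply."""
    return {
        "id": "chatcmpl-mock-0",
        "object": "chat.completion",
        "created": int(time.time()),
        "model": model,
        "choices": [
            {
                "index": 0,
                # The "model output" is the serialized placeholder action
                "message": {"role": "assistant", "content": json.dumps(MOCK_ACTION)},
                "finish_reason": "stop",
            }
        ],
        "usage": {"prompt_tokens": 0, "completion_tokens": 0, "total_tokens": 0},
    }


class MockChatHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Only the chat-completions route is implemented
        if self.path.rstrip("/") != "/v1/chat/completions":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        request = json.loads(self.rfile.read(length) or b"{}")
        body = json.dumps(build_completion(request.get("model", "mock-fara"))).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep notebook output quiet


def make_server(port: int = 5000) -> HTTPServer:
    """Create (but do not start) the mock server; call serve_forever() to run it."""
    return HTTPServer(("127.0.0.1", port), MockChatHandler)
```

In a notebook, the server could run in the background via `threading.Thread(target=make_server(5000).serve_forever, daemon=True).start()`, after which an OpenAI-compatible client would be pointed at `http://127.0.0.1:5000/v1`. How Fara's own configuration accepts that base URL is not assumed here.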

15 Best Vibe Coding Tools in 2026 Compared: Pricing, Features, and Best Fit

Vibe coding, a term coined by Andrej Karpathy, lets developers describe software in plain language while an AI agent generates the code. The approach promises faster prototyping, lower costs, and reduced reliance on large engineering teams. Fifteen tools ranging from full‑stack platforms...

Building a Semantic Search Engine and Open-Status Classifier over the ResearchMath-14k Dataset

The MarkTechPost tutorial walks through a full pipeline for the ResearchMath-14k dataset, a collection of roughly 14,100 arXiv‑sourced mathematics problems. After cleaning and visualizing open‑status and field distributions, the author extracts field‑specific TF‑IDF keywords, generates sentence‑transformer embeddings, and reduces them...
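Field-specific TF-IDF keywords can be sketched without scikit-learn by treating all problems from one field as a single pseudo-document, so that terms concentrated in few fields score highest. This is an assumed approach, using a hypothetical `(field, text)` input format and a smoothed IDF, and is not necessarily what the tutorial does.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase alphabetic tokens only (no stop-word removal in this sketch)."""
    return re.findall(r"[a-z]+", text.lower())


def field_keywords(problems, top_n=3):
    """problems: list of (field, text) pairs. Returns {field: top TF-IDF terms}."""
    # Concatenate each field's problems into one term-count "document"
    docs = {}
    for field, text in problems:
        docs.setdefault(field, Counter()).update(tokenize(text))
    n_docs = len(docs)
    # Document frequency: in how many fields does each term appear?
    df = Counter()
    for counts in docs.values():
        df.update(counts.keys())
    result = {}
    for field, counts in docs.items():
        total = sum(counts.values())
        scores = {
            # term frequency * smoothed IDF, log((1 + n) / (1 + df)) + 1
            term: (c / total) * (math.log((1 + n_docs) / (1 + df[term])) + 1)
            for term, c in counts.items()
        }
        # Highest score first; ties broken alphabetically for determinism
        result[field] = sorted(scores, key=lambda t: (-scores[t], t))[:top_n]
    return result
```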

Design a High-Precision Retrieve-and-Rerank Pipeline with ZeroEntropy Zerank-2 Reranker

The tutorial demonstrates how to integrate the zeroentropy/zerank-2-reranker, a 4‑billion‑parameter Qwen‑3 cross‑encoder, into a two‑stage retrieve‑and‑rerank pipeline. A fast bi‑encoder first fetches candidate passages, and zerank‑2 then re‑orders them, delivering higher precision as measured by NDCG@10. The guide walks through pairwise...
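The two-stage shape and the NDCG@10 metric can be sketched in plain Python. Here a token-overlap function stands in for the bi-encoder and any callable `slow_score` stands in for zerank-2; no model is loaded, and the function names are hypothetical. NDCG uses linear gain (some benchmarks use 2^rel − 1 instead).

```python
import math


def token_overlap(query, passage):
    """Cheap stand-in for a bi-encoder similarity score."""
    return len(set(query.lower().split()) & set(passage.lower().split()))


def retrieve_then_rerank(query, passages, fast_score, slow_score, k_retrieve=50, k_final=10):
    """Return passage indices: top-k_retrieve by fast_score, re-ordered by slow_score."""
    # Stage 1: score every passage cheaply and keep the best candidates
    candidates = sorted(
        range(len(passages)), key=lambda i: fast_score(query, passages[i]), reverse=True
    )[:k_retrieve]
    # Stage 2: run the expensive (query, passage) scorer only on the candidates
    reranked = sorted(candidates, key=lambda i: slow_score(query, passages[i]), reverse=True)
    return reranked[:k_final]


def ndcg_at_k(ranked_ids, relevance, k=10):
    """NDCG@k with linear gain; relevance maps passage id -> graded label (missing = 0)."""
    def dcg(gains):
        # rank is 0-based, so log2(rank + 2) equals log2(1-based position + 1)
        return sum(g / math.log2(rank + 2) for rank, g in enumerate(gains))

    actual = dcg([relevance.get(i, 0) for i in ranked_ids[:k]])
    ideal = dcg(sorted(relevance.values(), reverse=True)[:k])
    return actual / ideal if ideal > 0 else 0.0
```

Keeping `k_retrieve` small bounds how many expensive cross-encoder calls each query costs, which is the usual reason for splitting retrieval and reranking.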

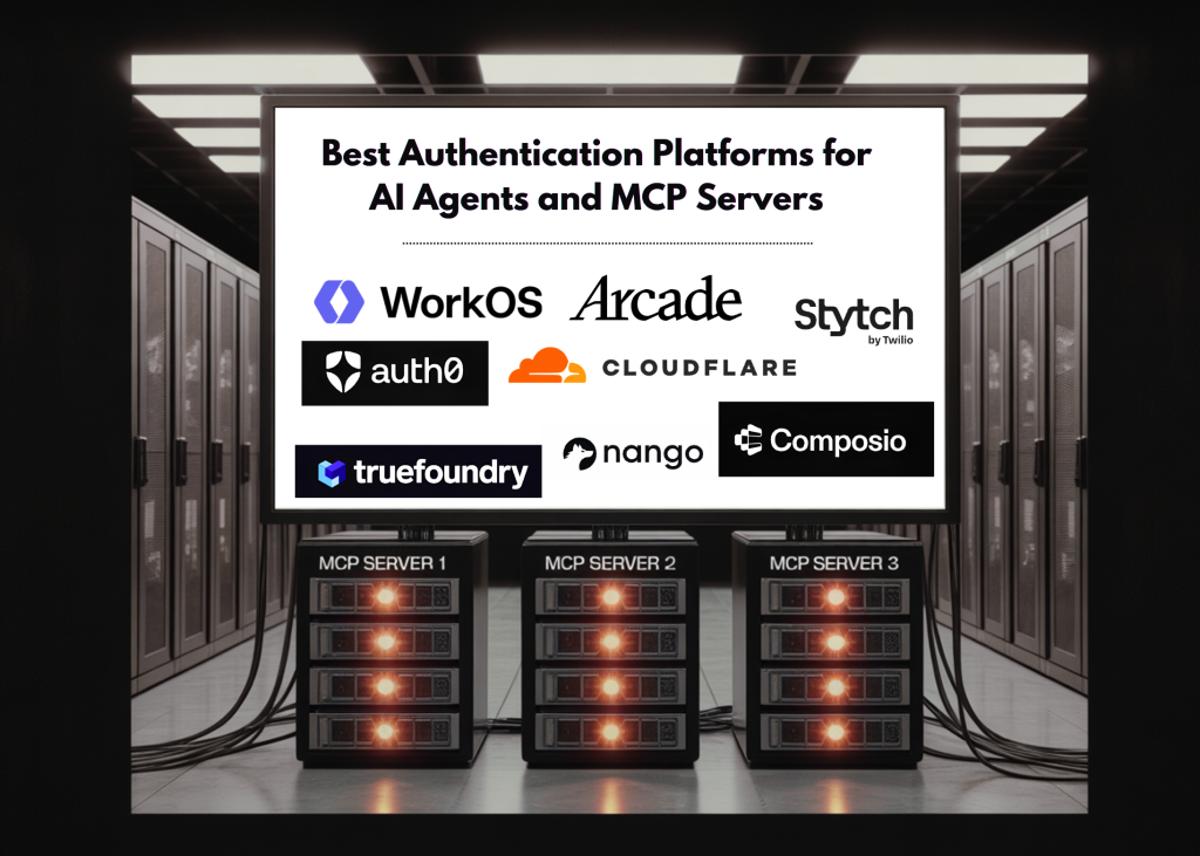

Best Authentication Platforms for AI Agents and MCP Servers in 2026

The Model Context Protocol (MCP) has vaulted from an Anthropic experiment to an industry‑wide standard, with adoption by OpenAI and Microsoft and millions of SDK downloads by late 2025. Gartner now predicts that 40% of enterprise applications will embed task‑specific AI agents by...

Build a Complete Langfuse Observability and Evaluation Pipeline for Tracing, Prompt Management, Scoring, and Experiments

The tutorial walks through building a full‑stack Langfuse pipeline that covers tracing, prompt management, scoring, dataset creation, and experiment execution for LLM applications. It demonstrates both real OpenAI model usage and a deterministic mock LLM, ensuring the workflow runs without...

StepFun Releases StepAudio 2.5 Realtime: An End-to-End Voice Model with Roleplay-Specific RLHF and Paralinguistic Comprehension

StepFun, a Shanghai AI lab, launched StepAudio 2.5 Realtime, an end‑to‑end real‑time speech large language model that supports Chinese and English. The model fuses speech recognition, reasoning and synthesis in a single network, delivering audio‑in/audio‑out via a WebSocket API. Its...

A Coding Implementation to Compress and Benchmark Instruction-Tuned LLMs with FP8, GPTQ, and SmoothQuant Quantization Using Llmcompressor

The MarkTechPost tutorial demonstrates how to apply post‑training quantization to the instruction‑tuned Qwen2.5‑0.5B‑Instruct model using the llmcompressor library. Starting from an FP16 baseline, it evaluates three PTQ recipes—FP8 dynamic, GPTQ W4A16, and SmoothQuant combined with GPTQ W8A8—by measuring disk size, generation latency,...
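The SmoothQuant half of the third recipe rests on one published idea: move activation outliers into the weights using a per-input-channel scale s_j = max|X_j|^α / max|W_j|^(1−α), dividing activations by s and multiplying weight rows by s so that the product XW is unchanged. The plain-Python sketch below shows only that rescaling on toy matrices. It is not llmcompressor's API, and the subsequent quantization step is omitted.

```python
def smooth_factors(X, W, alpha=0.5, eps=1e-8):
    """Per-input-channel scales s_j = max|X_j|^alpha / max|W_j|^(1 - alpha).

    X: activations as [tokens][in_channels]; W: weights as [in_channels][out_channels],
    so the layer computes Y = X @ W. eps guards against all-zero channels.
    """
    scales = []
    for j in range(len(W)):
        act_max = max(max(abs(row[j]) for row in X), eps)
        w_max = max(max(abs(v) for v in W[j]), eps)
        scales.append(act_max ** alpha / w_max ** (1 - alpha))
    return scales


def apply_smoothing(X, W, s):
    """X' = X diag(s)^-1 and W' = diag(s) W, so X' @ W' == X @ W."""
    Xs = [[x / s[j] for j, x in enumerate(row)] for row in X]
    Ws = [[w * s[j] for w in W[j]] for j in range(len(W))]
    return Xs, Ws


def matmul(A, B):
    """Naive matrix product for small list-of-lists matrices."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]
```

With α = 0.5, each channel's activation maximum and weight-row maximum both become sqrt(max|X_j| · max|W_j|), so a 100-versus-2 activation outlier disappears from X' while the layer output stays the same.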

How to Build an MCP-Style Routed AI Agent System with Dynamic Tool Exposure, Planning, Execution, and Context Injection

The tutorial walks through building a Model Context Protocol (MCP)‑style routed AI agent from scratch, integrating tool discovery, hybrid routing, planning, execution, and context injection. It creates a modular tool server exposing web search, safe Python execution, dataset loading, and...

Google DeepMind Introduces an AI-Enabled Mouse Pointer Powered by Gemini That Captures Visual and Semantic Context Around the Cursor

Google DeepMind unveiled an experimental AI‑enabled mouse pointer powered by Gemini that captures visual and semantic context around the cursor, letting users interact with AI through pointing and speech. Live demos in Google AI Studio showcase image editing and map...

Build a Hybrid-Memory Autonomous Agent with Modular Architecture and Tool Dispatch Using OpenAI

The article walks through building a hybrid‑memory autonomous agent that blends semantic vector search with BM25 keyword retrieval and a modular tool‑dispatch loop. It defines abstract interfaces for memory, LLM providers, and tools, then implements a HybridMemory class that fuses...
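The summary cuts off before naming the fusion rule, so the sketch below assumes one common choice: score stored texts with BM25 (k1 = 1.5, b = 0.75), then merge that ranking with a vector-search ranking via reciprocal rank fusion (k = 60). The vector ranking is passed in as a plain list rather than computed from embeddings, and all function names are hypothetical.

```python
import math
from collections import Counter


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Okapi BM25 score of each doc for the query (whitespace tokenization)."""
    tokenized = [d.lower().split() for d in docs]
    n = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n
    df = Counter(term for t in tokenized for term in set(t))
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            # Term-frequency saturation with document-length normalization
            denom = tf[term] + k1 * (1 - b + b * len(tokens) / avgdl)
            score += idf * tf[term] * (k1 + 1) / denom
        scores.append(score)
    return scores


def rank(scores):
    """Indices sorted by descending score."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)


def reciprocal_rank_fusion(rankings, k=60):
    """Merge several rankings: each doc earns 1 / (k + 1-based position) per list."""
    fused = Counter()
    for ranking in rankings:
        for position, doc_id in enumerate(ranking):
            fused[doc_id] += 1.0 / (k + position + 1)
    return [doc_id for doc_id, _ in fused.most_common()]
```

Rank fusion sidesteps the fact that BM25 scores and cosine similarities live on different scales, which is one reason it is a popular default for hybrid retrieval.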

Tilde Research Introduces Aurora: A Leverage-Aware Optimizer That Fixes a Hidden Neuron Death Problem in Muon

Tilde Research unveiled Aurora, a new optimizer that corrects a hidden flaw in the popular Muon optimizer, one that silently deactivates a quarter of MLP neurons in tall weight matrices. By enforcing both left‑semi‑orthogonality and uniform row norms, Aurora eliminates the...

A Coding Implementation of Portfolio Optimization with Skfolio for Building, Testing, Tuning, and Comparing Modern Investment Strategies

The MarkTechPost tutorial walks readers through a full‑stack portfolio optimization workflow using the open‑source skfolio library, which mirrors scikit‑learn’s API. Starting with S&P 500 price data, it builds baseline equal‑weight and inverse‑volatility portfolios, then explores mean‑variance, risk‑parity, hierarchical clustering, robust covariance,...
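The two baselines reduce to simple formulas: equal weighting gives each of N assets 1/N, and inverse-volatility weighting gives each asset a weight proportional to 1/σ_i, normalized to sum to one. This standard-library sketch estimates σ_i as the sample standard deviation of returns; skfolio's own estimators may use a different volatility estimate, and the function names here are hypothetical.

```python
import statistics


def equal_weight(n_assets):
    """1/N allocation."""
    return [1.0 / n_assets] * n_assets


def inverse_volatility(returns_by_asset):
    """returns_by_asset: one return series per asset. Weights ~ 1 / stdev, summing to 1."""
    inv_vol = [1.0 / statistics.stdev(r) for r in returns_by_asset]
    total = sum(inv_vol)
    return [v / total for v in inv_vol]


def portfolio_returns(returns_by_asset, weights):
    """Per-period portfolio return under fixed weights (no rebalancing costs)."""
    n_periods = len(returns_by_asset[0])
    return [
        sum(w * r[t] for w, r in zip(weights, returns_by_asset)) for t in range(n_periods)
    ]
```

An asset twice as volatile as another receives half its weight, which is why inverse volatility is a common low-effort risk-balancing benchmark against the optimized portfolios.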

How to Build a Technical Analysis and Backtesting Workflow with Pandas-Ta-Classic, Strategy Signals, and Performance Metrics

The tutorial walks through building a full technical‑analysis pipeline with the open‑source pandas‑ta‑classic library, yfinance data, and matplotlib visualizations. It shows how to compute popular indicators—SMA, EMA, RSI, MACD, Bollinger Bands—and to craft a custom multi‑indicator strategy that blends daily...
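For reference, the standard definitions behind three of the listed indicators fit in a few lines of plain Python: SMA as a rolling mean, EMA with smoothing factor 2/(n+1) seeded by the first SMA, and RSI with Wilder's smoothing. These are textbook formulas, not pandas‑ta‑classic's implementation; the library's seeding and smoothing conventions may yield slightly different values in the first bars.

```python
def sma(values, period):
    """Simple moving average; first output aligns with values[period - 1]."""
    return [sum(values[i - period + 1 : i + 1]) / period for i in range(period - 1, len(values))]


def ema(values, period):
    """Exponential moving average, alpha = 2 / (period + 1), seeded with the first SMA."""
    alpha = 2 / (period + 1)
    out = [sum(values[:period]) / period]
    for v in values[period:]:
        out.append(alpha * v + (1 - alpha) * out[-1])
    return out


def rsi(values, period=14):
    """Wilder's RSI; one value per bar from values[period] onward (needs len > period)."""
    deltas = [b - a for a, b in zip(values, values[1:])]
    gains = [max(d, 0.0) for d in deltas]
    losses = [max(-d, 0.0) for d in deltas]
    # Seed with plain averages over the first `period` price changes
    avg_gain = sum(gains[:period]) / period
    avg_loss = sum(losses[:period]) / period
    out = []
    for i in range(period, len(deltas) + 1):
        if i > period:
            # Wilder smoothing: new_avg = (prev_avg * (period - 1) + current) / period
            avg_gain = (avg_gain * (period - 1) + gains[i - 1]) / period
            avg_loss = (avg_loss * (period - 1) + losses[i - 1]) / period
        # No losses at all is treated as maximal strength (RSI = 100)
        out.append(100.0 if avg_loss == 0 else 100 - 100 / (1 + avg_gain / avg_loss))
    return out
```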

Meta and Stanford Researchers Propose Fast Byte Latent Transformer That Reduces Inference Memory Bandwidth by Over 50% Without Tokenization

Researchers from Meta, Stanford and the University of Washington introduced three techniques—BLT Diffusion (BLT‑D), BLT Self‑Speculation (BLT‑S) and BLT Diffusion+Verification (BLT‑DV)—to accelerate the Byte Latent Transformer (BLT), a token‑free language model. By generating multiple bytes per decoder pass, the methods...