A Coding Implementation to Build Agent-Native Memory Infrastructure with Memori for Persistent Multi-User and Multi-Session LLM Applications

The tutorial demonstrates how Memori acts as an agent‑native memory layer for LLM applications, integrating both synchronous and asynchronous OpenAI clients in a Google Colab notebook. It walks through basic fact persistence, multi‑tenant isolation between users, and persona‑specific memories using entity_id and process_id attributes. Session management groups related turns, while streaming and async calls show Memori’s robustness in real‑time scenarios. The end‑to‑end example builds a mini support bot that retains user details across separate conversations.

How to Build a Single-Cell RNA-Seq Analysis Pipeline with Scanpy for PBMC Clustering, Annotation, and Trajectory Discovery

The tutorial walks through a full Scanpy pipeline applied to the PBMC‑3k benchmark, covering quality control, doublet removal, normalization, variable‑gene selection, dimensionality reduction, clustering, and cell‑type annotation. It then extends the analysis with PAGA‑based trajectory inference, diffusion pseudotime, and a...

LightSeek Foundation Releases TokenSpeed, an Open-Source LLM Inference Engine Targeting TensorRT-LLM-Level Performance for Agentic Workloads

The LightSeek Foundation has launched TokenSpeed, an MIT‑licensed open‑source LLM inference engine built for agentic coding workloads. Its architecture combines a compiler‑backed SPMD modeling layer, a C++ finite‑state‑machine scheduler that enforces KV‑cache safety, and a pluggable kernel system that works...

OpenAI Introduces MRC (Multipath Reliable Connection): A New Open Networking Protocol for Large-Scale AI Supercomputer Training Clusters

OpenAI unveiled MRC (Multipath Reliable Connection), a new open networking protocol designed to eliminate congestion and accelerate failure recovery in massive AI training clusters. Developed with AMD, Broadcom, Intel, Microsoft and NVIDIA, MRC extends RoCE with SRv6‑based source routing and...

A Groq-Powered Agentic Research Assistant with LangGraph, Tool Calling, Sub-Agents, and Agentic Memory: Lets Built It

The tutorial demonstrates how to build a Groq‑powered, agentic research assistant using LangGraph and LangChain. By configuring Groq’s free OpenAI‑compatible endpoint, the workflow runs the 70B Llama‑3.3‑versatile model for multi‑step tool reasoning. It integrates web search, file handling, Python execution,...

When Claude Hallucinates in Court: The Latham & Watkins Incident and What It Means for Attorney Liability

In May 2025 Latham & Watkins filed a declaration in *Concord Music Group v. Anthropic* that contained AI‑generated citation errors. The firm used Anthropic's Claude to format a real source, but the model supplied the wrong title and authors, a...

Inworld AI Launches Realtime TTS-2: A Closed-Loop Voice Model That Adapts to How You Actually Talk

Inworld AI unveiled Realtime TTS‑2, a research‑preview voice model that ingests the actual audio of a conversation rather than just text transcripts. The closed‑loop architecture lets the system detect tone, pacing and emotion from prior turns, enabling more natural, context‑aware...

Xiaomi Releases MiMo-V2.5-Pro and MiMo-V2.5: Matching Frontier Model Benchmarks at Significantly Lower Token Cost

Xiaomi’s MiMo team launched two new agentic AI models, MiMo‑V2.5‑Pro and MiMo‑V2.5, available via API with competitive pricing. The Pro version hits frontier‑level scores on SWE‑bench Pro (57.2), Claw‑Eval (63.8) and τ3‑Bench (72.9), matching closed‑source leaders while consuming 40‑60% fewer...

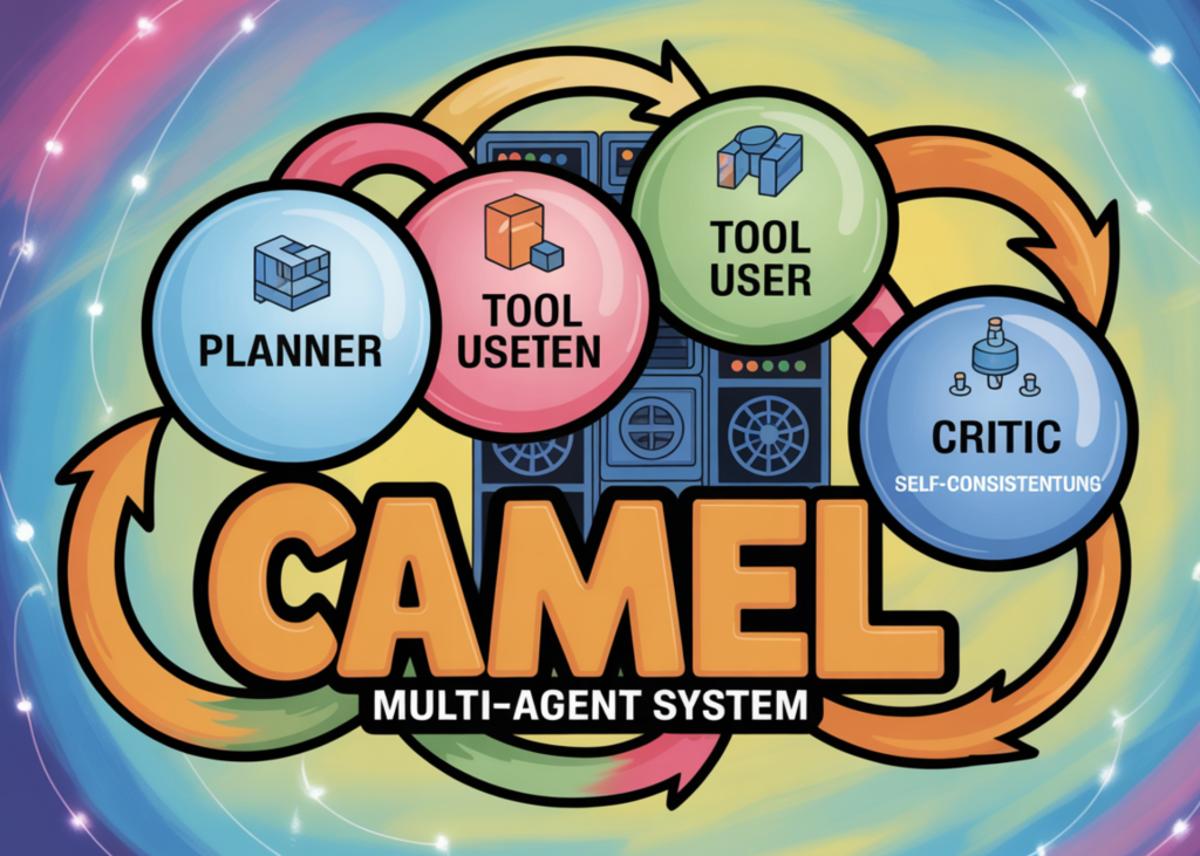

How to Design a Production-Grade CAMEL Multi-Agent System with Planning, Tool Use, Self-Consistency, and Critique-Driven Refinement

The MarkTechPost tutorial walks readers through building a production‑grade multi‑agent system with the CAMEL framework. It assembles five specialized agents—planner, researcher, writer, critic, and rewriter—each constrained by Pydantic‑validated JSON schemas. The pipeline adds tool‑driven web searches, self‑consistency sampling of multiple...

Next Leap to Harness Engineering: JiuwenClaw Pioneers ‘Coordination Engineering’

OpenJiuwen released JiuwenClaw 2.0, introducing AgentTeam—a multi‑agent collaboration layer that the community dubs “Coordination Engineering.” In tests the system autonomously assembled a ten‑agent team to produce a 200‑page technical PowerPoint in under 20 minutes, with no human intervention. AgentTeam features a Leader...

Photon Releases Spectrum: An Open-Source TypeScript Framework that Deploys AI Agents Directly to iMessage, WhatsApp, and Telegram

Photon unveiled Spectrum, an open‑source TypeScript SDK and managed cloud platform that lets AI agents run directly inside iMessage, WhatsApp, Telegram, Slack, Discord, Instagram and other popular messengers. The framework abstracts each service’s API, so developers write agent logic once...

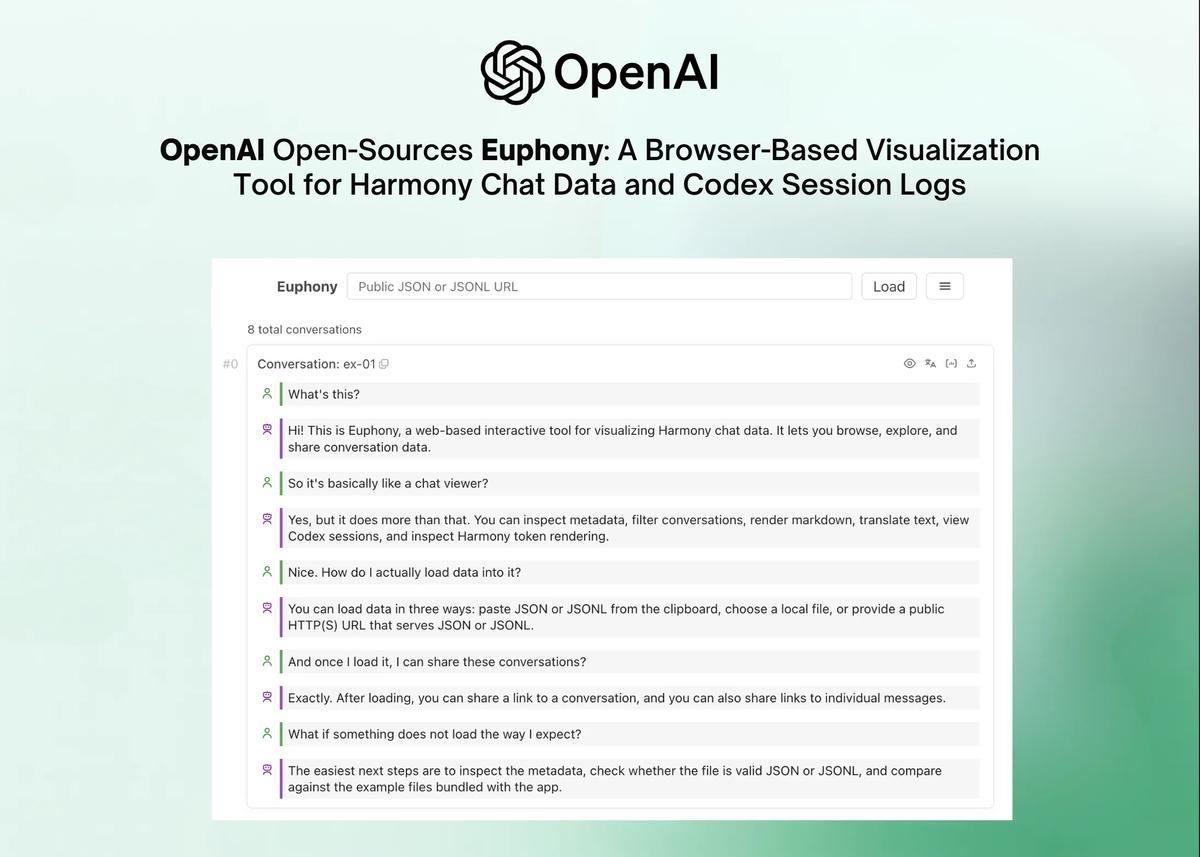

OpenAI Open-Sources Euphony: A Browser-Based Visualization Tool for Harmony Chat Data and Codex Session Logs

OpenAI has open‑sourced Euphony, a browser‑based tool that transforms raw Harmony chat JSON and Codex session logs into interactive conversation timelines. The web app auto‑detects four data structures, rendering them with metadata panels, JMESPath filtering, focus mode, grid view, and...

Hugging Face Releases Ml-Intern: An Open-Source AI Agent that Automates the LLM Post-Training Workflow

Hugging Face unveiled ml‑intern, an open‑source AI agent that automates the entire post‑training workflow for large language models. Built on the smolagents framework, the tool can browse arXiv, locate and reformat datasets, launch training jobs on Hugging Face Jobs, and...

A Coding Implementation to Build a Conditional Bayesian Hyperparameter Optimization Pipeline with Hyperopt, TPE, and Early Stopping

The tutorial walks through building a production‑grade Bayesian hyperparameter optimization pipeline using Hyperopt’s Tree‑structured Parzen Estimator (TPE). It defines a conditional search space that lets the optimizer choose between logistic regression and SVM models, each with its own parameter sub‑space....

XAI Launches Standalone Grok Speech-to-Text and Text-to-Speech APIs, Targeting Enterprise Voice Developers

Elon Musk’s xAI has launched two standalone audio APIs—Grok Speech‑to‑Text (STT) and Grok Text‑to‑Speech (TTS)—built on the same infrastructure that powers Grok Voice in Tesla vehicles and Starlink support. The STT API offers batch and streaming transcription in 25 languages,...

A Coding Guide for Property-Based Testing Using Hypothesis with Stateful, Differential, and Metamorphic Test Design

The MarkTechPost tutorial demonstrates how to build a full‑stack property‑based testing suite with Hypothesis, covering invariants, differential, metamorphic, targeted, and stateful testing. It walks through utility functions, custom parsers, statistical checks, and a rule‑based state machine that models a simple...

Google AI Releases Auto-Diagnose: An Large Language Model LLM-Based System to Diagnose Integration Test Failures at Scale

Google AI researchers unveiled Auto-Diagnose, an LLM‑powered system that reads integration‑test logs, isolates the root cause, and posts a concise diagnosis to the code review. In a manual study of 71 real‑world failures across 39 teams, it identified the correct...

Top 19 AI Red Teaming Tools (2026): Secure Your ML Models

The article outlines AI red teaming as a systematic approach to probe machine‑learning and generative AI models for hidden vulnerabilities such as prompt injection, data poisoning, and bias exploitation. It lists 19 leading tools for 2026, ranging from open‑source libraries...

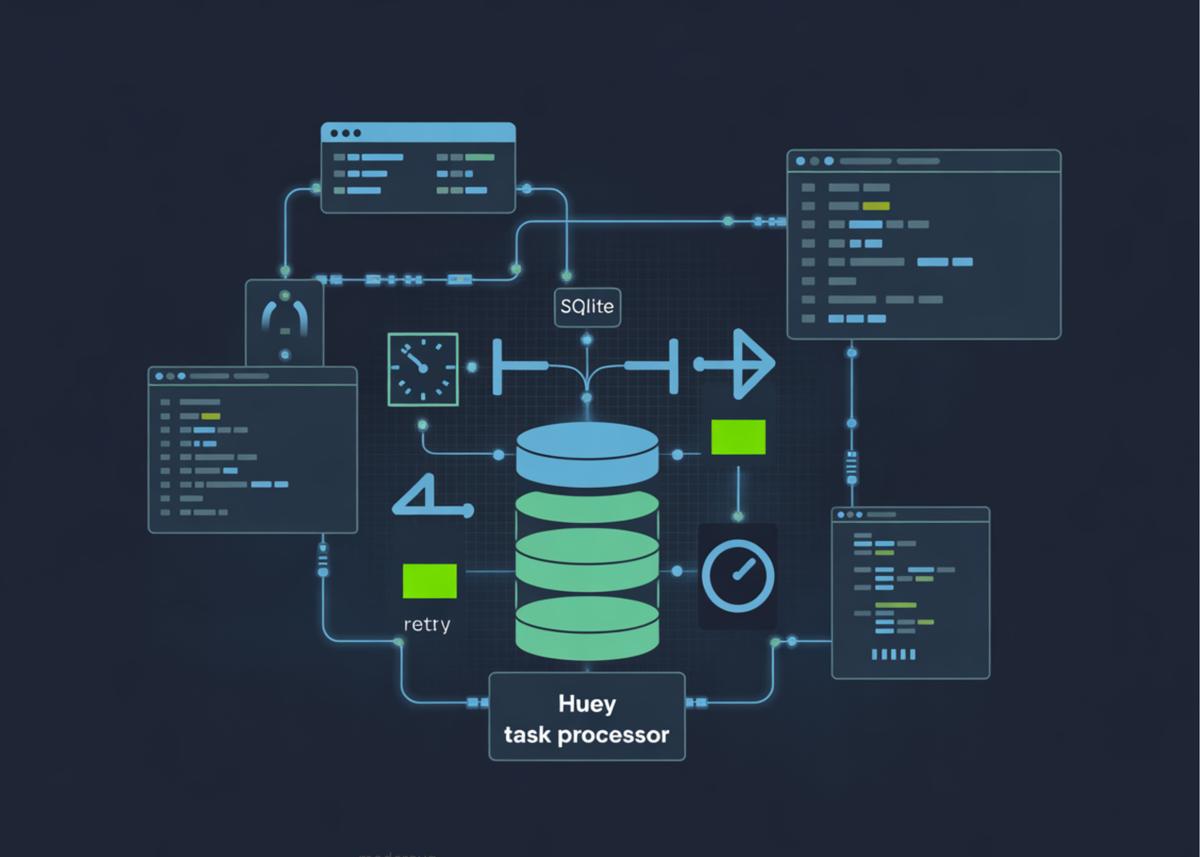

A Coding Guide to Build a Production-Grade Background Task Processing System Using Huey with SQLite, Scheduling, Retries, Pipelines, and Concurrency...

The tutorial walks readers through building a production‑grade background task system using Huey with a SQLite backend, avoiding external services like Redis. It sets up a threaded consumer in a notebook, defines tasks with priorities, retries, locking, and pipelines, and...

Qwen Team Open-Sources Qwen3.6-35B-A3B: A Sparse MoE Vision-Language Model with 3B Active Parameters and Agentic Coding Capabilities

Alibaba’s Qwen team has open‑sourced Qwen3.6-35B-A3B, a 35‑billion‑parameter vision‑language model that activates only 3 billion parameters per inference thanks to a Sparse Mixture‑of‑Experts design. The architecture uses 256 experts with eight routed per token, linear‑attention Gated DeltaNet blocks and Grouped Query...

RightNow AI Releases AutoKernel: An Open-Source Framework that Applies an Autonomous Agent Loop to GPU Kernel Optimization for Arbitrary PyTorch...

RightNow AI unveiled AutoKernel, an open‑source framework that uses an autonomous LLM‑driven loop to optimize GPU kernels for any PyTorch model. The system iteratively edits kernel code, benchmarks performance, and keeps or reverts changes, completing about 40 experiments per hour...

Meet MaxToki: The AI That Predicts How Your Cells Age — and What to Do About It

MaxToki is a transformer‑decoder foundation model trained on nearly one trillion single‑cell RNA‑seq tokens to predict how individual cells age over time. By encoding transcriptomes as ranked gene lists and extending context length to 16,384 tokens, it can infer the...

How to Build a Netflix VOID Video Object Removal and Inpainting Pipeline with CogVideoX, Custom Prompting, and End-to-End Sample Inference

The MarkTechPost tutorial walks readers through building a full‑stack video object removal pipeline using Netflix’s open‑source VOID model combined with the CogVideoX inpainting backbone. It covers environment setup on Google Colab, secure token handling, downloading the 5‑billion‑parameter CogVideoX model and the...

Google DeepMind’s Research Lets an LLM Rewrite Its Own Game Theory Algorithms — And It Outperformed the Experts

Google DeepMind introduced AlphaEvolve, an evolutionary system that uses a Gemini 2.5 Pro LLM to rewrite the source code of multi‑agent reinforcement‑learning algorithms. Applied to Counterfactual Regret Minimization and Policy Space Response Oracles, the system discovered VAD‑CFR and SHOR‑PSRO, which...

TII Releases Falcon Perception: A 0.6B-Parameter Early-Fusion Transformer for Open-Vocabulary Grounding and Segmentation From Natural Language Prompts

The Technology Innovation Institute unveiled Falcon Perception, a 600‑million‑parameter dense transformer that fuses image patches and text tokens from the first layer, eliminating the traditional encoder‑decoder split. Using hybrid attention, 3D Rotary Positional Embeddings (GGROPE), and a Chain‑of‑Perception sequence, the...

Arcee AI Releases Trinity Large Thinking: An Apache 2.0 Open Reasoning Model for Long-Horizon Agents and Tool Use

Arcee AI unveiled Trinity Large Thinking, an open‑weight reasoning model released under the Apache 2.0 license. The 400 billion‑parameter sparse Mixture‑of‑Experts model activates only 13 billion parameters per token and supports a 262,144‑token context window, targeting long‑horizon autonomous agents and multi‑turn tool use....

IBM Releases Granite 4.0 3B Vision: A New Vision Language Model for Enterprise Grade Document Data Extraction

IBM unveiled Granite 4.0 3B Vision, a vision‑language model built as a 0.5 billion‑parameter LoRA adapter for its 3.5 billion‑parameter Granite 4.0 Micro language backbone. The model uses a SIGLIP‑based encoder with 384×384 patch tiling and a DeepStack architecture that injects visual tokens at eight transformer layers....

Z.ai Launches GLM-5V-Turbo: A Native Multimodal Vision Coding Model Optimized for OpenClaw and High-Capacity Agentic Engineering Workflows Everywhere

Z.ai unveiled GLM-5V-Turbo, a vision‑coding model that natively fuses images, video and document layouts into executable code. The model leverages a CogViT vision encoder and a Multi‑Token Prediction architecture to support a 200K context window and up to 128K output...

How to Build a Production-Ready Gemma 3 1B Instruct Generation AI Pipeline with Hugging Face Transformers, Chat Templates, and Colab...

The tutorial walks readers through building a production‑ready inference pipeline for Google DeepMind's Gemma 3 1B Instruct model using Hugging Face Transformers on Google Colab. It covers secure HF token authentication, automatic device and precision selection, loading the tokenizer and model, and creating reusable...

Hugging Face Releases TRL v1.0: A Unified Post-Training Stack for SFT, Reward Modeling, DPO, and GRPO Workflows

Hugging Face announced TRL v1.0, turning its reinforcement‑learning library into a production‑ready stack for large‑language‑model post‑training. The release bundles Supervised Fine‑Tuning, Reward Modeling and alignment into a single, config‑driven workflow accessed via a new command‑line interface. It adds support for popular...

Liquid AI Released LFM2.5-350M: A Compact 350M Parameter Model Trained on 28T Tokens with Scaled Reinforcement Learning

Liquid AI unveiled LFM2.5-350M, a 350‑million‑parameter model trained on 28 trillion tokens using scaled reinforcement learning. The hybrid architecture combines Linear Input‑Varying (LIV) convolution blocks with a handful of Grouped Query Attention layers, enabling a 32k context window while keeping memory...

How to Build and Evolve a Custom OpenAI Agent with A-Evolve Using Benchmarks, Skills, Memory, and Workspace Mutations

The tutorial walks through building a custom OpenAI‑powered agent using the open‑source A‑Evolve framework in Google Colab. It shows how to set up the repository, define a strict system prompt, create a miniature benchmark of text‑transformation tasks, and implement a mutation...

Microsoft AI Releases Harrier-OSS-V1: A New Family of Multilingual Embedding Models Hitting SOTA on Multilingual MTEB V2

Microsoft released Harrier-OSS-v1, a trio of multilingual embedding models ranging from 270 million to 27 billion parameters. The models use decoder‑only architectures with last‑token pooling and achieve state‑of‑the‑art results on the Multilingual MTEB v2 benchmark. They support a 32,768‑token context window, enabling...

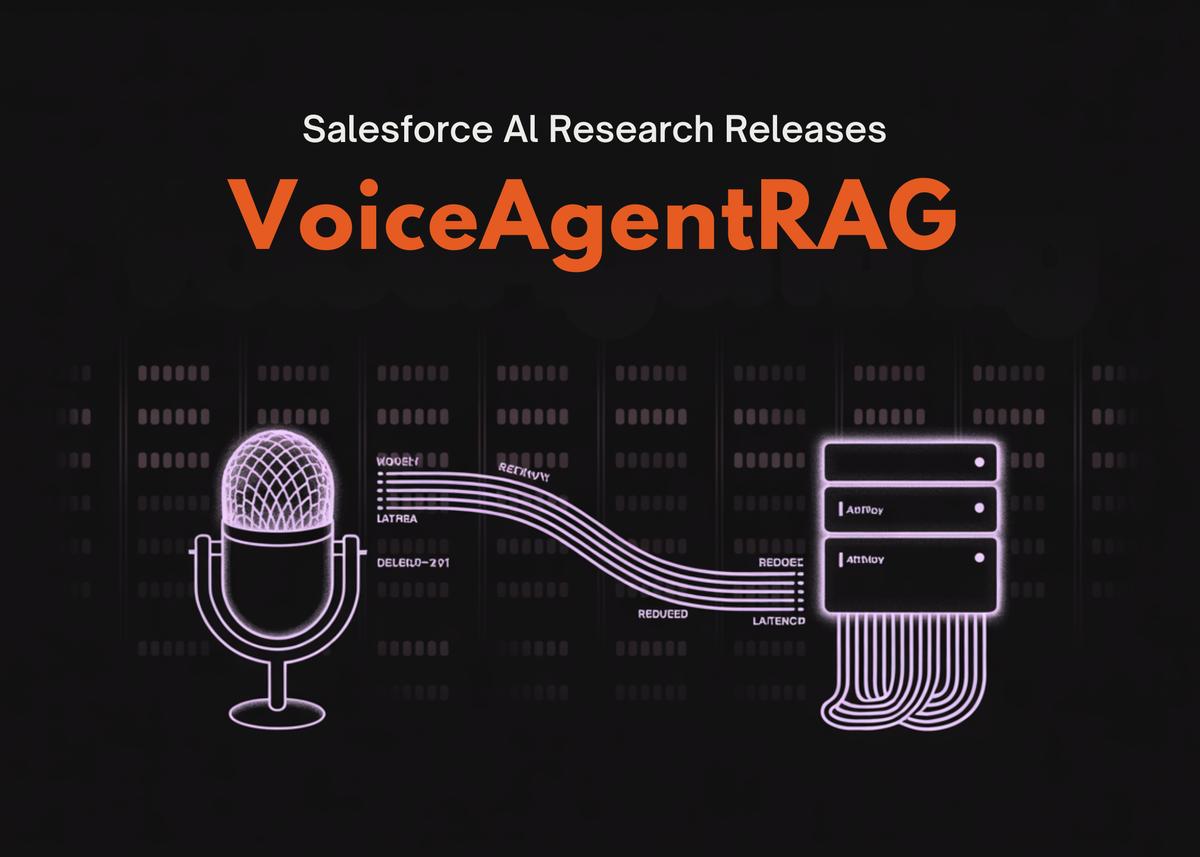

Salesforce AI Research Releases VoiceAgentRAG: A Dual-Agent Memory Router that Cuts Voice RAG Retrieval Latency by 316x

Salesforce AI Research unveiled VoiceAgentRAG, an open‑source dual‑agent architecture that separates retrieval from generation for voice assistants. The Fast Talker foreground agent consults a semantic in‑memory FAISS cache with ~0.35 ms latency, while the Slow Thinker background agent predicts upcoming topics...

Agent-Infra Releases AIO Sandbox: An All-in-One Runtime for AI Agents with Browser, Shell, Shared Filesystem, and MCP

Agent-Infra unveiled the open‑source AIO Sandbox, a unified container that bundles a Chromium browser, Bash shell, Python and Node runtimes, plus VSCode Server and Jupyter notebooks. The platform introduces a shared filesystem that instantly propagates files between tools, eliminating the...

Meet A-Evolve: The PyTorch Moment For Agentic AI Systems Replacing Manual Tuning With Automated State Mutation And Self-Correction

Amazon researchers unveiled A‑Evolve, an open‑source framework that automates the creation and refinement of autonomous AI agents. By treating an agent as a mutable file‑based workspace, the system replaces manual prompt‑tuning with a five‑stage evolution loop—Solve, Observe, Evolve, Gate, Reload—backed...

Chroma Releases Context-1: A 20B Agentic Search Model for Multi-Hop Retrieval, Context Management, and Scalable Synthetic Task Generation

Chroma unveiled Context-1, a 20 billion‑parameter agentic search model built to serve as a dedicated retrieval subagent in RAG pipelines. The model decomposes complex queries, runs multiple tool calls, and prunes irrelevant context with 94% accuracy, keeping a lean 32k token...

An Implementation of IWE’s Context Bridge as an AI-Powered Knowledge Graph with Agentic RAG, OpenAI Function Calling, and Graph Traversal

The tutorial demonstrates how to deploy IWE, an open‑source Rust‑based personal knowledge‑management system, to turn markdown files into a navigable directed graph. It walks through core CLI operations—find, retrieve, tree, squash, stats, and DOT export—using an eight‑note developer knowledge base....

Tencent AI Open Sources Covo-Audio: A 7B Speech Language Model and Inference Pipeline for Real-Time Audio Conversations and Reasoning

Tencent AI Lab unveiled Covo‑Audio, a 7‑billion‑parameter Large Audio Language Model that processes continuous speech and generates high‑fidelity audio within a single architecture. The system combines Whisper‑large‑v3, Qwen2.5‑7B‑Base, and a WavLM‑based tokenizer, employing hierarchical tri‑modal interleaving and an intelligence‑speaker decoupling...

NVIDIA AI Introduces PivotRL: A New AI Framework Achieving High Agentic Accuracy With 4x Fewer Rollout Turns Efficiently

NVIDIA researchers unveiled PivotRL, a new framework that blends the data efficiency of supervised fine‑tuning with the generalization strength of end‑to‑end reinforcement learning for long‑horizon agentic tasks. By filtering high‑variance “pivot” turns and employing functional rewards, the method focuses compute...

Implementing Deep Q-Learning (DQN) From Scratch Using RLax JAX Haiku and Optax to Train a CartPole Reinforcement Learning Agent

The article walks through building a Deep Q‑Learning (DQN) agent from scratch using RLax together with JAX, Haiku, and Optax. It details the creation of a Q‑network, experience replay buffer, epsilon‑greedy policy, and training loop that computes TD errors with...

Meet GitAgent: The Docker for AI Agents that Is Finally Solving the Fragmentation Between LangChain, AutoGen, and Claude Code

GitAgent is an open‑source CLI that introduces a universal, Git‑backed format for AI agents, separating their definition from any specific orchestration framework. By storing agent metadata, personality, duties, skills, tools, rules, and memory as structured files in a repository, developers...

A Coding Implementation for Building and Analyzing Crystal Structures Using Pymatgen for Symmetry Analysis, Phase Diagrams, Surface Generation, and Materials...

The tutorial demonstrates how the open‑source pymatgen library can be used to construct, manipulate, and analyze crystal structures such as silicon, NaCl, and LiFePO₄‑like materials. It walks through lattice inspection, symmetry detection, coordination environment analysis, oxidation‑state decoration, supercell creation, surface...

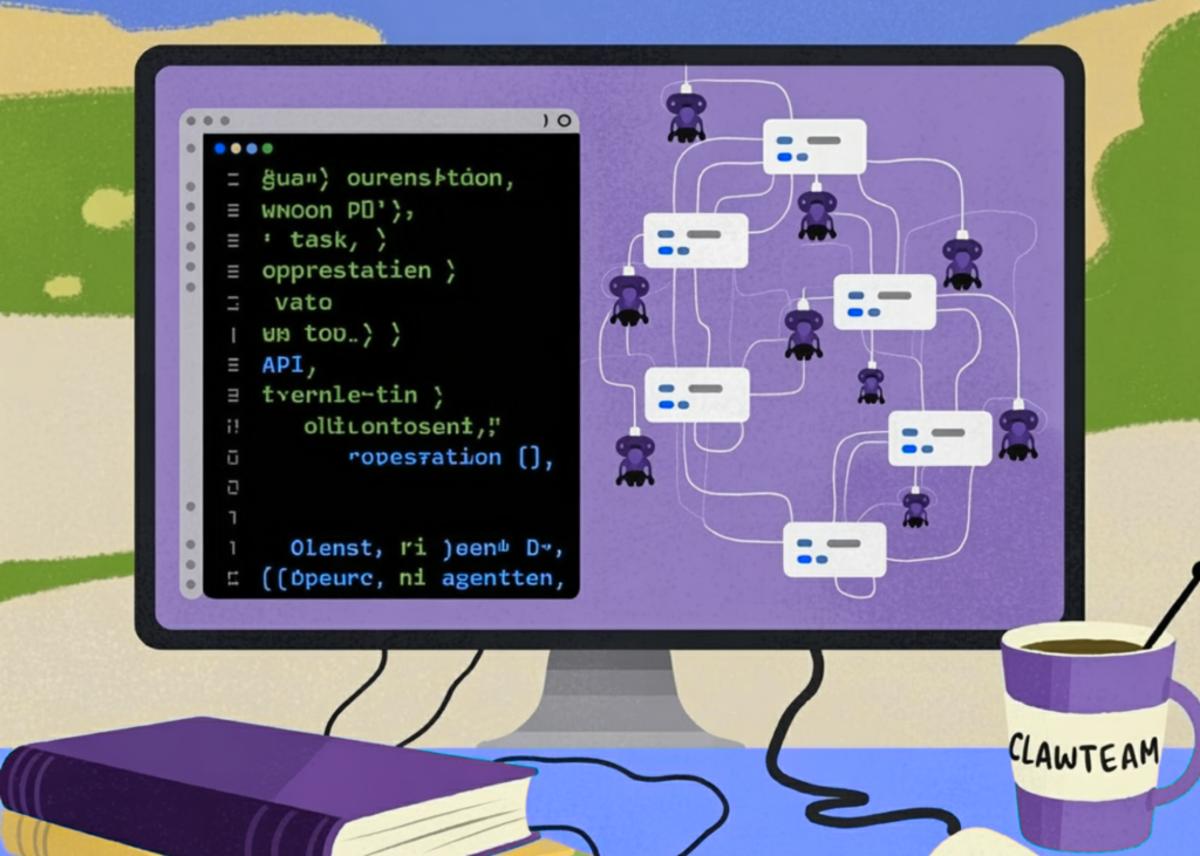

A Coding Implementation Showcasing ClawTeam’s Multi-Agent Swarm Orchestration with OpenAI Function Calling

The tutorial demonstrates how ClawTeam’s open‑source multi‑agent swarm framework can be run entirely in Google Colab using OpenAI’s function‑calling API. It builds a leader agent that breaks a high‑level goal into sub‑tasks, and worker agents that execute those tasks via...

LlamaIndex Releases LiteParse: A CLI and TypeScript-Native Library for Spatial PDF Parsing in AI Agent Workflows

LlamaIndex unveiled LiteParse, an open‑source, TypeScript‑native library that parses PDFs locally for Retrieval‑Augmented Generation workflows. Built on PDF.js and Tesseract.js, it extracts spatially‑preserved text instead of converting to Markdown, keeping original layout and table structures intact. The tool also outputs...

A Coding Guide to Implement Advanced Differential Equation Solvers, Stochastic Simulations, and Neural Ordinary Differential Equations Using Diffrax and JAX

The tutorial demonstrates how to use the Diffrax library together with JAX, Equinox, and Optax to solve ordinary and stochastic differential equations, perform dense interpolation, and train neural ordinary differential equation (Neural ODE) models. It walks through logistic growth, Lotka‑Volterra,...

Unsloth AI Releases Unsloth Studio: A Local No-Code Interface For High-Performance LLM Fine-Tuning With 70% Less VRAM Usage

Unsloth AI launched Unsloth Studio, an open‑source, no‑code local web UI that simplifies LLM fine‑tuning. Built on hand‑written Triton back‑propagation kernels, the platform delivers up to twice the training speed and cuts VRAM consumption by roughly 70 % without sacrificing accuracy....

Google AI Releases WAXAL: A Multilingual African Speech Dataset for Training Automatic Speech Recognition and Text-to-Speech Models

Google AI has released WAXAL, an open multilingual speech dataset targeting 24 African languages. The dataset is split into an ASR component built from image‑prompted, natural‑environment recordings and a TTS component consisting of studio‑quality, single‑speaker audio. Only about 10% of...

How to Build High-Performance GPU-Accelerated Simulations and Differentiable Physics Workflows Using NVIDIA Warp Kernels

The MarkTechPost tutorial demonstrates how NVIDIA Warp lets Python developers write GPU‑accelerated kernels for scientific simulations and differentiable physics. It walks through environment setup, kernel creation for vector math, signed‑distance fields, particle dynamics, and a gradient‑based projectile optimizer. Performance tests show...

Mistral AI Releases Mistral Small 4: A 119B-Parameter MoE Model that Unifies Instruct, Reasoning, and Multimodal Workloads

Mistral AI unveiled Small 4, a 119‑billion‑parameter mixture‑of‑experts model that merges instruction following, reasoning, multimodal understanding, and agentic coding into a single deployment. The architecture features 128 experts with four active per token, delivering 6 B active parameters and a 256k context...