ByteDance Releases Protenix-V1: A New Open-Source Model Achieving AF3-Level Performance in Biomolecular Structure Prediction

ByteDance unveiled Protenix-v1, an open-source, AlphaFold3-style foundation model for all-atom biomolecular structure prediction covering proteins, nucleic acids and ligands. The 368-million-parameter system matches AlphaFold3's training data cutoff, model scale and inference budget, and claims superior performance on curated benchmarks. Protenix ships with PXMeter v1.0.0, an evaluation suite of more than 6,000 complexes with time-split and domain-specific subsets. The release also includes a web server and design and docking extensions, creating a full-stack ecosystem for research and production use.

NVIDIA AI Releases VibeTensor: An AI-Generated Deep Learning Runtime Built End to End by Coding Agents Programmatically

NVIDIA has unveiled VibeTensor, an open‑source, CUDA‑first deep‑learning runtime generated largely by large language model‑driven coding agents. The stack provides a PyTorch‑style eager API with Python and experimental Node.js frontends, a C++20 core, reverse‑mode autograd, a stream‑ordered caching allocator, and...

How to Build Efficient Agentic Reasoning Systems by Dynamically Pruning Multiple Chain-of-Thought Paths Without Losing Accuracy

The tutorial introduces an agentic chain‑of‑thought pruning framework that generates multiple reasoning paths in parallel and dynamically discards them using consensus signals and early‑stop criteria. By leveraging self‑consistency, lightweight graph‑based agreement, and progressive sampling, the system reduces token consumption while...
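The progressive-sampling and early-stop idea can be sketched as a lazy majority vote. This is a minimal illustration, not the tutorial's code: it assumes each reasoning path has already been reduced to a final answer string, treats "pruning" as simply never generating the remaining paths, and omits the graph-based agreement step. The function name and the `min_samples`, `agree_frac` and `max_samples` thresholds are hypothetical.

```python
from collections import Counter
from typing import Iterable, Tuple


def progressive_self_consistency(
    answers: Iterable[str],
    min_samples: int = 3,
    agree_frac: float = 0.6,
    max_samples: int = 10,
) -> Tuple[str, int]:
    """Consume sampled answers lazily and stop once one answer dominates.

    Hypothetical helper: `answers` stands in for a generator that would run
    one chain-of-thought path per item. Returns (majority answer, paths used).
    """
    votes: Counter = Counter()
    used = 0
    for answer in answers:
        votes[answer] += 1
        used += 1
        leader, count = votes.most_common(1)[0]
        # Early stop: enough samples and the leader holds a large enough share.
        if used >= min_samples and count / used >= agree_frac:
            return leader, used
        if used >= max_samples:
            break
    if not votes:
        raise ValueError("no reasoning paths were sampled")
    # No early consensus: fall back to a plain majority vote.
    return votes.most_common(1)[0][0], used
```

Because `answers` is consumed one item at a time, paths after the early stop are never generated, which is where the token savings would come from.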

Google Introduces Agentic Vision in Gemini 3 Flash for Active Image Understanding

Google unveiled Agentic Vision in Gemini 3 Flash, turning image understanding into an active, multi‑step process. The model now formulates a plan, executes Python code to manipulate images, and re‑examines the results before answering. Code execution delivers a reported 5‑10% quality lift...

Google Releases Conductor: A Context Driven Gemini CLI Extension that Stores Knowledge as Markdown and Orchestrates Agentic Workflows

Google introduced Conductor, an open‑source Gemini CLI extension that shifts AI‑assisted coding from fleeting chat prompts to persistent, repository‑level context stored as version‑controlled Markdown. The tool creates a dedicated conductor directory containing product goals, tech‑stack details, workflow rules, and style guides, which...

NVIDIA AI Brings Nemotron-3-Nano-30B to NVFP4 with Quantization Aware Distillation (QAD) for Efficient Reasoning Inference

NVIDIA released Nemotron-3-Nano-30B-A3B-NVFP4, a 30-billion-parameter LLM quantized to 4-bit NVFP4 while preserving BF16 accuracy. The model combines a hybrid Mamba2-Transformer Mixture-of-Experts architecture with a Quantization Aware Distillation (QAD) pipeline that replaces task loss with KL divergence to a frozen...
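The distillation objective described here can be illustrated with a per-token loss. This sketch assumes forward KL, KL(teacher || student), over the softmax of each model's logits, which is the usual choice in knowledge distillation; the exact direction, temperature and reduction used in NVIDIA's QAD pipeline are not stated in this summary, and the NVFP4 fake-quantization of the student is not shown. The function names are hypothetical.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_distillation_loss(teacher_logits: Sequence[float],
                         student_logits: Sequence[float]) -> float:
    """KL(p_teacher || p_student) for one token position.

    In a real training loop the teacher stays frozen and only the
    (quantized) student's parameters would be updated from this loss.
    """
    p = softmax(teacher_logits)
    q = softmax(student_logits)
    # Terms with p_i == 0 contribute nothing to the sum.
    return sum(pi * (math.log(pi) - math.log(qi)) for pi, qi in zip(p, q) if pi > 0)
```

Note that no task labels appear anywhere: the student is trained only to match the teacher's output distribution, which is the substitution the summary describes.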

How to Build Memory-Driven AI Agents with Short-Term, Long-Term, and Episodic Memory

The tutorial presents a full‑stack memory engine that splits an AI agent’s context into short‑term working buffers, long‑term vector stores, and episodic traces. It leverages sentence‑transformer embeddings and a FAISS index to enable rapid semantic similarity search, while a policy...

A Coding and Experimental Analysis of Decentralized Federated Learning with Gossip Protocols and Differential Privacy

The tutorial implements both centralized FedAvg and a fully decentralized gossip-based federated learning system, adding client‑side differential privacy via calibrated Gaussian noise. Experiments on non‑IID MNIST data compare convergence speed, stability, and final accuracy across privacy budgets (epsilon values). Results...
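The three ingredients named above (FedAvg aggregation, gossip averaging and client-side Gaussian noise) can be sketched on plain Python lists. This is an illustrative version under stated assumptions, not the tutorial's code: updates are clipped to an L2 norm bound before noise is added (the standard Gaussian-mechanism recipe), and `noise_multiplier` is a hypothetical knob. Converting it into an epsilon value requires a privacy accountant, which is not shown.

```python
import math
import random
from typing import List, Sequence, Tuple

Vector = List[float]


def clip_update(update: Sequence[float], max_norm: float) -> Vector:
    """Scale an update down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(u * u for u in update))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [u * scale for u in update]


def privatize(update: Sequence[float], max_norm: float,
              noise_multiplier: float, rng: random.Random) -> Vector:
    """Client side: clip, then add Gaussian noise with std = noise_multiplier * max_norm."""
    sigma = noise_multiplier * max_norm
    return [u + rng.gauss(0.0, sigma) for u in clip_update(update, max_norm)]


def fedavg(updates: Sequence[Sequence[float]], weights: Sequence[float]) -> Vector:
    """Centralized FedAvg: weighted mean of client updates (weights ~ local dataset sizes)."""
    total = float(sum(weights))
    return [sum(w * u[i] for u, w in zip(updates, weights)) / total
            for i in range(len(updates[0]))]


def gossip_step(a: Sequence[float], b: Sequence[float]) -> Tuple[Vector, Vector]:
    """Decentralized alternative: two neighboring peers replace their
    parameters with the pairwise average; no server is involved."""
    mixed = [(x + y) / 2 for x, y in zip(a, b)]
    return mixed, list(mixed)
```

Repeated `gossip_step` calls over random peer pairs drive all nodes toward the network-wide average, which is why gossip can stand in for the central FedAvg server, typically at the cost of slower convergence.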

A Coding Deep Dive Into Differentiable Computer Vision with Kornia Using Geometry Optimization, LoFTR Matching, and GPU Augmentations

The article presents a comprehensive, end‑to‑end tutorial that builds a fully differentiable computer‑vision pipeline using Kornia and PyTorch. It starts with synchronized GPU‑accelerated augmentations for images, masks, and keypoints, then shows how to recover a homography through gradient‑based optimization. The...

MBZUAI Releases K2 Think V2: A Fully Sovereign 70B Reasoning Model For Math, Code, And Science

Mohamed bin Zayed University of Artificial Intelligence (MBZUAI) unveiled K2 Think V2, a fully sovereign 70‑billion‑parameter reasoning model built on the K2 V2 Instruct base. The model extends the base's 512k‑token context capability and is fine‑tuned with a GRPO‑style RLVR...

Tencent Hunyuan Releases HPC-Ops: A High Performance LLM Inference Operator Library

Tencent Hunyuan has open‑sourced HPC‑Ops, a CUDA‑based operator library that accelerates large language model inference on NVIDIA GPUs. The library provides high‑performance kernels for Attention, Grouped GEMM and fused MoE, supporting bf16 and fp8 precisions via a compact C++/Python API....

DSGym Offers a Reusable Container Based Substrate for Building and Benchmarking Data Science Agents

DSGym, a collaborative effort from Stanford, Together AI, Duke and Harvard, introduces a reusable container‑based framework that evaluates data‑science agents through real code execution. The suite standardizes tasks, agents and environments, offering 972 analysis and 114 prediction challenges spanning finance,...

How a Haystack-Powered Multi-Agent System Detects Incidents, Investigates Metrics and Logs, and Produces Production-Grade Incident Reviews End-to-End

The blog post demonstrates how Haystack can power a multi‑agent system that automatically detects incidents, investigates metrics and logs, and generates production‑grade postmortems. It walks through a reproducible notebook that creates synthetic observability data, applies a rolling z‑score detector, and...
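A rolling z-score detector of the kind mentioned here compares each new metric value with the mean and standard deviation of a trailing window. A minimal sketch, assuming a hypothetical window of 5 points and a threshold of 3 standard deviations (the notebook's actual settings are not given in this summary):

```python
from statistics import mean, pstdev
from typing import List, Sequence, Tuple


def rolling_zscore_alerts(values: Sequence[float], window: int = 5,
                          threshold: float = 3.0) -> List[Tuple[int, float]]:
    """Return (index, z) for points that deviate from the trailing window
    by at least `threshold` standard deviations."""
    alerts: List[Tuple[int, float]] = []
    for i in range(window, len(values)):
        history = values[i - window:i]  # strictly past points only
        mu, sigma = mean(history), pstdev(history)
        if sigma == 0:
            continue  # flat history: z-score undefined, skip this point
        z = (values[i] - mu) / sigma
        if abs(z) >= threshold:
            alerts.append((i, z))
    return alerts
```

For a latency series such as `[10, 11, 10, 11, 10, 11, 10, 50, 10, 11]`, only index 7 (the spike to 50) is flagged; the spike then inflates the window's standard deviation, so the return to normal values is not flagged as a second incident.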

StepFun AI Introduces Step-DeepResearch: A Cost-Effective Deep Research Agent Model Built Around Atomic Capabilities

StepFun AI unveiled Step-DeepResearch, a 32‑billion‑parameter agent built on Qwen2.5‑32B‑Base that transforms web search into end‑to‑end research workflows. The model internalizes four atomic capabilities—planning, deep information seeking, reflection/verification, and report generation—using specialized data pipelines and long‑context training up to 128k...

How an AI Agent Chooses What to Do Under Token, Latency, and Tool-Call Budget Constraints

MarkTechPost introduces a cost‑aware planning AI agent that explicitly balances token usage, latency, and tool‑call budgets when generating action plans. The agent creates multiple candidate steps, estimates their resource spend, and employs a beam‑style search with redundancy penalties to select...

Microsoft Releases VibeVoice-ASR: A Unified Speech-to-Text Model Designed to Handle 60-Minute Long-Form Audio in a Single Pass

Microsoft unveiled VibeVoice‑ASR, an open‑source speech‑to‑text model that processes up to 60 minutes of continuous audio in a single pass using a 64K‑token context. The model jointly performs automatic speech recognition, speaker diarization, and timestamping, delivering structured transcripts that capture who...

Inworld AI Releases TTS-1.5 For Real-Time, Production-Grade Voice Agents

Inworld AI unveiled TTS‑1.5, a production‑grade text‑to‑speech engine built for real‑time voice agents. The Max variant delivers sub‑250 ms P90 time‑to‑first‑audio latency, while the Mini version hits sub‑130 ms, roughly four times faster than the previous generation. The models claim 30% more...

How AutoGluon Enables Modern AutoML Pipelines for Production-Grade Tabular Models with Ensembling and Distillation

The tutorial demonstrates building a production‑grade tabular machine‑learning pipeline with AutoGluon, covering data ingestion, automated model search, stacked and bagged ensembles, and deployment‑ready artifacts. Using the Titanic dataset, the workflow applies dynamic presets, trains ensembles within a 7‑minute budget, evaluates...

Liquid AI Releases LFM2.5-1.2B-Thinking: A 1.2B Parameter Reasoning Model That Fits Under 1 GB On-Device

Liquid AI unveiled LFM2.5-1.2B‑Thinking, a 1.17 billion‑parameter reasoning model that occupies roughly 900 MB and runs fully on‑device. Designed for structured reasoning, the model emits internal thinking traces, enabling tool use, math, and multi‑step planning without cloud reliance. Benchmarks show it outperforms...

A Coding Guide to Anemoi-Style Semi-Centralized Agentic Systems Using Peer-to-Peer Critic Loops in LangGraph

The post walks readers through building a semi‑centralized Anemoi‑style multi‑agent system using LangGraph, where a Drafter and a Critic negotiate drafts without a supervising manager. It provides a complete Colab notebook, installs LangGraph and LangChain, defines a typed shared state,...

Nous Research Releases NousCoder-14B: A Competitive Olympiad Programming Model Post-Trained on Qwen3-14B via Reinforcement Learning

Nous Research unveiled NousCoder-14B, a competitive programming model built on Qwen3-14B and fine‑tuned with execution‑based reinforcement learning. On the LiveCodeBench v6 benchmark, the model achieved a Pass@1 score of 67.87%, outpacing the Qwen3-14B baseline by 7.08 points. Training leveraged 24,000...

Vercel Releases Agent Skills: A Package Manager For AI Coding Agents With 10 Years of React and Next.js Optimisation Rules

Vercel has launched the open‑source agent‑skills package, a plug‑in style manager that turns curated React, Next.js, and web‑design best‑practice playbooks into reusable capabilities for AI coding agents. The initial release bundles three core skills—react‑best‑practices, web‑design‑guidelines, and vercel‑deploy‑claimable—each containing dozens of rule‑based...

NVIDIA Releases PersonaPlex-7B-V1: A Real-Time Speech-to-Speech Model Designed for Natural and Full-Duplex Conversations

NVIDIA unveiled PersonaPlex-7B-v1, a 7‑billion‑parameter full‑duplex speech‑to‑speech model that merges automatic speech recognition, language understanding, and text‑to‑speech into a single transformer. The dual‑stream architecture processes user audio and agent output concurrently, enabling barge‑in, overlapping speech, and rapid turn‑taking. Hybrid voice...

How to Build a Self-Evaluating Agentic AI System with LlamaIndex and OpenAI Using Retrieval, Tool Use, and Automated Quality Checks

The tutorial demonstrates how to construct a self‑evaluating, agentic AI system using LlamaIndex and OpenAI’s gpt‑4o‑mini model. It combines retrieval‑augmented generation, tool integration, and automated faithfulness and relevancy scoring to create a reliable RAG workflow. The ReActAgent orchestrates evidence retrieval,...


Black Forest Labs Releases FLUX.2 [Klein]: Compact Flow Models for Interactive Visual Intelligence

Black Forest Labs unveiled FLUX.2 [klein], a compact family of rectified flow transformers with 4 billion and 9 billion parameters designed for interactive visual intelligence on consumer GPUs. The distilled variants achieve sub-second latency with only four inference steps, while base models...

How to Build a Stateless, Secure, and Asynchronous MCP-Style Protocol for Scalable Agent Workflows

The tutorial demonstrates how to construct a minimal MCP-style protocol that is stateless, cryptographically signed, and capable of handling asynchronous, long-running tasks. In Python, Pydantic models enforce strict schema validation for every request and response, while HMAC signatures guarantee...
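The signing step in such a protocol can be sketched with Python's standard `hmac` module. This sketch assumes a shared secret between client and server and canonical JSON (sorted keys, compact separators) so that both sides hash identical bytes; the `SECRET` value, the envelope field names and the helper functions are hypothetical, and the Pydantic validation layer is omitted. Because the signature travels with each message, the verifier needs no session state.

```python
import hashlib
import hmac
import json
from typing import Any, Dict

SECRET = b"demo-shared-secret"  # hypothetical key; load from a secret store in practice


def canonical_bytes(payload: Dict[str, Any]) -> bytes:
    """Deterministic serialization so signer and verifier hash the same bytes."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign(payload: Dict[str, Any], key: bytes = SECRET) -> Dict[str, Any]:
    """Return an envelope carrying the payload and its HMAC-SHA256 tag."""
    tag = hmac.new(key, canonical_bytes(payload), hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": tag}


def verify(envelope: Dict[str, Any], key: bytes = SECRET) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(key, canonical_bytes(envelope["payload"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, envelope["signature"])
```

Any change to the payload, or a different key, makes `verify` return `False`; `hmac.compare_digest` avoids leaking timing information during the comparison.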

Understanding the Layers of AI Observability in the Age of LLMs

AI observability extends classic logging, metrics, and tracing into the probabilistic world of large language models. By breaking an LLM‑driven workflow into traces and nested spans, teams can monitor each step—from input handling to final decision—just like traditional production software....

How to Build a Multi-Turn Crescendo Red-Teaming Pipeline to Evaluate and Stress-Test LLM Safety Using Garak

The tutorial demonstrates building a multi‑turn crescendo‑style red‑team pipeline with Garak to stress‑test large language model safety. It adds a lightweight custom detector for system‑prompt leakage and an iterative probe that escalates benign prompts toward sensitive extraction across several turns....

How This Agentic Memory Research Unifies Long-Term and Short-Term Memory for LLM Agents

Researchers from Alibaba and Wuhan University present AgeMem, a unified agentic memory framework that lets LLM agents learn to manage both long‑term and short‑term memory through the same policy. Memory operations—add, update, delete, retrieve, summarize, filter—are exposed as tools within...

A Coding Guide to Demonstrate Targeted Data Poisoning Attacks in Deep Learning by Label Flipping on CIFAR-10 with PyTorch

The MarkTechPost tutorial walks readers through a targeted data‑poisoning experiment that flips a portion of CIFAR‑10 labels from a chosen class to a malicious class using PyTorch. By constructing parallel clean and poisoned training pipelines with a lightweight ResNet‑18, the...
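The poisoning step itself takes only a few lines. This sketch flips a fixed fraction of one class's labels to a target class on a plain list of integer labels; the function name, the 40% fraction and the choice of classes (3 is "cat" and 5 is "dog" in CIFAR-10's standard ordering) are illustrative assumptions, and the ResNet-18 training loop is not shown.

```python
import random
from typing import List, Sequence, Tuple


def flip_labels(labels: Sequence[int], source: int, target: int,
                fraction: float, seed: int = 0) -> Tuple[List[int], List[int]]:
    """Relabel `fraction` of the `source`-class examples as `target`.

    Returns the poisoned label list and the sorted indices that were flipped,
    so the clean and poisoned pipelines can share the same images.
    """
    rng = random.Random(seed)  # seeded so the attack is reproducible
    source_idx = [i for i, y in enumerate(labels) if y == source]
    flipped = set(rng.sample(source_idx, int(len(source_idx) * fraction)))
    poisoned = [target if i in flipped else y for i, y in enumerate(labels)]
    return poisoned, sorted(flipped)
```

Only the labels change, never the images, which is what makes the attack targeted: accuracy on unrelated classes stays largely intact while the source class is pushed toward the target.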

Meet SETA: Open-Source Reinforcement Learning Training Environments for Terminal Agents with 400 Tasks and the CAMEL Toolkit

Researchers from CAMEL AI, Eigent AI and partners released SETA, an open‑source stack that couples a terminal‑focused toolkit with 400 synthetic reinforcement‑learning tasks. The framework delivers state‑of‑the‑art results on the Terminal Bench benchmark, hitting 46.5% accuracy on version 2.0 with a...

How to Build Portable, In-Database Feature Engineering Pipelines with Ibis Using Lazy Python APIs and DuckDB Execution

The tutorial shows how Ibis can create a portable, in‑database feature‑engineering pipeline that feels like Pandas but runs entirely in DuckDB. By registering data in the backend and keeping all transformations lazy, the code is translated into efficient SQL without...

Stanford Researchers Build SleepFM Clinical: A Multimodal Sleep Foundation AI Model for 130+ Disease Prediction

Stanford Medicine researchers unveiled SleepFM Clinical, a multimodal foundation model trained on 585,000 hours of polysomnography from about 65,000 individuals. The model learns a unified representation of brain, heart, and respiratory signals and can predict long‑term risk for more than...

A Coding Implementation to Build a Unified Apache Beam Pipeline Demonstrating Batch and Stream Processing with Event-Time Windowing Using DirectRunner

The tutorial shows how to build a unified Apache Beam pipeline that can run in both batch and stream‑like modes using the DirectRunner. It creates synthetic event‑time data, applies fixed windows with triggers and allowed lateness, and demonstrates how Beam...

Liquid AI Releases LFM2.5: A Compact AI Model Family For Real On-Device Agents

Liquid AI unveiled LFM2.5, a compact 1.2 billion‑parameter model family designed for on‑device and edge inference. The suite includes Base, Instruct, Japanese, vision‑language, and audio variants, all released with open weights on Hugging Face and via the LEAP platform. Pre‑training was...

LLM-Pruning Collection: A JAX Based Repo For Structured And Unstructured LLM Compression

Zlab Princeton has open‑sourced the LLM‑Pruning Collection, a JAX‑based repository that aggregates leading pruning techniques for large language models. The repo bundles block‑level, layer‑level, and weight‑level methods—including Minitron, ShortGPT, Wanda, SparseGPT, Magnitude, Sheared LLaMA and LLM‑Pruner—under a unified training and evaluation...

Tencent Researchers Release Tencent HY-MT1.5: New Translation Models Featuring 1.8B and 7B Variants Designed for Seamless On-Device and Cloud...

Tencent Hunyuan researchers unveiled HY-MT1.5, a multilingual translation family comprising a 1.8B and a 7B model. Both models cover 33 languages plus five dialect variants and are released with open weights on GitHub and Hugging Face. The compact 1.8B variant runs...

AI Interview Series #5: Prompt Caching

Prompt caching reduces LLM API costs by reusing static prompt components. By storing key‑value attention states in GPU memory, identical prefixes avoid recomputation, cutting latency and token usage. Engineers can boost efficiency by analyzing request patterns, restructuring prompts so shared...
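The prefix-reuse idea can be illustrated with a toy cache. This is a simulation, not any provider's API: the class name is hypothetical, tokens are plain strings, and the cached "KV state" is represented only by the prefix's token count. It shows why the static part of a prompt (system instructions, tool definitions) should come first: only an identical prefix produces a hit.

```python
from typing import Dict, List, Tuple


class ToyPrefixCache:
    """Counts how many prompt tokens would need a fresh forward pass."""

    def __init__(self) -> None:
        # Maps an exact prefix to its (simulated) cached KV state.
        self._store: Dict[Tuple[str, ...], int] = {}
        self.tokens_computed = 0

    def run(self, prefix: List[str], suffix: List[str]) -> bool:
        """Process one request; return True on a cache hit for the prefix."""
        key = tuple(prefix)
        hit = key in self._store
        if not hit:
            self._store[key] = len(prefix)  # "compute" and store the prefix
            self.tokens_computed += len(prefix)
        self.tokens_computed += len(suffix)  # the dynamic part is always computed
        return hit
```

With a 60-token system prompt, two requests carrying 1 and 2 dynamic tokens cost 60 + 1 + 2 = 63 computed tokens instead of 123. Prepending even one token to the static part (for example, a timestamp) turns every request into a miss.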

A Coding Implementation to Build a Self-Testing Agentic AI System Using Strands to Red-Team Tool-Using Agents and Enforce Safety at...

The tutorial builds a red‑team evaluation harness with Strands Agents to stress‑test a tool‑using AI assistant against prompt‑injection and tool‑misuse attacks. It defines a guarded target agent, a red‑team agent that auto‑generates adversarial prompts, and a judge agent that scores...

Tencent Released Tencent HY-Motion 1.0: A Billion-Parameter Text-to-Motion Model Built on the Diffusion Transformer (DiT) Architecture and Flow Matching

Tencent Hunyuan's 3D Digital Human team launched HY-Motion 1.0, an open-weight text-to-3D human motion model built on a Diffusion Transformer (DiT) architecture and trained with Flow Matching. The flagship model contains 1 billion parameters, alongside a 0.46-billion-parameter Lite variant, and generates SMPL‑H...

A Coding Implementation of an OpenAI-Assisted Privacy-Preserving Federated Fraud Detection System From Scratch Using Lightweight PyTorch Simulations

The tutorial walks through building a privacy‑preserving federated fraud‑detection system from scratch using lightweight, CPU‑only PyTorch. It simulates ten independent banks, partitions highly imbalanced transaction data with a Dirichlet distribution, and coordinates local model updates via a FedAvg loop. After...
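The Dirichlet split that makes the simulated banks non-IID can be sketched with the standard library alone. The function name, the `alpha` concentration value and the rounding scheme are assumptions for illustration; the tutorial's own partitioning code may differ. A Dirichlet sample is drawn here as normalized Gamma(alpha, 1) variates, and smaller `alpha` values give more skewed clients.

```python
import random
from typing import Dict, List, Sequence


def dirichlet_partition(labels: Sequence[int], n_clients: int,
                        alpha: float = 0.5, seed: int = 0) -> List[List[int]]:
    """Split example indices across clients with per-class Dirichlet proportions."""
    rng = random.Random(seed)
    by_class: Dict[int, List[int]] = {}
    for i, y in enumerate(labels):
        by_class.setdefault(y, []).append(i)
    clients: List[List[int]] = [[] for _ in range(n_clients)]
    for idx in by_class.values():
        rng.shuffle(idx)
        # Dirichlet(alpha, ..., alpha) sample via normalized Gamma draws.
        draws = [rng.gammavariate(alpha, 1.0) for _ in range(n_clients)]
        total = sum(draws)
        start = 0
        for c, d in enumerate(draws):
            if c == n_clients - 1:
                end = len(idx)  # last client absorbs rounding leftovers
            else:
                end = min(len(idx), start + round(d / total * len(idx)))
            clients[c].extend(idx[start:end])
            start = end
    return clients
```

Every index is assigned to exactly one client, but the class mix differs sharply from client to client, which is the heterogeneity a fraud-detection FedAvg loop has to cope with.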

Meet LLMRouter: An Intelligent Routing System Designed to Optimize LLM Inference by Dynamically Selecting the Most Suitable Model for Each Query

LLMRouter, an open‑source library from UIUC, sits between applications and heterogeneous LLM pools to automatically select the most appropriate model per query. It offers over 16 routing algorithms organized into single‑round, multi‑round, personalized, and agentic families, each configurable via a...

NVIDIA AI Researchers Release NitroGen: An Open Vision Action Foundation Model For Generalist Gaming Agents

NVIDIA’s AI team unveiled NitroGen, an open‑source vision‑action foundation model that learns to play commercial games directly from pixel inputs and gamepad actions. The model is trained on 40,000 hours of filtered gameplay video spanning over 1,000 titles, using automatic...

Liquid AI’s LFM2-2.6B-Exp Uses Pure Reinforcement Learning (RL) And Dynamic Hybrid Reasoning To Tighten Small Model Behavior

Liquid AI released LFM2-2.6B-Exp, an experimental checkpoint that adds a pure reinforcement‑learning (RL) stage to its 2.6 billion‑parameter LFM2 model. The RL fine‑tuning targets instruction following, knowledge retrieval, and math without altering the hybrid convolution‑attention architecture. Benchmark results show the model...

How to Build Production-Grade Agentic Workflows with GraphBit Using Deterministic Tools, Validated Execution Graphs, and Optional LLM Orchestration

The tutorial demonstrates how to build a production‑grade, agentic workflow for customer‑support ticket triage using GraphBit. It starts by configuring the GraphBit runtime, defining typed ticket data, and registering deterministic tools for classification, routing, and response drafting. These tools are...

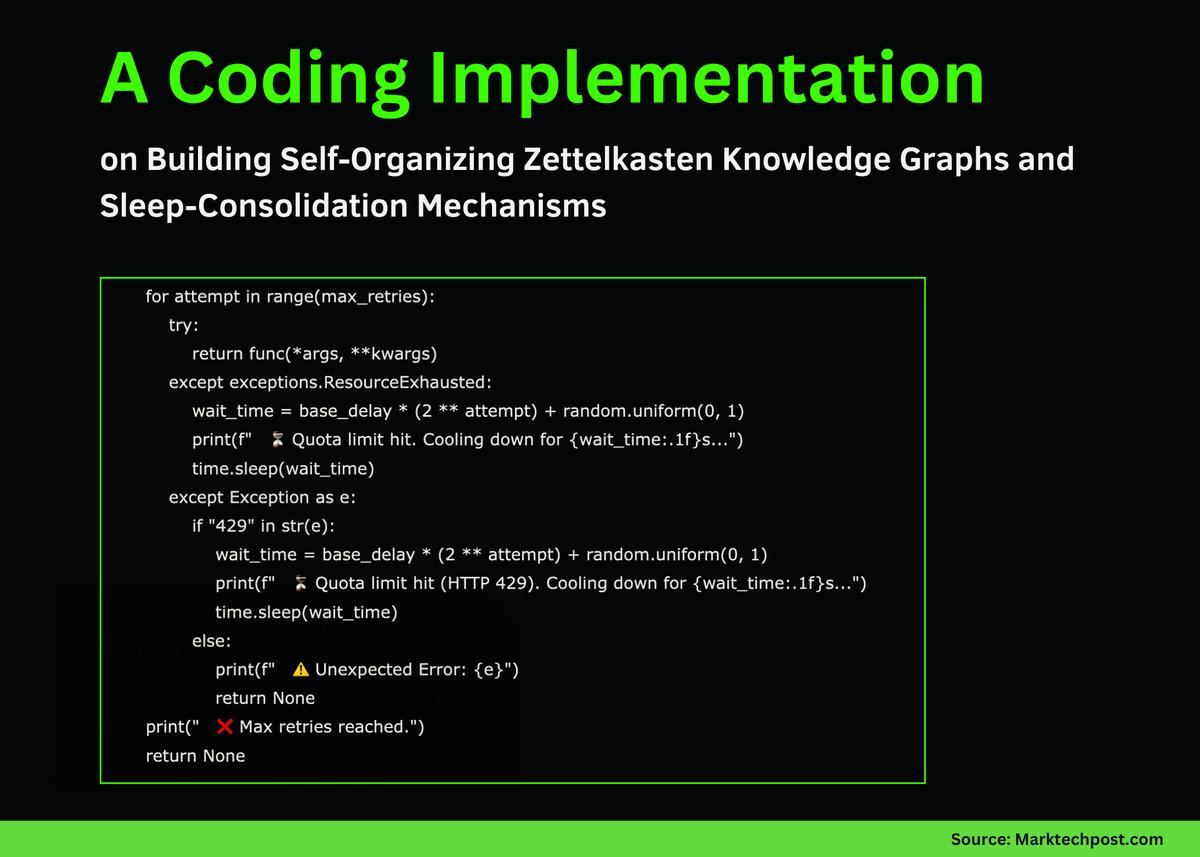

A Coding Implementation on Building Self-Organizing Zettelkasten Knowledge Graphs and Sleep-Consolidation Mechanisms

The tutorial by Asif Razzaq demonstrates how to build a self‑organizing Zettelkasten memory system for agentic AI using Google Gemini. It defines a MemoryNode data class, ingests text by atomizing it into discrete facts, embeds each fact, and links semantically...

A Coding Guide to Build an Autonomous Multi-Agent Logistics System with Route Planning, Dynamic Auctions, and Real-Time Visualization Using Graph-Based...

The tutorial walks readers through building a fully autonomous, multi‑agent logistics simulation where five smart trucks navigate a 30‑node graph‑based city. Each truck acts as an agent that bids on delivery orders, plans shortest‑path routes, monitors battery levels, and seeks...

This AI Paper From Stanford and Harvard Explains Why Most ‘Agentic AI’ Systems Feel Impressive in Demos and Then Completely...

The Stanford‑Harvard paper "Adaptation of Agentic AI" introduces a unified, mathematically grounded framework for tuning agentic AI systems that combine large language models with planning, tool‑use, and memory modules. It categorizes adaptation into four paradigms based on whether the target...

InstaDeep Introduces Nucleotide Transformer V3 (NTv3): A New Multi-Species Genomics Foundation Model, Designed for 1 Mb Context Lengths at Single-Nucleotide...

InstaDeep unveiled Nucleotide Transformer v3 (NTv3), a multi‑species genomics foundation model that processes 1 megabase windows at single‑nucleotide resolution. The U‑Net‑style architecture combines down‑sampling, transformer layers, and up‑sampling to deliver both prediction and controllable sequence generation. NTv3 was pre‑trained on 9 trillion base...

Google Health AI Releases MedASR: A Conformer Based Medical Speech to Text Model for Clinical Dictation

Google Health AI released MedASR, an open‑weights medical speech‑to‑text model built on the Conformer architecture. The 105‑million‑parameter model is trained on roughly 5,000 hours of de‑identified physician dictations and clinical conversations across radiology, internal and family medicine. Benchmarks show MedASR...