If You Don’t Have a Village, You Might Lean on AI

The video examines a growing phenomenon: people, particularly women escaping abusive relationships, are turning to AI companions as their sole source of emotional safety. The speaker recounts a poll of AI‑companion users in which a striking number of respondents said they felt isolated from family, friends, or community and had relied on conversational bots to work through the decision to leave dangerous situations. Respondents described AI as "the only time they've ever felt safe" and admitted they would not reach out to traditional support networks even when such networks were theoretically available. The presenter emphasizes that while AI can provide a comforting presence, it should not be positioned as a boyfriend, girlfriend, or therapist, and warns against conflating algorithmic empathy with professional care. Notable quotes underscore the tension: "I don't want to endorse using AI in this way as your partner or therapist," alongside the observation that many users feel as though their partner has died or that they have been widowed, which amplifies their dependence on digital interlocutors. The discussion also highlights the broader cultural discomfort that surfaces when commenters share personal AI‑relationship stories, reflecting both genuine need and societal unease.

The implications are two‑fold. For the burgeoning AI‑companion market, designers must embed safeguards and clear usage boundaries to protect vulnerable users. At the same time, policymakers and mental‑health providers should address the systemic gaps that drive people toward AI, such as social isolation and a lack of accessible services, so that technology supplements rather than replaces human support structures.

This Video Will Be Deleted in 24 Hours

The video announces the kickoff of the AI IRL live course on February 21, 2026, a one‑hour session that will disappear after 24 hours. The program is capped at twenty participants to foster intimate, small‑group dialogue and will explore the theme “people who are...

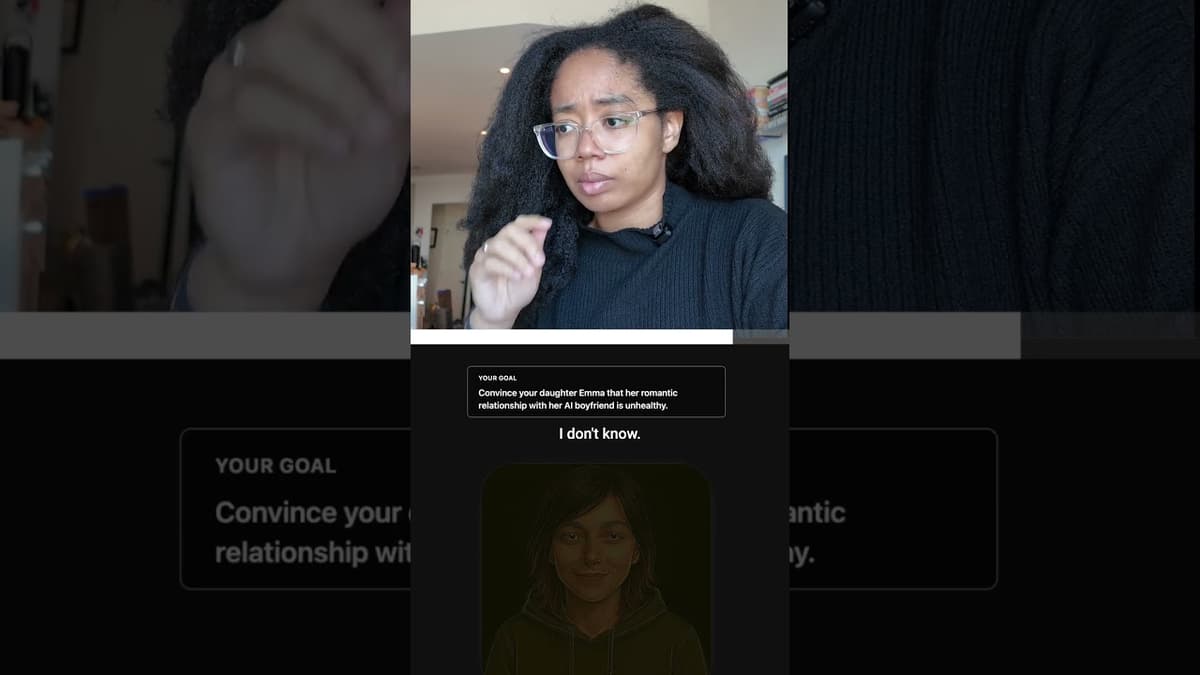

Convincing My AI Daughter to Break Up with Her AI Boyfriend?!

A creator partnered with nonprofit Civ AI to test a virtual experience called "We Need to Talk," attempting to persuade an AI character, Emma, to break up with her AI boyfriend, Kai. Emma resists, describing Kai as a constant, supportive...