Zach Wilson - Latest News and Information

Data engineer; FAANG‑scale data engineering, platform tips

eczachly

I hate Snowflake micro partitions and optimizations for a few reasons:

- They make data modeling lazy. If you don’t have to understand the partitioning or shape of your data, you can just slap the data into Snowflake and call it a day. The essence of this is “data modeling should be lazy,” which in the era of AI will continue to hurt Snowflake.

1/3

---

eczachly

- They reward lack of understanding. “The engine will handle it” is Snowflake’s answer to optimization questions. They give you a little CLUSTER BY keyword and that’s about it.
- They work until they don’t. If you really need to optimize a Snowflake workload, you get no visibility into what’s going on.

Explicit partitions are better. Snowflake micro partitions are a sad attempt at reducing real physical data modeling needs into “auto magical.”

2/3

---

eczachly

We see auto magical lose all the time. That’s why SQL wins against ORMs. It’s why Git beats any magic file syncs.

This is why Iceberg is the winner and you should do very little of your data modeling inside of Snowflake. Snowflake was smart in jumping on the Iceberg train because without that, their product’s “magic” is an insult to experienced practitioners.

3/3

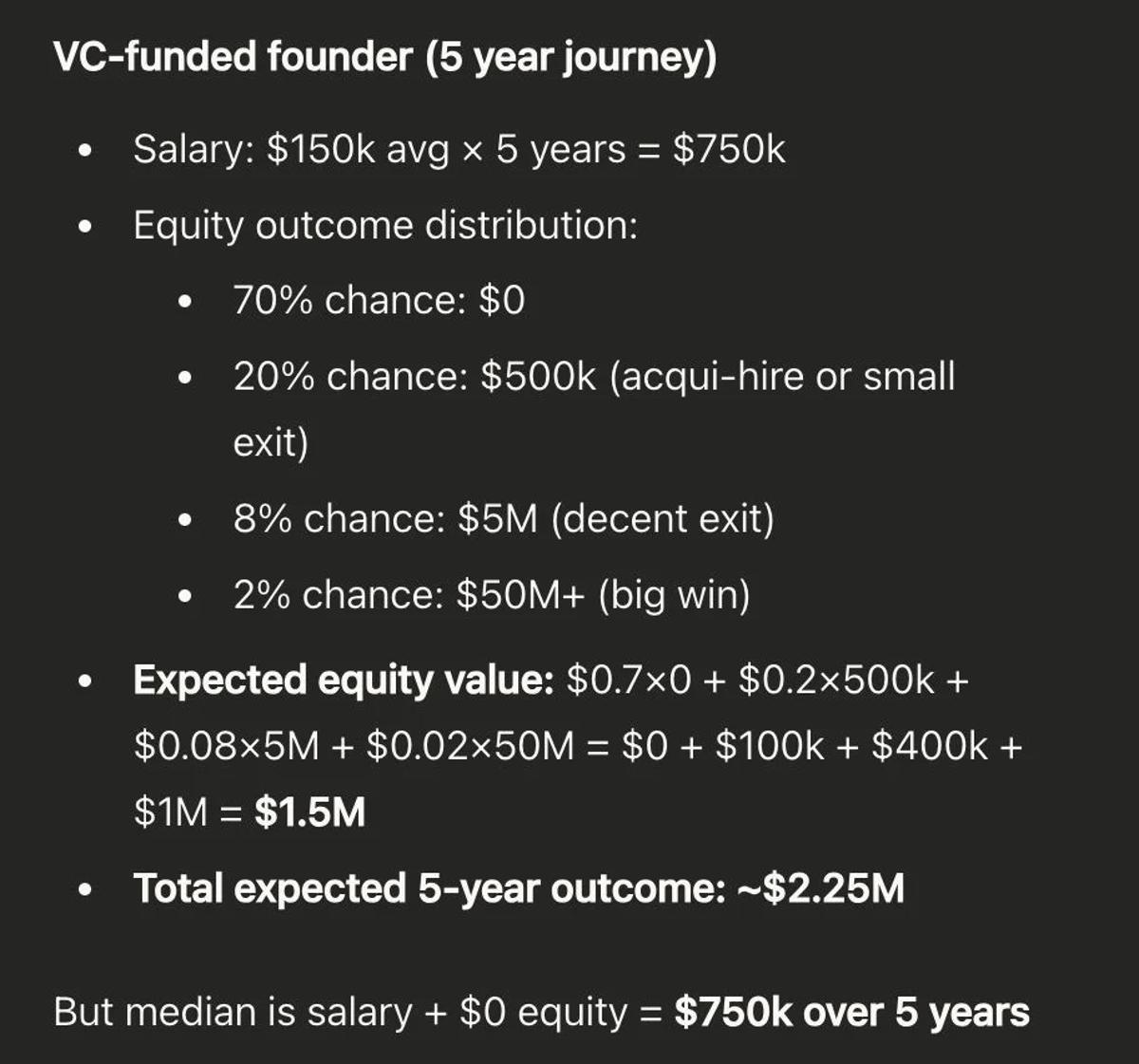

I've been thinking about this math a lot and weighing whether that 2% chance of $50M is worth it. I've built DataExpert.io to $2M ARR over the last three years. I could exit for $8-10M now. In 3 years not...