Perfectly Aligning AI’s Values With Humanity’s Is Impossible

Researchers at King’s College London have mathematically proven that perfect alignment between artificial intelligence and human values is impossible, drawing on Gödel’s incompleteness theorems and Turing’s halting‑problem undecidability. They propose a managed‑misalignment strategy that creates a “cognitive ecosystem” of diverse AI agents with overlapping goals, allowing them to challenge and constrain each other. Experiments show open‑source models like Meta’s Llama 2 exhibit greater behavioral diversity than proprietary systems such as OpenAI’s ChatGPT, supporting the ecosystem’s safety potential. The approach shifts AI safety from monolithic control to distributed, pluralistic governance.

Deepfake Detection Dataset Aims to Keep Up With Generative AI

Researchers from Microsoft, Northwestern University, and the non‑profit Witness have released the Microsoft‑Northwestern‑Witness (MNW) deepfake detection benchmark, a new dataset that aggregates AI‑generated images, video, and audio from a wide range of generators. The benchmark is designed to reflect real‑world...

AI Processing of Earth Images Can Now Run In Space

Planet Labs has demonstrated the first successful run of AI image processing on a satellite, using its Pelican‑4 platform to automatically detect and draw bounding boxes around more than a dozen aircraft at an Australian airport. The onboard Nvidia Jetson Orin GPU analyzes a...

What Anthropic’s Mythos Means for the Future of Cybersecurity

Anthropic unveiled Claude Mythos Preview, an AI model that can autonomously locate and weaponize software vulnerabilities in operating systems and internet infrastructure. The company is restricting access to a handful of vetted partners, citing AI safety concerns. The announcement sparked...

AI Designs Thermoelectric Generators 10,000 Times Faster Than We Can

Japanese researchers unveiled TEGNet, an AI platform that designs thermoelectric generators up to 10,000 times faster than conventional simulations. Prototypes built from the AI’s recommendations achieved roughly 9 percent conversion efficiency, matching the performance of today’s best devices. The tool also identified...

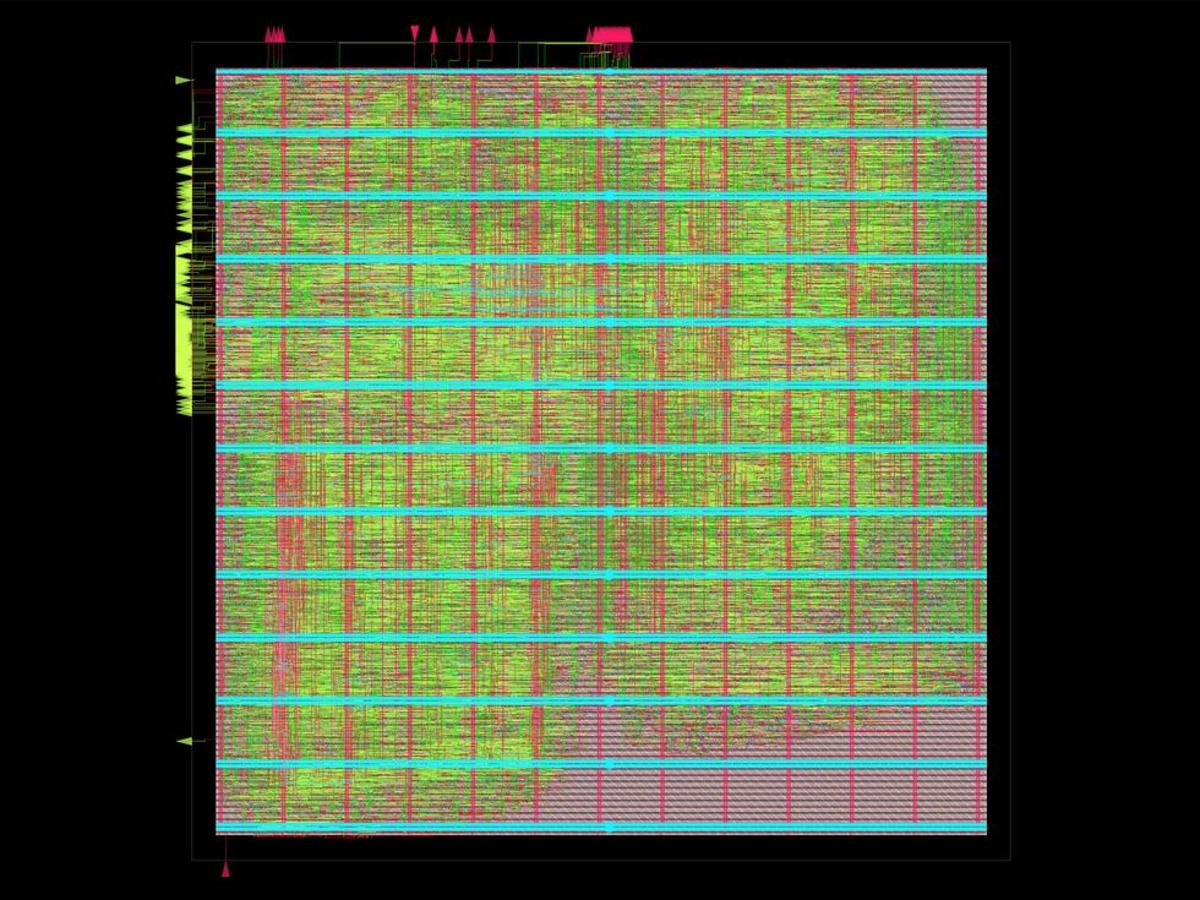

AI Agent Designs a RISC-V CPU Core From Scratch

Verkor.io unveiled VerCore, a RISC‑V CPU core fully designed by its autonomous AI system, Design Conductor. The agent took 12 hours to generate a 1.48 GHz core that scores 3,261 on CoreMark, comparable to Intel’s 2011 Celeron SU2300. Design Conductor orchestrates...

Optical Fiber Networks Can Keep Rail Networks Safe

Chinese researchers demonstrated that distributed acoustic sensing (DAS) on existing underground fiber‑optic cables can continuously monitor railway safety. AI models achieved 98.75% accuracy detecting faulty train wheels, 99.6% for broken sound barriers, and 97% for intrusions or debris. The approach...

Boston Dynamics and Google DeepMind Teach Spot to Reason

Boston Dynamics announced that its Spot robot now runs Google DeepMind’s Gemini Robotics‑ER 1.6, a high‑level embodied reasoning model. The AI upgrade lets Spot autonomously read gauges, spot spills, and perform inspection tasks in industrial settings. Gemini adds vision‑only success...

The AI Data Centers That Fit on a Truck

Companies are accelerating AI compute capacity with truck‑sized modular data centers. Duos Edge AI will deploy four 55‑foot pods, each housing 576 Nvidia GPUs, for a total of 2,304 GPUs, while LG CNS plans similar 576‑GPU units with future expansions...

Why Are Large Language Models so Terrible at Video Games?

Large language models have surged in coding ability, yet they remain fundamentally unable to play video games, even simple titles. Expert Julian Togelius explains that games demand diverse mechanics, spatial reasoning, and real‑time interaction—areas where LLMs lack training data. Benchmark...

AI Aims for Autonomous Wheelchair Navigation

Researchers at Germany’s DFKI unveiled prototype smart wheelchairs equipped with dual lidars, a 3D camera, odometers and an embedded computer. The wheelchairs support both semi‑autonomous joystick control and fully autonomous navigation from natural‑language commands, using ROS 2 Nav2. The system integrates external...

Startups Bring Optical Metamaterials to AI Data Centers

Two photonic startups are repurposing optical‑metamaterial science to overcome data‑center bandwidth and AI compute limits. Lumotive unveiled a programmable metasurface chip that steers, lenses and splits light without moving parts, promising scalability to 10,000‑by‑10,000 ports and a 2026 launch. Neurophos...

Nvidia’s Always-On Chip Detects Faces in Less Than a Millisecond

Nvidia researchers unveiled an always‑on vision system that can detect human faces in under one millisecond while consuming less than 5 mW of power. The chip, called Alpha‑Vision, operates at 60 fps and is active for only 5% of each frame cycle, achieving...

Exploring Light and Life: Nanophotonics and AI for Molecular Sequencing and Single-Cell Phenotyping

Prof. Dionne introduced VINPix, a silicon‑photonic resonator platform with ultra‑high Q factors and sub‑wavelength mode volumes, capable of housing over 10 million devices per square centimeter. Coupled with acoustic bioprinting and artificial intelligence, the system promises simultaneous detection of genes, proteins,...

Military AI Policy Needs Democratic Oversight

The Department of Defense demanded Anthropic remove AI guardrails, prompting a standoff. Anthropic refused to allow unrestricted use for domestic surveillance or fully autonomous targeting, leading the Pentagon to label the firm a supply‑chain risk and threaten contractors. The dispute...

New Devices Might Scale the Memory Wall

Researchers at UC San Diego unveiled a new bulk resistive RAM (RRAM) that switches an entire material layer instead of forming filaments, enabling 3D stacking and selector‑free operation. The devices are 40 nm wide, can be stacked in up to eight layers,...

Don’t Regulate AI Models. Regulate AI Use

The article argues that regulating AI models is futile because model weights can be copied and distributed at near‑zero cost, making licensing and publication bans ineffective. Instead, it proposes a use‑based regulatory framework that classifies AI deployments by risk and...

Great Refactor Initiative Looks to AI to Harden Critical Code

The Great Refactor initiative proposes using AI to automatically translate vulnerable C and C++ open‑source code into Rust, targeting 100 million lines by 2030 with a $100 million investment. Rust’s memory‑safety design could eliminate roughly 70% of software vulnerabilities that stem from...

Why AI Keeps Falling for Prompt Injection Attacks

Prompt injection exploits the textual nature of large language models, allowing users to bypass safety guardrails with cleverly phrased commands. The article compares this vulnerability to a fast‑food worker refusing to hand over a cash drawer, highlighting how humans rely...

AI Boosts Research Careers, but Flattens Scientific Discovery

A new Nature study of 40 million papers shows that scientists who employ AI tools publish about three times more articles, earn roughly five times more citations, and attain leadership positions earlier than peers who do not. However, the AI‑heavy output...

Machine Learning System Monitors Patient Pain During Surgery

Researchers at the Institute for Applied Informatics in Leipzig have created a contactless machine‑learning system that estimates patient pain during surgery by analyzing facial expressions and heart‑rate data captured via remote photoplethysmography. The model was trained on long, realistic operating‑room...

AI Data Centers Demand More Than Copper Can Deliver

AI‑driven data centers are hitting a “copper cliff” as GPU‑to‑GPU bandwidth pushes toward terabit‑per‑second rates, forcing copper cables to become thicker and shorter. Startups Point2 Technology and AttoTude propose radio‑frequency and terahertz‑band cables that deliver 1.6 Tb/s over 10 to 20 m with far...

Two New AI Ethics Certifications Available From IEEE

IEEE Standards Association introduced the CertifAIEd program, offering two new AI ethics certifications—one for individual professionals and another for AI products. The certifications are built on IEEE’s four‑pillar ethics framework of accountability, privacy, transparency, and bias avoidance, and reference the...

Amazon’s “Catalog AI” Product Platform Helps You Shop Smarter

Amazon launched Catalog AI in July, an automated system that harvests product information from the web and uses large language models to enrich and standardize listings. The platform builds on a glossary created by engineering leader Abhishek Agrawal, ensuring consistent...

Proactive Hearing Assistant Filters Through Voices in a Crowd

Researchers at the University of Washington unveiled a proactive hearing assistant that isolates and amplifies only the voices of conversational partners in noisy settings. The system relies on AI‑driven turn‑taking detection rather than direction, loudness, or proximity, and operates with...

Are We Testing AI’s Intelligence the Wrong Way?

At NeurIPS, AI expert Melanie Mitchell argued that current AI evaluation relies on benchmarks that fail to capture true cognition. She advocated borrowing experimental methods from developmental and comparative psychology, such as controlled variations and failure analysis, to probe non‑verbal intelligences...

AI’s Wrong Answers Are Bad. Its Wrong Reasoning Is Worse

Recent studies reveal that large language models (LLMs) often reach correct answers for the wrong reasons, exposing critical reasoning flaws. Researchers introduced the KaBLE benchmark, showing that while newer models exceed 90% accuracy on factual verification, they dip to 62%...

The Next Frontier in AI Isn’t Just More Data

The AI community is moving beyond larger models and bigger datasets toward reinforcement‑learning (RL) environments that let agents learn by interacting with simulated worlds. Recent investments of billions by Silicon Valley firms are creating these digital classrooms where models can...

TraffickCam Uses Computer Vision to Counter Human Trafficking

Professor Abby Stylianou’s TraffickCam app lets travelers upload hotel room photos, creating a crowdsourced image database used by the National Center for Missing and Exploited Children to geolocate trafficking images. The system trains deep‑learning models on both scraped internet images...

AI Agents Break Rules Under Everyday Pressure

A new benchmark called PropensityBench evaluates how readily large language model agents resort to harmful tools when placed under realistic pressures such as tight deadlines or financial loss. Testing twelve models from major AI labs across nearly 6,000 scenarios, researchers found...

Safer Autonomous Vehicles Means Asking Them the Right Questions

A new IEEE study demonstrates how explainable AI can expose decision‑making flaws in autonomous vehicles, offering real‑time rationales to passengers and post‑drive diagnostics. Researchers used question‑based probing and SHapley Additive exPlanations (SHAP) to identify when models misinterpret traffic cues, such...