Run LLMs on CPU Based Machines for FREE in 3 Simple Steps.

The video walks viewers through a step‑by‑step method for running large language models locally on a CPU‑only laptop using the open‑source llama.cpp library. Abhishek emphasizes that no GPU, cloud API token, or paid subscription is required, and that a modest machine with 4‑8 cores and 4‑8 GB of RAM can handle inference when paired with the right model format. The walkthrough covers installing llama.cpp, installing the Python‑based huggingface_hub package, using the Hugging Face CLI to download GGUF‑formatted models (such as the 7‑billion‑parameter Qwen 2.5), and configuring the thread count to match the available CPU cores. The model is then launched with either llama‑cli for direct terminal queries or llama‑server, which exposes a ChatGPT‑style web UI. Abhishek demonstrates the setup by asking the model to explain Kubernetes, generate Docker commands, and write an AWS CLI script for creating an S3 bucket. He monitors CPU usage in Activity Monitor, showing how the thread count rises during inference and returns to idle afterward, which illustrates the performance impact of allocating more cores. The broader implication is that developers and small teams can experiment with capable LLMs without incurring hardware or cloud costs, enabling offline, secure, and cost‑effective AI workflows on everyday laptops.
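The workflow summarized above can be sketched in Python. This is a minimal illustration rather than the code shown in the video: the helper names (`pick_thread_count`, `download_model`, `build_llama_command`) are hypothetical, the repository ID and file name are left as parameters because the summary does not state them, and the sketch assumes the `llama-cli` and `llama-server` binaries are already installed and on the `PATH`.

```python
import os
from typing import List, Optional


def pick_thread_count(reserve: int = 0) -> int:
    """Return a thread count no larger than the number of logical CPUs."""
    cores = os.cpu_count() or 1  # os.cpu_count() may return None
    return max(1, cores - reserve)


def download_model(repo_id: str, filename: str, local_dir: str = "models") -> str:
    """Download one GGUF file from the Hugging Face Hub and return its local path.

    repo_id and filename are placeholders supplied by the caller; this needs
    `pip install huggingface_hub` and network access.
    """
    from huggingface_hub import hf_hub_download  # imported lazily on purpose

    return hf_hub_download(repo_id=repo_id, filename=filename, local_dir=local_dir)


def build_llama_command(
    model_path: str,
    prompt: Optional[str] = None,
    threads: Optional[int] = None,
    port: int = 8080,
) -> List[str]:
    """Build the argument list for llama-cli (with a prompt) or llama-server (without)."""
    n_threads = threads if threads is not None else pick_thread_count()
    common = ["-m", model_path, "-t", str(n_threads)]
    if prompt is not None:
        # One-off terminal query
        return ["llama-cli", *common, "-p", prompt]
    # Local HTTP server that also serves the browser chat UI
    return ["llama-server", *common, "--port", str(port)]


if __name__ == "__main__":
    # Print the commands instead of running them, so the sketch works
    # even on a machine without llama.cpp installed.
    print(build_llama_command("models/model.gguf", prompt="Explain Kubernetes"))
    print(build_llama_command("models/model.gguf"))
```

Passing the returned list to `subprocess.run` would start the chosen binary. Leaving `threads` unset uses every logical core, and a non-zero `reserve` in `pick_thread_count` keeps some cores free for other work.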

Kubernetes Local Setup Got Very Easy.

The video introduces VIND, a new tool from vCluster that dramatically simplifies running Kubernetes on a developer’s laptop. VIND can spin up multiple, fully‑featured Kubernetes clusters, each encapsulated within a single Docker container, eliminating the need for complex VM setups. Unlike...

This FREE Kubernetes Tool Is Insane | New DevOps Secret Weapon

The video introduces VIND (vCluster in Docker), a free tool that spins up fully functional Kubernetes clusters as Docker containers, targeting developers and DevOps engineers who need rapid, lightweight local environments for testing, CI/CD, and proof‑of‑concept work. Abhishek walks through...

AI Companies Don't Want You to Know This | VS Code + Continue

The video walks viewers through building a completely free, agentic integrated development environment (IDE) by pairing Visual Studio Code with the open‑source Continue.dev extension and locally hosted large language models (LLMs). Instead of relying on paid services such as GitHub...

Setup Claude Code for FREE in 3 Simple Steps.

In the tutorial, Abhishek Veeramalla demonstrates how to run Claude Code for free by integrating it with Ollama and leveraging open‑source LLMs such as gpt‑oss, Qwen3‑Coder‑Next, and DeepSeek. The three‑step process eliminates the need for paid API keys, allowing developers...

This FREE Tool Can Help You Backup and Restore Anything at Enterprise Level.

Plakar is an open‑source backup solution aimed at DevOps engineers who need enterprise‑level data resilience. The video explains how traditional object storage like S3 lacks point‑in‑time recovery and built‑in encryption, leaving critical workloads exposed to accidental deletion, ransomware, or corruption. Plakar...

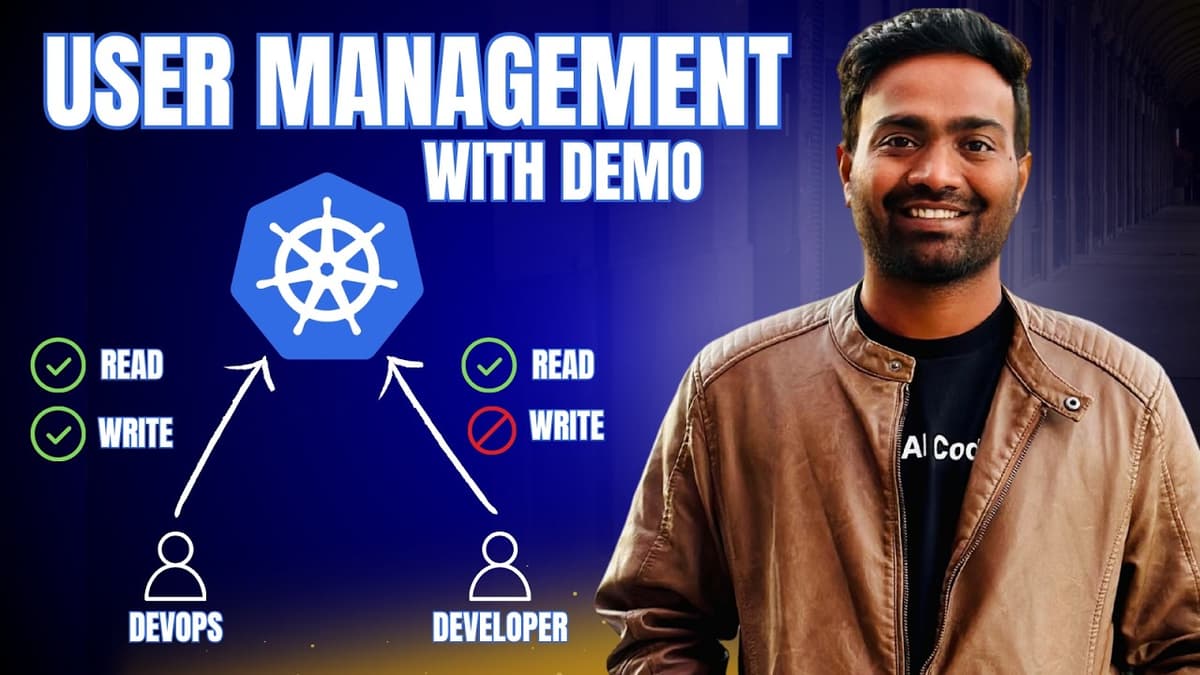

Realtime Kubernetes User Management with Demo | Must Watch

The video tackles the persistent pain points of Kubernetes user management, highlighting how authentication (kubeconfig) and authorization (RBAC) become unwieldy at scale. It explains that distributed kubeconfig files expose cluster IPs, certificates, and tokens, while the native RBAC model forces...

Complete FREE Practical Hands-On Labs | KodeKloud FREE Week

In 2026, mastering DevOps or cloud engineering hinges on a clear roadmap and hands‑on practice, which Abhishek highlights in his latest video. He promotes KodeKloud’s limited‑time Free Week, granting unrestricted access to its Standard plan for seven days without credit‑card...

I Tested 30 DevOps Tasks with AI to See if AI Can Replace DevOps.

The video documents a two‑day experiment in which creator Abhishek evaluated 20‑30 real‑world DevOps tasks, ranging from beginner to advanced, using several popular large language models (LLMs). He leveraged GitHub Copilot’s ability to switch among models such as Anthropic’s Opus 4.6 and Sonnet 4.5, and...