AI Runs Locally on iPhone 17, No Subscription Required

This is insane. Adrien's running the new Qwen 3.5 locally on an iPhone 17 in airplane mode 🤯 Nothing leaves his phone. There’s ZERO subscription attached. AI subs are now optional. https://t.co/moeq49i6aX https://t.co/u98zDLxQ9G

Scrapling Gives AI Agents Unlimited, Adaptive Web Access

🚨 Give your AI agent unrestricted internet access now. @Scrapling_dev just crossed 20k ⭐ on GitHub 🤯 Here is why it is the ultimate backbone for modern web agents. It solves the "Brittle Tool" problem. When a website renames a CSS class, normal bots...

MaxClaw: Free Credits, No API Fees, Flexible AI Tasks

This seems like one of the most cost-effective ways to deploy @OpenClaw rn. MaxClaw removes all API fees and tech hurdles so you can focus on building 💥 Their workflow is uber flexible: → start a task on Telegram → review results on their web...

Claude Code Adds Voice Mode for Faster Prompts

🚨 Voice Mode is officially coming to Claude Code. Currently rolling out to ~5% of users (Pro, Max, Team, and Enterprise). It's super simple to use. Here's how: 1️⃣ Use `/voice` to enable 2️⃣ Hold Space to talk, then release 3️⃣ Mix typing and voicing...

When AI Tools Crash, Boss Still Demands Rewrite

My face when my boss asks me to rewrite that article real quick but Gemini and Claude are down https://t.co/05L5K13Wqn

Gemini 3.1 Arrives, Nature Healing, All Is Well

no Gemini 3.0 Pro, but we got Gemini 3.1. Nature is healing and all is well. https://t.co/3fhraJgyOv

Claude 4.6 Reveals Blueprint for Perfect Prompts

This guy literally shared the anatomy of the perfect prompt in Claude 4.6 🧵 ↓ https://t.co/V10gEBOOvP

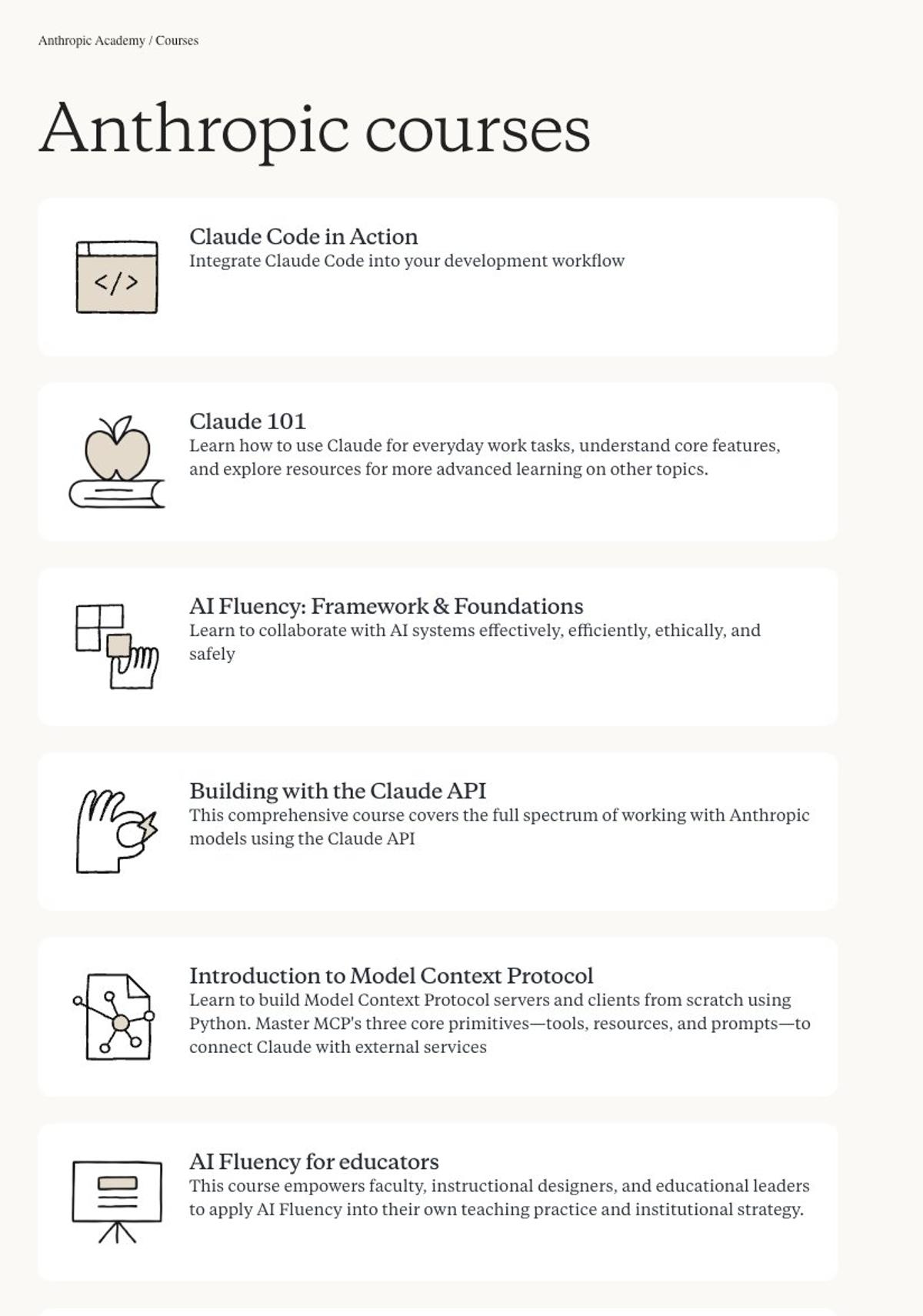

Anthropic Offers Free AI Mastery Courses with Certificates

In case you were living under a rock this weekend, Anthropic has launched courses to master AI, certificates included, for $0.00 → https://t.co/AvC7x0FuxS https://t.co/xv990hlLj6

CEO’s Trump‑Pentagon Response Becomes Anthropic’s Strongest Advertisement

The response from Anthropic's CEO to Trump's order and the Pentagon clash is the best ad for Anthropic ever https://t.co/ACsEDGBiws

Anthropic Launches Free, Comprehensive AI Curriculum

Anthropic has quietly dropped a massive curriculum of free courses covering the entire AI ecosystem. The syllabus is STACKED 🔥 → Claude Code: CLI automation for your workflow → MCP Mastery: building custom tools and resources in Python → API: a complete guide to...

Google Launches Gemini Code Wiki for Free Repo Docs

ICYMI, Google dropped Gemini Code Wiki. A free tool that generates interactive docs from any GitHub URL 🤯 The tool lets you: → Chat with Gemini to understand *any* repo → Visualize code structure → Create docs from public repos. 100% free. Link in 🧵↓ https://t.co/1k1gCmTUQv

AI PALs Blur Reality, Remember History, Form Opinions

The line between real and AI isn't just blurred, it's GONE. The nuance on these new @tavus PALs is scary good. They don't just listen, they remember history and push back with their own opinions 🤯↓

OrchidsApp 1.0 Enables Building Any App on Any Stack

Been testing the new @orchidsapp 1.0. It's unlike anything I've seen. → Build *ANY* app → Across *ANY* stack → Web, Mobile, Agents, Python, Chrome extensions, robotics... You can even plug in your ChatGPT/Claude Code subs 🔥 8 wild use cases 🧵↓ https://t.co/VFNQWkHqiv

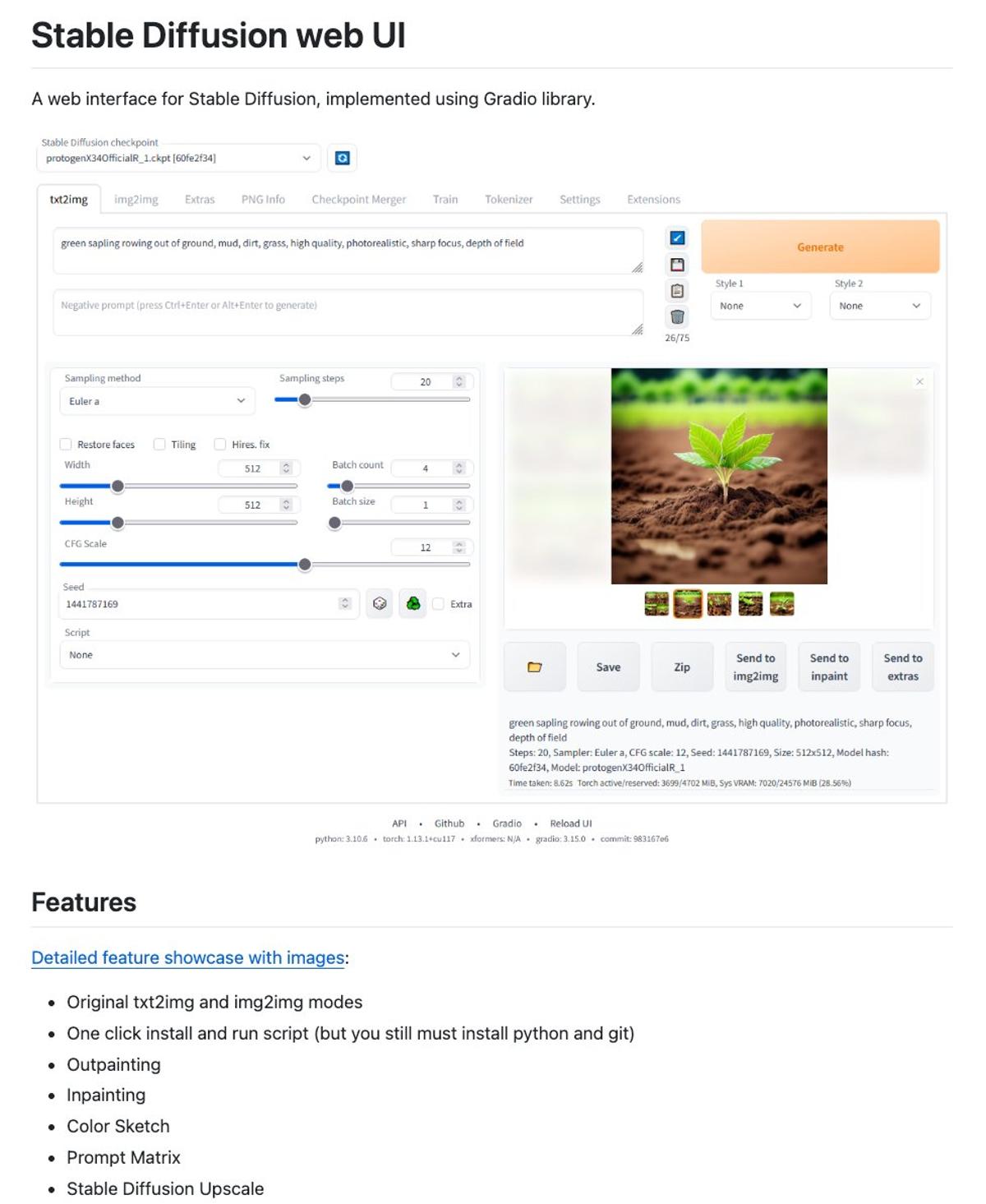

Unlimited Free AI Image Generation via Open-Source Diffusion

Someone built an open-source Stable Diffusion interface that lets you generate unlimited AI images for free 🤯 It has over 160k stars on GitHub. No limits or monthly fees. → Handles text-to-image, image-to-image, and inpainting → Runs on your own GPU → Switch between...

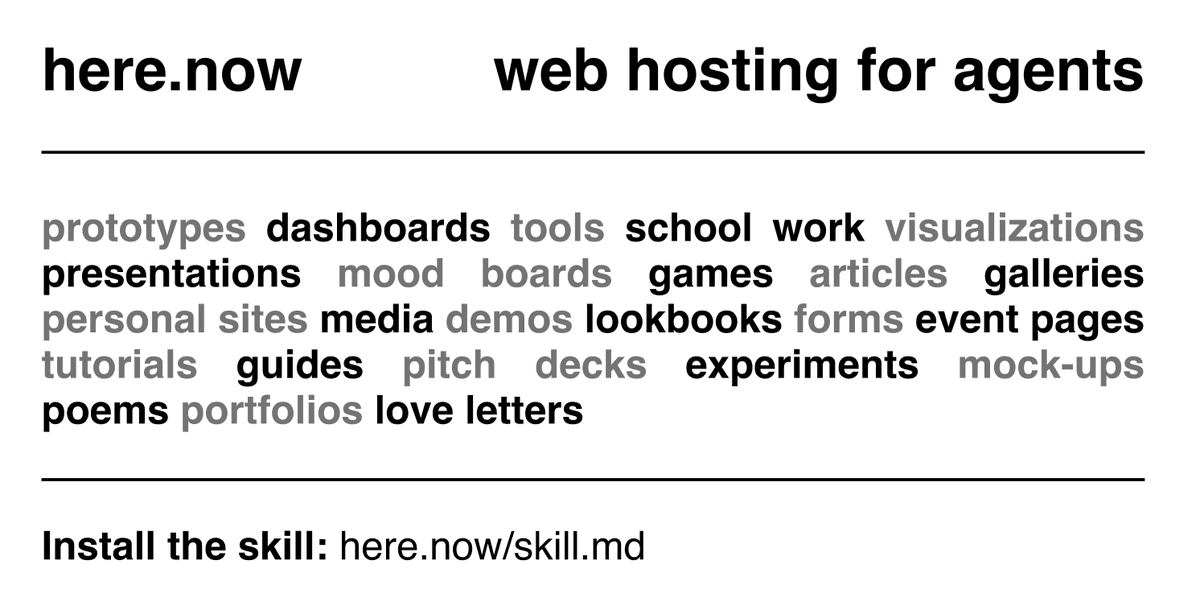

Instant Free Hosting for AI Agents, No Sign‑Up Required

🚨 If Vercel and Railway are for full-on apps, https://t.co/v46R668Id4 is for everything else. My friend @adamludwin just dropped web hosting for AI agents. Just tell your agent: "publish to https://t.co/v46R668Id4", get a URL back in seconds 🤯 Free. No sign-up required. https://t.co/BJm3jOTehK

Gemini Pro 3.1 Replaces PowerPoint with Instant Slides

PowerPoint is officially dead. With Gemini Pro 3.1, anyone can now design breathtaking slide decks in seconds 🤯 Steal the prompt below 🧵↓ https://t.co/3Q9G7kykus

Earn a Certificate with Google’s 7 AI Courses

These seven Google AI courses are a masterclass. They take you from writing simple prompts to building working web apps with no code. Best part? You earn a certificate at the end 🔥 Here are the direct links to get started 🧵↓ https://t.co/jWIxRp4V4O

ZeroClaw Runs on $10 Hardware with <5 MB RAM

Why buy a Mac Mini when you can run ZeroClaw on $10 hardware? ZeroClaw 🦀 is a new, 100% Rust-based alternative to OpenClaw that runs on $10 hardware and uses less than 5MB of RAM. That’s a 99% reduction in memory...

Agent Swarms Let Anyone Build Full Applications

🚨 @AnthropicAI just released their 2026 Agentic Coding Trends Verdict → Everyone has become a developer. We moved from single assistants to autonomous agent swarms. They now form teams, work days on full systems, and let non-techies ship full apps 💥 18-page report in...

AI Proxies with Persistent Memory Now Shipping

The fact that we can now birth, raise, and unleash an AI proxy with persistent memory is wild. Sounds like pure sci-fi... but @pika_labs is actually shipping it 🔥

Claude Code's Blueprint Revealed: Deep Design Dive

Wow. The internal blueprint for Claude Code is now public 🤯 @bcherny, creator of Claude Code, just broke the silence. → 50-minute breakdown of the build process → Deep dive into core design decisions → Zero-fluff look at past mistakes + future plans https://t.co/I1mmXLBCDz

Voicebox Dethrones ElevenLabs with Open-Source, Local TTS

ElevenLabs just lost its moat 🤯 Voicebox just dropped: → Powered by Alibaba's Qwen3-TTS for near-perfect cloning → Ships with a DAW-like "Stories Editor" → No cloud, runs locally on your machine 100% Open Source. 100% Local. Link to repo in 🧵↓ https://t.co/m7aQ5wutK3

AI Agents Get Visual Avatars with Phoenix-4

Building AI agents? Text interfaces were just the warm-up. @tavus just launched Phoenix-4 to finally give your agents a face that matches their skills 🦾 You have to see this ↓

AI Agents Now Autonomously Purchase Freelance Services

OpenClaw + Contra = Agents buying creative services 24/7 🔥 For the first time AI agents can buy from freelancers autonomously. This is an amazing development for everyone. So I put together a doc with the top 20 services agents are buying right...

GenAI Turns Specs Into Self‑healing Tests, Ending E2E Trap

E2E testing is a trap. You write the script. The UI updates. The pipeline explodes 💥 I’ve been testing Kane AI by @TestMuAI (formerly LambdaTest). It finally breaks the cycle. It’s a GenAI agent that turns plain English (or JIRA specs) into resilient, self-healing...

New AI Agent Beats ChatGPT with Persistent Memory

I’ve been testing a new AI agent that actually remembers who I am. Meet @getsurething. It hits 59.3% on the `REAL-Agent Benchmark`, crushing both OpenClaw and ChatGPT. And unlike them, it has Persistent Memory, SOC 2 security, and connects to 1000+ apps 🔥 Let's...

Karpathy Offers Free 3.5‑hour Deep Dive Into ChatGPT

Still can’t believe @karpathy released this 3.5-hour deep dive on how ChatGPT actually works for free 🤯 https://t.co/Qs7EabRgMW

Nvidia, Khosla Invest $200M to Power Instant Multilingual AI Agents

Nvidia and Khosla just put $200M into @polyaivoice, and looking at the stats, it makes sense. They already handle 500M+ calls for giants like Marriott & PG&E. Now, you can build a 45-language agent in 5 mins just from a URL. Massive...

Cara-3 Nails Micro‑expressions, Fixing AI Avatar Eye Issue

AI avatars usually feel a bit 'off'. IMO the eyes always give it away 😅 But Cara-3’s micro-expressions are actually on point 👀

SureThing Automates Gmail, Calendar, and More While You're Offline

Seriously curious about @getsurething. The fact that it can automate my Gmail, my Calendar, and a zillion other apps while I’m offline seems wild 👀

Kling 3.0 Sets New 1080p Video Generation Standard

🚨 Kling 3.0 vs Veo 3.1 vs Sora 2. I’ve been testing all 3, and Kling 3.0 takes the crown 👑 The native 1080p quality is insane. Feels like we just crossed a major threshold for video gen. Watch the showdown 🧵↓ https://t.co/SIGsdjQLCD

Cline CLI 2.0: Headless, Model-Agnostic Agents Beyond VS Code

FINALLY AI agents that don't force you into VS Code. @Cline CLI 2.0 is out 🔥 → Fully headless, open-source, and model-agnostic. → Run parallel agents in tmux or pipe them into CI/CD. → It just works where you work (Neovim/Zed included). 3 wild use cases...

Seeking Hosted Multi‑LLM Chat App with RAG Folders

Been enjoying @TypingMindApp so far. But always on the lookout for alternatives to compare. Main requirements: • Multi-LLM support • Chat folders with per-folder knowledge bases (RAG) • Hosted inference only (no local models → so no @ollama / @lmstudio) Any suggestions?

Gemini 3 Pro Replicates My Style Instantly, No Fine‑tuning

Gemini 3 Pro gets my writing style out of the box. No fine-tuning needed 😁 https://t.co/vqnDwLlf7z

Anthropic’s Super Bowl Ad Drives 11% User Growth

In other news, Anthropic got an 11% user boost from its OpenAI-bashing Super Bowl ad, data shows. Dario wins. Again. https://t.co/rqAlCrxbRO

One Day with Gemini Reshapes My View of Google

how I feel about Google rn after trying the Gemini app for a day https://t.co/AvDWD718eH

User Complaint Prompts Swift Google VP Response

My thread actually worked. From complaining about the lack of folders in Gemini to getting a "soon" from Google’s VP in just a few hours 😁

Run the Full OpenClaw Suite in Your Browser, No Mac Mini Needed

Your hosted OpenClaw, no Mac Mini required 🔥 https://t.co/N8AEGAQquE is a fully dedicated computer in your browser with EVERYTHING pre-installed → Search (Brave) → Scraping (Firecrawl) → Audio (ElevenLabs) → Media (Fal). You don't need accounts. It just works automatically.

AI Safety Lead Quits, Warns World Spiraling Apart

My morning headline: Anthropic’s AI safety lead quits, saying the world is drifting into peril as our values and actions keep diverging. Just a casual day in 2026. https://t.co/xHc7rZBTzE

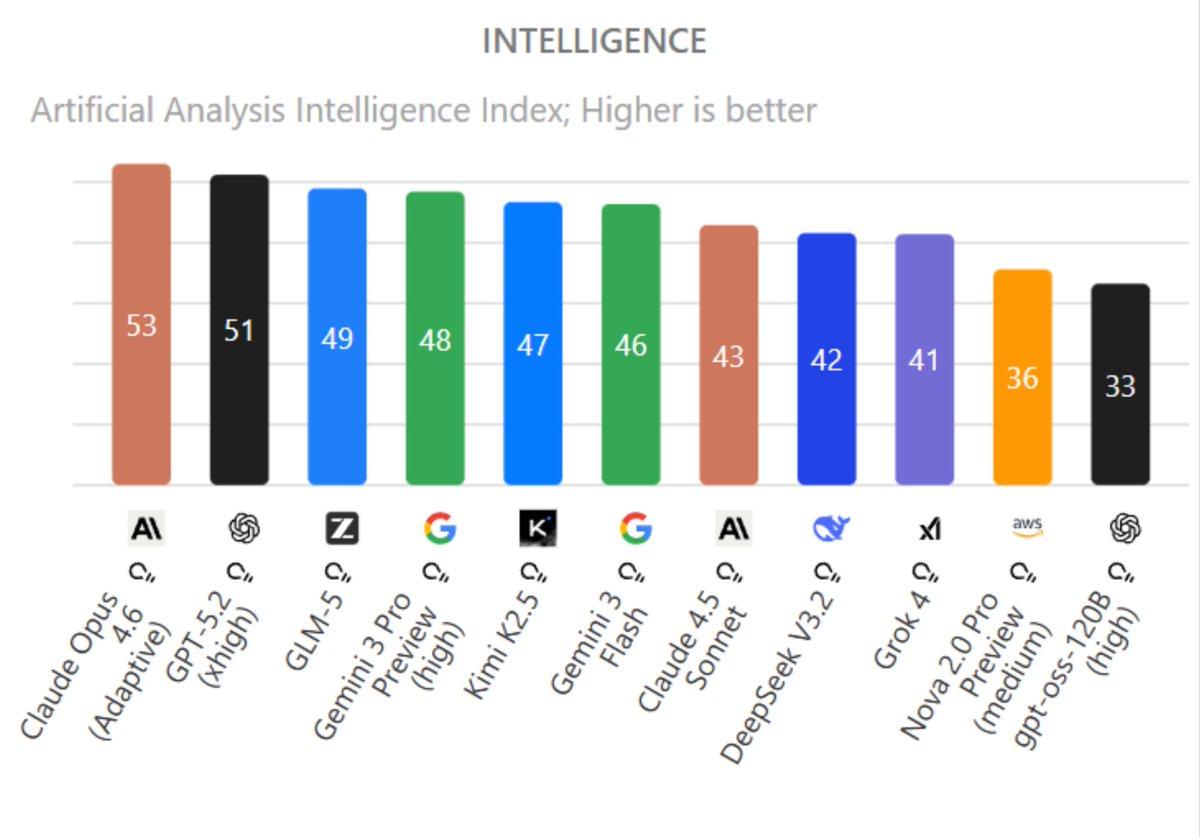

GLM‑5 Surpasses Gemini 3 Pro on Intelligence Index

GLM-5 is now the highest-scoring open-weight model, ranking above Gemini 3 Pro on @ArtificialAnlys’ Intelligence Index 🤯 https://t.co/2zWxmZSYV0

GeminiApp's Two-Year Rise From Underdog to Success

Hard to believe @GeminiApp is 2 years old already. From being the underdog (as Bard) and getting roasted online to where it is now. What. a. journey.

Distinguishing LLM Authors From Their Output Is Hard

Be honest, can you actually tell which LLM wrote something (ChatGPT, Claude etc.), just from the text?

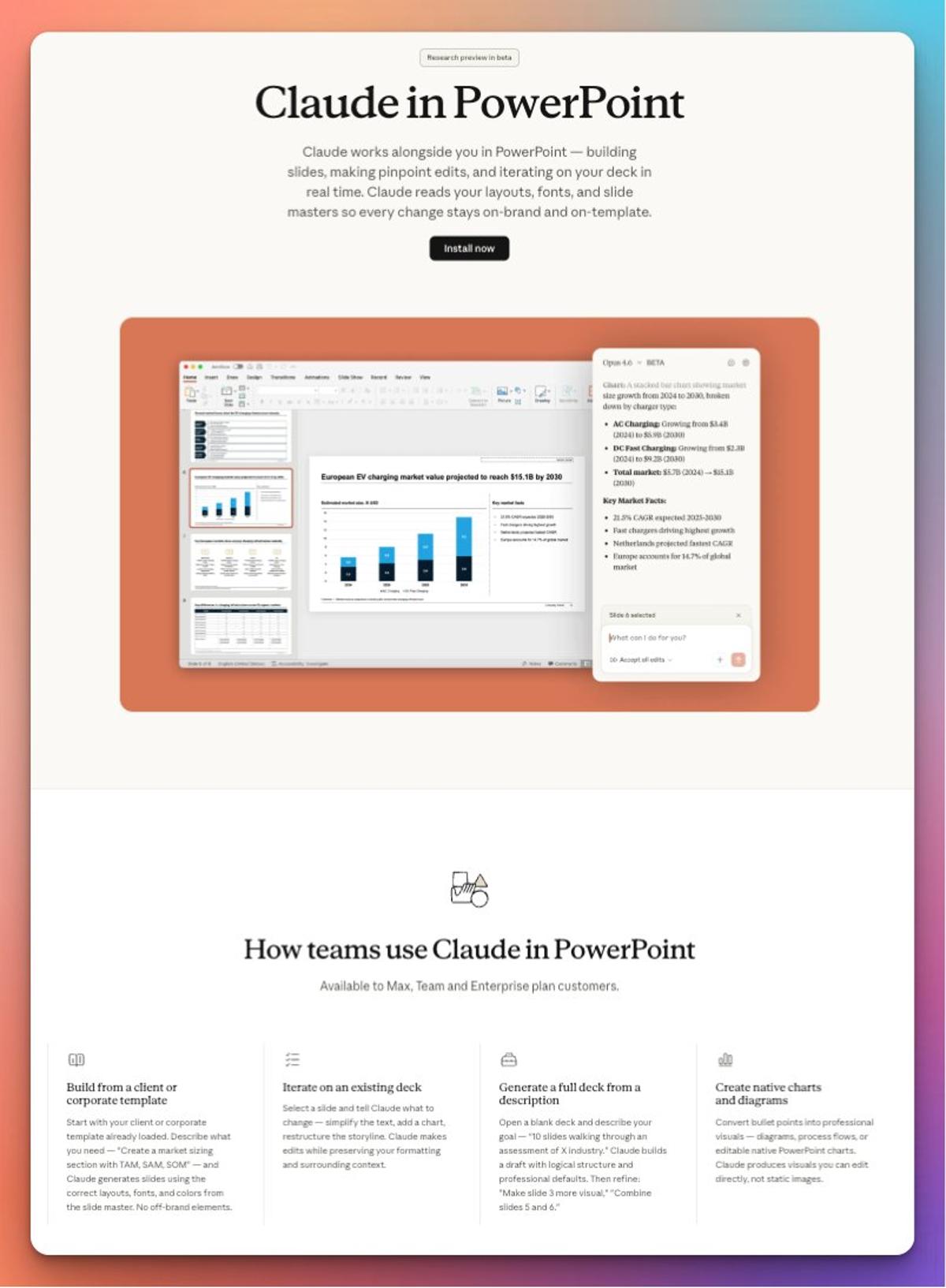

Claude AI Now Powers PowerPoint Deck Creation

🚨 Claude is now in PowerPoint, and it's just insane how good it is getting. → it works inside your template → generate full decks with logical structure → iterate to simplify → combine slides... It can even create editable diagrams directly in PPT...

Everyone's a Developer Thanks to Autonomous Agent Swarms

🚨 @AnthropicAI just released their 2026 Agentic Coding Trends Verdict → Everyone has become a developer. We moved from single assistants to autonomous agent swarms. They now form teams, work days on full systems, and let non-techies ship full apps 💥 18-page report in...

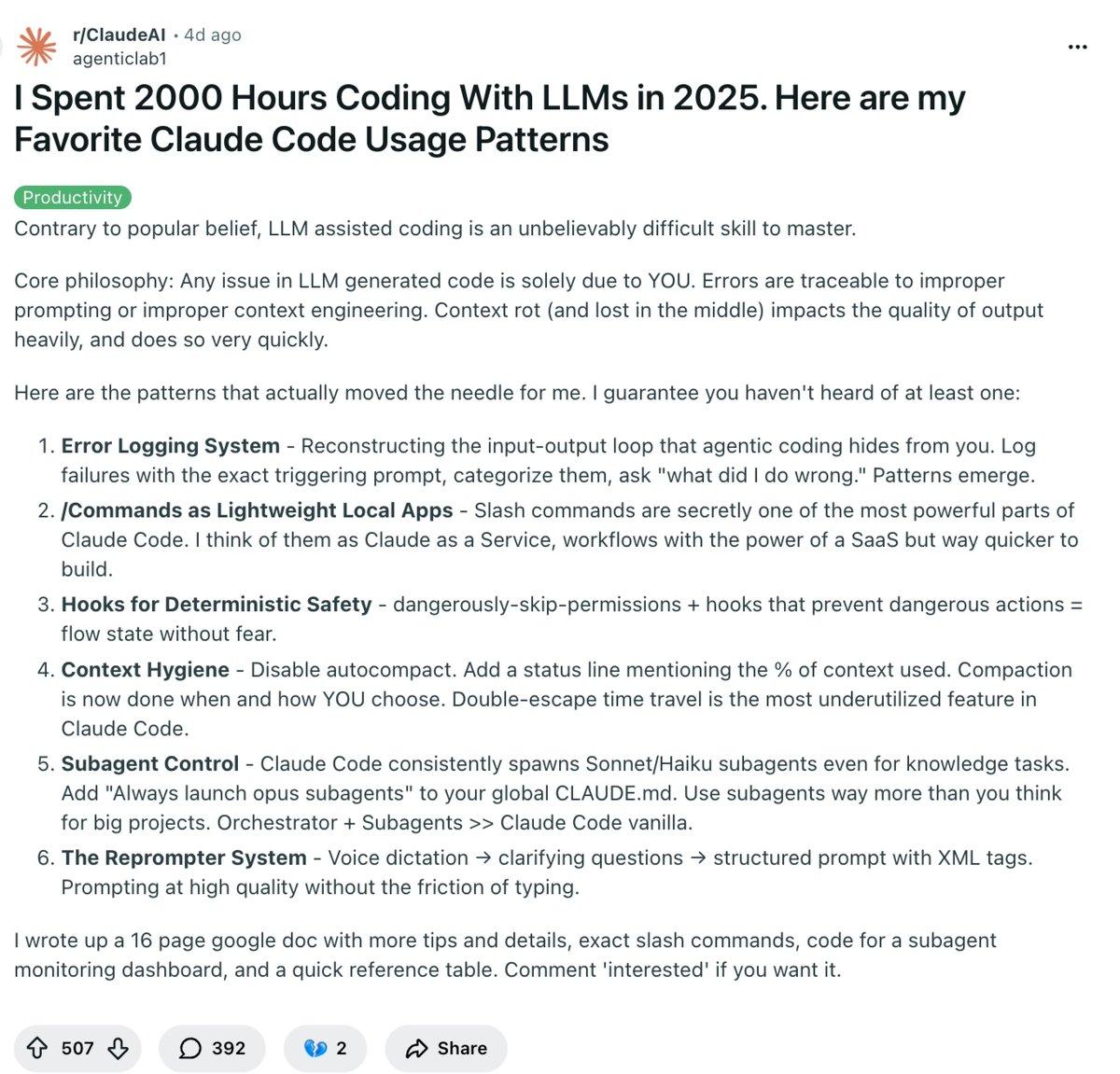

2,000 Hours of LLM Coding Distilled into Claude Code Patterns

This guy literally spent 2,000 hours coding with LLMs in 2025 and shared advanced Claude Code usage patterns that actually work. Download link to his free 17-page Google doc below 🧵↓ https://t.co/TQWSQNufRa

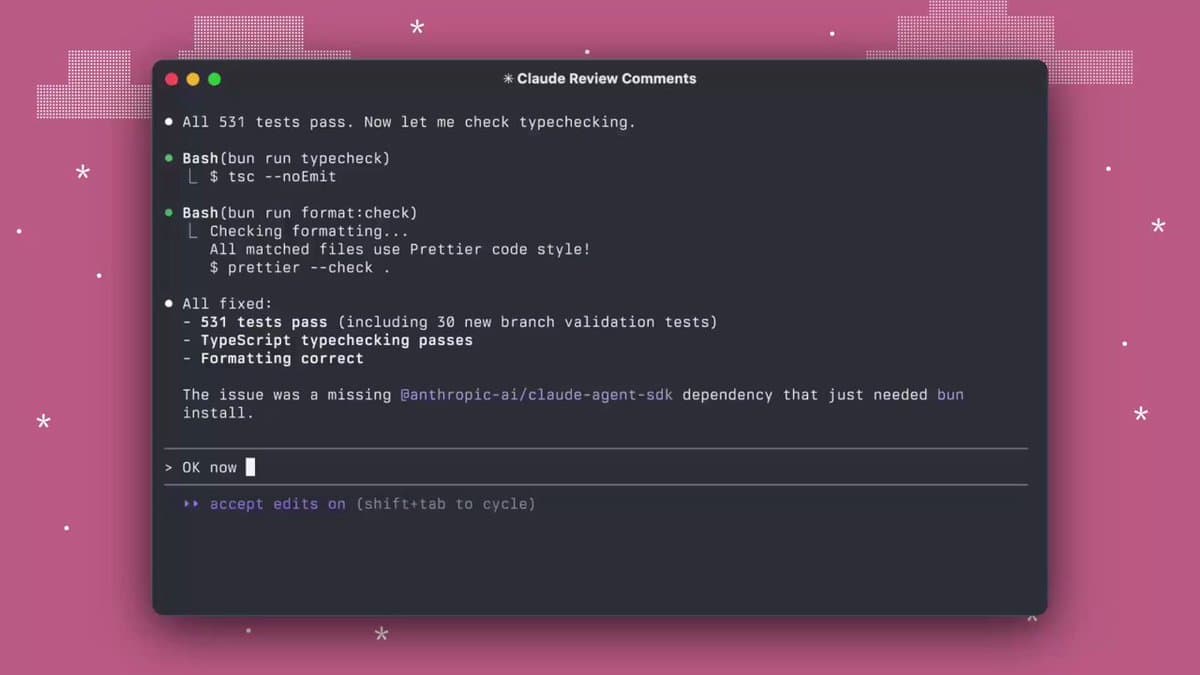

Claude Code Adds AI-Generated Next-Prompt Suggestions

Such a cool addition in the latest Claude Code update (Dec 17th). Claude now automatically suggests your next prompt 🤯 Once a task completes, you’ll see a ghost-text follow-up. Hit Enter to run it, or Tab to prefill your next prompt ↓ https://t.co/cdJ9yQjPUv

AI Generates Complete Hermès‑Inspired Ad End‑to‑End

Claude Code is just wild. This entire Hermès-inspired ad was generated autonomously: → script, voice, visuals, music, branding... everything. Claude Opus 4.5 wrote the script, orchestrated ElevenLabs, Google Veo 3, ffmpeg, downloaded music, and handled production end to end 🤯 https://t.co/CxyQmrahlw

NVIDIA Launches Ultra-Low-Latency Open-Source Speech ASR for Voice Agents

Wow. @NVIDIA just kicked off the year by pushing open source voice agents way forward. They just dropped Nemotron Speech ASR, a speech-to-text model designed from the ground up for low-latency use cases like real-time voice agents 🔥 The...

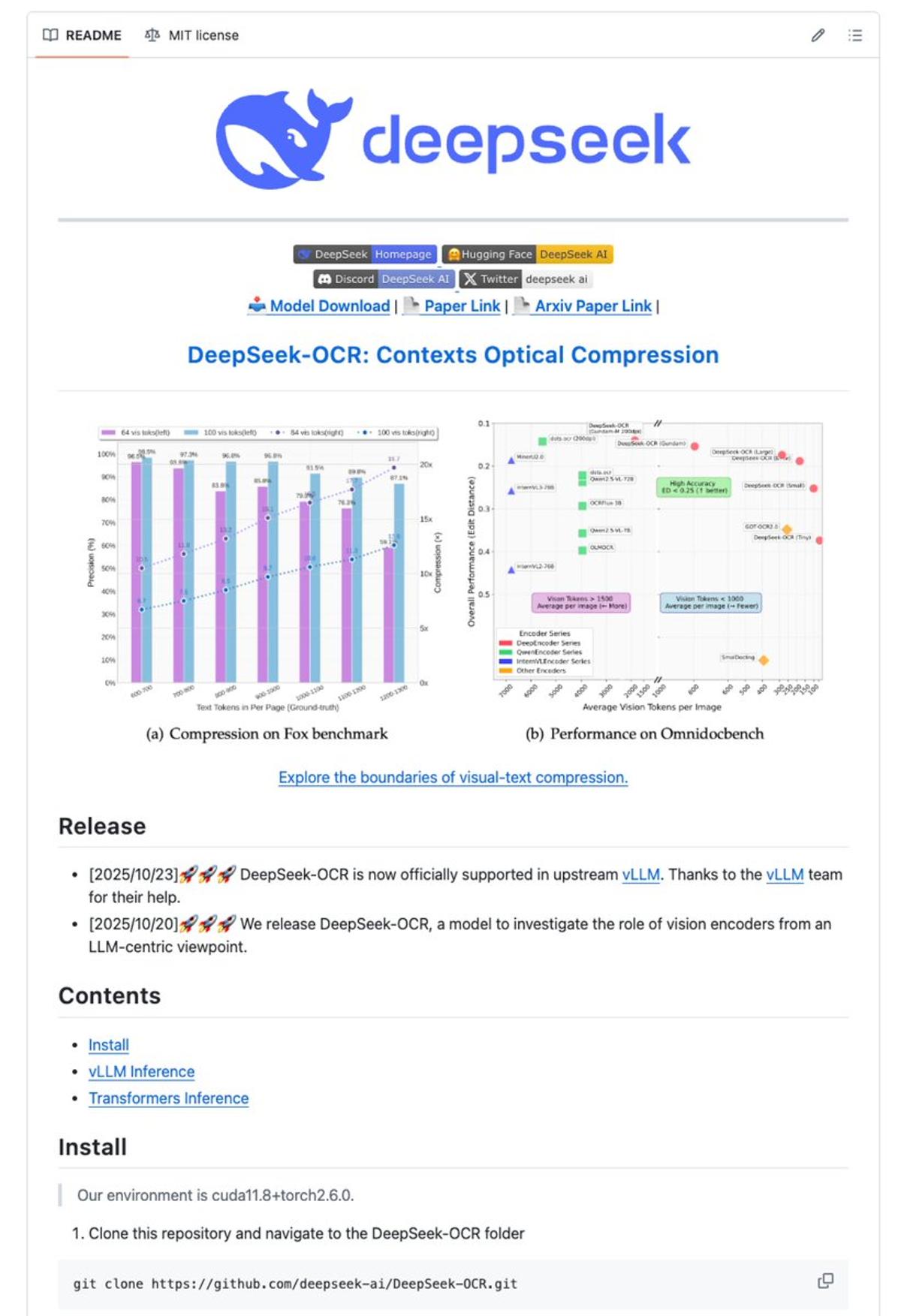

DeepSeek OCR Cuts Token Usage 10×, Hits 97% Accuracy

ICYMI DeepSeek has just unveiled an OCR monster 🤯 DeepSeek-OCR is a 3B-parameter model that redefines document intelligence. It reaches 97% character-level accuracy with 10× input compression, preserving every detail. Most OCR systems require over 6,000 tokens per page. DeepSeek-OCR achieves the same...

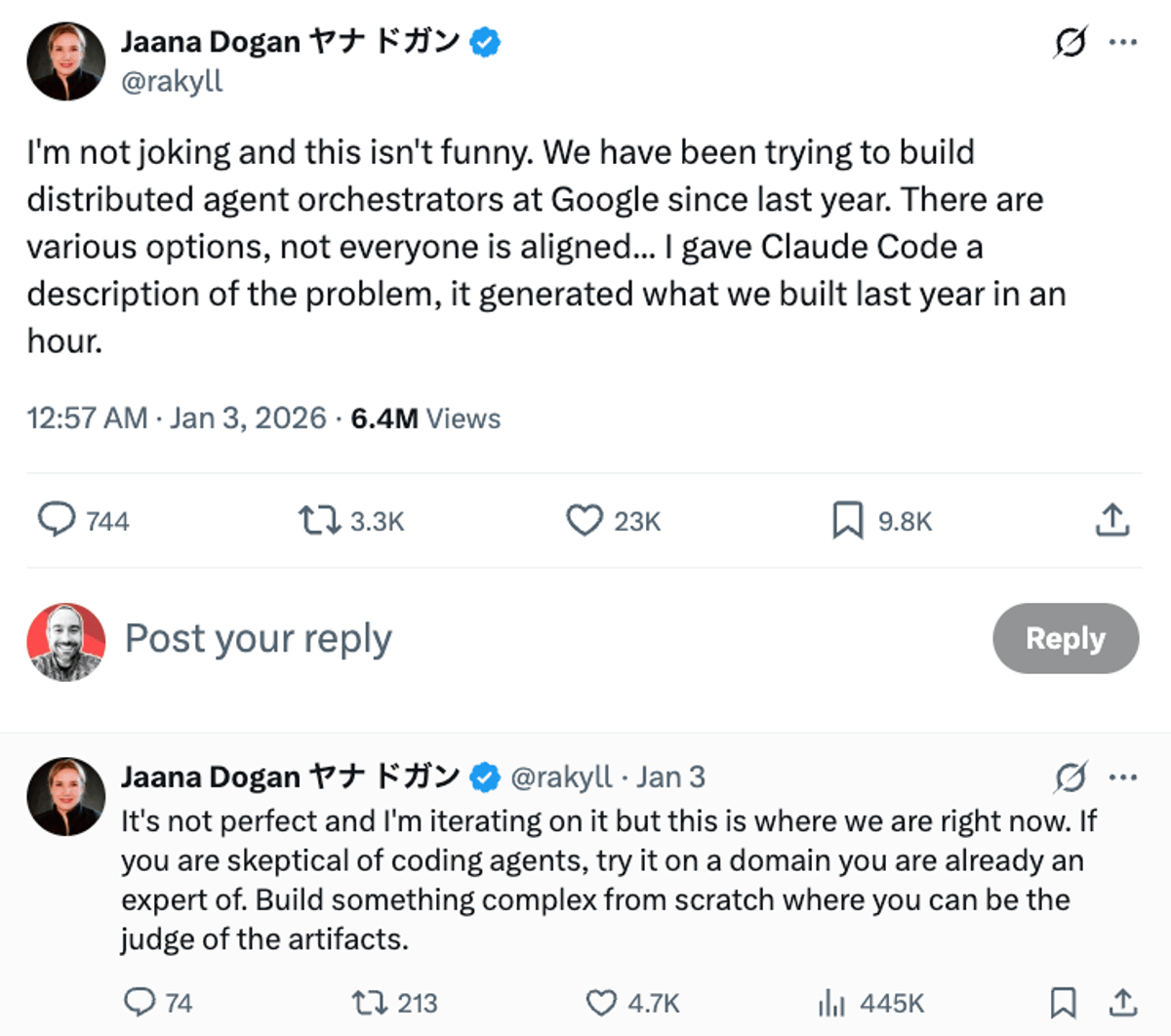

Claude Code Completes a Year's Work in One Hour

Wow. A Principal Engineer at Google reveals Claude Code built a year’s worth of work in just one hour, matching months of engineering effort by her team. https://t.co/QhPgnYwwgt