GLM 5.1 Fails to Process Tool Output, Earlier Versions Work

@Zai_org In Cursor, GLM 5.1 completely fails to find/read any tool output, whereas GLM 4.7 and 5.0 work with the same endpoint, just a different model name. Not exactly sure what GLM 5.1 is doing differently; it looks the same in the IDE, and different approaches and tools also failed... https://t.co/c3kDJIJTPz

Cursor’s Unlicensed K2.5 Model Signals Strategic Misstep

A member of the Moonshot team claimed (in now deleted tweets) they confirmed Cursor's new model is based on K2.5 without a license. Hard to not see this as a massive strategic mistake of Cursor's management and the board. If you're...

LLM‑Driven RL Environment Learns During Interaction

Currently writing an article on my work where the RL environment (or agent harness) learns back while the LLM interacts with it... If you have any references or pointers for related work on this topic, I'd welcome them! https://t.co/DGUCi3PLpv

GLM‑5 Shows Regression in Python Coding Tasks

Alright, I'm calling it: GLM-5 is a regression from GLM 4.7 for Python coding. 🫠 I subscribed to Z(.)ai on the basis of 4.7, as it reliably took over all my devops too, and I've been using GLM 5 since launch. But with...

2.6k‑parameter RL Model Matches 62B Baseline on GSM8k

Oh My! I just built a new RL-native system with 2.6k parameters that matches a specialized 62B model from a 2024 paper, specifically on GSM8k. That's over 20,000,000x smaller than the baseline — but the approach is so different the traditional...

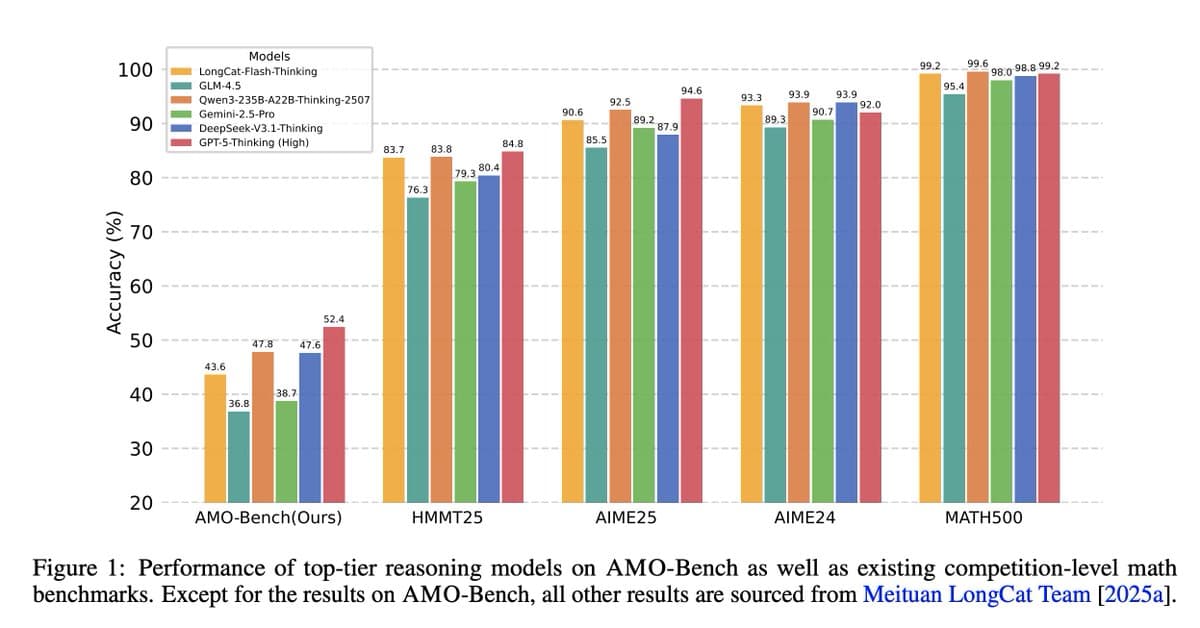

Intellect-3 Beats Larger Models with 34% Gain

If the initial benchmark scores (and graphs used for PR) had showcased a 3x reduction in size for the same performance, I think the broader public reception would have been less tepid. Looking at this, it just seems 7% behind other...

Tiny 6k Model Replicates Behaviors of Massive Systems

A latent process that operates over plans? I've been working on this recently! What's most fascinating to me: my 6k parameter system can match aspects of models 100,000x bigger. Scaling laws apply very differently too... 🤔

Opus 4.5 Works Around Cursor AI Bugs, So Cursor Isn't Solely Responsible

For Gemini 3, I don't rule out bugs in @cursor_ai, as many new features don't work, with worktrees getting trashed or even renamed (!) midway through the agent's work. But since Opus 4.5 works around those bugs, it can't...

New Model Outperforms Gemini 3 with Greater Polish

My verdict is that it's significantly better than Gemini 3. It's at least as smart and just has more polish to it. Alignment on little details is also significantly higher. Gemini 3 gets many things mixed up after a half-dozen messages, and...

Opus 4.5 Keeps Executing Tasks Past 50% of the Token Limit

With Opus 4.5, it seems you don't need to ask multiple times or ORDER it to do work; it just gets stuff done, even beyond 50% of the token limit and after chat compaction! This kind of message is a thing...

Benchmarks Mislead; Human Review Is the Real Bottleneck

These kinds of benchmarks are misleading without a joint metric showing how much human work was necessary after the fact. How much time does it take to clean up that 2h42m of code? Style and architecture need to make sense, not just pass tests. That's...

LLMs Lose Context After 100k Tokens, Need Frequent Resets

People working on basic code who reset their Agent chats every 4-5 replies: I envy you. I have to do deep context design work, and at about 100k tokens, LLMs start to get lazy / confused. I resorted to giving them...

Gemini 3: Fast but Unreliable, Files Get Corrupted

Gemini 3 review: it's fast, it's not dumb, but it's completely unusable in practice. It will get lost after a few edits, then completely trash the file, issuing patch commands that include line numbers at best, and at worst it will...

Mid-Tier Language Models Only Hit 75‑85% on Basic Math

Language models perform poorly on high-school math? 🙄 You don't want to hear this, but the problems started in grade school. The moment we (collectively) found it acceptable that mid-tier models could score only 75%-85% on a GSM test set of 1.32k straightforward...

Fast Coding Model Feels Overpriced Despite Performance Gains

The speed of a faster coding model is worth it, but it seems mispriced. C1 gobbles through files, reasons more, and expects extra feedback to reach the same place a slower model does with less of everything. Intuitively it feels more expensive "the...