Hand‑Centric Policy Gains Flexibility and Real‑World Viability

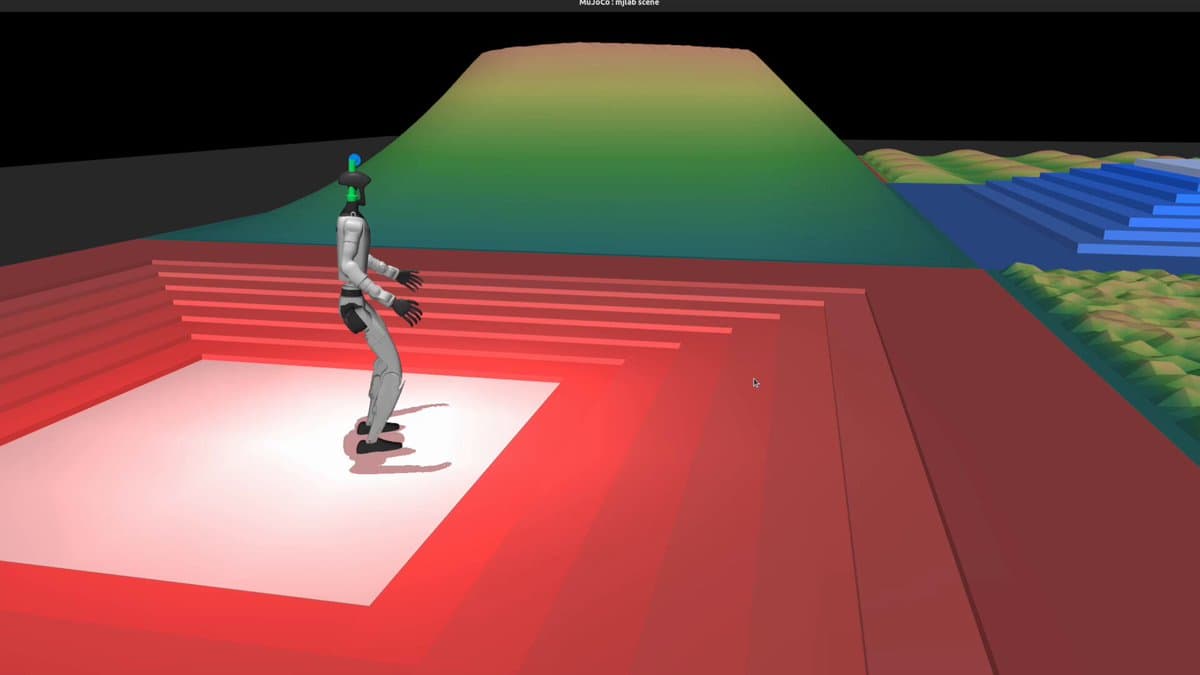

Some improvements to the hand-centric policy: it crouches really well and even crouch walks. We can definitely pick stuff up off the floor with this, I think. I'd like to penalize torso velocity beyond a certain speed. Steerable dynamic hand rotation: I initially always made the hand flat, palm down, and that worked great. Then I realized I should just make it completely steerable, because that'd be epic, and that works pretty well. Next, I'd like to convince the policy to walk forward to the next destination instead of sideways, and also encourage the left arm to not look SO goofy. But dang, I really like how this is turning out, and I think the concept of a hand-centric style policy could work really well for actual deployment use cases.
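One way to "penalize torso velocity beyond a certain speed" is a hinge-style penalty. It is zero below a speed cap and grows quadratically above it, so normal motion goes unpunished. The sketch below is a minimal, single-environment version under that assumption. The function name, the 0.5 m/s cap, and the use of the full 3D speed are all hypothetical, not the actual reward term used here.

```python
import math


def torso_speed_penalty(torso_lin_vel, max_speed=0.5):
    """Hypothetical hinge penalty on torso speed.

    Returns 0 while the torso speed (full 3D norm of the linear velocity)
    stays at or below max_speed, then grows quadratically with the excess.
    The training code would multiply this by a negative reward weight.
    """
    speed = math.sqrt(sum(c * c for c in torso_lin_vel))
    excess = max(0.0, speed - max_speed)
    return excess ** 2


if __name__ == "__main__":
    print(torso_speed_penalty((0.3, 0.0, 0.0)))  # below the cap, no penalty
    print(torso_speed_penalty((0.8, 0.6, 0.0)))  # speed 1.0, excess 0.5
```

The quadratic excess keeps the penalty smooth at the cap and makes large overshoots expensive. A linear excess would be the gentler alternative.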

Custom Reward Tweaks Yield Rapid, Satisfying Learning

We do a bit of reaching. Modifying the mjlab velocity demo's reward function to produce custom behaviors is so satisfying, because the base setup just seems to learn so darn fast. It only takes a few minutes to start seeing...
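A reaching behavior is often encoded as a bounded tracking reward on hand-to-target distance. Below is a scalar, single-environment sketch using a Gaussian-style kernel. mjlab's real reward terms are batched over environments and have their own signature, which is not reproduced here. The function name and the `std` value are hypothetical.

```python
import math


def reach_reward(hand_pos, target_pos, std=0.1):
    """Hypothetical reaching reward: exp(-d^2 / std^2).

    Equals 1.0 when the hand is exactly at the target and decays smoothly
    toward 0 as the squared distance d^2 grows. `std` (meters) sets how
    quickly it falls off; the reward is always in (0, 1].
    """
    d2 = sum((h - t) ** 2 for h, t in zip(hand_pos, target_pos))
    return math.exp(-d2 / std ** 2)


if __name__ == "__main__":
    target = (0.4, 0.0, 0.9)
    print(reach_reward((0.4, 0.0, 0.9), target))  # at the target
    print(reach_reward((0.3, 0.0, 0.9), target))  # 10 cm away
```

Because the kernel is bounded, it is easy to add alongside the demo's existing velocity-tracking terms without one term swamping the others.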

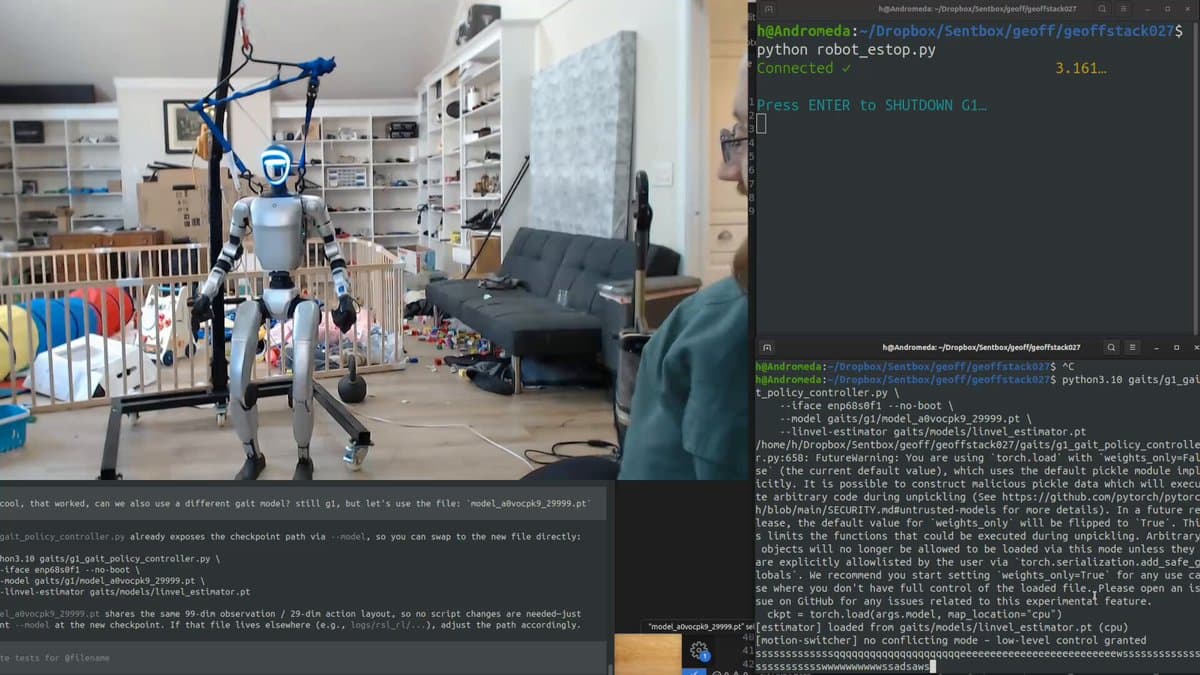

First Successful Sim‑to‑Real RL Walk for Unitree G1

New video is out: teaching a Unitree G1 humanoid to walk using reinforcement learning (PPO). It's the first time I've ever gotten sim2real to actually work in robotics, so I'm sharing what I've learned and testing out how good the policy actually is by...
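For readers new to PPO, the core of it is the clipped surrogate objective. It limits how far one update can move the policy away from the one that collected the data. Below is a minimal pure-Python sketch of that loss over a batch of per-sample log-probabilities and advantages. It is a generic illustration, not the training code from the video, which isn't shown. The function name and the `clip_eps=0.2` default are assumptions.

```python
import math


def ppo_clip_loss(new_logp, old_logp, advantages, clip_eps=0.2):
    """Clipped surrogate policy loss, averaged over a batch.

    ratio = pi_new(a|s) / pi_old(a|s), computed from log-probabilities.
    Each sample contributes min(ratio * A, clip(ratio, 1-eps, 1+eps) * A),
    a pessimistic bound that removes any incentive to push the ratio
    outside [1 - eps, 1 + eps]. The objective is negated so a minimizer
    can use it as a loss.
    """
    total = 0.0
    for lp_new, lp_old, adv in zip(new_logp, old_logp, advantages):
        ratio = math.exp(lp_new - lp_old)
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        total += min(ratio * adv, clipped * adv)
    return -total / len(advantages)
```

With a positive advantage, gains stop at a ratio of `1 + clip_eps`. With a negative advantage, the unclipped (more negative) term is kept, so bad actions are still fully penalized.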

Training a Unitree G1 to Walk W/ Reinforcement Learning

The video chronicles a creator’s effort to teach a Unitree G1 humanoid to walk using reinforcement learning techniques, emphasizing the progression from simulator-to-simulator transfer (Sim2Sim) to real-world deployment (Sim2Real). After years of attempting Sim2Real, the presenter finally succeeded thanks to advances...

End-to-End Neural Network Enables Real-Time Bipedal Gait Control

This is a vertically integrated, end-to-end deep neural network performing real-time forward-pass inference, controlling individual actuators' torque output for bipedal gait generation in adverse, GPS-denied environments. OK, it's standard PPO RL trained in mjlab, strapped to a...
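Many legged-robot RL setups do not have the network emit torques directly. Instead, it outputs joint position targets, and a PD law turns them into per-actuator torques at a higher control rate. The post doesn't say which scheme this policy uses, so treat the following as an assumption. It sketches that common PD conversion with clamping. The gains, limits, and function name are illustrative, not G1 or mjlab values.

```python
def pd_torque(q_target, q, qd, kp, kd, tau_limit):
    """Convert joint position targets to torques with a clamped PD law.

    tau = kp * (q_target - q) - kd * qd, then clamped to [-tau_limit, tau_limit]
    per joint. All arguments are per-joint sequences of equal length.
    """
    torques = []
    for qt, qi, qdi, p, d, lim in zip(q_target, q, qd, kp, kd, tau_limit):
        tau = p * (qt - qi) - d * qdi  # spring toward target, damp velocity
        torques.append(max(-lim, min(lim, tau)))
    return torques


if __name__ == "__main__":
    # Two hypothetical joints: one tracking a small offset, one saturating.
    print(pd_torque([0.5, 2.0], [0.0, 0.0], [0.0, 0.0],
                    [100.0, 100.0], [2.0, 2.0], [88.0, 88.0]))
```

Clamping matters on hardware: without it, a large tracking error can command more torque than the actuator can deliver.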

First‑mover Edge Fades in AI and Robotics

I don't know when first-mover advantage was ever actually a thing, but it sure doesn't seem to be one in AI and robotics.

Kitchen Robot Unloading Beats Self‑Driving in Complexity

I'm convinced that getting a robot to be generally good at going into a random kitchen and unloading a dishwasher with end-to-end ML is orders of magnitude harder than building self-driving cars with end-to-end ML.

Gemini’s CLI Outshines GPT‑5.2, Leaving Codex Lagging

GPT-5.2 is a fine model, but tbh, I'm going back to Gemini, because it's also a fine model and its CLI UI/UX is like 10x better. It really feels like Codex CLI is falling way behind the competition.

Exploring GPT‑5.2’s Potential for Coding Agents

Just when I think I'm out, OpenAI pulls me back in with GPT-5.2. Let's see what it's all about. Any opinions on 5.2 in the context of coding agents?

Gemini 3 Pro CLI: Finally a Frustration‑Free Agent

Still my favorite model and CLI. Gemini 3 Pro + CLI is also the first agent/terminal combo that doesn't routinely make my blood boil while I'm using it.

Training Gait Models to Detect and Recover From Falls

Today, we're hoping for the best and preparing for the worst. In gait training, the episode terminates when a fall is detected, so a fall on the real robot is wildly out of distribution. That means we need to handle falls. It would eventually be cool to...
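The "fall detected" condition that ends a training episode is commonly a check on base height and body tilt. The tilt is taken from the gravity vector expressed in the robot's base frame. Below is a minimal sketch of such a check, the kind of condition a real-robot fall handler could also key off. The thresholds, function names, and the choice of these two signals are assumptions, not the author's actual termination terms.

```python
import math


def projected_gravity_tilt(gravity_in_base):
    """Tilt angle (radians) between the base's z-axis and vertical.

    gravity_in_base is the gravity direction expressed in the robot base frame;
    an upright robot sees roughly (0, 0, -1).
    """
    gx, gy, gz = gravity_in_base
    norm = math.sqrt(gx * gx + gy * gy + gz * gz)
    cos_tilt = max(-1.0, min(1.0, -gz / norm))  # clamp guards acos domain
    return math.acos(cos_tilt)


def fall_detected(base_height, gravity_in_base, min_height=0.3, max_tilt_rad=1.0):
    """Hypothetical fall check: base too low, or tilted past max_tilt_rad."""
    return base_height < min_height or projected_gravity_tilt(gravity_in_base) > max_tilt_rad
```

Using tilt as well as height catches falls that start while the base is still high, such as toppling sideways, which gives a handler more time to react.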

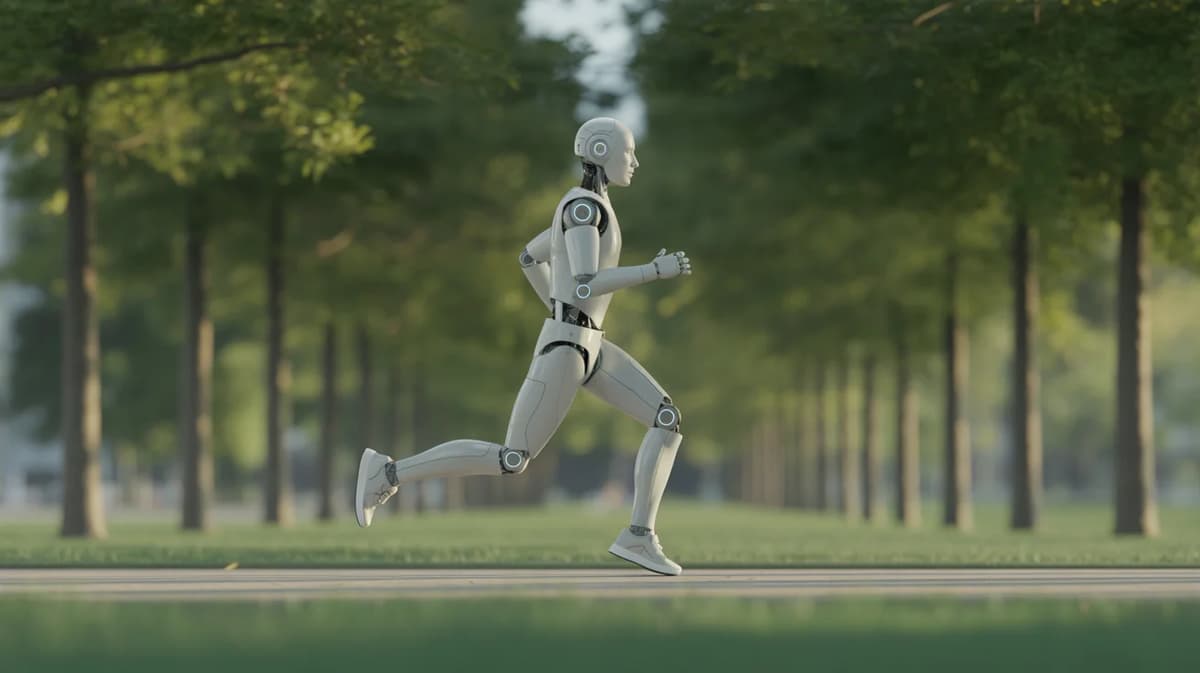

Impressive Humanoid Jog Raises Curriculum Questions

This might be the best humanoid jog I've seen yet. I doubt this is pure RL; I'd love to know the curriculum here.

Bad Reward Function Uncovered Surprisingly Effective Robot Model

Geoff the G1 is preparing to go off-roading IRL. I did a terrible job on the reward function here and was really just tuning in to see everything that was broken, but instead I found a pretty good model. The robots just want...

Gemini CLI + 3 Pro: Top Terminal AI, Occasional Dropouts

After a couple of days of heavy use, tl;dr: it’s a good model, and Gemini CLI is very good. The pair might be the best option for terminal-based agents. But it’s not perfect. Pros: I never hit any limit for Gemini 3 Pro and...

First Successful Sim2Real Transfer Achieved on Geoff G1

Ladies and gentlemen, we have our first successful sim2real transfer on Geoff the G1! https://t.co/eywBjbiLxy

Discovered a Small Yet Effective Local Optimum

We found ourselves a nice lil local optimum. https://t.co/PXevVKYcpL

No Model yet Outperforms Codex+O3 in Real Work

This is my sentiment. I have yet to experience a model that beats Codex + o3 in actually useful professional work. You can play to a model's strengths to move some benchmark numbers, at the cost of another capability. All we're doing is...

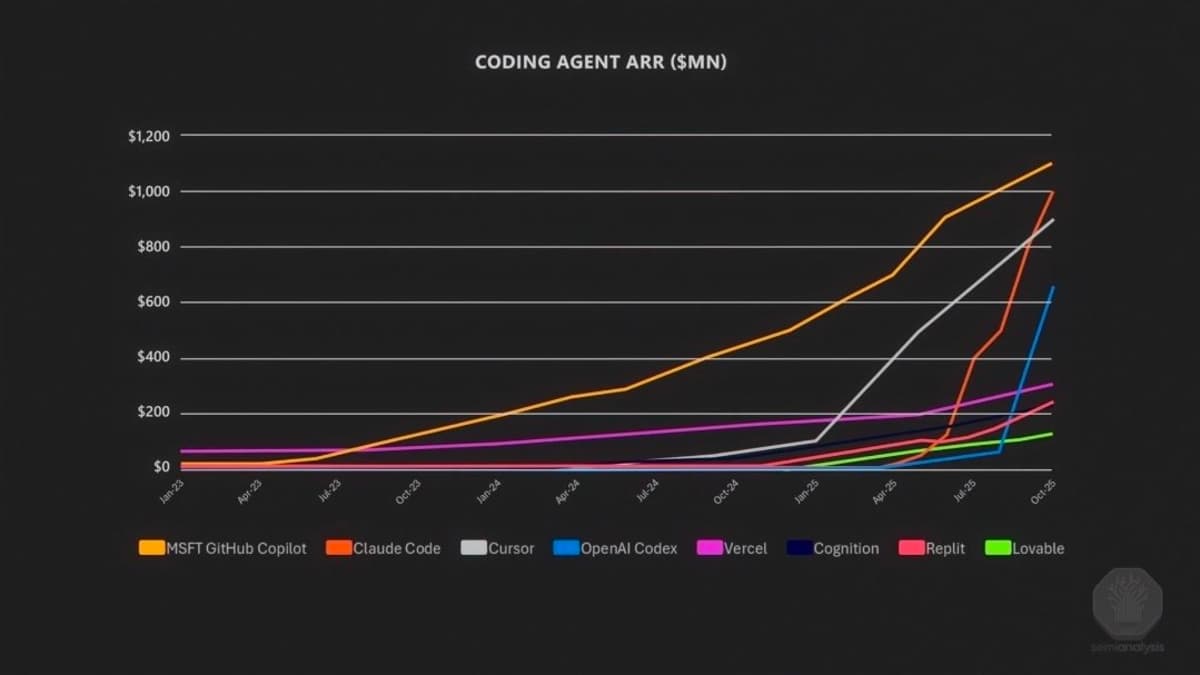

Demand for AI Coding Agents Is Rising

The people want coding agents. https://t.co/dXL3ikiKwD

Top Robotics Influencers and Companies to Watch

A list of people and companies to watch in robotics.

AMD's Deep Learning Gap Stems From Software Deficit

Can anyone actually explain, with hard facts, how it's possible that AMD still doesn't meaningfully compete in the deep learning space? I understand they lack certain pieces of software. I do not understand how they could reasonably still lack it, though.

Sim-to-Real Transfer Achieves Real-World Walking and Running

Sim2real got hands, but we're making progress toward our own gait and successfully transferring walking/running from sim to real. Ignore the toddler mess; it's all strategically placed to test challenging scenarios for robots, of course. https://t.co/Xbgayfrfik

Debating Sim2Real for New Sprinter Policy

Just cooked up a new sprinter policy. Do we attempt sim2real? https://t.co/DIDvOrcm23

Kids' Early Rolling Mimics Reinforcement Learning

Watching kids learn these first skills really does look a heck of a lot like reinforcement learning. Our twins' first mode of travel was just rolling to the desired location.

Neo's $20K Price Risky; $500/Mo Housekeeping Steal

After some time thinking about the 1X Neo: $20K for Neo is a significant investment with a lot of risk, since the bot is proprietary. $500/month is an outright steal for housekeeping, though, even if it's done through a teleoperated robot. No need...
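As a rough break-even check on the two prices quoted above, the purchase price equals this many months of the subscription. The calculation ignores financing, maintenance, resale value, and whatever the monthly plan actually includes, so it is only a back-of-the-envelope figure:

```latex
\frac{\$20{,}000}{\$500/\text{month}} = 40~\text{months} \approx 3.3~\text{years}
```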

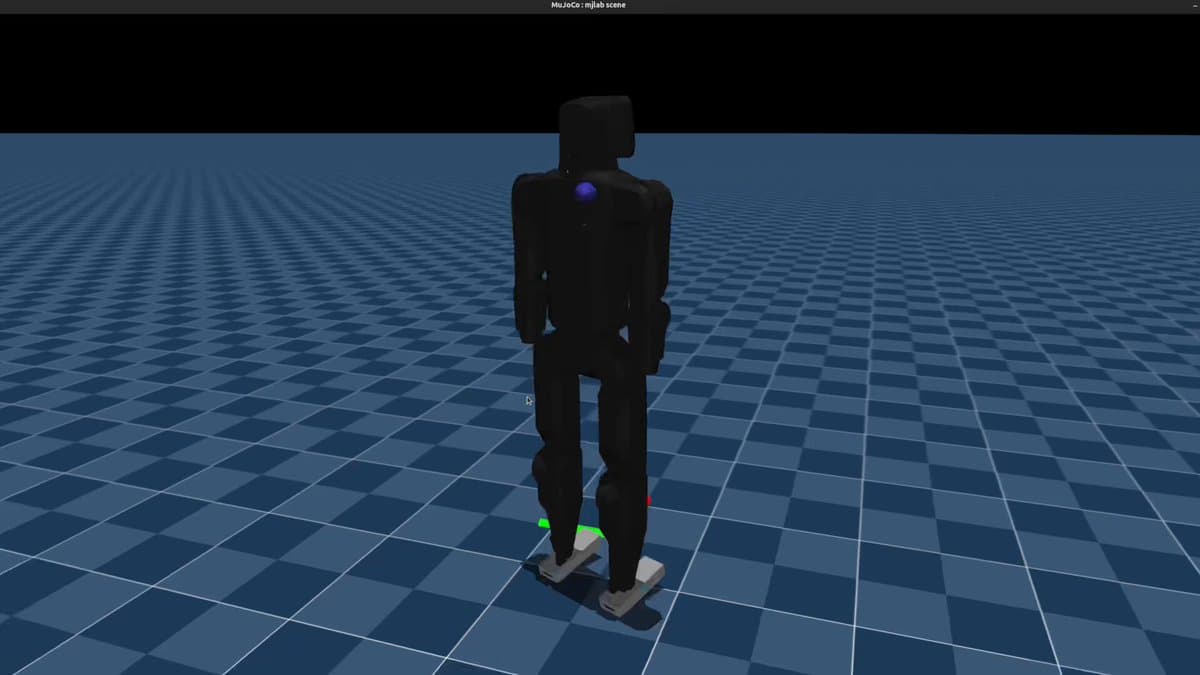

Manual Curriculum Yields First Walking Gait in KScale KBot

Finally getting a semblance of a gait for the K-Scale K-Bot in mjlab sim! I ended up essentially managing a curriculum by hand: first balance, then forward-vector walking. https://t.co/UTuZUSF4dz
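The post describes switching stages manually. The sketch below shows one way the same balance-then-walk ordering could be automated. It promotes to the walking stage once episodes mostly survive to the time limit, then samples forward-velocity commands only in that stage. Everything here is hypothetical: the class and function names, the 0.9 promotion fraction, and the 0.5 m/s speed cap are not taken from mjlab or from the author's setup.

```python
import random
from dataclasses import dataclass


@dataclass
class CurriculumStage:
    name: str
    max_forward_speed: float  # upper bound on commanded forward velocity (m/s)


# Hypothetical two-stage schedule: stand and balance first (zero velocity
# command), then walk forward once the policy can reliably stay upright.
STAGES = [
    CurriculumStage("balance", max_forward_speed=0.0),
    CurriculumStage("forward_walk", max_forward_speed=0.5),
]


def select_stage(current, mean_episode_length, max_episode_length, promote_frac=0.9):
    """Advance one stage once episodes mostly run to the time limit."""
    survived = mean_episode_length >= promote_frac * max_episode_length
    if survived and current + 1 < len(STAGES):
        return current + 1
    return current


def sample_forward_command(stage_idx, rng):
    """Sample a forward velocity command allowed by the current stage."""
    return rng.uniform(0.0, STAGES[stage_idx].max_forward_speed)


if __name__ == "__main__":
    rng = random.Random(0)
    stage = select_stage(0, mean_episode_length=950, max_episode_length=1000)
    print(STAGES[stage].name, sample_forward_command(stage, rng))
```

Gating on episode length is a simple proxy for "can balance". A stricter rule might also require low base tilt before promoting.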

Reward Hacking with Mjlab's Seamless Wandb Integration

We do a bit of reward hacking. Enjoying mjlab's robust wandb integration for tracking runs and storing checkpoints. I'm always a fan of wandb, but usually too lazy to do the integration for stats/files like this. We'll see if I ever actually make...

Browser‑Based Viz Makes Training Progress Easy Anywhere

Exceptionally easy install and demo, at least. I didn't realize the viz runs in the browser, which immediately makes it easy to visualize training progress from a personal computer while processing happens on a server/workhorse machine. The true test will be trying this on a custom problem and...

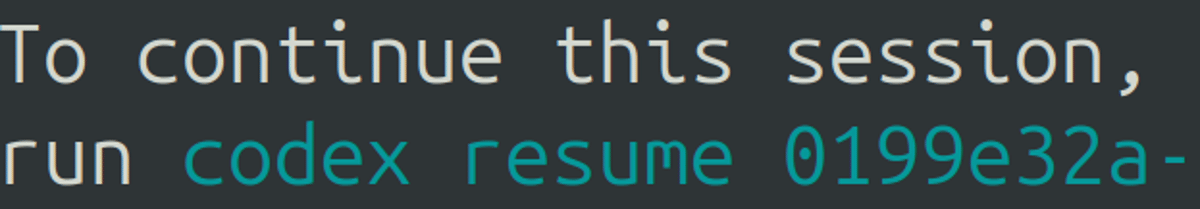

Codex CLI Exit Saves Sessions, Huge QOL Boost

Whoever added this to the Codex CLI on exit is a lifesaver. I cannot tell you how many times I've mindlessly hit Ctrl+C trying to copy-paste, only to break a session, and then I have to dig up the latest chat. Such a simple...