Patients Choose Risky Care When Health Systems Fail

I don’t think patients want ChatGPT or an AI doctor startup to replace their health system - but when you’re desperate, you’ll take what you can get, safety and efficacy be damned. So if health systems don’t fill their own gaps, someone else will.

AI isn’t the first time we’ve seen patients willing to make trade-offs of this sort. Medical tourism has been around for decades. No, it’s not safer for a patient to get surgery in a less developed country, with a less developed healthcare system and lower standards for healthcare quality/safety - not to mention a lack of continuity of care from the surgical team abroad. But if the choice is between getting a surgery they can afford and no surgery at all, are we surprised patients make that trade-off? Of course, those inside the healthcare system are often frustrated by this: “we’ve seen patients get low quality surgery, develop complications, and our healthcare system at home has to treat the downstream problem created by medical tourism”.

We’re going to see this play out with AI as well. Patients can’t wait months to see a doctor - so they’ll turn to ChatGPT to try and deal with it on their own - or as we’ve seen in some cases, they’ll turn to a purpose-built AI doctor for care. And yes, alongside the few miracle stories that circulate on social media, there will also be horror stories of patients experiencing real harm from some of these AI tools - especially those that weren’t developed with the expertise of clinicians who deeply understand the complexity of medicine.

But here’s the thing we’ve already seen with medical tourism: demand hasn’t slowed - in fact, it continues to grow 13% to 18% per year. If you think that’s a lot… well, use of ChatGPT and AI doctors is only going to increase, because the demand for healthcare in general is much higher than the demand for medical tourism specifically.

So what does this mean for health systems? You have to lead - you can’t follow. You need to be in the trenches tinkering with AI, launching your own products, and leading from the front. That doesn’t mean being reckless like some of those companies who shall not be named. It means being nimble, fast, progressive AND safe/appropriate.

It’s why at @SeamlessMD we’re thrilled our health system customers have been progressive with us in using RAG-based Conversational AI for patients, built on safe, health system-approved clinical content. Our customer partners are leading from the trenches AND doing it responsibly. We need health systems doing more AI, not less - because if health systems don’t fill the gap, then folks who don’t care as much about quality/safety will - and there needs to be a thoughtful counterbalance to that.

AI Advice Still Needs Doctor Confirmation for New Parents

A month into fatherhood, I've used every clinical AI tool I have access to: OpenEvidence, DoximityGPT, Gemini - and I'm STILL asking my doctor friends for advice... but WHY? Isn't AI supposed to make physicians obsolete? Let’s not act surprised: of...

Integrations Only When Customers Demand, Not Startup Pitch

Recently more than one person called my Health Tech startup @SeamlessMD the “incumbent”. While we’ve been around 13+ years, it feels strange to call ourselves that - probably because we view the EHR as the incumbent. That said, there are...

AI Medical Tools Need Evidence, Not RCTs, to Trust

How can we trust CDS AI like OpenEvidence, DoximityGPT, etc. if no one's done studies showing their use improves patient outcomes? While I understand the intent, I think it's ultimately a misguided question. Anytime a new medical textbook comes out, should...

Health Systems Split: Epic AI vs Third‑Party Solutions

Every health system on Epic is facing the same question: trust Epic’s Patient AI or go 3rd Party? Sutter and Hartford just chose opposite answers. My 4 thoughts: The news this week: → Sutter Health was the 1st health system to go-live...

Hard-to-Measure ROI Stifles Life‑Saving Healthcare Innovation

I've seen hospital CEOs cancel innovations that reduced readmissions and ED visits. The outcomes were incredible - but the financial ROI was too hard to prove. And since "ROI is needed to justify everything right now in healthcare" many great innovations...

AI Delivers Rapid, Clear Interpretation of Newborn Test Results

My son was 11 days old and his blood test came back "abnormal". In the past, I would've spent six hours sick with worry waiting to hear from his doctor. Instead, AI got me the answer first - and explained...

Clinician Endorsement Drives Patient Adoption of Digital Health

I found a Digital Health tool in our patient portal early in my wife's pregnancy. Even though I knew what it was, even though I literally build Digital Health tools for a living - we did NOT use it. Why? Because...

OpenEvidence Turns Societies Into Evidence Creators, Securing AI CDS Moat

OpenEvidence started as the place clinicians go to find clinical evidence. Now they're becoming the place medical societies go to CREATE it - that's a whole new moat for winning the AI CDS market. My 5 thoughts... First, the gist of...

Diverse Nursing Approaches Enrich Patient Experience

I've been a dad for five days - and somehow, it's already made me question what I thought about AI and the patient experience. Lemme explain… We had an incredible experience in the hospital. Not because of any Tech… but because...

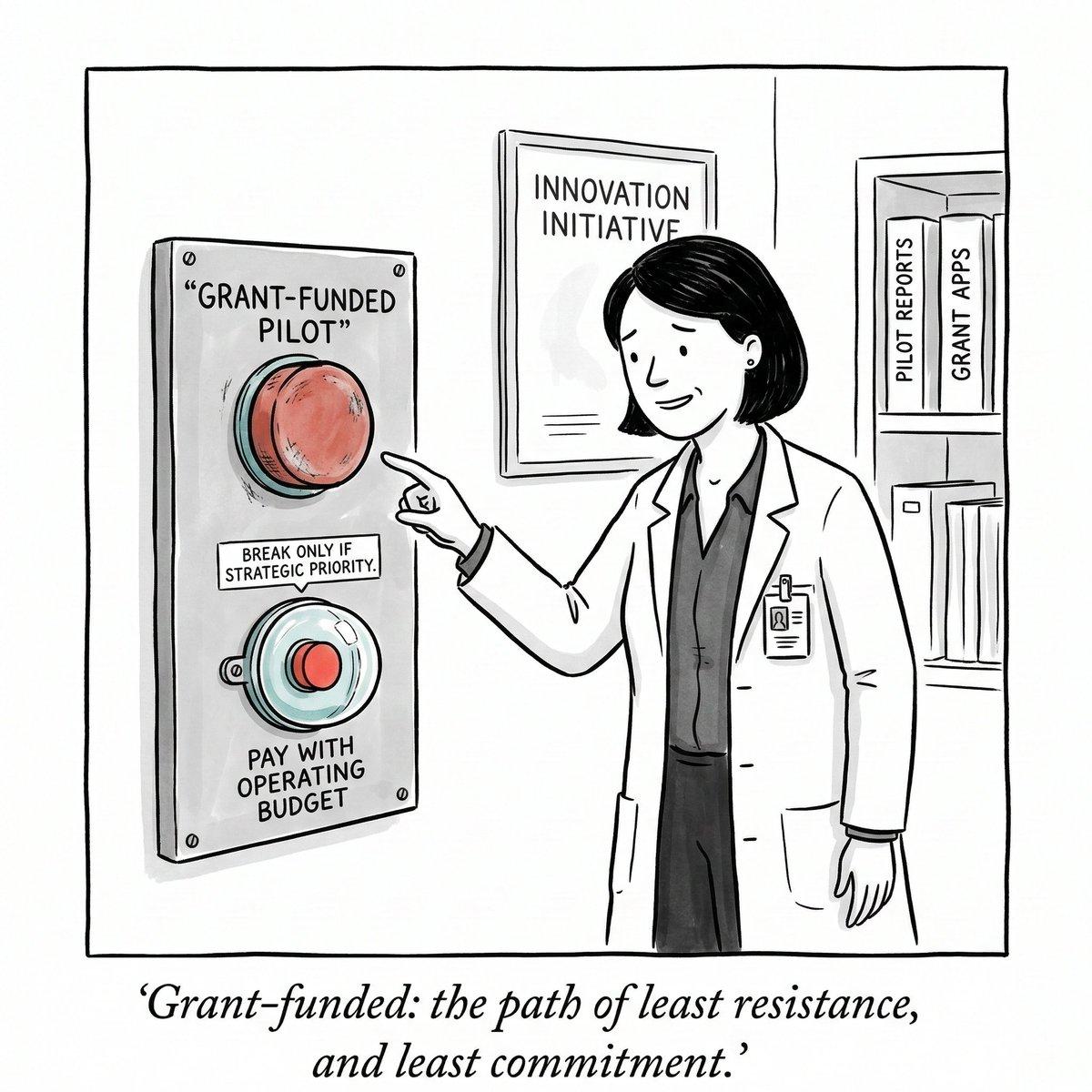

Grant-Funded Pilots Die without Upfront Purchase Commitment

A health system once celebrated our Health Tech partnership’s amazing results, and then walked away - all because of the most dangerous word in innovation: “grant-funded.” I've watched more grant-funded pilots quietly die than I care to count. The problem? A...

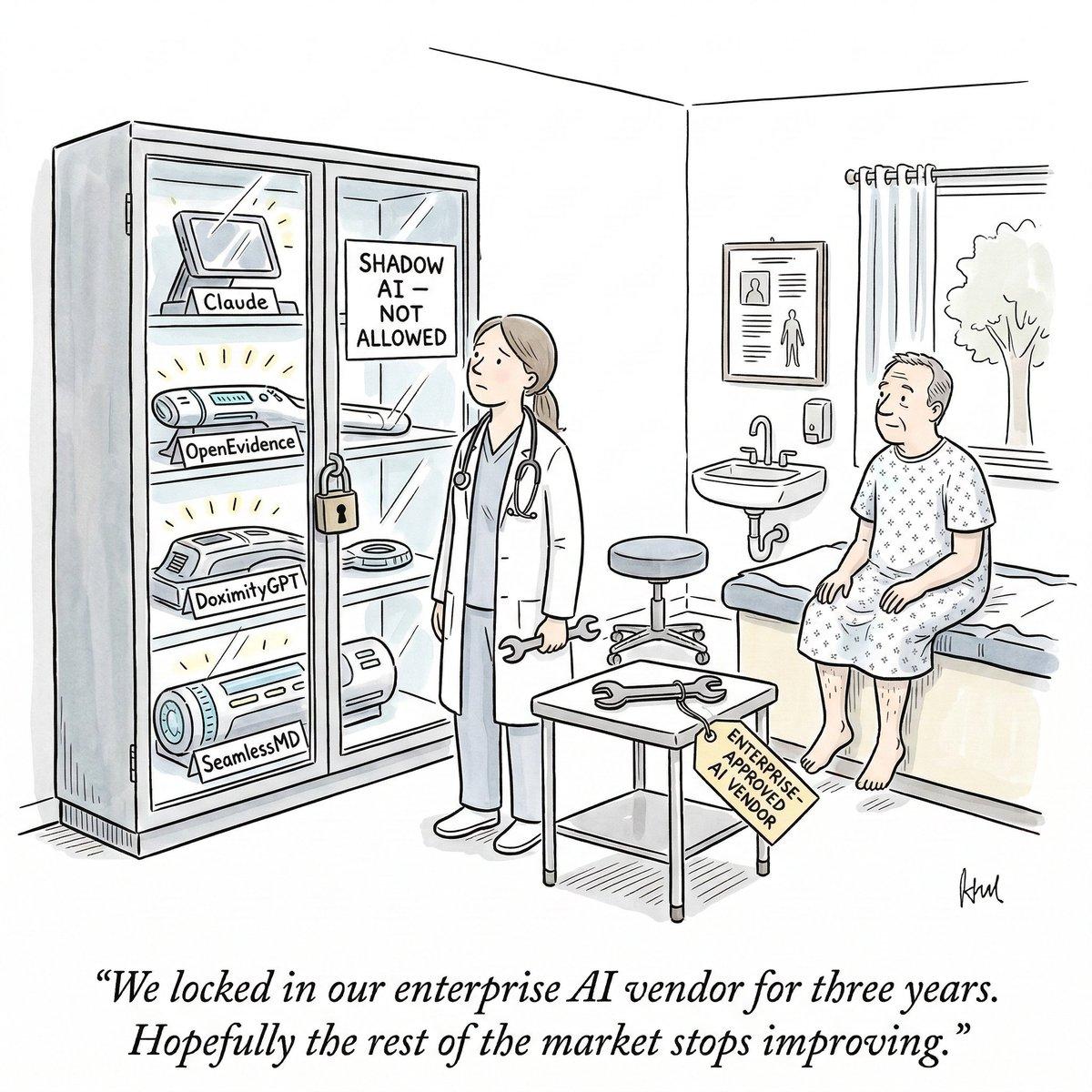

Don't Bet on One AI Vendor: Claude Beats ChatGPT, Gemini

The AI tool I called “best in class” just got dethroned - in three months. So when health systems go all-in on one AI vendor, is that a BIG mistake? I was loyal to ChatGPT. Then I became Gemini-pilled. Recently my...

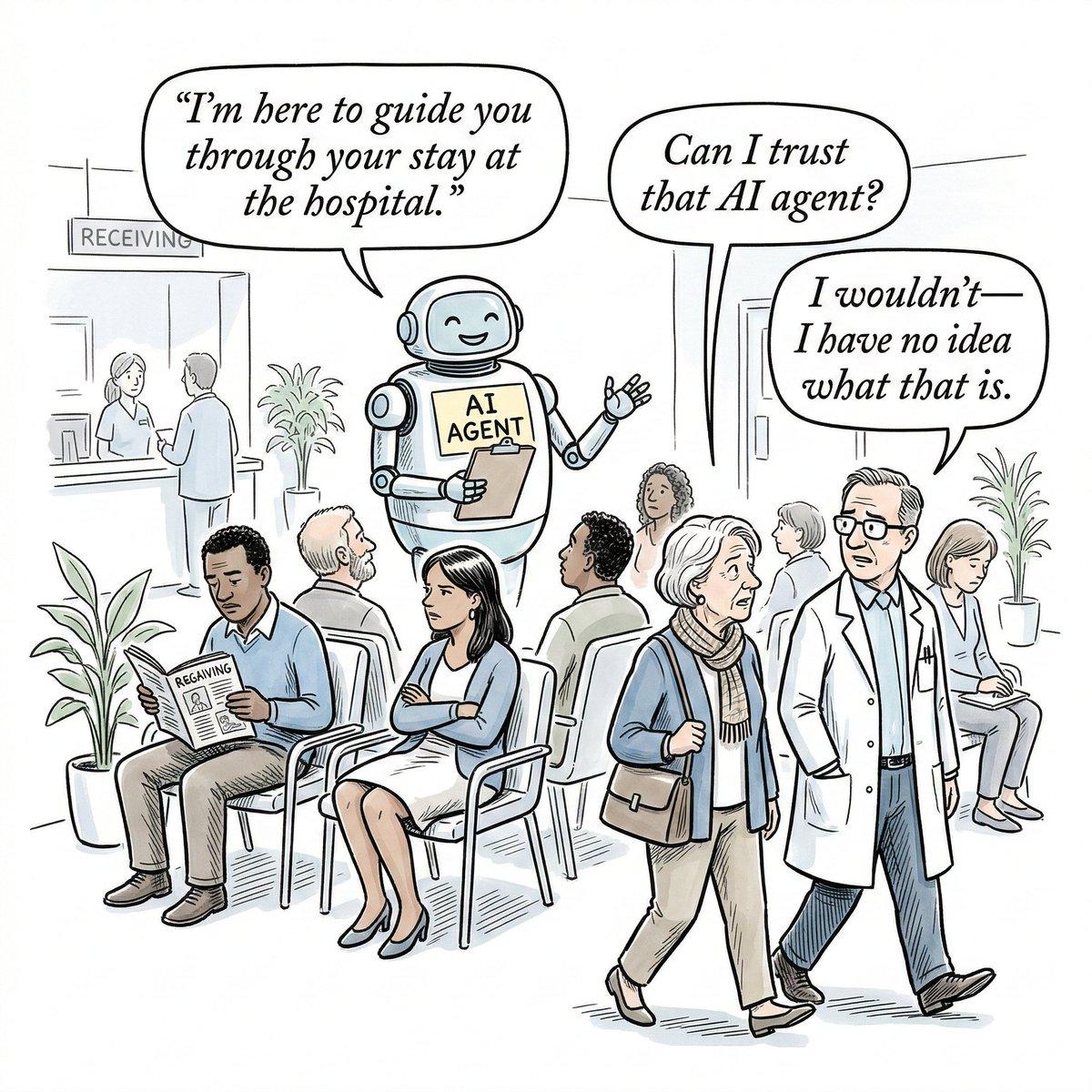

Human Trust Beats AI in Patient Engagement

I keep seeing AI-pilled people arguing that AI agents will magically solve patient engagement - but they won’t. If it were that easy, non-Tech solutions would’ve solved it already. At @SeamlessMD, I’ve spent the last 13+ years working with health systems...

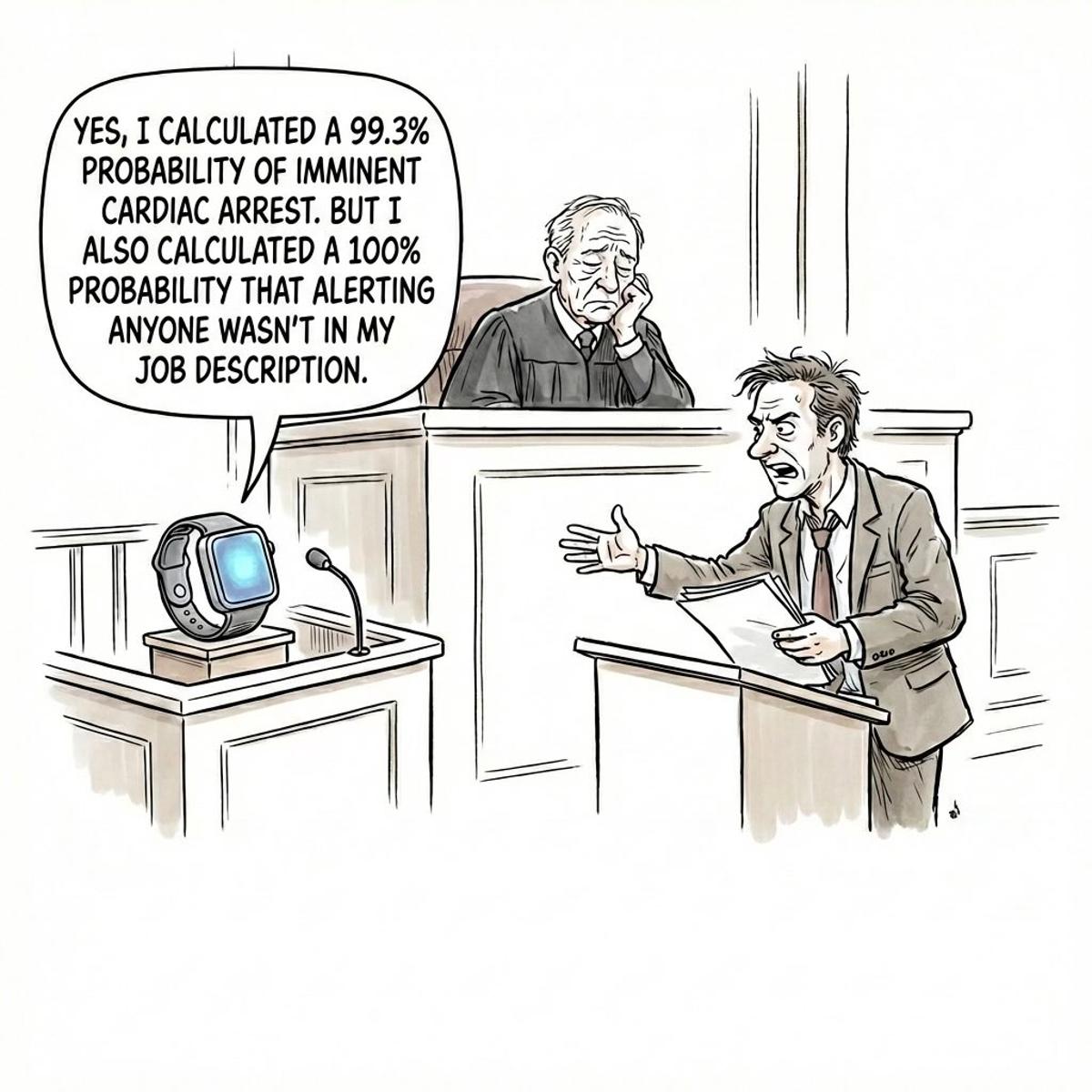

AI Health Alerts Force Companies to Choose Duty over Silence

In 2026, software doesn’t just store health data - AI allows it to see medical risk before anyone else. When consumers use AI for their health, we are increasingly faced with the dilemma of whether AI companies have a duty...

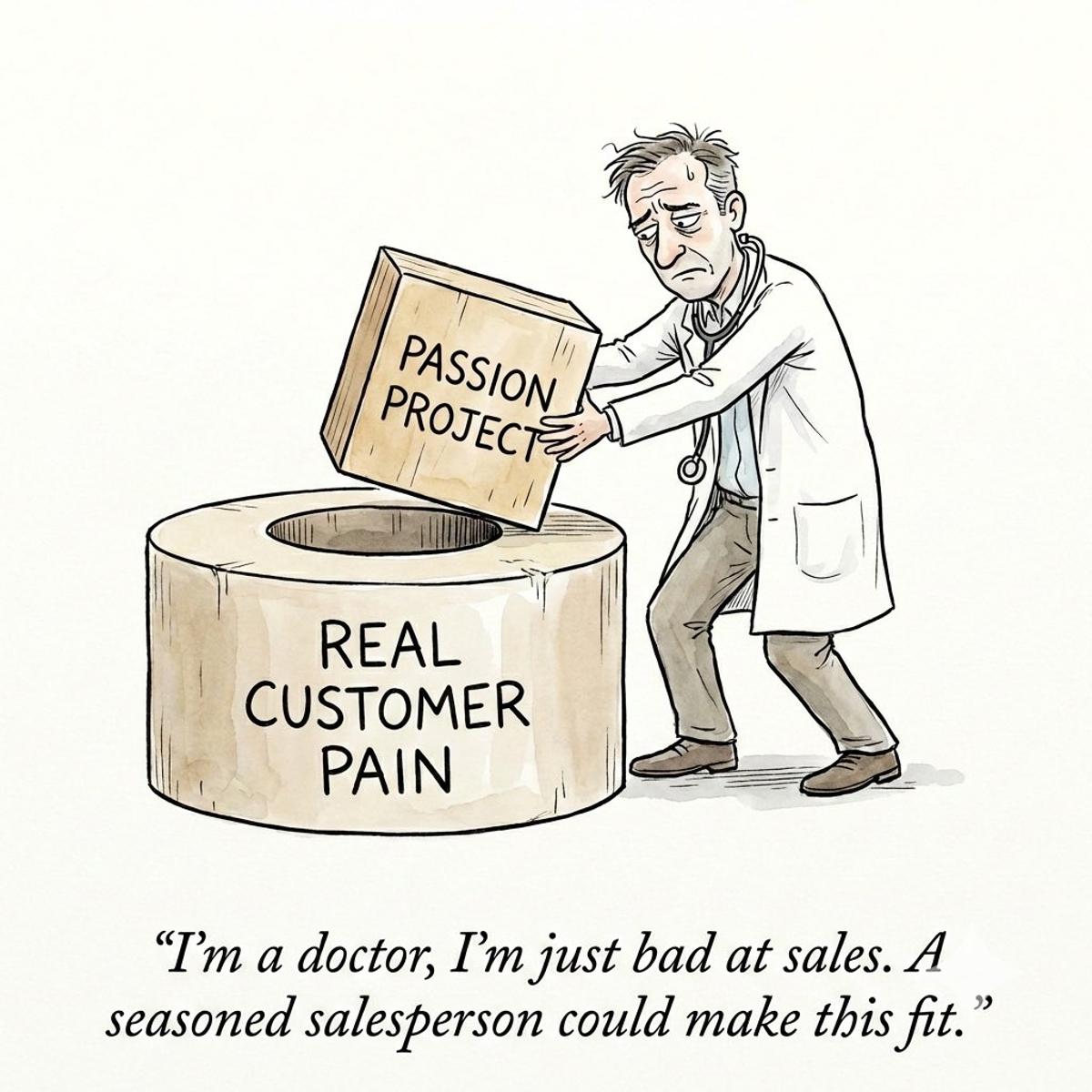

Clinician Startups Fail Because Product, Not Sales, Misses Market

When Clinician Entrepreneurs can’t get adoption, the Number 1 mistake is to think: “It’s not a product problem, it’s a sales problem.” Sorry but 99% of the time it IS a product problem. I’ve seen it over and over again from...