Future Class Divide: Focus vs AI‑Controlled Slop

A lot of folks talk about "escaping the permanent underclass". If AGI pans out, the future class divide won't be based on wealth, but on cognitive agency. There will be a "focus class" (those who control their attention and actually do things) and a "slop class" (those whose reward loops are fully RL-managed by AI).

Agent-Native Payments Set to Drive 10x Transaction Surge

I basically agree. Agents are soon going to be buying services/resources online, and the world is going to need agent-native payment infra, like Sam's @agentcashdev. We're probably looking at a >10x transaction increase.

True AGI Must Self‑Create Its Own Problem Harnesses

AGI will make its own harness (or whatever else it needs to solve a new problem). As long as you need a human engineer to handcraft a task-specific harness/system for each new problem, AI isn't general. It's an automation tool...

New AGI Eval Highlights Gaps, Drives Real Progress

If you care about the rate of AGI progress, you should be excited about a new eval that focuses research efforts by pointing out important gaps & providing a way to measure progress towards fixing them. If instead you only care...

AI Must Master Tiny Scientific Loops Before Curing Cancer

Many people expect that current AI is ready to cure cancer and do breakthrough new science. ARC-AGI-3 envs are like a microcosm of the scientific method: you must observe a tiny world, form a theory of how it works, test...

Ordinary Testers Solve ARC‑AGI‑3 Tasks without Training

To be clear, all ARC-AGI-3 environments are feasible for humans with no prior ARC-AGI-3-specific training. Our bar for feasibility is the following... Each environment was seen by 10 human testers. If 2 testers could independently clear it (successfully solving *all* levels...
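
The feasibility bar above can be read as a simple threshold check. What follows is a minimal sketch, assuming an environment counts as feasible once at least 2 of its 10 testers independently clear every level. The function name and data layout are hypothetical, and the post's full criterion is truncated, so any further conditions are not modeled here.

```python
def is_feasible(tester_results, min_clears=2):
    """Return True if enough testers cleared every level of an environment.

    tester_results: one list per tester, holding one bool per level
    (True means that tester solved that level). Hypothetical layout.
    """
    full_clears = sum(1 for levels in tester_results if levels and all(levels))
    return full_clears >= min_clears


# Illustrative data only: 10 testers, 3 levels each, 2 full clears.
results = [[True, True, True], [True, True, True]] + [[True, False, False]] * 8
print(is_feasible(results))
```

A tester who solves only some levels does not count towards the threshold in this sketch, matching the "*all* levels" wording in the post.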

ARC-AGI-4 Slated for Early 2027, Yearly Benchmarks

For those wondering about ARC-AGI-4 timing: it will be released in early 2027. We are aiming for a yearly release schedule for new benchmarks. We are also aiming for each new benchmark to be fully unsaturated upon release, and to...

ARC Prize Sets Actionable Goals and Tracks AGI Progress

ARC Prize's mission is to provide actionable goals for AGI research and to measure progress towards them.

ARC-AGI: Evolving Benchmark, Not Final AGI Test

Keep in mind: ARC-AGI is *not* a final exam that you pass to claim AGI. Including ARC-AGI-3. The benchmarks target the residual gap between what's hard for AI and what's easy for humans. It's meant to be a tool to measure...

True AGI Learns New Tasks as Efficiently as Humans

Human-level general intelligence is achieved when an AI system can approach a new task and figure it out, without human intervention, *with the same learning efficiency as humans*. If every new task requires human intervention, it's not general. If every new...

ARC-AGI-3 Launch Next Week After Year-Long Team Triumph

The ARC-AGI-3 launch is next week. Incredible work by the team over the past year.

Science Needs Exploration, Not Just AI‑Curated Knowledge

Current AI is a librarian of existing knowledge. Science requires an explorer of the unknown. You don't win a Nobel Prize by staying in the library.

Latent Space: Vectors Unleash Pure Possibility

There is a poetic depth to the term "latent space" that transforms vector coordinates into a frontier of pure possibility.

Future Breakthroughs Will Come From Beyond Parametric Models

The next major breakthrough will branch out at a much lower level than deep learning model architecture. It will be a new approach. A better model architecture can lead to incremental data efficiency & generalization gains, but it won't fix...

Coining “Selfmaking”: Crafting Identity Through Creation

There should be a word for this trait. Something like "selfmaking". You make your self by making things yourself.

Self‑thinking Needed Before genAI; Missed It? Good Luck

The time to learn how to think for yourself was before genAI. If you missed your chance, good luck.

Prompt Engineering Shows We're Still Far From AGI

The persisting importance of prompt engineering -- and now harness engineering -- is one of the best indicators of how far we are from AGI. A general system doesn't need a task-specific harness. And when provided with instructions, it is...

Sell Automation Tools Widely; Keep Inventions for Personal Profit

If you build an automation machine, the way to monetize it is to sell it to as many people as possible -- anyone who has tasks to automate. But if what you build is an invention machine, then the best way...

Seeking Latest AI-Generated Music Hits and Tools

What's the SotA for AI music generation these days? Any AI-generated bangers you've listened to lately?

Current AI Still Mirrors Human Guidance, Not Autonomy

The bottleneck of current AI is simple: the techniques we use are still predicated on pattern memorization and retrieval, and thus they need *someone* to tell them which patterns to memorize (training data, RL envs...). That role cannot yet be played...

Civilian Tech Now Fuels Military; AI Will Speed It

Up until roughly the 1970s, most civilian technological progress was downstream of military technological progress. The trend has since inverted. Today most of the modern (e.g. Ukraine) military playbook comes from consumer tech from the past two decades. With AI entering...

AI Agents Becoming Independent Economic Actors Within Two Years

AI agents will soon graduate to fully-fledged economic actors that buy services, compute, and even data in the course of accomplishing high-level goals. 1-2 years before we start seeing this at scale.

More Raw Data ≠ Higher Intelligence, Says Visual Compression

I keep reading this take (below) every few months, presented as if extremely profound, and it is just offensively dumb. It confuses data and information, it ignores the fact that not all information is equally valuable, and it ignores the...

AGI Emergence Unpredictable: Build, Don’t Theorize

It's basically impossible to predict what emergent properties you might get from scaling up a given algorithm. That's why AGI is much more an engineering endeavor than a theoretical one. It's a process of discovery through building.

AI Today Mirrors 1890s Physics: Early Yet Transformative

If you ever feel like you're late to the game, consider that in the 1890s many scientists thought physics as a field was completely solved (quote below is from Albert Michelson in 1894). On the front of intelligence science, it feels...

Task-Specific Training Covers Minuscule Slice of Possible Tasks

By explicitly training on specific tasks, we ended up covering a very large area (in absolute terms) of the space of all possible tasks humans can do, but this large area only amounts to 0.00...01% of the total space. And...

AI Excels with Familiar Tasks, Stalls on Novel Domains

Even after the steep progress of the past 3 months, it remains that AI performance is tied to task familiarity. In domains that can be densely sampled (via programmatic generation + verification), performance is effectively unbounded, and will keep increasing...

Principled Stance on Surveillance & Killbots Earns Respect

I gained a lot of respect for Dario for being principled on the issues of mass surveillance and autonomous killbots. Principled leaders are rare these days.

We Choose AI's Impact, Not Its Destiny

A lot of the current discourse about AI comes from a fatalistic position of total surrender of agency: "tech is moving in this direction and there's nothing anyone can do about it" (suspiciously convenient for those who stand to benefit...

Cheaper Engineer Hiring Accelerates Software Ecosystem Growth

It's probably accelerating from here. More code, more software engineers. More apps, more SaaS usage and revenue. More cloud consumption. And a whole lot of tokens through it all. If the cost of hiring software engineers was previously a bottleneck on...

AI's Task-Specific Skills Don't Equal General Intelligence

The field of AI is still struggling with the fact that task-specific skill is not the same as general intelligence.

AI Boosts Engineer Efficiency, Driving Higher Demand

It is becoming clearer that Jevons paradox applies to competent human software engineers. If AI makes them more efficient and more productive, demand for their work will increase.

Use AI to Amplify Thinking, Not Replace It

The best way to use AI is as an interface to information that lets you deepen and improve your own knowledge and mental models. The worst way to use AI is as a crutch to outsource and forsake your own cognition.

Agentic Coding Mirrors ML, Inheriting Its Pitfalls

Sufficiently advanced agentic coding is essentially machine learning: the engineer sets up the optimization goal as well as some constraints on the search space (the spec and its tests), then an optimization process (coding agents) iterates until the goal is...
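
The analogy above can be made concrete with a toy search loop. This is a minimal sketch, not how any real coding agent works: the test list stands in for the spec, `passes_spec` is the objective, and `proposals` is a hypothetical stand-in for successive agent attempts. All names are illustrative.

```python
from typing import Callable, Iterable, Optional

# The "spec": input/output pairs that any accepted implementation must satisfy.
TESTS = [((2, 3), 5), ((0, 0), 0), ((-1, 4), 3)]


def passes_spec(candidate: Callable[[int, int], int]) -> bool:
    """Objective function: True only if the candidate passes every test."""
    return all(candidate(*args) == expected for args, expected in TESTS)


def search(candidates: Iterable[Callable[[int, int], int]],
           budget: int = 10) -> Optional[Callable[[int, int], int]]:
    """Try candidates in order until one meets the spec or the budget runs out."""
    for step, candidate in enumerate(candidates):
        if step >= budget:
            break
        if passes_spec(candidate):
            return candidate
    return None


# Hypothetical stand-ins for successive agent proposals.
proposals = [
    lambda a, b: a * b,   # passes the (0, 0) test only
    lambda a, b: a - b,   # passes the (0, 0) test only
    lambda a, b: a + b,   # satisfies the whole spec
]

solution = search(proposals)
print(solution(10, 20) if solution else "no candidate met the spec")
```

Note that a lookup table covering just the three test inputs would also be accepted by `passes_spec`. The loop optimizes for the tests rather than for the intent behind them, which is the same overfitting failure mode familiar from machine learning.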

Google Grants TPU Compute Awards for Keras 3 Researchers

If you're a researcher in academia using Keras 3 (PhD student, postdoc, professor...) and you want to train on TPUs, you could receive compute awards from Google for your research. Google is running a new academic grant program, separate from...

6th‑Gen AV Platform Costs Could Halve

The 6th gen platform reportedly costs ~$70k per vehicle ($50k base + $20k custom fit & sensors). The cost has room to fall by 50% in the next 2 years (>$30k vehicle + $10k sensors). Waymo currently does over 500,000 driverless...
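
The headline figures can be checked with quick arithmetic. This sketch uses only the reported numbers quoted above. The 50% reduction is the post's projection rather than a measured value, and the parenthetical per-component breakdown is not modeled.

```python
base_cost = 50_000           # reported base vehicle cost, USD
custom_and_sensors = 20_000  # reported custom fit and sensors, USD

current_total = base_cost + custom_and_sensors

# Projected total if the cost falls by 50%, as the post suggests it could.
halved_total = current_total // 2

print(current_total)
print(halved_total)
```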

Two Types: Bias‑aware Users and Self‑proclaimed Polymaths

There are two categories of people: those who quickly figure out that chatbots give you the answer you expect when you ask questions in a biased way, and the ascended polymaths currently out-thinking every expert on Earth.

Impossible Returns Signal Superhuman AGI's Arrival

One of the first signs of the emergence of superhuman AGI will be the emergence of a quantitative trading firm with impossible returns.

Short-Term References Matter; Long-Term Lose Relevance

Short term, this is still the best reference point. Long term, past references might become much less useful.

Frontier Models Overfit ARC Format, Limiting Generalization

Interesting finding on frontier model performance on ARC -- due to extensive direct targeting of the benchmark, models are overfitting to the original ARC encoding format. Frontier model performance remains largely tied to a familiar input distribution.

AI Diagram Tool Still Slower than Manual Slides

Right now it's still taking me more time to generate medium-complexity diagrams by describing them to Nano Banana than by drawing them manually in Google Slides...

AGI Growth Limited by Fundamental Bottlenecks, Not Scaling

I don't think the rise of AGI will lead to a sudden exponential explosion in AI capabilities. There are bottlenecks on the sources of new capability improvements, and horizontally scaling intelligence in silicon (even by a massive factor) doesn't lift...

AGI Arrives When No Test Shows Human‑AI Gap

Reaching AGI won't be beating a benchmark. It will be the end of the human-AI gap. Benchmarks are simply a way to estimate the current gap, which is why we need to continually release new benchmarks (focused on the remaining...

Call Centers Signal AI‑Driven Job Crisis Ahead

A good canary in the coal mine for AI-caused job loss will be call centers. We're currently projecting ~2.75M call center jobs in the US in 2026. In 2016 it was ~2.63M. The global call center market size has grown...
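
To put the two US job figures in perspective, here is a short calculation of the implied growth. It is a sketch using the post's approximate numbers: the 2026 value is a projection, and the compound annual rate assumes smooth growth over the ten years, which is an assumption of this example rather than a claim from the post.

```python
jobs_2016 = 2.63e6  # approximate US call center jobs in 2016, per the post
jobs_2026 = 2.75e6  # projected US call center jobs in 2026, per the post
years = 2026 - 2016

# Total relative change over the decade.
total_growth = (jobs_2026 - jobs_2016) / jobs_2016

# Compound annual growth rate, assuming a constant yearly rate.
annual_growth = (jobs_2026 / jobs_2016) ** (1 / years) - 1

print(f"total growth: {total_growth:.1%}")
print(f"annualized:   {annual_growth:.2%}")
```

On these figures the job count is still growing slowly rather than shrinking, which is why a reversal would be a notable signal.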

ARC Advances Driven by Test-Time Adaptation, Not LLM Scaling

Lots of folks spread false narratives about how ARC-1 was created in response to LLMs, or how ARC-2 was only created because ARC-1 was saturated. Setting the record straight: 1. ARC-1 was designed 2017-2019 and released in 2019 (pre LLMs). 2. The...

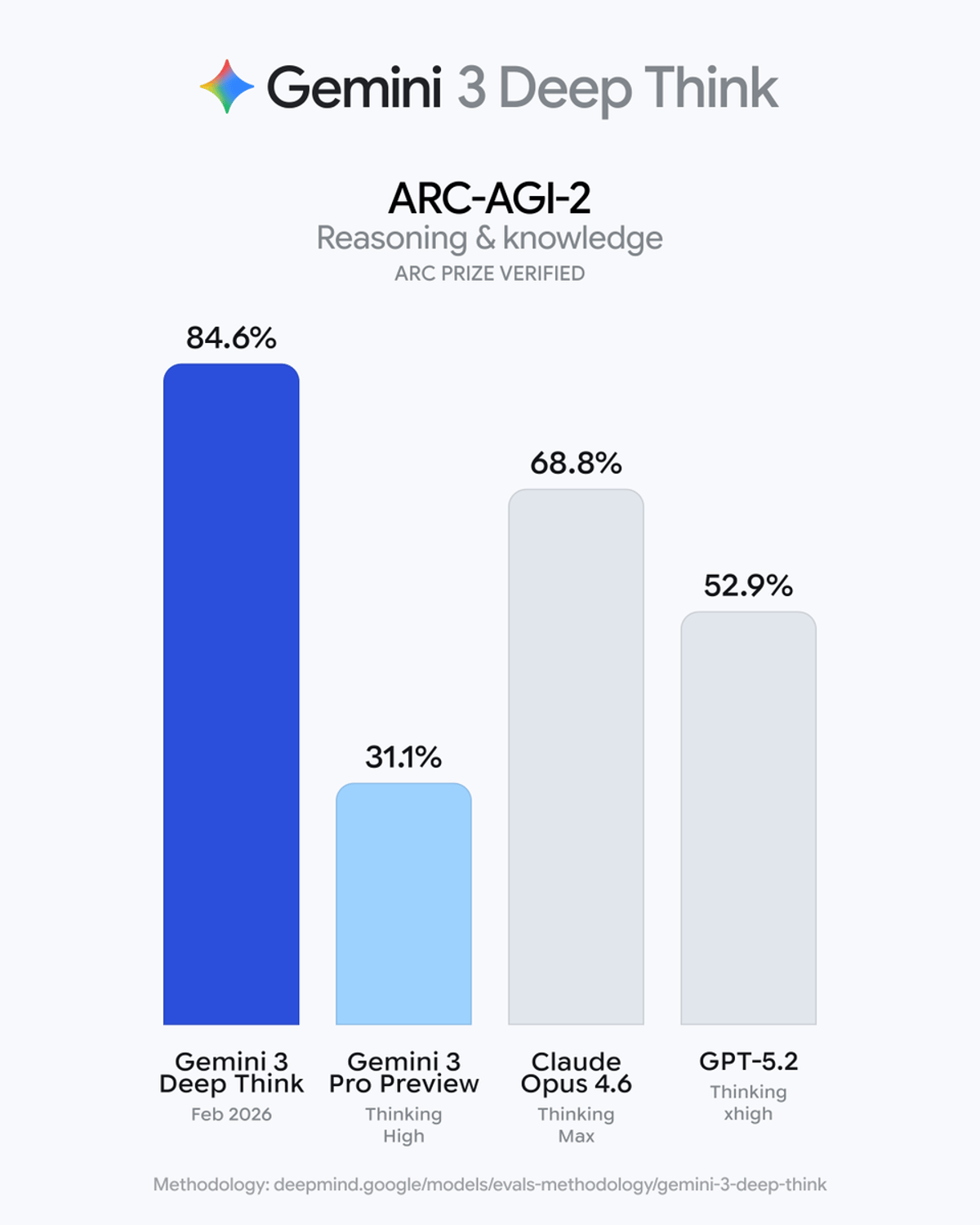

Gemini Deep Think Sets Record on ARC‑AGI‑2

The new Gemini Deep Think is achieving some truly incredible numbers on ARC-AGI-2. We certified these scores in the past few days. https://t.co/Q9qeJbCObK

Write High‑performance TPU/GPU Kernels in Python

You no longer need to leave Python to write high-performance hardware kernels. Learn how to use Pallas in Keras to author custom ops that lower to Mosaic for TPUs or Triton for GPUs: https://t.co/oeV4cmV4M0

AI Offloads Cognitive Load, Defying Age‑Related Brain Decline

One positive outcome of AI is that it will make the aging-related decline of your brain power less relevant. You can keep doing the same things just as nimbly at any age by offloading more.

More Code, More Liability: Speed Isn't Always a Blessing

I tend to view code as more of a liability than an asset. In this light, making it cheaper and faster to generate a lot of code might not be an unmitigated blessing.

GenAI Raises Baseline, Makes Mediocrity Economically Irrelevant

GenAI will not replace human ingenuity. It will simply raise the floor for mediocrity so high that being "pretty good" becomes economically worthless.