Overused Vagueposting Fuels AI Lab Fatigue

Grandiose vagueposting on Twitter is the one tried-and-true marketing strategy for AI labs. But as it gets overused, it eventually creates fatigue.

Transformers Need Internal Scratchpad for Sequential Reasoning

The Transformer architecture is fundamentally a parallel processor of context, but reasoning is a sequential, iterative process. To solve complex problems, a model needs a "scratchpad" not just in its output CoT, but in its internal state. A differentiable way to...

AI Should Augment, Not Replace, Human Thought

The goal of AI should not be to replace human thought and human agency, but to expand them. Not everything needs to be automated.

From Library to Scientist: AI’s Next Evolution

LLMs represent the "library" phase of AI. The next phase will be the "scientist" phase. A library contains answers, but a scientist knows how to find answers that don't exist yet.

LLMs Aid Brainstorming, but AI Needs Fresh Ideas

Evaluating the potential of LLMs to help with scientific discovery. In short: new ideas are direly needed to move AI towards invention. LLMs can be useful as brainstorming partners though. https://t.co/Zd0EKf8Z3n

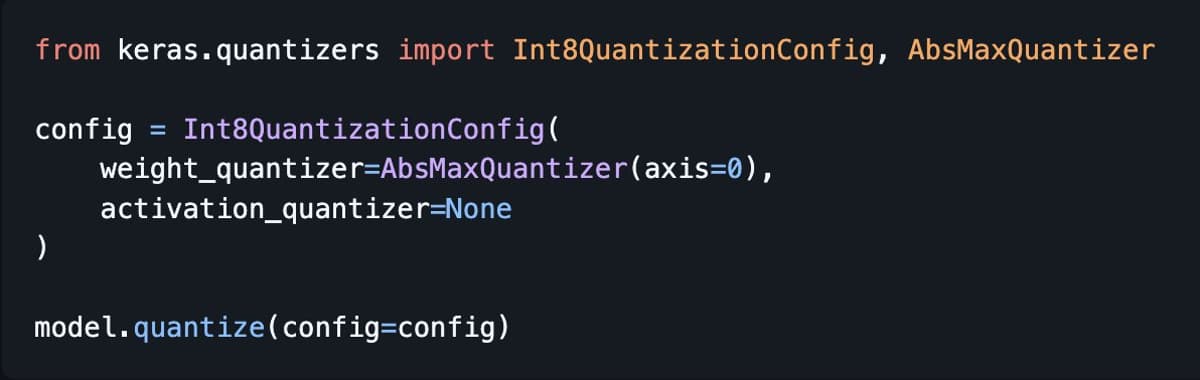

Keras 3.13 Introduces LiteRT Export and GPTQ Quantization

New Keras release: 3.13 🎉 Some major new features: • Model export to LiteRT (formerly TFLite) for mobile/edge • GPTQ quantization support for post-training compression • New Adaptive Pooling layers for dynamic architectures https://t.co/Ogmag7FYCY
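To make the "adaptive pooling" idea concrete, here is a minimal pure-Python sketch of 1D adaptive average pooling. It is not the Keras layer: the function name and the floor/ceil bin convention are assumptions made for illustration. It only shows how a fixed output size can be produced from inputs of varying length.

```python
import math


def adaptive_avg_pool_1d(values, output_size):
    """Average `values` into exactly `output_size` bins, whatever len(values) is."""
    n = len(values)
    if n == 0 or output_size <= 0:
        raise ValueError("need a non-empty input and a positive output size")
    pooled = []
    for i in range(output_size):
        # Bin edges use a floor/ceil convention (an assumption, not the Keras spec).
        start = (i * n) // output_size
        end = math.ceil((i + 1) * n / output_size)
        window = values[start:end]
        pooled.append(sum(window) / len(window))
    return pooled


# Two inputs of different lengths both come out with two values.
short = adaptive_avg_pool_1d([1.0, 2.0, 3.0, 4.0], 2)
long = adaptive_avg_pool_1d([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0], 2)
print(short, long)
```

The window size is derived from the input length rather than fixed in advance, which is why the output shape stays constant when the input shape changes.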

Innovation Thrives on Strong Links, Not Weakest Links

Innovation is a "strong-link problem". In a chain (weak-link problem), the weakest element breaks the system. In discovery (strong-link problem), the strongest element makes the breakthrough. The rest of the system provides the infrastructure that allows the outlier to function.

Our Brains Are Tuned to the Universe’s Stable Laws

Because our universe follows stable laws, a sufficiently general intelligent system adapted to it, like human-driven science, can eventually model any phenomenon within it. Human intelligence may not be "universal" in the mathematical sense (see No Free Lunch theorem), but we...

Collective General Intelligence Enables Science to Solve Any Solvable Problem

I would say there is no such thing as "universal" intelligence but there is definitely such a thing as "general" intelligence, and as a collective, we have it. "Science", modeled as an intelligent system (primarily powered by human intelligence) can solve...

Benchmark Humans Against the Best Alternative, Not Averages

You should measure human capability on a task not in terms of "average human" or "random human", but in terms of your best alternative (to AI) if you were to hire a human to solve the task. Which isn't average...

Anticipating the Upcoming ARC‑AGI‑3 Performance Numbers

Looking forward to the ARC-AGI-3 numbers :)

AI Shifts From Automation to Invention via Symbolic Search

AI will evolve from being an automation machine to becoming an invention machine. This will require a fundamentally new paradigm, with symbolic search at its core, not curve-fitting.

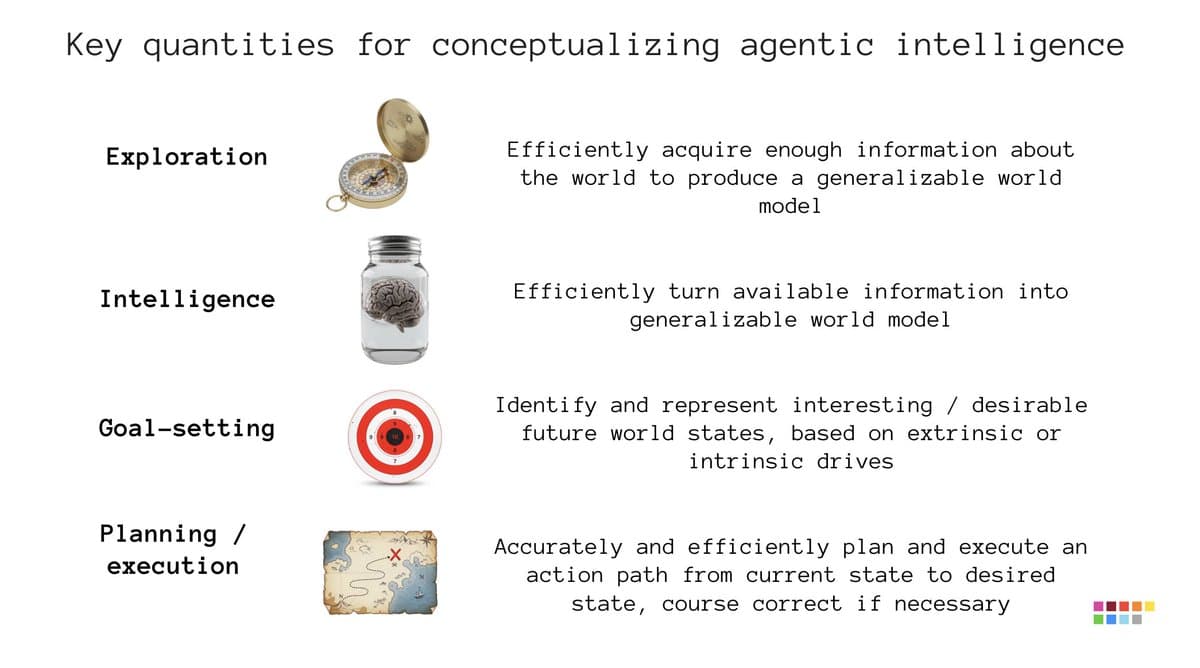

Intelligence Requires Exploration, Goal‑Setting, and Planning

Fluid intelligence as measured by ARC 1 & 2 is your ability to turn information into a model that will generalize. That's not the only thing you need to make an intelligent agent. To start with, when you're an agent in...

Test‑time Adaptation Unlocks Fluid Intelligence, but AGI Still Distant

Back in 2019, ARC 1 had one goal: to focus the attention of AI researchers towards the biggest bottleneck on the way to generality, the ability to adapt to novelty on the fly, which was entirely missing from the legacy...

Edit LLM Behavior Safely without Retraining, Says CTGT

Cyril and the team at CTGT are productizing mechanistic interpretability. They make it possible to edit the behavior of LLMs to add safety policy guarantees without retraining, in a way that is much more reliable than simple prompting.

ARC 2025 Highlights LLM Refinement & Zero‑Pretraining Advances

Congrats to the ARC Prize 2025 winners! The Grand Prize remains unclaimed, but nevertheless 2025 saw remarkable progress on LLM-driven refinement loops, both with "local" models and with commercial frontier models. We also saw the rise of zero-pretraining DL approaches like HRM...

Join Keras Community Meeting Today for Roadmap Updates

The Keras community video meeting is happening today at 10am PT (in 1 hr 10 min). Join to get updates on the development roadmap and ask the Keras team questions. URL in next tweet

True AGI Demands General Learning, Not Task Stacking

Either you crack general intelligence -- the ability to efficiently acquire arbitrary skills on your own -- or you don't have AGI. A big pile of task-specific skills memorized from handcrafted/generated environments isn't AGI, no matter how big.

Waymo on Track to Cover Over Half of the US by 2028

My prediction of Waymo covering >50% of the US by the end of 2028 is looking good.

AI Will Cross a Self‑improvement Threshold, Leading to Gradual Progress

There's a specific threshold of complexity and self-direction below which a system degenerates, and above which it can open-endedly self-improve. Current AI systems aren't close to it yet. But it's inevitable we will reach this point eventually. When we do, we...

Waymo Goes Fully Driverless in Dallas After Rapid Growth

Waymo started testing with a safety driver in Dallas just 4 months ago. They're now fully driverless -- no one but you in the car. Waymo has been expanding at >500% per year.

True Understanding Equals Minimal Compression, Not Massive Parameter Counts

To perfectly understand a phenomenon is to perfectly compress it, to have a model of it that cannot be made any simpler. If a DL model requires millions of parameters to model something that can be described by a differential equation of...

Half‑Price Deep Learning with Python 3rd Edition Today

Black Friday deal for Deep Learning with Python (3rd edition): 50% off, just today. Go buy it: https://t.co/EL58J1Zl22

https://t.co/XJNnjRCyYL

Gemini 3 Hits 31.1% on ARC‑AGI‑2 Benchmark

Gemini 3 scores 31.1% on ARC-AGI-2. Impressive progress.

Waymo Expands to Five New Cities, Scaling Fivefold Annually

Waymo is adding 5 new cities: Miami, Dallas, Houston, San Antonio, Orlando. Waymo has been growing about 5x per year since it started scaling its service in 2023.

Infinite AGI Returns Myth Inflates Tech Investment Tenfold

The notion that AGI would have infinite returns has been used to justify investment far above expected returns (by 10x-100x) for technology that is neither AGI nor on the path to AGI.

Algorithms Now Outpace Labs as Science’s Primary Tool

The most powerful scientific instrument of the 21st century isn't the electron microscope or the particle collider. It's the algorithm. Today, a scientist in biology, physics, chemistry etc. is more likely to be debugging a Python script than to be running...

TSGM: Keras 3 Library for Synthetic Time‑Series Generation

TSGM is a Keras 3 based library for generating synthetic timeseries datasets: https://t.co/cKNN2PJtG7

Deep Learning Demystified: Intuition‑Driven Modern Stack Guide

Deep learning is not a collection of black-box tricks, contrary to what many believe. It can be learned as a principled engineering discipline. This latest edition of Deep Learning with Python is my best attempt so far at teaching it. It...

Boost Colab Training Speed 4‑5× with TPU and `steps_per_execution`

If you're using Colab and you feel like training your model on GPU is slow, switch to the TPU runtime and tune the "steps_per_execution" parameter in model.compile() (higher = more work being done on device before moving back to host...
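A toy cost model can show why a higher `steps_per_execution` helps. The sketch below assumes each execution pays one fixed host round-trip on top of the per-step compute time. The function name and all timings are hypothetical numbers picked for illustration, not measurements from Colab or a TPU.

```python
import math


def epoch_time(steps, steps_per_execution, step_ms, sync_ms):
    """Toy cost model: compute time plus one host round-trip per execution."""
    executions = math.ceil(steps / steps_per_execution)
    return steps * step_ms + executions * sync_ms


# Hypothetical numbers chosen for illustration only, not measurements.
STEPS = 1000     # training steps per epoch
STEP_MS = 2.0    # on-device compute per step
SYNC_MS = 8.0    # host <-> device overhead per execution

for spe in (1, 8, 64):
    total = epoch_time(STEPS, spe, STEP_MS, SYNC_MS)
    print(f"steps_per_execution={spe:>3}: {total:.0f} ms per epoch")
```

Under these assumptions the overhead term shrinks roughly in proportion to the parameter, so the gain flattens once compute time dominates. The trade-off is that anything running on the host between executions, such as per-batch callbacks and logging, fires less often.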

ML Research: Build, Test, Learn—Not Just Speculate

ML research is an engineering discipline, not a philosophy seminar. You build, you test, you learn. Untested ideas are just speculation.

Autonomous AI Evolves by Coding Its Own Models

The path to autonomous AI is a system that learns to solve new problems by synthesizing models of them on the fly (as code), and that gets smarter over time by adding new abstractions to its own library (also as...

Understanding = Ability to Act Appropriately in Any Situation

For me, what it means to "understand" something can be characterized purely behaviorally. You understand a thing if you have the ability to act appropriately in response to situations related to the thing. You understand how to make coffee if you...

AGI's Solution Will Seem Obvious in Hindsight

When you see the solution to AGI, you will find that it was in fact so straightforward as to be obvious, and that it could have been developed decades ago.

One Day Left: Leaderboard Shifts, Overfitting Warning

One day left to submit to ARC Prize 2025 on Kaggle! Big changes at the top of the leaderboard these past few days, with the rise of teams NVARC and North Stars. Close contest between GiottoAI and ARChitects for the top...

Use JAX + Keras 3 for Scalable Deep Learning

For anyone getting started with deep learning in 2025 and looking to do large scale training: use JAX + Keras 3. Unless you like suffering.

Babies Invent Crawling, Proving Invention Is Human Core

Crawling isn't innate (unlike walking). Every baby must *invent* crawling, from scratch, using extremely little data, and no reference to imitate. Which is why different babies end up with different ways of crawling. Sometimes people tell me, "you say AI isn't...

Boredom, Not IQ, Limits Mastery—Persist to Reach Flow

The bottleneck for deep skill isn't usually intelligence, but boredom tolerance. Learning has an activation energy: below a certain skill threshold, practice is tedious, but above it, it becomes a self-sustaining flow state. The entire battle is persisting until that transition.

Gemini Overtakes ChatGPT as Default AI Reference

People (outside of tech) used to say "ChatGPT" to mean "an LLM chatbot", because most of the time, that's what it was. But recently I've been hearing a lot of "Gemini told me..." The writing is on the wall.

Clear Problem Specs Unlock Solutions, Vague Ones Stall

If a problem seems intractable, it's almost always because your specification of it is vague or incomplete. The solution doesn't appear when you "think harder". It appears when you describe the problem in a sufficiently precise and explicit fashion -- until...

ARC Prize Seeks Backend Engineer for AGI Evaluation

The ARC Prize foundation is hiring a backend engineer. If you're a builder with a strong track record and you're passionate about our mission of building the best AGI evals possible, please apply ⬇️

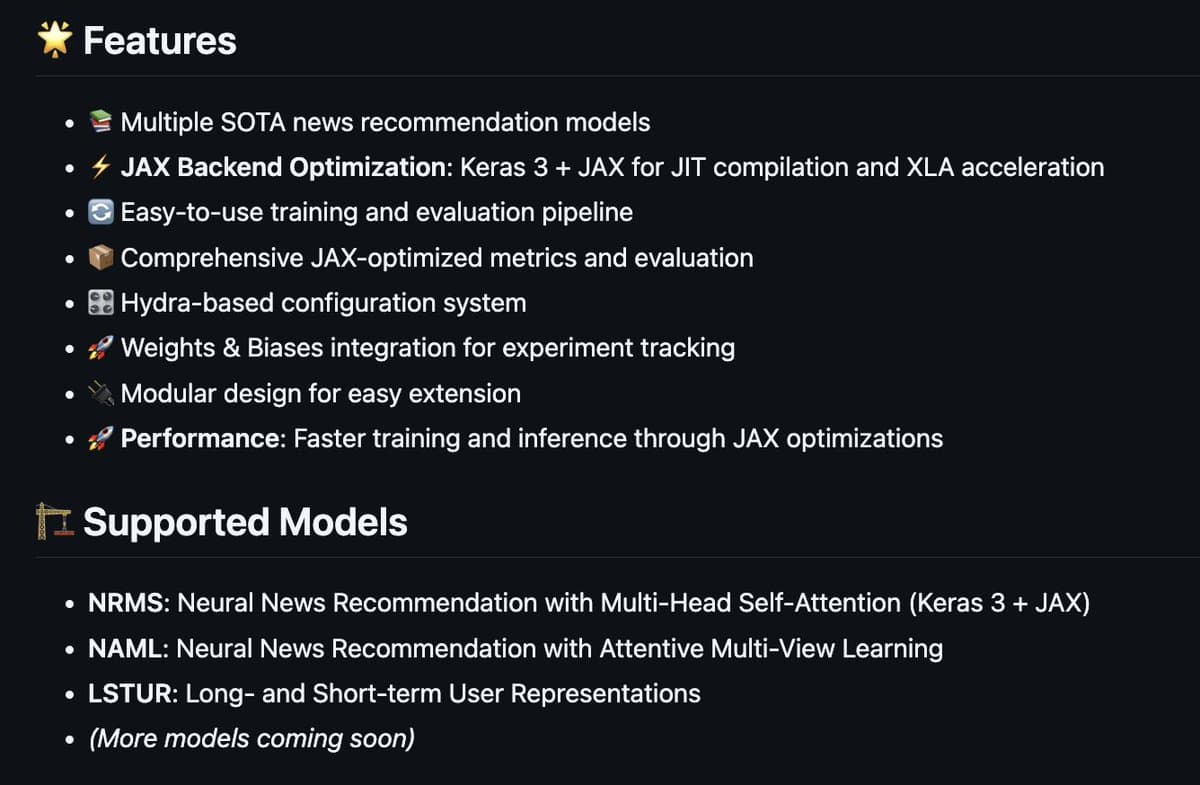

Modular News Recommendation Framework Scales with Keras 3 and JAX

NewsRex! 🦖 A modular framework for SOTA news recommendation, built on Keras 3 + JAX backend for extreme scalability and performance with XLA acceleration. Extensible and easy to use. GitHub link in next tweet. https://t.co/zSVkOgIqQC

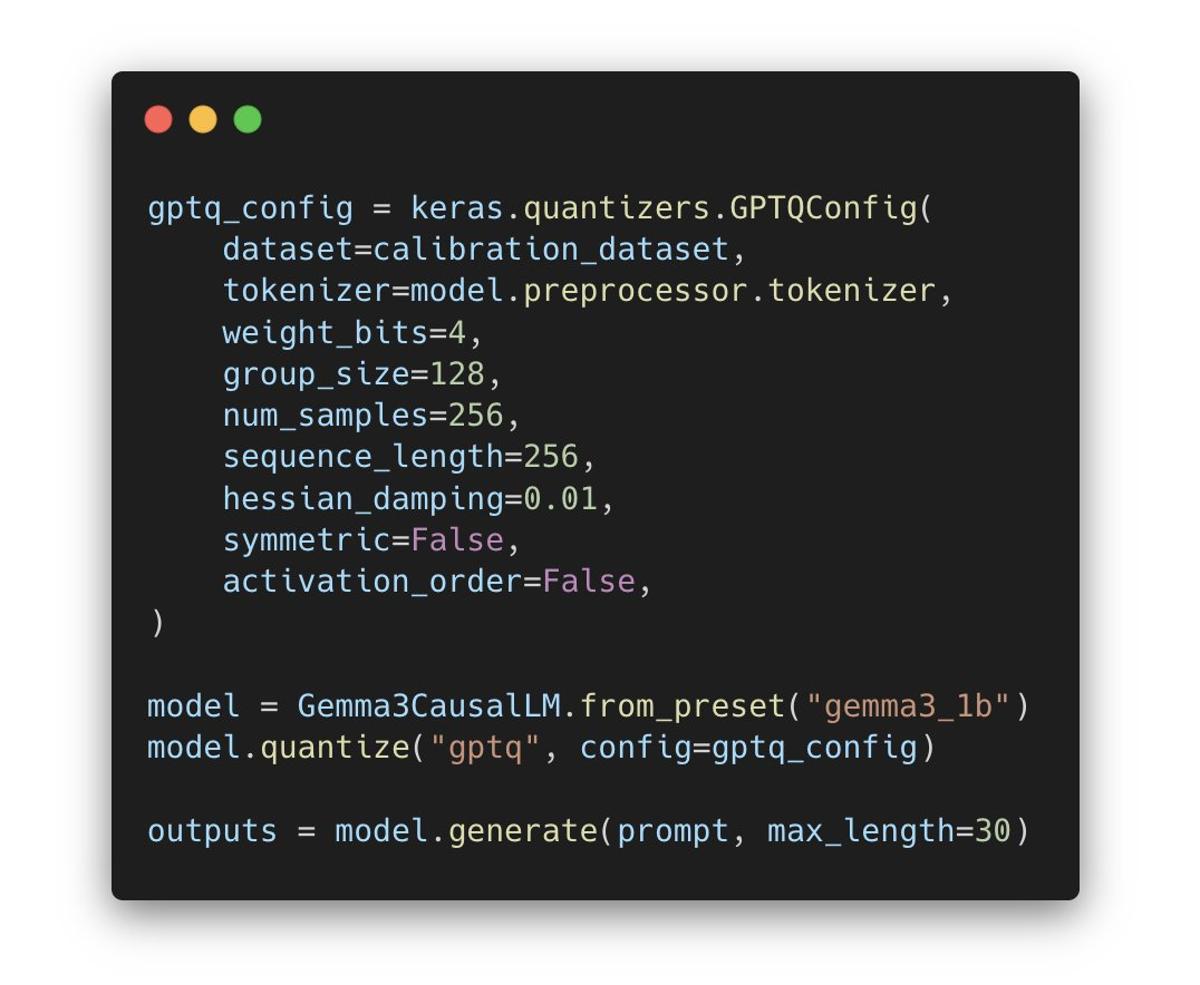

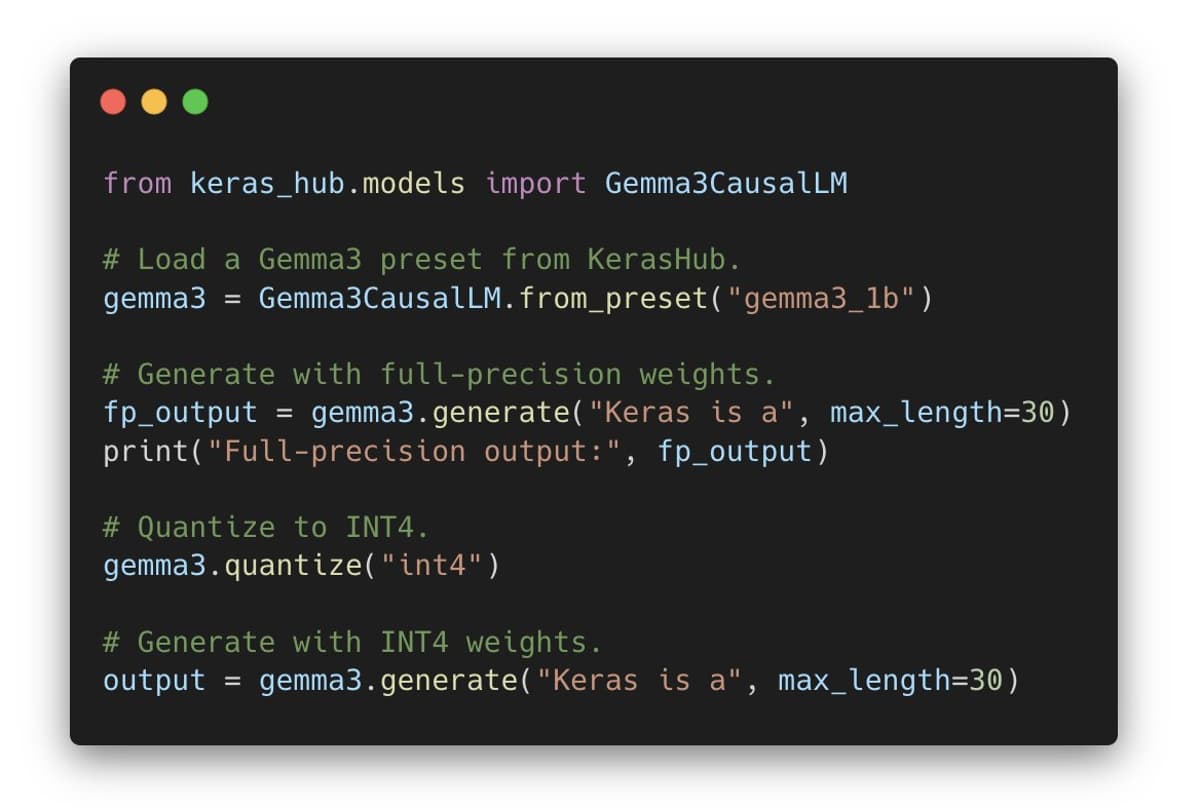

GPTQ Brings Int4 Layerwise Quantization to Keras 3

GPTQ is a post-training, weights-only quantization method that compresses a model to int4 layer by layer. For each layer, it uses a second-order method to update weights while minimizing the error on a calibration dataset. It comes built-in in Keras 3...
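The quantity GPTQ tries to keep small can be shown with a much simpler baseline. The sketch below is plain round-to-nearest int4 quantization, not GPTQ: it skips the second-order weight update entirely and only measures the output error of a quantized layer on calibration inputs. The toy layer, the calibration batch, and all function names are hypothetical.

```python
def quantize_row_int4(row):
    """Symmetric round-to-nearest int4: integer codes in [-8, 7] plus one scale."""
    max_abs = max(abs(w) for w in row)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in row]
    return codes, scale


def dequantize_row(codes, scale):
    """Map integer codes back to approximate float weights."""
    return [c * scale for c in codes]


def matvec(weights, x):
    """Apply a linear layer stored as a list of weight rows."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def calibration_error(weights, approx, calibration_inputs):
    """Mean squared difference between original and quantized layer outputs."""
    total, count = 0.0, 0
    for x in calibration_inputs:
        for a, b in zip(matvec(weights, x), matvec(approx, x)):
            total += (a - b) ** 2
            count += 1
    return total / count


# Hypothetical toy layer (2 outputs, 3 inputs) and calibration batch.
layer = [[0.70, -0.35, 0.10], [0.05, 0.21, -0.42]]
calibration = [[1.0, 0.5, -1.0], [0.2, -0.3, 0.8]]

quantized = [quantize_row_int4(row) for row in layer]
restored = [dequantize_row(codes, scale) for codes, scale in quantized]
error = calibration_error(layer, restored, calibration)
print(f"calibration MSE after int4 round-to-nearest: {error:.6f}")
```

Round-to-nearest treats every weight independently. As described above, GPTQ instead updates the weights of each layer with a second-order method so that this calibration error ends up lower than the naive baseline.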

Accelerate Keras Models with Low‑precision Quantization

Run your models faster with quantized low precision in Keras! You can quantize any model (one of your own models or a KerasHub pretrained model) via `model.quantize(mode)`. Supports int4, int8, float8, GPTQ. Works with JAX, TF and torch. Links to guides in next...