Know What's Happening in Big Data

Immuta launches Agentic Data Access module for AI agents

Immuta unveiled an Agentic Data Access module that lets autonomous AI agents retrieve enterprise data in real time while enforcing governance policies. The module treats agents as first‑class data users, applying least‑access and zero standing privileges and providing audit trails, all built on Immuta’s policy engine.

CIQ announced the CIQ Startup Program, giving early‑stage, VC‑backed startups six months of free access to its high‑performance AI infrastructure and up to an 80% discount for the following two years. The offering includes Rocky Linux AI with pre‑integrated frameworks, the Fuzzball container orchestration platform, hardened Linux images for regulated markets, and direct engineering support. The program targets companies from seed to Series C that run production AI workloads or need compliance‑ready environments. Startups report faster deployments and greater infrastructure efficiency under the new scheme.

In this episode, Tim Wilson, Val Kroll, and Spotify product manager/data scientist Mårten Schultzberg discuss the limits of focusing solely on win rates in experimentation and introduce a broader "learning rate" metric that captures wins, regressions (avoiding bad outcomes), and neutral...

Rocket Software announced a definitive agreement to acquire the Vertica analytics database from OpenText. Vertica, known for high‑performance, cloud‑ready analytics and AI/ML capabilities, will join Rocket’s portfolio of modernization tools. The cash‑funded deal is slated to close in mid‑2026, pending...

Commvault has launched Geo Shield, a sovereign‑data protection suite that lets enterprises dictate where data resides, who controls access, and who holds encryption keys. The offering spans four deployment models—from local hyperscaler SaaS to private sovereign clouds—supporting both BYOK and HYOK...

In this episode, Tim Berglund chats with data infrastructure veteran Richie Artoul about his unconventional path—from running a LAN gaming café to building log storage at Datadog and now leading WarpStream at Confluent. Richie shares the technical and cultural challenges...

The February 2 storage news ticker packed a series of vendor recognitions, product launches and strategic moves across data quality, protection, AI and memory markets. Ataccama earned the top Forrester strategy score, while Coldago’s 2025 map highlighted Cohesity, Commvault, Rubrik and...

4 practical AI lessons from sport. Sport is one of the best stress tests for AI, because decisions are fast, public, and high stakes. Here are 4 AI lessons every executive can steal from elite sport 👇 4) Fan Engagement...

Choosing The Right AI In 2026 Is No Longer About Choosing The Right Model. In 2026, choosing the right #AI comes down to matching #capability profiles to specific tasks, risk levels and business outcomes, rather than chasing benchmark winners. This...

Converting binary floating‑point numbers to decimal strings is a core step in JSON, CSV, and logging pipelines. Recent research benchmarks modern algorithms—Dragonbox, Schubfach, and Ryū—showing they are roughly ten times faster than the original Dragon4 from 1990. The study finds...
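To make the conversion step concrete, here is a minimal sketch of what "shortest round-trip" output means, which is the property the algorithms named above are designed to deliver. It assumes CPython, whose `repr` for floats returns the shortest decimal string that parses back to the same value; CPython does not use Dragonbox, Schubfach, or Ryū internally, so this illustrates the goal rather than any of the benchmarked implementations. The helper names are hypothetical.

```python
def shortest_repr(x: float) -> str:
    """Shortest decimal string that parses back to exactly x (CPython's repr)."""
    return repr(x)


def fixed_17_digits(x: float) -> str:
    """17 significant digits: always enough to round-trip a double, rarely the shortest."""
    return format(x, ".17g")


def round_trips(text: str, x: float) -> bool:
    """True when parsing the text recovers the identical double."""
    return float(text) == x


# A few doubles, including the smallest subnormal and the largest finite value.
values = [0.1, 1.0 / 3.0, 5e-324, 1.7976931348623157e308]
for v in values:
    short, padded = shortest_repr(v), fixed_17_digits(v)
    # Both strings recover the same double; the shortest form is never longer.
    assert round_trips(short, v) and round_trips(padded, v)
    assert len(short) <= len(padded)
    print(f"{short:>24}  vs  {padded}")
```

For JSON, CSV, and log output, the shorter form matters because it keeps payloads compact without losing any bits of the original value.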

An empirical analysis of 200 B2B AI projects from 2022‑2025 reveals a “Budget Paradox”: deployments under $20,000 achieve a median ROI of 159.8%, while larger, monolithic programs frequently fail to break even within two years. The study, validated by Harvard...

The article maps a data‑engineering career trajectory that begins with hardware‑oriented roles and ends in building scalable data pipelines. It highlights how circuit‑design thinking translates into logical data modeling, while emphasizing the need to acquire SQL, Python, and cloud‑native tools....

The article pits Apache Airflow, the open‑source workflow orchestrator, against Databricks Lakeflow, a newer Lakehouse‑native pipeline engine. It outlines core differences in architecture, integration depth with cloud data platforms, and pricing models. Airflow remains favored for heterogeneous environments, while Lakeflow...

The article highlights a single Polars pattern, chaining transformations with the pipe method, to streamline data‑frame code, cutting boilerplate and boosting readability up to tenfold. By chaining transformations in a lazy execution graph, developers avoid intermediate variables and gain clearer, more maintainable pipelines. The...

In this 34‑minute episode, Juan and Tim unwind over a beer to discuss recent developments in the data landscape and share their key takeaways from Data Day Texas. They cover topics such as the hype around AI versus real monetary...

Etleap announced a managed pipeline platform purpose‑built for Apache Iceberg, addressing the missing orchestration layer in Iceberg deployments. The solution consolidates ingestion, transformation, orchestration, and table operations into a single service that runs inside the customer’s virtual private cloud. By...

Photonic computing is emerging as a realistic path to higher‑throughput, more energy‑efficient data centers, potentially arriving before general‑purpose quantum machines. By using photonic integrated circuits to perform linear‑algebra operations in the optical domain, these systems promise faster speeds, greater bandwidth,...

Data center construction will need 2 million metric tons of cement by 2030, potentially releasing 1.9 million tons of CO₂ if conventional concrete is used. Tech giants such as Microsoft, Amazon and Meta have signed low‑carbon concrete offtake agreements with startups like...

California fabless chipmaker AmberSemi announced its new PowerTile, a quarter‑size, 1,000‑amp vertical power‑delivery module designed to sit behind AI processors in servers. The device claims to cut board‑level power distribution losses by 85%, potentially saving 225 MW of electricity per year...

AI performance hinges not just on GPUs or LLMs but on the storage layer that feeds data to accelerators. A Meta‑Stanford white paper shows storage can consume up to one‑third of the power used for deep‑learning training. When storage cannot...

In this episode, host Joe Reis shares his hobby of stress‑testing AI analytics agents and introduces his own testing framework. He evaluates Cube's new AI analytics agent, highlighting how its semantic‑layer approach prevents common failures like hallucinated tables and incorrect...

The episode highlights Gartner's new survey of 119 chief audit executives (CAEs), revealing that building a culture of innovation and leveraging data analytics and generative AI are the top internal audit priorities for 2026. While 83% of audit functions are...

Important new course: Agent Skills with Anthropic, built with Anthropic and taught by Elie Schoppik! Skills are constructed as folders of instructions that equip agents with on-demand knowledge and workflows. This short course teaches you how to create them...

Digital Edge announced a $4.5 billion investment to build a 500 MW AI‑ready hyperscale data‑center campus in Bekasi, Indonesia, with scalability to 1 GW. The CGK Campus will roll out in three phases, delivering the first building by Q4 2026 and completing the initial...

Bruce Momjian delivered a talk at Prague PostgreSQL Developer Day titled “What’s Missing in Postgres?” highlighting that most gaps in the open‑source database are performance‑related rather than functional. He fielded audience questions that ranged from the role of extensions to...

The UK government has launched a suite of free and subsidised AI training courses aimed at upskilling the adult workforce. Designed with input from Amazon, Google and Microsoft, the programme offers 14 modules that culminate in a virtual badge. The...

Meta Platforms has entered a multi‑year, $6 billion agreement with glassmaker Corning to supply optical fiber, cable and connectivity solutions for its expanding U.S. data‑center portfolio. The partnership will boost Corning’s manufacturing capacity in North Carolina, adding up to 20% more...

AI‑driven workloads are pushing rack power densities beyond 100 kW, forcing data‑center operators to adopt hybrid cooling that blends direct liquid cooling with traditional airflow. Greenfield projects offer a clean slate for liquid‑first designs, heat‑reuse systems, and renewable integration, but they...

The article explains how unused PostgreSQL indexes silently degrade performance by slowing write operations, increasing vacuum workload, and wasting disk space. It outlines a systematic, production‑safe process for identifying such indexes using statistics, constraint checks, and usage counters. The guide...

AI is transforming how organisations explore and understand their data. In this video, I show how natural language interfaces, real-time insight detection, and AI agents are reshaping decision-making and democratising analytics for everyone. 📊🤖 #DataAnalytics #AI #BusinessIntelligence #FutureOfWork #TechInnovation

In this episode, Genetec highlights data‑privacy best practices for physical‑security systems ahead of International Data Protection Day. Principal Security Architect Mathieu Chevalier stresses the need for clear data‑use limits, privacy‑by‑design controls, and continuous protection throughout the data lifecycle. The company recommends...

The article introduces the concept of a financial weather map, using data analytics to turn static financial reports into dynamic, forward‑looking risk and opportunity visualizations. By blending historical records with real‑time inputs, organizations can spot early warning signals such as...

Artificial intelligence is now being used to map the ripple effects of late payments across enterprises. By correlating invoice delays with cash‑flow strain, borrowing costs, and operational bottlenecks, AI turns raw payment data into actionable insight. Predictive models flag high‑risk...

Financial analytics is exposing the hidden expense of keeping legacy systems, revealing that seemingly functional tools mask productivity losses, delayed decision‑making, and compliance risks. The analysis shows minutes wasted on manual workarounds compound into millions of labor hours, while slower...

In this episode, Adi Polak interviews Bryan Oliver of Thoughtworks about his journey from building swimming pools to engineering massive GPU racks for AI workloads. Oliver explains the technical and operational challenges of running $3M GPU data centers, focusing on...

Enterprises are racing to adopt AI, yet 77% fear hallucinations and 70‑85% of projects fall short. Multi‑model AI, also called ensemble or consensus AI, tackles this by running several independent models and using majority agreement to validate outputs. Early adopters...
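The majority-agreement idea described above can be sketched in a few lines. This is an illustrative toy, not any vendor's implementation: the `consensus` function, its `min_share` threshold, and the whitespace and case normalization are all assumptions made for the example, and real systems would compare answers semantically rather than by exact string match.

```python
from collections import Counter
from typing import Optional, Sequence


def consensus(answers: Sequence[str], min_share: float = 0.5) -> Optional[str]:
    """Return the answer given by more than min_share of the models, else None."""
    if not answers:
        return None
    # Hypothetical normalization so trivial formatting differences do not split the vote.
    normalized = [a.strip().lower() for a in answers]
    winner, votes = Counter(normalized).most_common(1)[0]
    # Accept only a strict majority; otherwise flag the output as unvalidated.
    return winner if votes / len(normalized) > min_share else None


# Two of three models agree, so the shared answer is accepted.
print(consensus(["Paris", "paris ", "Lyon"]))
# No majority: the caller should treat the output as unverified.
print(consensus(["A", "B", "C"]))
```

Returning `None` when no majority exists lets the caller route the question to a human or a fallback path instead of trusting a single model.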

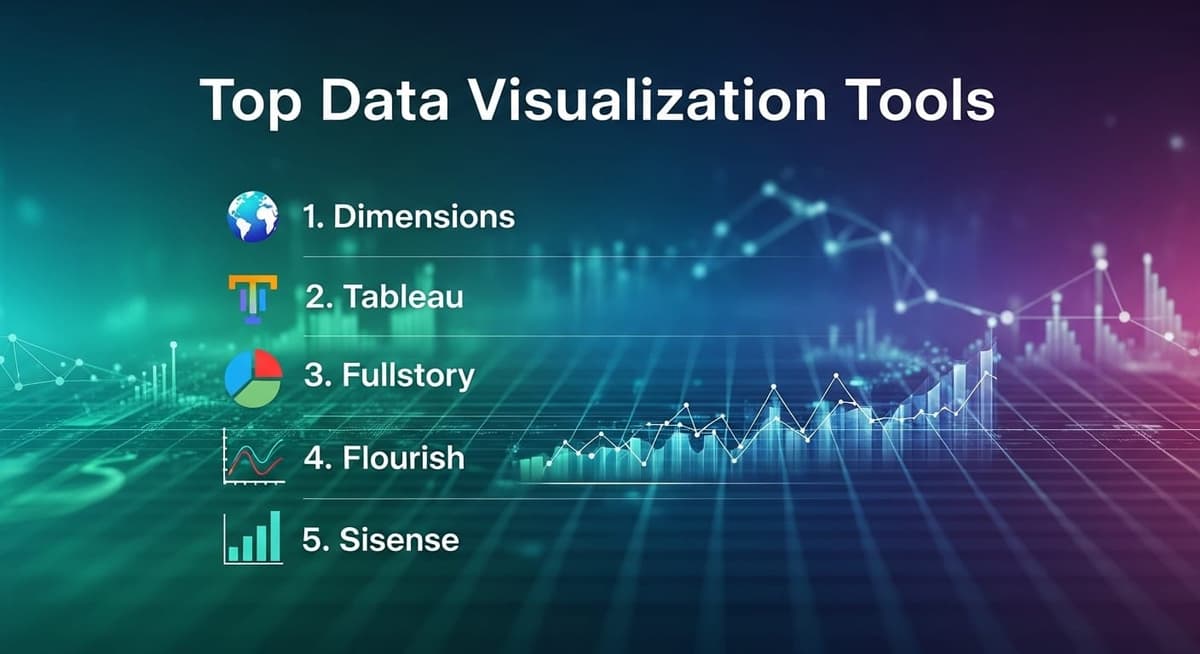

The article reviews five leading data‑visualization platforms—Dimensions, Tableau, Fullstory, Flourish and Sisense—highlighting each tool’s core capabilities for research projects. Dimensions pairs with VOSviewer for bibliometric network mapping, while Tableau excels at drag‑and‑drop dashboards across multiple data sources. Fullstory offers session‑replay...

The article evaluates enterprise‑grade cybersecurity platforms, outlining key criteria such as AI/ML capabilities, coverage breadth, autonomous response, total cost of ownership, and scalability. It reviews five leading solutions—Darktrace, CrowdStrike, SentinelOne, Palo Alto Networks, and Microsoft Defender—detailing each vendor’s strengths and...

AI Agents Are Killing Brand Loyalty, And Reshaping How We Shop. AI-driven personalisation and recommendations are changing consumer habits, and the article argues we may be witnessing the end of traditional brand loyalty. Read more 👉 https://lnkd.in/eKJxW8du #AI...

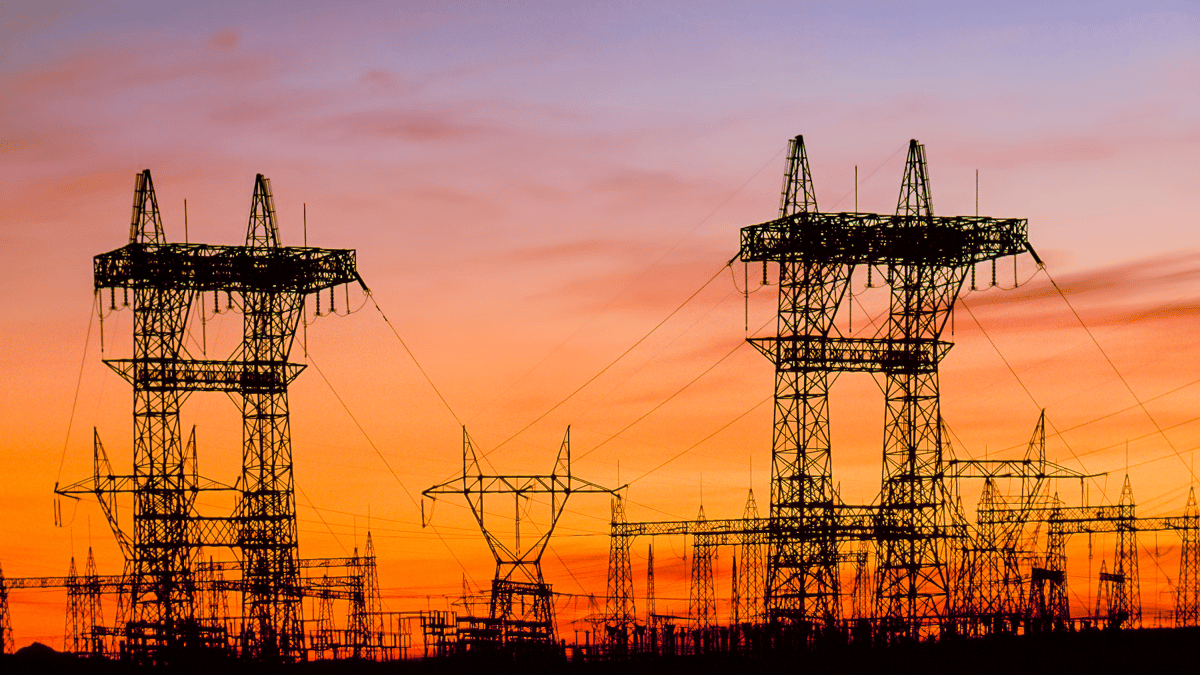

Power purchase agreements (PPAs) have long helped data‑center operators meet sustainability targets, but rising grid constraints are prompting a reassessment of their role. As AI workloads drive higher electricity demand, PPAs can provide fixed‑price, volume‑guaranteed contracts that may grant priority...

📊 AI is transforming business, education, healthcare, and the global economy at an unprecedented speed. But how big is the AI revolution really? In this video, I break down the most important AI facts, statistics, and trends that everyone needs...

5 Important Davos 2026 Signals Leaders Mustn’t Ignore. Discover the five under-the-radar themes from #Davos2026 that are reshaping #AI #strategy, digital sovereignty, #supplychain #resilience, #workforce #skills, and #trust in business. Learn what global leaders discussed away from...

Machine learning is now central to satellite‑object tracking, where algorithms scan thousands of orbital coordinates to flag collision risks far earlier than traditional radar. With over 12,000 active satellites, manual monitoring is infeasible, prompting platforms like Orb to blend telescope,...

DayOne, the Singapore‑based data‑center operator, announced an early‑stage plan to build a facility of up to 560 MW in Nurmijärvi, Finland, following a $2 billion Series C equity raise. The project, located in Klaukkala, will partner with Finnish utility Fortum to secure zoning approvals and grid...

FuelCell Energy and Sustainable Development Capital (SDCL) have signed a letter of intent to deliver up to 450 MW of fuel‑cell power for data‑center applications worldwide. The partnership focuses on Molten Carbonate Fuel Cells that can operate behind the meter, supplying...

The digital infrastructure sector is poised for trillion‑dollar growth as demand for cloud, AI and emerging technologies fuels massive data‑center expansion. Owners and operators must adopt holistic risk‑management that addresses interdependent threats from construction delays to cyber attacks. Advanced risk‑transfer...

Check out this video on how to use Agent Skills in Databricks. 👩🏽‍🔬 Gavita does a great job in this video.

In this episode, Unity Stoakes interviews Robin Roberts, CEO of datosX Digital Health Labs, about transforming digital health validation from a bottleneck into a catalyst for adoption. Roberts explains how datosX leverages tier‑1 health system partnerships to run regulatory‑grade validation...

The episode examines why the data industry’s discussion of data contracts stalled at theory rather than implementation, contrasting it with the software world’s shift toward spec‑driven development where specifications become the system itself. It argues that data contracts should be treated...

In episode #289 the hosts discuss the essential role of business acumen for data and analytics professionals, defining it as both a grasp of general business fundamentals (finance, marketing, P&L) and deep knowledge of one’s own organization and industry context....

In this episode, Armin Ronacher warns that AI agent psychosis could be making us collectively uneasy, while Dan Abramov breaks down the AT Protocol as a social filesystem for decentralized apps. RepoBar is highlighted as a tool that surfaces your...