Dashboard Dread to AI-Driven Decisions: How Tira Rebuilt Its Analytics Workflow

India’s leading beauty retailer Tira overhauled its analytics workflow by integrating Amplitude AI agents and the Model Context Protocol. The new stack automatically monitors KPI dashboards, sends targeted alerts, and generates AI‑driven daily summaries, reducing the analysis cycle from over a week to just one or two days. By enforcing role‑based access and avoiding PII, Tira maintained governance while scaling AI‑powered insights. The shift enables teams to ask questions directly rather than building static views.

MAHA Institute Names Chief Data Strategist Focused On Interoperability Issues

The MAHA Institute, a policy hub backing HHS Secretary Robert F. Kennedy Jr.'s "Make America Healthy Again" agenda, has appointed health‑technology executive Jaime Bland as its chief data strategist. Bland’s mandate centers on improving patient access to medical records and establishing...

Stop Adding Indexes: What's Actually Slowing Your SQL Server Queries When SSIS Loads Data

A non‑clustered index reduced a single query from 12 seconds to 400 ms, but the same indexes later doubled an SSIS load window from 40 to 90 minutes. Each index adds write‑time overhead on every INSERT, UPDATE, and DELETE performed by...

Turning SAP Data Into Agentic Insights with Qlik

Enterprises using SAP often struggle with siloed, hard‑to‑access data that hampers AI initiatives. Qlik’s new Data Products for SAP aim to turn that complexity into "agentic" intelligence by delivering pre‑configured models, automated field translations and real‑time AI querying. The solution...

ETL Tool Evaluation Checklist: 6 Things to Look for Before You Choose

Choosing the right ETL platform is critical for moving, preparing, and accessing data efficiently. A six‑point checklist—covering integration, usability, performance, transformation depth, security, and business fit—helps separate robust solutions from those that become costly bottlenecks. The guide highlights Zoho DataPrep...

Google’s Cloud Storage Gets Faster and Smarter for AI

Google Cloud unveiled a suite of AI‑focused storage upgrades at Next ’26, including the high‑throughput Cloud Storage Rapid service, an enhanced Managed Lustre offering, and new Smart Storage capabilities. Rapid Bucket promises over 15 TB/s bandwidth and sub‑millisecond latency, while Rapid...

How Norway's Welfare System Moved 400GB of Daily Logs to Managed OpenSearch without a Service Interruption

Norway’s welfare agency NAV replaced its legacy Elasticsearch logging stack with a managed OpenSearch service from Aiven, driven by a license change and a broader cloud migration. The migration used a dual‑write approach, sending logs to both systems simultaneously, which...
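
As a rough illustration of the dual‑write idea described above, the sketch below forwards each log record to two sinks. It is a minimal example under stated assumptions, not NAV's or Aiven's implementation: `DualWriter`, the list‑backed sinks, and the choice to treat the legacy sink as authoritative are all hypothetical.

```python
from typing import Callable

# A sink is any callable that accepts one log record (hypothetical interface).
LogSink = Callable[[dict], None]


class DualWriter:
    """Forward every log record to both a legacy sink and a new target sink."""

    def __init__(self, legacy: LogSink, target: LogSink) -> None:
        self.legacy = legacy
        self.target = target
        self.target_failures = 0

    def write(self, record: dict) -> None:
        # Assumption: the legacy system stays authoritative during migration,
        # so a failure there is allowed to propagate to the caller.
        self.legacy(record)
        try:
            self.target(record)
        except Exception:
            # The new system must not interrupt logging; count the miss so the
            # gap can be reconciled before cutover.
            self.target_failures += 1


# In-memory lists stand in for the two logging clusters.
legacy_store: list[dict] = []
target_store: list[dict] = []

writer = DualWriter(legacy_store.append, target_store.append)
for i in range(3):
    writer.write({"seq": i, "message": f"event {i}"})

print(len(legacy_store), len(target_store), writer.target_failures)
```

Comparing the two stores (or their counts) during the overlap period is one way to gain confidence before reads are switched to the new system.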

The Best Data Platform Development Companies for High-Growth Teams

Companies growing fast often hit data bottlenecks that stall reporting, AI, and supply‑chain visibility. The article lists the top data platform development firms for 2026, evaluating technical depth, delivery record, and industry fit rather than size or marketing hype. Overcode,...

Database World Trying to Build Natural Language Query Systems Again – This Time with LLMs

Database vendors are reviving the quest for natural‑language query tools, this time leveraging large language models. AWS unveiled a Bedrock‑based text‑to‑SQL service, Snowflake introduced Cortex Analyst, and MongoDB released a LangChain‑powered query API. Benchmarks show current LLM‑driven solutions achieve roughly...

How Earnix Elevate Data Accelerates Pricing and Underwriting Decisions

Earnix introduced Elevate Data, a modern data‑management layer that centralizes and automates data preparation for insurers and banks. The platform connects to enterprise sources like Snowflake, Amazon S3, and Databricks, delivering automated profiling, transformation, and governance. By refreshing datasets in...

Watershed Launches New AI Agents to Clean “Messy” Sustainability Data

Watershed, a climate‑solutions platform, unveiled AI‑driven agents that clean and structure messy sustainability data. The agents automate unit conversions, duplicate removal, missing‑value handling and can produce disclosure‑ready ESG reports with suggested decarbonization actions. Alongside the tools, Watershed launched an eight‑week...

Real-Time Analytics: Oldcastle Integrates Infor with Amazon Aurora and Amazon Quick Sight

Oldcastle APG migrated its Infor Cloud ERP to AWS and built a real‑time analytics platform using Amazon Aurora PostgreSQL and Amazon QuickSight. By leveraging Infor Data Fabric Stream Pipelines, an NLB, RDS Proxy, and API Gateway, the company streams ERP...

Litmus and InfluxDB Collaborate to Modernize the Industrial Data Stack

InfluxData and Litmus announced a strategic partnership at Hannover Messe to integrate Litmus Edge with InfluxDB 3 Enterprise, creating a unified industrial data stack. The solution bridges OT systems to modern IT, delivering real‑time, high‑resolution telemetry with edge buffering and centralized analytics....

What Effective Oversight Looks Like in an Agent-Driven World

Enterprises are confronting a governance gap as AI agents move from answering queries to autonomously initiating workflows, updating records, and making decisions at machine speed. Traditional oversight—built around human roles, approvals, and periodic audits—cannot keep pace with the real‑time, cross‑system...

Cortex Code Expands: One Governed Agent for Your Entire Data Stack, Everywhere You Work

Snowflake announced that its Cortex Code AI coding agent is now available as a governed Cloud Agent inside Snowsight, adding zero‑install execution, web browsing, persistent storage and background tasks. The update introduces Plan Mode and Snap & Ask, giving users a previewable...

Automating Threat Detection Using Python, Kafka, and Real-Time Log Processing

Real‑time threat detection can be hardened by treating logs as a durable Kafka stream, normalizing them into a stable schema, and evaluating detections continuously. The article outlines a streaming‑first design that captures raw telemetry, applies Elastic Common Schema or OpenTelemetry‑style...
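
The normalize‑then‑detect flow described above can be sketched without any infrastructure. The example below is an assumption‑laden illustration rather than the article's code: an in‑memory list stands in for the Kafka topic, the output field names are only loosely modeled on Elastic Common Schema, and `normalize`, `detect_bruteforce`, the raw field names, and the thresholds are hypothetical.

```python
from collections import defaultdict, deque
from typing import Iterable, Iterator


def normalize(raw: dict) -> dict:
    """Map a raw record with inconsistent field names onto one stable schema."""
    return {
        "timestamp": float(raw.get("ts", 0.0)),
        "source_ip": raw.get("ip") or raw.get("src") or "unknown",
        "action": raw.get("action", "unknown"),
        "outcome": raw.get("result", "unknown"),
    }


def detect_bruteforce(
    events: Iterable[dict], threshold: int = 3, window_s: float = 60.0
) -> Iterator[dict]:
    """Yield an alert when one source IP has `threshold` failed logins within the window."""
    recent: dict[str, deque] = defaultdict(deque)
    for event in events:
        if event["action"] != "login" or event["outcome"] != "failure":
            continue
        times = recent[event["source_ip"]]
        times.append(event["timestamp"])
        # Drop failures that have fallen out of the sliding window.
        while times and event["timestamp"] - times[0] > window_s:
            times.popleft()
        if len(times) >= threshold:
            yield {
                "rule": "bruteforce",
                "source_ip": event["source_ip"],
                "count": len(times),
                "timestamp": event["timestamp"],
            }


# Hypothetical raw telemetry; note the inconsistent "ip" / "src" keys.
raw_stream = [
    {"ts": 0, "ip": "10.0.0.5", "action": "login", "result": "failure"},
    {"ts": 10, "src": "10.0.0.5", "action": "login", "result": "failure"},
    {"ts": 15, "ip": "10.0.0.9", "action": "login", "result": "success"},
    {"ts": 20, "ip": "10.0.0.5", "action": "login", "result": "failure"},
]

alerts = list(detect_bruteforce(normalize(r) for r in raw_stream))
print(alerts)
```

Because both functions consume and produce plain iterables, the same logic could in principle sit behind a real stream consumer; keeping the raw records durable in the stream would also allow a rule to be re‑run over history after it changes.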

Addressing the Challenges of Unstructured Data Governance for AI

Enterprises in regulated sectors are expanding data governance beyond warehouses to the massive, unstructured data that now fuels AI models. Leaders cite visibility, lineage, and dynamic access‑control as the toughest hurdles, especially for documents like contracts, health records, and design...

Miovision Launches GenAI Agent for Traffic Departments

Miovision has introduced Mateo, the industry’s first purpose‑built generative AI agent for intelligent mobility, embedded in its Miovision One platform. The agent translates complex traffic datasets into natural‑language insights, producing charts, maps and safety metrics on demand. In beta trials...

PostgreSQL Performance: Is Your Query Slow or Just Long-Running?

PostgreSQL performance issues fall into two distinct categories: slow queries caused by inefficient execution, and long-running queries that simply process large workloads. Slow queries consume excess CPU and I/O due to missing indexes, bad statistics, or poor join strategies, while...

What Is a Business Intelligence Strategy? A Guide to Scalable, AI-Ready Analytics

Business Intelligence (BI) strategy is a long‑term blueprint that defines how organizations collect, govern, analyze, and operationalize data to achieve business goals. It ensures consistent metric definitions, embeds insights into workflows, and balances governance with self‑service access. Modern BI strategies...

3 Data Trends Shaping the Race to AI Across Industries in 2026

Snowflake’s Data Trends 2026 report identifies three cross‑industry AI imperatives: agentic AI is moving from pilot to production, the data foundation remains the chief bottleneck, and governance with semantic standards is emerging as a competitive moat. The report cites that...

Why Embedding Pipelines Break at Scale and How Lakehouse Architecture Fixes Them

Embedding pipelines work well for small prototypes but quickly break when the document corpus grows to millions and models evolve. Re‑embedding entire datasets becomes costly, and vector databases lack the lineage needed to answer compliance questions about which model or...

Pinecone Makes Dedicated Read Nodes Generally Available

Pinecone announced the general availability of Dedicated Read Nodes (DRN), a new tier that offers fixed hourly pricing, always‑hot data, and scalable read capacity for vector‑search workloads. DRN delivers predictable low‑latency, high‑throughput reads by provisioning memory and local SSD, while...

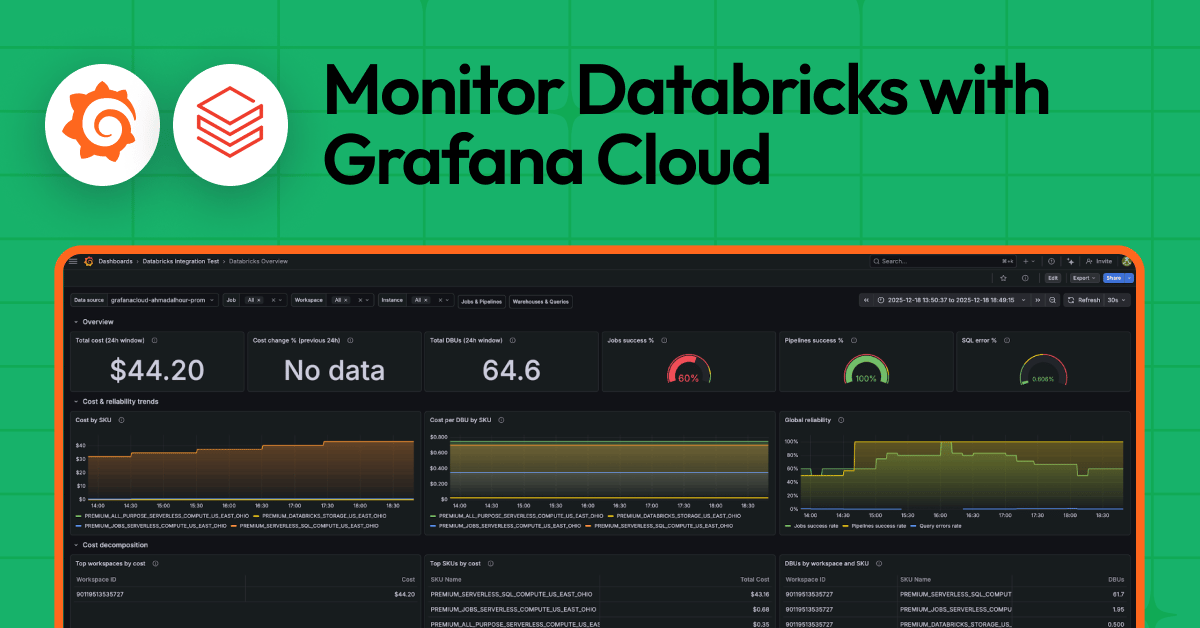

Monitor Databricks with Grafana Cloud for Instant Visibility Into Your Workloads

Grafana Cloud launched a native Databricks integration that streams billing, job, pipeline, and SQL warehouse metrics directly into Grafana dashboards. The offering includes three prebuilt dashboards and 14 default alert rules tailored for FinOps, SRE, and analytics teams, eliminating the...

Metadata Driven Data Engineering: Declarative Pipeline Orchestration in Lakeflow

Databricks Lakeflow introduces a metadata‑driven, declarative model for streaming pipelines, letting engineers define tables and flows with simple Python decorators instead of hand‑coded Spark jobs. The platform automatically infers dependencies, builds an execution DAG, and orchestrates jobs with built‑in retry,...

BESS Analytics ‘Bridge the Gap Between Technical Performance and Commercial Outcomes’

Battery energy storage system (BESS) operators are turning to cloud‑based analytics to overcome fragmented data and inconsistent performance metrics. TWAICE, a German firm with a growing U.S. presence, provides a platform that standardizes health, performance, and lifetime metrics across individual...

Kleene.ai Launches KAI Assistant: A Native AI Interface for Its Data and Analytics Platform

Kleene.ai introduced KAI Assistant, a native AI layer that lets data teams and business users generate SQL, debug pipelines, and explore data using natural language. The tool, built on Google Gemini via Vertex AI, converts data to synthetic form with...

Tredence Named a Market Leader in the Inaugural ISG Provider Lens™ 2026 Databricks Ecosystem Partners Report

Tredence has been named a Leader in ISG’s inaugural Provider Lens™ 2026 Databricks Ecosystem Partners report. The firm is praised for its AI‑first, agent‑based approach to modernizing data estates and accelerating decision intelligence on Databricks. The recognition highlights Tredence’s portfolio...

The Hidden Complexity of Multi-Cloud Data Architecture (And How to Master It)

A Fortune‑500 enterprise migrated 440 products to a multi‑cloud environment spanning AWS, Azure and GCP, ending up with 57 Snowflake accounts and soaring egress costs. The team discovered that compute, not storage, accounted for over 80% of spend and that...

What Power BI DirectQuery Does to Your SQL Server (and How to Fix It)

Power BI DirectQuery pushes every visual interaction to SQL Server as live T‑SQL, turning dashboards into a flood of ad‑hoc queries. The generated SQL is verbose, with nested subqueries, CASTs and non‑sargable predicates that strain the plan cache and indexes....

GitHub Copilot's New Policy for AI Training Is a Governance Wake-Up Call

GitHub announced that, beginning April 24, 2026, interaction data from Copilot Free, Pro and Pro+ users—including prompts, code snippets and context—will be used to train its AI models by default, unless users opt out. Business and Enterprise customers are exempt...

Codelco Taps Microsoft for Analytics, AI

Codelco, the world’s largest copper miner, has signed an 18‑month partnership with Microsoft to deploy artificial intelligence, advanced analytics, automation and digital security solutions. A joint governance board will oversee strategic and operational execution, ensuring pilots and early‑stage tests align...

Survey: Poor Data Infrastructure Creates Waste in AI Spending

A Hitachi Vantara survey of 1,200 executives reveals that legacy data environments are hampering AI returns, with 84% of North American firms describing their data stacks as overly complex. AI budgets are set to surge 76% over the next two...

Oracle Delivers Semantic Search without LLMs

Oracle introduced Trusted Answer Search, a semantic search solution that relies on vector similarity rather than large language models. Enterprises define a curated search space of approved documents and metadata, enabling deterministic, auditable responses such as reports or URLs. The...

Dbt Projects on Snowflake: Build & Deploy with Cortex Code

Snowflake’s Cortex Code adds an AI‑driven layer to dbt projects, accessible via the Snowsight UI or a lightweight CLI. The tool bridges local development and Snowflake, auto‑generating SQL, documentation, tests, and YAML updates from natural‑language prompts. It also scans run...

Do You Still Need to Centralize Your Data if Your Interface Is Claude?

The article argues that Claude‑style AI agents can serve as a universal interface for analytics, CRM, and campaign tools, potentially eliminating the need for a centralized data warehouse. However, this only works when user identities and schemas are consistent across...

Storage News Ticker – April 17

April 17’s data‑management ticker highlighted a wave of product launches and market milestones aimed at simplifying AI‑driven data workflows and bolstering sovereign‑cloud resilience. Adeptia’s Automate 5.2 adds natural‑language querying for workflow diagnostics, while Ataccama ONE offers audit‑ready evidence to satisfy the EU...

Shaun Thomas: Enforcing Constraints Across Postgres Partitions

PostgreSQL’s partitioned tables cannot enforce a global unique or primary key unless the constraint includes the partition key, because each partition maintains its own index. Developers often need uniqueness across all partitions for deduplication, but the built‑in limitation forces workarounds....
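
The reason per‑partition indexes cannot guarantee global uniqueness can be shown with a toy model. The sketch below is plain Python, not PostgreSQL, and makes no claim about the workarounds the post goes on to discuss; `Partition`, `PartitionedTable`, and the month‑based routing are hypothetical stand‑ins.

```python
class Partition:
    """One partition with its own local unique index on event_id."""

    def __init__(self) -> None:
        self.index: set[int] = set()

    def insert(self, event_id: int) -> None:
        # The uniqueness check only sees keys stored in this partition.
        if event_id in self.index:
            raise ValueError(f"duplicate event_id {event_id} in partition")
        self.index.add(event_id)


class PartitionedTable:
    """Routes rows to a partition by month (the partition key)."""

    def __init__(self) -> None:
        self.partitions: dict[str, Partition] = {}

    def insert(self, event_id: int, month: str) -> None:
        partition = self.partitions.setdefault(month, Partition())
        partition.insert(event_id)


table = PartitionedTable()
table.insert(1, "2026-01")
table.insert(1, "2026-02")  # accepted: the other partition's index is never consulted

try:
    table.insert(1, "2026-01")  # rejected: same partition, same key
    same_partition_rejected = False
except ValueError:
    same_partition_rejected = True

copies = sum(1 in p.index for p in table.partitions.values())
print(copies, same_partition_rejected)
```

In this model, uniqueness holds only for the pair (month, event_id), which mirrors why a constraint on a partitioned table has to include the partition key.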

DuckDB Uses RDBMS to Attack Classic 'Small Changes' Problem in Lakehouses

DuckDB Labs released DuckLake v1.0, a production‑ready lakehouse format that uses an embedded RDBMS as a metadata catalog to batch tiny data changes before flushing them to Parquet files. By storing row‑level inserts and deletes in DuckDB, PostgreSQL or SQLite, the...

Why Hospital Dashboards Tell the Future But Operations Remain Stuck in the Past

Over the past decade, hospitals have poured capital into data warehouses, interoperability and predictive dashboards, creating an abundance of real‑time intelligence. Yet most health systems still treat analytics as a reporting layer, with decisions anchored in historical precedent and negotiated...

When, and When Not, to Use LLMs in Your Data Pipeline

Data teams often rush to add large language models (LLMs) to pipelines, but misapplication can cause cost, latency, and compliance headaches. The guide outlines where LLMs truly add value—unstructured text enrichment, semantic search with retrieval‑augmented generation, natural‑language‑to‑SQL, and anomaly explanation—while...

Stop Waiting for Insights. Build the System That Produces Them

Marketing leaders often centralize customer data expecting instant insights, but they end up with clean dashboards that lack actionable guidance. The article argues that insight emerges only when data is coupled with a systematic execution engine that captures response metrics...

The End of the Informatica Era: Why Chief Data Officers Are Replacing Legacy MDM with Agentic Architecture

In November 2025 Salesforce completed an $8 billion acquisition of Informatica, prompting CDOs to reassess their MDM roadmaps. Gartner forecasts that by late 2026 roughly 65% of MDM tasks will be handled autonomously, making agentic architectures the new norm. Syncari’s cloud‑native,...

What Engineering Leaders Get Wrong About Data Stack Consolidation

IBM's $11 billion acquisition of Confluent underscores a growing trend of open‑source data tools being folded into large vendor platforms. The article warns that such consolidation creates hidden architectural debt, eroding engineering autonomy and long‑term portability. It urges leaders to evaluate...

Snowflake Storage for Apache Iceberg™ Tables: Snowflake Simple Interoperability

Snowflake announced public availability of Snowflake Storage for Apache Iceberg™ tables on AWS and Azure, letting customers host Iceberg data on Snowflake‑managed storage. The service eliminates the need for self‑managed cloud buckets, providing built‑in encryption, IAM handling, and metadata tracking....

Isle of Man Wants to Turn Datasets Into Balance-Sheet Assets

The Isle of Man has introduced a Data Asset Foundation (DAF), a statutory legal structure that lets companies register curated datasets as formal property assets. Once entered into a DAF, data can be listed on balance sheets, used as collateral,...

Quantiiv’s Virtual Executive for Restaurants; Popmenu and SpotOn Create a Unified Commerce Platform

Quantiiv has introduced ROGER, an AI‑powered virtual executive that lets restaurant managers ask business questions via email and receive actionable insights. The tool pulls data from POS, inventory, marketing, weather and macro‑economic sources, mimicking a team of analysts. Over 650...

Redpanda Connect Eliminates Silos with Salesforce Connectors, Streaming CDC for Oracle and DynamoDB

Redpanda announced the general availability of four new Redpanda Connect components—a DynamoDB change‑data‑capture (CDC) input, an Oracle CDC input, and both a processor and an output for Salesforce. The connectors run natively in any Kubernetes cluster, letting teams replace fragile...

Why ETL Needs a Mindset Shift

Most organizations still treat ETL as a one‑time project, assuming pipelines are set once and never need change. In reality, evolving CRM fields, new finance rules, and compliance requirements constantly break static pipelines, forcing teams back to manual fixes. The...

Press Release: IntellectEU Launches Catalyst Data Intelligence to Enable ISO 20022 Structured Data Readiness

IntellectEU announced the launch of Catalyst Data Intelligence, an AI‑driven platform that converts unstructured CBPR+ address data into ISO 20022‑compliant formats. The tool is designed for banks operating in regions with fragmented postal systems, where Swift’s 2026 Standard Release will reject...