Class Takeaways — Turning Data Into a Superpower

Professor Mosen Bay, a Stanford GSB faculty member, outlines five core principles from his Business Intelligence from Big Data course, framing data mastery as a strategic superpower in today’s information‑rich environment. First, he stresses grounding decisions in diverse data rather than gut instinct, illustrating the point with a hospital VP who mistakes random fluctuations in infection rates for evidence that a protocol is working. Second, he urges hands‑on experimentation with AI APIs to reveal their capabilities and limits. Third, he emphasizes formulating the right business question before selecting a model. Fourth, he advocates layering expert human judgment on top of AI outputs to counter bias and blind spots. Finally, Bay highlights that data‑driven decision‑making thrives on cross‑functional collaboration among domain experts, engineers, and AI scientists. Key quotes include “Don’t just consume the analysis, actively shape it” and the reminder that “random noise shows up as a trend.” Together they reinforce the need to separate signal from noise and to treat AI as an augmentative tool, not a replacement for human insight. Leaders who internalize these habits can navigate uncertainty with greater clarity, accelerate proof‑of‑concept initiatives, and ensure that AI investments translate into tangible business value, positioning their organizations for competitive advantage in an increasingly AI‑driven market.
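The hospital example can be made concrete with a small simulation. The sketch below is illustrative only and is not taken from the course: the patient count, the 5% infection rate, and the 20% "improvement" threshold are all assumed values. It holds the true infection rate fixed and counts how often a before/after comparison still looks like a meaningful drop.

```python
import random


def simulated_infections(patients: int, rate: float, rng: random.Random) -> int:
    """Count infections among `patients` when each is infected with probability `rate`."""
    return sum(rng.random() < rate for _ in range(patients))


def share_of_false_improvements(trials=1000, patients=200, rate=0.05,
                                drop=0.20, seed=0) -> float:
    """Fraction of before/after comparisons that show a drop of at least `drop`
    even though the underlying rate never changes (all numbers are assumptions)."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    hits = 0
    for _ in range(trials):
        before = simulated_infections(patients, rate, rng)
        after = simulated_infections(patients, rate, rng)
        # "Looks like the protocol worked": infections fell by 20% or more.
        if before > 0 and after <= (1 - drop) * before:
            hits += 1
    return hits / trials


share = share_of_false_improvements()
print(f"{share:.0%} of no-change comparisons look like a 20%+ improvement")
```

Under these assumed numbers, a noticeable fraction of comparisons show an apparent improvement with no real change behind it, which is the trap the hospital VP falls into.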

Is AI Going to Take Over Data Engineering?

The speaker argues that AI will significantly affect data engineering but not fully replace it, using a crane-building analogy: AI is a tool that speeds work and increases productivity. While automation may reduce some roles, it can also expand capacity,...

How Vera Rubin's Insane Data Pipeline Works. And How You Can Use It

The video explains how the Vera Rubin Observatory’s massive time‑domain survey generates an unprecedented flood of alerts—millions of transient detections each night—and how those data are handed off to a network of seven data brokers. The raw images are taken in...

Why Real-World Projects Beat Online Courses

The video argues that learning through real‑world projects is far more effective than relying solely on online courses or theoretical lectures. The speaker illustrates this with a personal example: receiving a fraud alert on an American Express card makes the concepts...

Data Governance Analyst Skills 2026 | Data Governance Analyst Roadmap 2026 | #Shorts | #Simplilearn

The video spotlights the emerging role of the Data Governance Analyst in 2026, emphasizing that companies are shifting focus from traditional data analysts to professionals who can certify the reliability of massive data stores. It argues that trust in data...

Stop Learning 100 Tools—Master AWS Instead

The speaker advises job-seekers targeting higher-paying data roles to deepen expertise in one dominant cloud platform—AWS—rather than skimming many tools like Snowflake, Databricks or other clouds. They argue proficiency exists on a continuum and recommend mastering enough AWS to reliably...

Global Medical Data Infrastructure for AI Systems with MedSyntra - Life Sciences Today Podcast Ep 52

The Life Sciences Today podcast introduced MedSyntra, a Tel‑Aviv‑based startup building a global infrastructure that transforms fragmented radiology and imaging data into AI‑ready assets for research and clinical use. MedSyntra’s platform normalizes DICOM files, strips proprietary tags, and fully de‑identifies patient...

Can You Really Make $200K–$500K in Data? Students Ask the CEO

In an AMA, a CEO fielded prospects’ questions about transitioning into high-paying data and AI roles, outlining a structured program that builds technical skills, produces portfolio projects, and reshapes resumes. He emphasized leveraging existing career experience while learning data engineering/AI...

From Zero to Billion Row Analytics with Exasol Personal

The video walks through a hands‑on workshop where the presenter builds a data pipeline capable of processing one billion prescription records from the UK’s GP prescribing dataset, using Exasol Personal, a free version of the in‑memory columnar analytical database. He explains...

AI Won’t Replace Data Engineers—It’ll Multiply Them

The video argues that artificial intelligence will not eliminate data engineering roles but will function like a construction crane—speeding up the building process without replacing architects or laborers. By framing AI as a productivity tool, the speaker emphasizes that data...

How to Use AI to Work Faster as a Data Engineer

The video explains how data engineers can leverage AI tools like ChatGPT to speed up routine tasks such as writing SQL and provisioning AWS infrastructure. It emphasizes that AI should be used after mastering core skills; AI can instantly locate bugs...

Will AI Replace Data Engineers? The Real Answer

The video tackles the hot question of whether artificial intelligence will make data engineers obsolete. The speaker argues that AI will not replace these professionals; instead, it depends on them to supply clean, well‑structured data and the compute power needed...

🚨 AI That Fixes Data Pipelines By Itself? Big Tech Is Already Doing This

Big tech is rapidly embedding AI into data pipelines to detect anomalies and errors in real time and, increasingly, to automatically repair issues as data flows through systems. Advances now extend these capabilities from structured to unstructured data—like audio and...

The Job Application Funnel That Got Me $450K at Lyft

In this video, Data Engineer Academy founder Chris Garzone breaks down the job‑application funnel that helped him earn $450,000 at Lyft and shows data‑professionals how to replicate the process. He emphasizes the first step of measuring the interview conversion rate—how...

Sam Jenkins Interview | Turning Data Into Better Business Decisions

Sam Jenkins, managing partner at Punchcard Systems, discusses how organizations can turn raw data into decision‑making products that drive better business outcomes. His upcoming talk focuses on building high‑performing digital enterprises by treating data strategically, designing tools around specific decision...

Data Engineering Is Booming—Stop Being Skeptical

The video addresses the rapid growth of data engineering and challenges the skepticism many software, backend, and QA professionals feel about entering the field. The speaker argues that formal data‑engineering degrees or certificates are scarce, keeping the talent bar low. Recruiters...

Software Support to Data Engineer: $130K to $180K+

The video argues that moving from a software support position to a data‑engineering role can dramatically increase compensation, citing a typical jump from $130,000 to $180,000 or more. The speaker emphasizes the “up‑and‑over” strategy: by leveraging fifteen years of technical experience,...

Data Engineers Are #1 in Demand—Do Your Research

In a recent talk titled “Data Engineers Are #1 in Demand—Do Your Research,” the speaker urges data professionals to replace cynicism about AI with concrete market research. He argues that the prevailing belief that upskilling is unnecessary because AI will...

Meet Zo Computer: The AI That Acts Like Your Personal Cloud

Zo Computer, founded by Rob, is marketed as a personal cloud computer powered by AI that acts as an always‑on, intelligent assistant rather than a traditional user‑managed machine. The service runs on industrial‑grade cloud infrastructure but is largely operated by its...

How FINRA Is Streamlining Data Requests

FINRA’s latest initiative focuses on streamlining data requests to improve oversight while reducing the compliance burden on member firms. Under the FINRA Forward agenda, senior leaders Sam Dradi and Jay Koutros explained how the regulator is shifting from broad,...

Batch Processing Explained in 2 Minutes

Batch processing aggregates data over a defined time window before executing a single job, as illustrated by bank reconciliation and payroll cycles. In practice, batch jobs run on schedules ranging from every 15 minutes to weekly, offering predictability and cost efficiency....
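A minimal sketch of the idea, assuming a 15‑minute window and a toy list of (timestamp, amount) events; the function names and the "job" (a simple sum) are hypothetical stand‑ins for a real reconciliation or payroll step:

```python
from collections import defaultdict
from datetime import datetime, timedelta

WINDOW = timedelta(minutes=15)  # assumed batch window
EPOCH = datetime(1970, 1, 1)


def window_start(ts: datetime, window: timedelta = WINDOW) -> datetime:
    """Floor a timestamp to the start of the batch window it falls in."""
    return EPOCH + ((ts - EPOCH) // window) * window


def run_batches(events, window: timedelta = WINDOW) -> dict:
    """Collect events per window, then run one job per window (here: a total)."""
    batches = defaultdict(list)
    for ts, amount in events:
        batches[window_start(ts, window)].append(amount)
    # The job executes once per window, over everything collected in that window.
    return {start: sum(amounts) for start, amounts in sorted(batches.items())}


events = [
    (datetime(2026, 1, 5, 9, 2), 120.0),
    (datetime(2026, 1, 5, 9, 14), 30.0),
    (datetime(2026, 1, 5, 9, 16), 50.0),
]
totals = run_batches(events)
for start, total in totals.items():
    print(start.isoformat(), total)
```

The first two events land in the 09:00 window and are processed together; the third falls into the 09:15 window and is handled by the next run.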

Trustworthy Data Beats Shiny AI at CES 2026: Horizon Media's Dominic Venuto

Horizon Media is prioritizing trustworthy, transparent data over flashy AI features as it rolls out its Blue AI-native platform, enriching client data and enabling natural-language interrogation down to atomic-level records so customers can validate and act on insights. The agency...

Data Engineering Salary Questions Answered | $60K to $450K Journey

The video is an AMA session that walks prospective data‑engineering candidates through the full compensation spectrum—from entry‑level $60K salaries to senior roles that can command $450K. The host fields live questions from participants, explains how the program tailors cover letters...

6 Job Offers in 2026 for Data Engineering

The video is a podcast where host Shashank interviews Samir, a data engineer who secured six job offers in 2026 and ultimately chose a remote‑first role. Samir’s journey from an electrical‑engineering graduate with no campus placement to a senior software...

I Made $450K as a Data Engineer at Lyft—Here's My Exact Path

The video chronicles a former Boston College pre‑med student who pivoted to data engineering, ultimately earning $450K at Lyft and launching a career‑coaching side hustle. He emphasizes self‑directed learning, rapid skill acquisition, and the power of community platforms to fill...

Introduction to Amazon SageMaker Notebooks: Managed Jupyter for ML

Amazon SageMaker Notebooks, now integrated into SageMaker AI, provides a fully managed JupyterLab environment for data science and machine learning. The service removes the need for manual infrastructure setup, offering elastic compute, shared persistent storage, and AI‑assisted coding tools. Users...

AWS Compute for Data Science: EC2 Vs. SageMaker Vs. Lambda Explained

Amazon Web Services offers three primary compute options for data science: EC2, SageMaker, and Lambda. EC2 provides full control and custom environments for high‑performance computing, while SageMaker delivers an integrated platform for training, tuning, and deploying machine learning models. Lambda...

590 - From Patient Flow to Operational Efficiency: Optimising Workflows at the Enterprise Level

Talking Health Tech’s latest episode spotlights Roland’s enterprise‑level Concentric Care platform, designed to streamline patient flow and boost operational efficiency across hospitals. Executive Director Steve Gomes explains how a 25‑person engineering team in Victoria has built a middleware layer that...

Data Engineering Explained in 2 Minutes (The Most Underrated Career)

The video demystifies data engineering, describing it as the hidden foundation that powers every modern tech function. Using a house‑building analogy, the speaker likens data engineers to the electricians and plumbers who lay the wiring and pipes before designers add...

Why Soft Skills + PySpark Can Land You Lead Data Engineer Roles

The video highlights how a blend of soft skills and PySpark proficiency can catapult engineers into lead data‑engineer positions, especially in the Bay Area’s startup ecosystem. The speaker recounts a chance encounter with a medical‑device startup founder that led to...

MLflow Leading Open Source

Databricks’ leaders Corey Zumar, Jules Damji, and Danny Chiao discussed the latest evolution of MLflow on the MLOps Podcast. The open‑source platform is being rebuilt to handle generative AI, agent workloads, and production‑grade governance, moving beyond its original data‑science‑only focus....

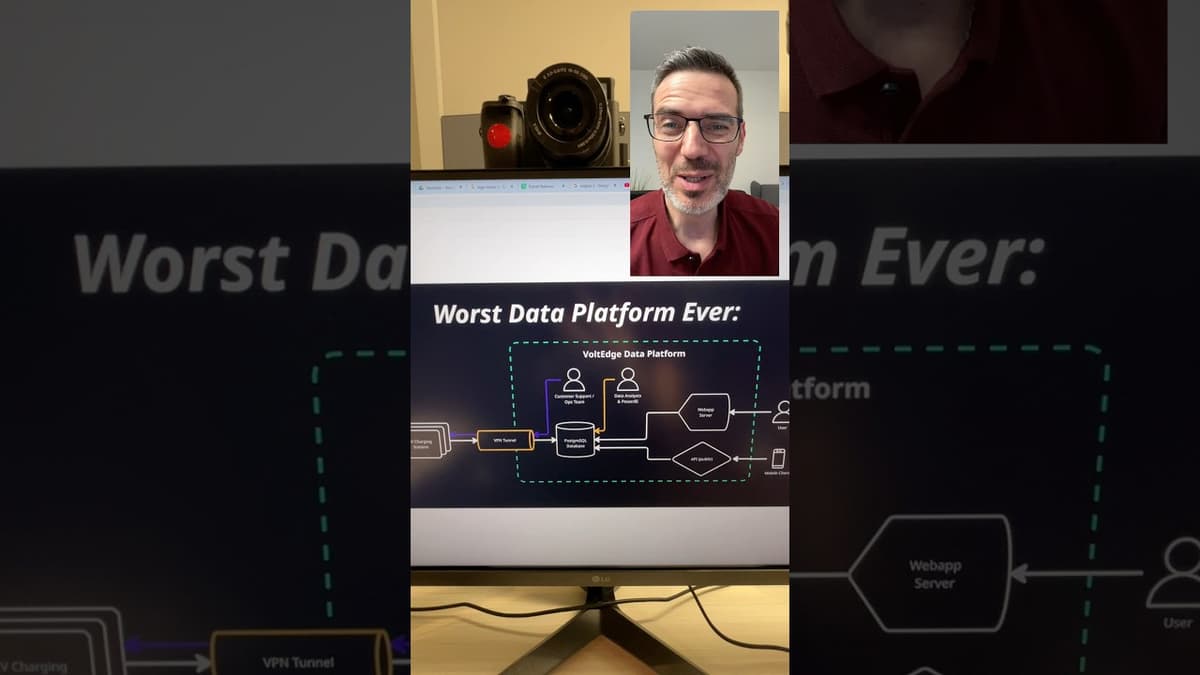

The Worst Data Platform Ever?

The video spotlights a deliberately terrible data architecture built around electric‑vehicle charging stations, using a single PostgreSQL instance as the backbone for every function—from charger telemetry to customer‑facing applications. By routing each charger through a VPN tunnel straight to the central...

How Pure Storage Powers Real-Time Telco AI

The video spotlights Pure Storage’s push at MWC 26 to become the go‑to data platform for telcos entering the AI‑driven cloud era. By positioning its Pure Data Stream solution as “telco‑grade” infrastructure, Pure aims to give carriers a unified layer that...

TOP 10 AI Tools For Serious Data Engineer | 10x Growth | High Paying Salary | Real Example

The video, presented by a veteran data engineer, outlines ten AI‑powered utilities that promise to turn ordinary data engineers into “10x engineers” by automating coding, debugging, and design tasks while also promoting a new AWS‑focused bootcamp. The presenter walks through each...

Stop Blaming the Market: Land Your Data Job Now

The video challenges the common excuse that a "bad" or "good" job market dictates when to pursue a data career. Instead, it argues that market conditions are perpetually shifting, and candidates should focus on actions within their control—namely, skill acquisition...

Data Engineering Is Undervalued

Data engineering is often the most overlooked discipline, yet it provides the essential infrastructure that powers analytics, AI, and business intelligence. By constructing reliable extraction, transformation, and loading pipelines, engineers turn raw inputs into clean, query‑ready datasets. This foundational work...

18 Years Experience & Only Making $160K in Data (Live AMA with CEO of Data Engineer Academy)

The live AMA hosted by the CEO of Data Engineer Academy centered on a senior data professional who, after 18 years in the field, was earning only $160,000. Participants asked how to break through the compensation ceiling and transition into...

Can SAP HANA Hold Up To Scrutiny? | CIO Talk Network

The CIO Talk Network episode examines whether SAP HANA can withstand rigorous scrutiny, featuring SAP’s VP of product strategy Jeff W. and HP’s CTO Chris Ninwit. They frame HANA as a purpose‑built, in‑memory platform that unifies transactional (OLTP) and analytical...

10 System Design Interview Tips That Got Me to $500K

The video, presented by Chris Garzone, founder of Data Engineer Academy, outlines a systematic approach to acing system‑design interviews for data‑engineering roles. Garzone shares tips drawn from his "System Design Cheat Code" book, emphasizing how candidates can move from a...

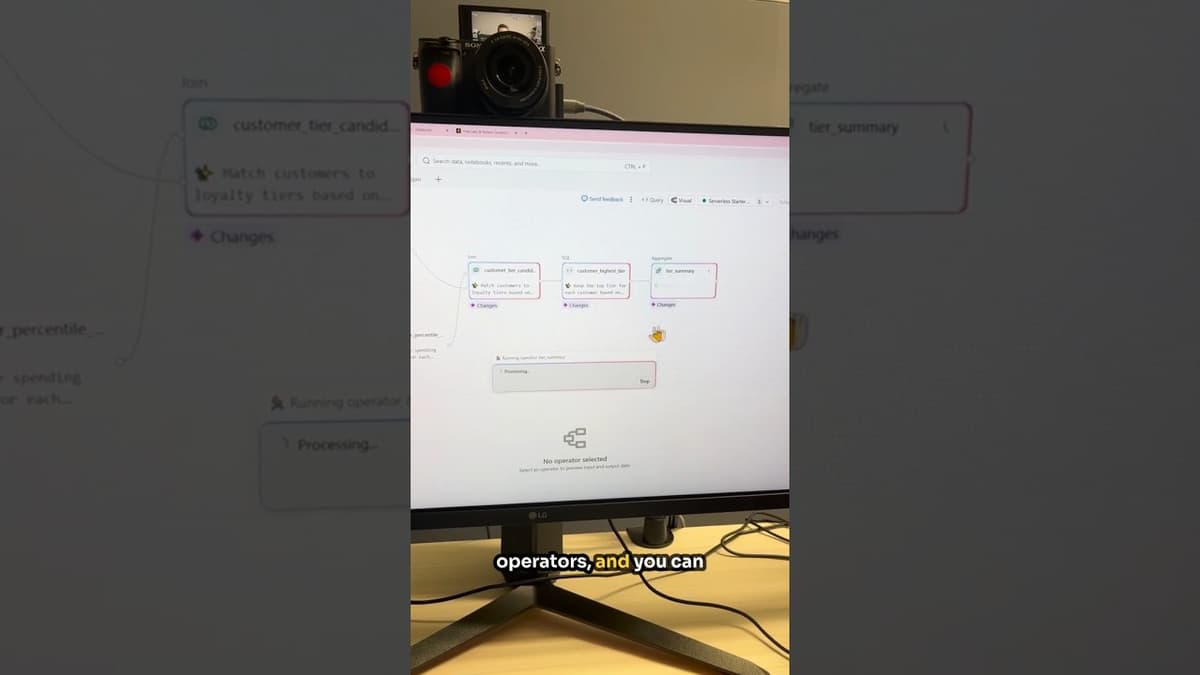

Databricks Keeps Raising the Bar With This New Feature!

Databricks unveiled its LakeFlow pipeline editor, a visual tool that lets users design, inspect, and run data pipelines without writing extensive SQL code. The feature integrates a graph‑based view of each pipeline step and a preview pane that shows sample...

ETL Explained in 2 Minutes

The video “ETL Explained in 2 Minutes” breaks down the extract‑transform‑load process using a food‑factory analogy, illustrating how raw data from disparate sources must be cleaned before reaching a warehouse. It outlines the three stages: extraction from transactional databases, APIs or...
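The three stages can be sketched in a few lines. This is an illustrative example rather than anything shown in the video: the source rows, the cleaning rules, and the `orders` table are all made up, and an in‑memory SQLite database stands in for the warehouse.

```python
import sqlite3


def extract():
    """Stand-in for pulling rows from a transactional database or an API."""
    return [
        {"id": "1", "name": " Alice ", "amount": "10.50"},
        {"id": "2", "name": "BOB", "amount": "n/a"},  # unparseable amount
        {"id": "3", "name": "carol", "amount": "7"},
    ]


def transform(rows):
    """Clean the raw rows: fix types, tidy names, drop rows that cannot be parsed."""
    clean = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # skip rows with a bad amount
        clean.append((int(row["id"]), row["name"].strip().title(), amount))
    return clean


def load(rows, conn):
    """Write the cleaned rows into the (stand-in) warehouse table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract()), conn)
loaded = conn.execute("SELECT id, name, amount FROM orders ORDER BY id").fetchall()
print(loaded)
```

Only rows that survive the transform step reach the table, which mirrors the point that raw data must be cleaned before it arrives in the warehouse.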

For AI in Ads to Work, IAB Says Fix the ‘Garbage In’ Problem First

The Interactive Advertising Bureau (IAB) announced that its new Project Eidos will tackle the “garbage‑in” problem that is limiting AI‑driven advertising measurement. The initiative comes as the ecosystem wrestles with fragmented data sources, privacy‑driven signal loss, and an expanding mix of...

Manifest Vegas | Dan Keto, Easy Metrics, on Warehouse Performance Management

At Manifest Vegas, Easy Metrics co‑founder Dan Keto explained how distribution centers are moving from simple labor tracking to a holistic, engineering‑driven Warehouse Performance Management model. He emphasized that the proliferation of robotics and siloed software creates a fragmented data...

Online Bootcamps vs Data Engineering Course - Which One Gets You Hired? (Live Q&A)

The video is an Ask‑Me‑Anything session where a data‑career coach fields live questions from professionals eyeing higher‑paid roles in data engineering, product management, or technical program management. Participants range from senior clinical data programmers to administrative service managers, each seeking...

FAA, DOD Data Silos Were Partly to Blame for Last Year’s DCA Crash

Federal investigators concluded last year’s DCA mid-air collision was preventable, citing a history of ignored safety recommendations—notably wider adoption of ADS‑B In—and a poor safety culture within air traffic operations that suppressed employee reporting. The probe found many staff were...

From APIs to Warehouses: AI-Assisted Data Ingestion with Dlt - Aashish Nair

The workshop, led by Aashish Nair of dltHub, introduced an AI‑assisted approach to ingesting data from public APIs into analytical warehouses using the open‑source dlt Python library. Over a 90‑minute session, participants saw how dlt abstracts the typical ETL...

Stop Chasing Titles—Maximize Your Data Salary Instead

The video urges engineers to stop obsessing over titles and instead invest in soft‑skill development that drives business value. It argues that while technical prowess gets candidates through early screening, the interview’s most anxiety‑inducing stage—soft‑skill assessment—filters out the majority of...

Why Legal AI Fails without Trusted Data: Key Takeaways From Our Denodo Webinar

The Denodo webinar, hosted by Legal IT Insider’s Caroline Hill, examined why legal‑focused artificial intelligence projects frequently stall despite sophisticated tools. Speakers argued that the root cause is not algorithmic weakness but the inability of law firms to rely on...

Bain: Telcos Have a Data Goldmine

Bain & Company highlighted that telecom operators sit on a massive, under‑exploited data trove, ranging from event attendance to travel routes. One carrier piloted a program that anonymized this information, satisfying strict regulator requirements while delivering personalized discount offers. The...

Will AI Replace Data Engineers? Here’s the Truth

The video tackles the hot question of whether artificial intelligence will supplant data engineers, concluding that AI is a powerful augmenting tool rather than a job‑killer. Using a crane analogy, the speaker illustrates how new technology speeds construction without eliminating...