Know What's Happening in Big Data

Immuta launches Agentic Data Access module for AI agents

Immuta unveiled an Agentic Data Access module that enables autonomous AI agents to retrieve enterprise data in real time while enforcing governance policies. The system treats agents as first‑class data users, applying least‑access and zero standing privileges with full audit trails. Built on Immuta’s policy engine, it adds registration, guardrail policies and multi‑approval controls.

Databricks has overtaken Snowflake in quarterly revenue, now leading by $120 million after a $220 million gap two years ago. The shift is driven by AI’s demand for unstructured data, which Databricks processes directly from object storage without migration. Databricks SQL grew from $100 million to $1 billion in under three years, while AI‑focused products accelerate even faster. Snowflake is responding with its Intelligence suite and partnerships with OpenAI and Anthropic, but analysts project Databricks will reach $8.9 billion by FY27 versus Snowflake’s $5.7 billion.

Stop Writing UNION ALL for Multi-Level Aggregations. You need regional totals AND product totals AND grand totals. So you write three separate queries with UNION ALL. There's a better way: GROUPING SETS. UNION ALL: scans the table 3 times. Slow. GROUPING SETS: One...
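A rough sketch of the idea, assuming a hypothetical `sales` table with `region`, `product`, and `amount` columns (none of these names come from the post). The SQL string uses standard `GROUPING SETS` syntax and is shown for reference only; the Python function mimics its single-pass behavior on in-memory rows so the example runs without a database.

```python
from collections import defaultdict

# Reference query (not executed here); table and column names are hypothetical.
GROUPING_SETS_SQL = """
SELECT region, product, SUM(amount) AS total
FROM sales
GROUP BY GROUPING SETS ((region), (product), ());
"""


def grouping_sets_totals(rows):
    """Compute regional, product, and grand totals in one pass over rows.

    Each row is a (region, product, amount) tuple. Result keys mirror the SQL
    output: a column that is not part of a grouping set is None.
    """
    totals = defaultdict(int)
    for region, product, amount in rows:
        totals[(region, None)] += amount   # grouping set (region)
        totals[(None, product)] += amount  # grouping set (product)
        totals[(None, None)] += amount     # grouping set (), the grand total
    return dict(totals)


# Illustrative data only.
sales = [
    ("East", "Widget", 100),
    ("East", "Gadget", 50),
    ("West", "Widget", 70),
]
result = grouping_sets_totals(sales)
```

The point of the sketch is that every input row is read once and contributes to all three aggregation levels, whereas the UNION ALL approach reads the table once per level.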

Statewide longitudinal data systems (SLDS) can boost education and workforce outcomes, but designs vary based on intended functions—public reporting, research analytics, and individual support. The brief by Stefaan Verhulst explains how policymakers can align system architecture, governance, and legal frameworks...

As I was building my MRR analysis feature, I realized that there is much more power in our MRR schedule than we realize. With the correct metadata, we have a revenue intelligence engine that will provide more insight for our...

Folks asked me "what's your plan for gwenchmarks"? At first, it was a joke. But... teaching people how to plan, execute and read benchmarks is a good goal. So I wrote The Gwenchmarks Manifesto as a start. Still a bit...

Databricks launched Zerobus Ingest, a fully managed serverless streaming service that moves data directly into Delta Lake tables. The platform streams data from sources such as manufacturing systems, financial trading apps, IoT devices, and cybersecurity tools. It promises sub‑five‑second latency,...

Entity resolution, knowledge graphs, and geospatial analytics together dismantle hidden fraud networks across government programs and insurance lines. By linking fragmented records—tax filings, social media, transaction logs—into unified 360‑degree profiles, investigators can spot duplicate registrations, synthetic identities, and collusive entities....

There's a moment in every data engineer's career when they discover they can query a 10GB Parquet file on their laptop in seconds. That's the DuckDB moment. It changes how you think about what requires a cluster and what doesn't. Spoiler: most...

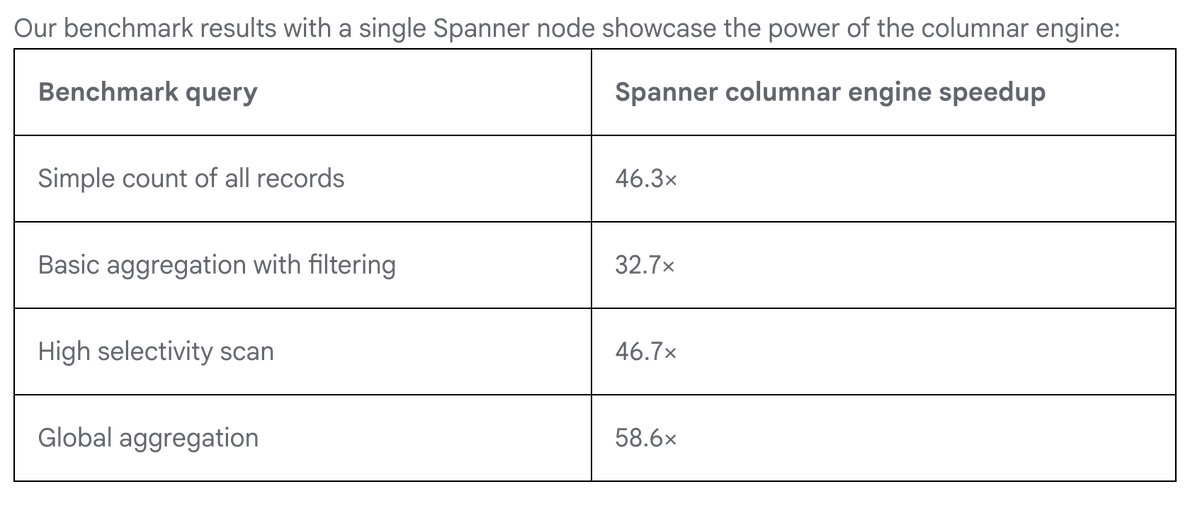

"The columnar engine uses a specialized storage mechanism designed to accelerate analytical queries by speeding up scans up to 200 times on live operational data" The new @googlecloud Spanner capability means you can serve Iceberg lakehouse data ... https://t.co/dxmgEAI0cA https://t.co/TUe0vNnzfN

QBO Cloud and MinIO announced a joint solution that merges QBO’s bare‑metal cloud platform with MinIO’s AIStor, an exascale, S3‑compatible object storage system. The partnership delivers a unified, high‑performance data layer designed for modern AI and analytics workloads, emphasizing scalability,...

A strong data strategy is more than storage. It's context, quality, and governance. The “useless” data may hold insights GenAI needs, but without curation, access controls, and trust, innovation risks becoming noise instead of value. https://t.co/ParkENiwRg

The article outlines how Azure Databricks and Azure Machine Learning can be tightly integrated to create a unified intelligence pipeline. Databricks handles large‑scale data ingestion, cleaning, and feature engineering using Spark and Delta Lake, while Azure ML supplies model versioning,...

Legal technology leaders are hosting a March 18 webinar to dissect "data readiness" as the decisive factor for AI success in the legal sector by 2026. They argue that fragmented repositories, inconsistent metadata, and weak governance are the primary obstacles...

At last year’s CIO Summit in Mumbai, senior leaders from banking, fintech, telecom and manufacturing debated the growing risk profile of open‑source databases, with PostgreSQL emerging as the focal point. The conversation has moved from pure performance to trust, encompassing...

Emerald Intelligence has introduced Embedded Analytics, a new SaaS feature that provides real‑time, macro‑level dashboards for the licensed cannabis and hemp market. The initial release includes four interactive dashboards covering state sales, company leaderboards, product sales, and store status, all...

Accurate B2B data appending is a strategic lever that drives sales and marketing performance. Companies that rely on internal teams often face technical, resource, and compliance hurdles, leading to stale or incomplete records. Partnering with specialized data‑append providers delivers fresh,...

Companies face mounting sustainability regulations and consumer scrutiny, yet their legacy supply‑chain systems hold fragmented, inconsistent product data. The article outlines five actions—gaining product visibility, feeding tools with clean inputs, extending traceability beyond distribution, building compliance‑ready data infrastructure, and treating...

Germany’s Mobility Data Space (MDS) and the pan‑European Data for Road Safety (DFRS) consortium have signed an agreement to exchange safety‑related traffic data from connected vehicles across the EU. The partnership enables near‑real‑time sharing of sensor‑derived incident information, supporting the...

The article argues that AI readiness starts with robust SQL Server schema design, not with machine‑learning models. It highlights that stable, non‑recycled primary keys, preserved historical records, and clear audit columns are essential for future feature engineering. By separating raw...

PostgreSQL’s query planner relies on catalog statistics from pg_class and pg_statistic to estimate costs. When these statistics become stale (due to bulk loads, schema changes, or insufficient vacuum), the planner can choose inefficient plans, turning millisecond queries into minute-long ones. The article explains...

Shopify’s 2026 guide outlines what makes cloud analytics truly modern, emphasizing a cloud‑native stack that couples elastic compute with a semantic layer, governed self‑serve, and built‑in FinOps. It contrasts legacy on‑prem and “cloud‑washed” BI with a fully modular architecture that...

A recent benchmark shows that standard Python UDFs in PySpark dramatically slow pipelines because each row must be serialized to a Python worker. Using Pandas (vectorized) UDFs cuts execution time by roughly fourfold by leveraging Apache Arrow’s columnar transfer. Native...

Instead of loading CSVs into pandas just to run one query, you can use DuckDB to run SQL directly on files. No loading. No waiting. Just query the file and get results. It’s also 20x faster and uses way less memory. Here’s how...

The article presents five open‑source Python scripts that automate common data‑quality checks, ranging from missing‑value analysis to cross‑field consistency validation. Each tool reads CSV, Excel or JSON inputs, applies schema‑driven rules or statistical methods, and generates detailed reports with actionable...
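As an illustration of the simplest check in that range, here is a minimal missing-value report for CSV input using only the standard library. It is not one of the article's scripts; the function name, the set of tokens treated as missing, and the sample data are all assumptions made for this sketch.

```python
import csv
import io


def missing_value_report(csv_text, missing_tokens=("", "NA", "null")):
    """Return {column: fraction of rows whose value counts as missing}."""
    reader = csv.DictReader(io.StringIO(csv_text))
    counts = {name: 0 for name in (reader.fieldnames or [])}
    total = 0
    for row in reader:
        total += 1
        for name in counts:
            value = row[name]
            # DictReader yields None for cells absent from a short row.
            if value is None or value.strip() in missing_tokens:
                counts[name] += 1
    return {name: (counts[name] / total if total else 0.0) for name in counts}


# Illustrative data only.
sample = (
    "id,email,age\n"
    "1,a@example.com,34\n"
    "2,,NA\n"
    "3,c@example.com,\n"
    "4,d@example.com,29\n"
)
report = missing_value_report(sample)
```

A report like this is usually the first step before stricter schema or cross-field rules, since columns with a high missing fraction often need a decision (drop, impute, or fix upstream) before other checks are meaningful.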

The Dunkirk Port Authority has opened a 21‑hectare brownfield site for a potential AI‑focused data center, offering developers a power connection ranging from 400 MW to 700 MW. Power will be supplied by RTE from the nearby Flanders Maritimes substation, with a...

An upcoming research initiative will evaluate digital‑twin technology for data centers, aiming to identify high‑ROI use cases that surpass basic spreadsheet analysis. The study will assess available solutions, pinpoint scenarios—such as infrastructure vendor selection—that deliver quick, measurable value, and define...

In this episode, Luke Flemmer, head of private assets at MSCI, explains how standardizing and normalizing data can unlock transparency, price formation, and liquidity in private markets, drawing parallels to past evolutions in bonds, FX, and equities. He argues that...

Bindplane announced native destinations for the VictoriaMetrics ecosystem, allowing users to route OpenTelemetry metrics, traces, and logs directly to VictoriaMetrics, VictoriaTraces, and VictoriaLogs. The integration provides vendor‑neutral, OpenTelemetry‑native pipelines that eliminate manual exporter configuration and mitigate collector drift. It also...

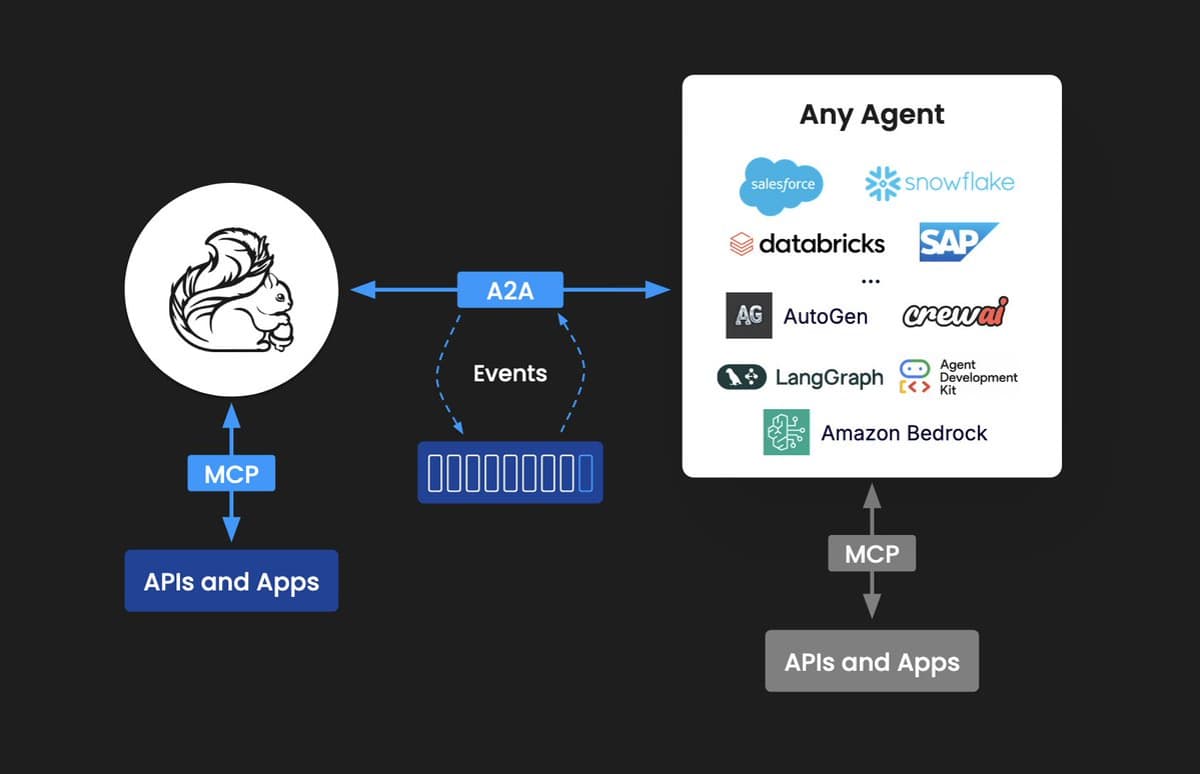

Confluent Intelligence has introduced Streaming Agents, built on Google’s Agent2Agent protocol, to enable AI agents to share real‑time context and collaborate across platforms. The preview feature connects data sources such as BigQuery, Databricks, Snowflake and LangChain to third‑party systems like...

ClickHouse is trying to push Postgres + ClickHouse as the ultimate analytics DB stack. But tbh adding an eventually consistent database to your stack that you need to sync to is anything but trivial. Love the product but I'd just use...

Tomas Vondra revisits PostgreSQL's long‑standing default of random_page_cost = 4.0, showing that modern SSDs make random I/O far more expensive than the parameter suggests. By timing sequential and index scans on a 4.4 GB table, he derives a random_page_cost of roughly 25‑35 on...

Snowflake prints 30% revenue growth and record bookings momentum. $SNOW delivered Q4 revenue of $1.28B (+30% YoY) with product revenue at $1.23B (+30%) and EPS of $0.32, beating estimates by 23%. Growth is re-accelerating at scale. RPO climbed to $9.77B (+42%), billings...

Security firm Truffle Security revealed that publicly exposed Google API keys can be upgraded to full‑access Gemini credentials, enabling data exfiltration from any organization using them. A November scan uncovered 2,863 such keys, affecting major banks, security vendors, and even...

An AI proof of concept (POC) is a focused, short‑term project that validates technical feasibility and business value before full‑scale investment. Costs vary widely, driven primarily by data readiness, problem complexity, integration needs, and infrastructure choices, with data preparation often...

Data Product: why do we need this data? Data Asset: what is this data and how do we maintain it? I've found the most practical definition of data products comes from Dagster's software-defined assets. Each asset has a clear definition, dependencies, and...

SONAR is having the best quarter in years. One of my board members asked if I were concerned about the rise of AI and if it would be a drain on our data business. I told her that we are seeing...

The tutorial walks through building an elastic vector‑database simulator that uses consistent hashing with virtual nodes to shard embeddings across distributed storage. It includes a live, interactive ring visualization that shows how adding or removing nodes only reshuffles a tiny...
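The core mechanism can be sketched in a few lines. This is a generic consistent-hash ring with virtual nodes, not the tutorial's own code; the class name, the default of 100 virtual nodes, the SHA-256 hash, and the node and key names are all assumptions chosen for illustration.

```python
import bisect
import hashlib


class HashRing:
    """Consistent-hash ring with virtual nodes (illustrative sketch)."""

    def __init__(self, vnodes=100):
        self.vnodes = vnodes
        self._points = []  # sorted hash positions on the ring
        self._owner = {}   # hash position -> physical node name

    @staticmethod
    def _hash(key):
        # Any stable, well-distributed hash works; SHA-256 is an arbitrary choice.
        return int(hashlib.sha256(key.encode("utf-8")).hexdigest(), 16)

    def add_node(self, node):
        # Place `vnodes` points for this physical node around the ring.
        for i in range(self.vnodes):
            point = self._hash(f"{node}#{i}")
            self._owner[point] = node
            bisect.insort(self._points, point)

    def remove_node(self, node):
        for i in range(self.vnodes):
            point = self._hash(f"{node}#{i}")
            del self._owner[point]
            self._points.remove(point)

    def get_node(self, key):
        # First virtual node clockwise from the key's position, wrapping around.
        idx = bisect.bisect_right(self._points, self._hash(key)) % len(self._points)
        return self._owner[self._points[idx]]


ring = HashRing()
for name in ("node-a", "node-b", "node-c"):
    ring.add_node(name)

keys = [f"embedding-{i}" for i in range(1000)]
before = {k: ring.get_node(k) for k in keys}

ring.add_node("node-d")
after = {k: ring.get_node(k) for k in keys}
moved = [k for k in keys if before[k] != after[k]]
```

In this sketch, every key in `moved` lands on the newly added node, and keys owned by the other nodes stay where they were. Virtual nodes spread each physical node's share around the ring so the load taken by a new node comes from all existing nodes rather than a single neighbor.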

Agent standards like MCP and A2A are starting to show up in more types of packaged software. @confluentinc just shipped updates to their data products, including their "intelligence" platform that now supports A2A and MCP integrations. https://t.co/n8teMonFMW https://t.co/1f7afnSw8K

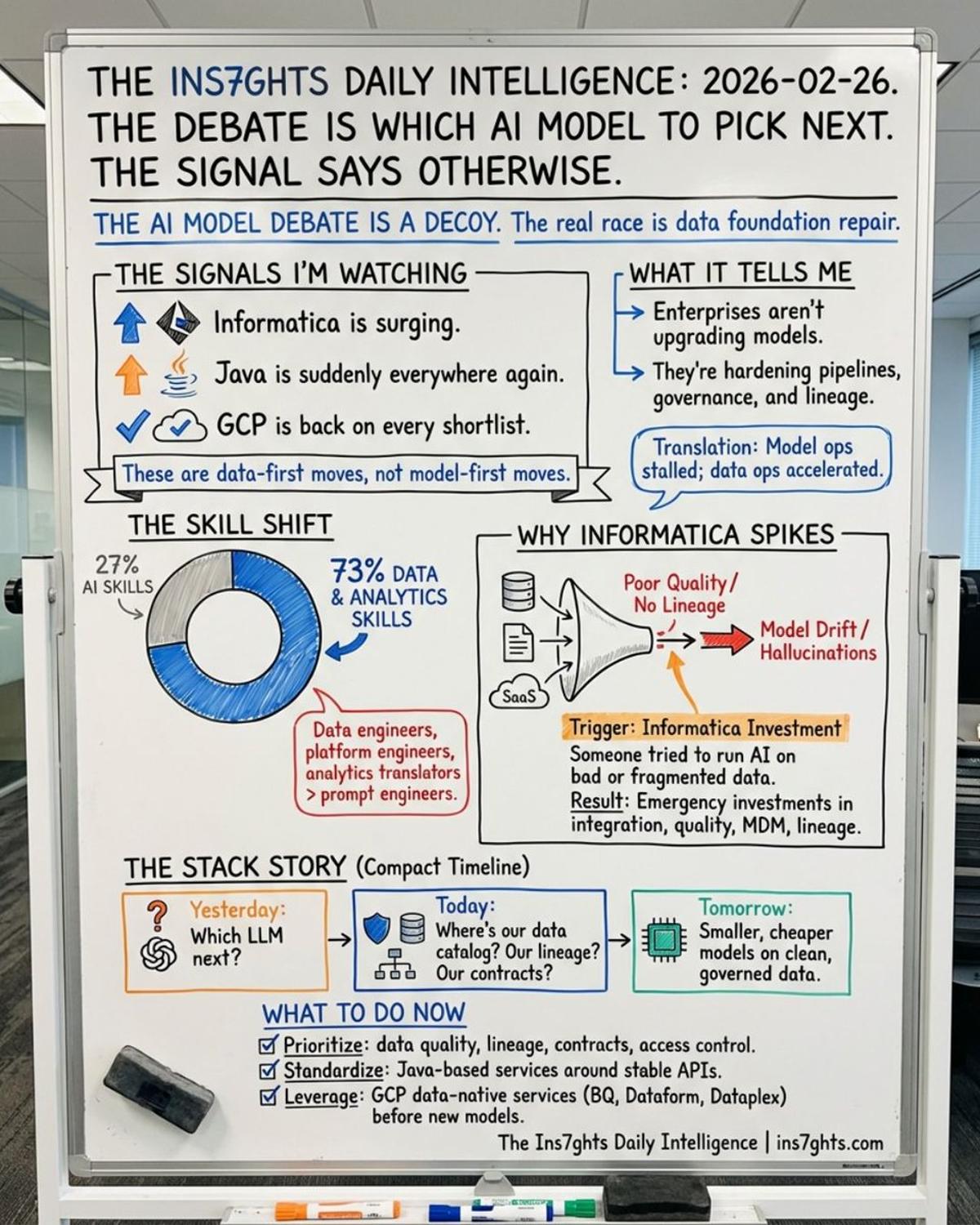

73% of companies are investing more in data and analytics skills right now. Not AI skills. Data skills. Your AI returns depend entirely on your data foundation. The benchmark comparison doesn't change that.

Vast Data and Nvidia have launched the CNode‑X, a GPU‑powered server that embeds the Vast Data AI Operating System directly onto Nvidia hardware. The integrated solution is optimized for AI pipelines, high‑performance analytics, vector search, retrieval‑augmented generation and agentic workloads....

The debate: which AI model to bet on. What I'm watching instead: Informatica surging. Java everywhere. GCP back on the shortlist. 73% of companies investing more in data skills. Not AI skills. Your AI strategy is only as strong as the data layer...

Recently I caught up with Amiet Dhagat, Head of Data Services Analytics and AI for HCF, to get the inside story around their stellar success in transitioning from static data to dynamic data, and why real-time data has been so...

The United States and the European Union are negotiating the Enhanced Border Security Partnership (EBSP), which would grant visa‑free travel to EU citizens in exchange for access to European biometric databases. The latest draft does not explicitly prohibit the use...

Slack will be the Waterloo of open vs closed data. Someone is going to make a Slack clone where you get unfettered access to your own data, and people really will switch en masse.

Why are hyperscalers racing to offer managed Iceberg? Because whoever controls the catalog controls the ecosystem. If your tables are in a managed Iceberg service, you can query them from any engine - Spark, Trino, DuckDB, whatever. But your metadata stays with...

Percona released Operator for MongoDB version 1.22.0, adding automatic Persistent Volume Claim resizing, HashiCorp Vault integration for system user credentials, and native service‑mesh compatibility via the appProtocol field. The update also expands backup and restore capabilities, including replica‑set name remapping,...

Are we still using table catalogs for open table formats? Haven't heard too much lately. I like OTFs, but making it non-optional to have a catalog isn't great. That's why I prefer the option to use one without. But if you...

High-level policies aren't enough. It's time for audits, training, DSPM, and privacy-by-design in AI workflows. If privacy isn't built into how data moves, you're hoping - not leading. #DataGovernance #AI #CIO @Star_CIO https://t.co/Naq82FuMWZ

Why we're excited about Lakebase GA: most database services are based on outdated assumptions leading to poor operability, scalability and devex.

Every data conversation I tracked this week led back to the same concept. Data Governance. Not as compliance. As the center of gravity. 47 data sources. 6 different owners. 3 definitions of "customer." Your AI agent has no idea who to believe. https://t.co/ZrW6iptSqs