OpenPOIs

OpenPOIs is an open‑source toolkit that aggregates and conflates Points of Interest (POIs) across major U.S. geospatial datasets. It pulls current POI snapshots from OpenStreetMap and Overture Maps, merging them into a single unified dataset. Each POI receives a confidence score that reflects the probability of its existence based on both sources. A web‑based map lets users explore and compare the original datasets side by side.

Sensitive Data as Venn Diagram

Healthcare data is split into "Normal" (non‑sensitive) and "Restricted" (sensitive) categories. Sensitive records receive specific sensitivity codes in the FHIR Resource.meta.security tag, creating a Venn diagram of overlapping topics such as Sexual Health, Mental Health, and Substance Use. The tags...

NAB Show 2026: Hydrolix Named “Data Observability Solution Provider of the Year” In 2026 Data Breakthrough Awards

Hydrolix was named Data Observability Solution Provider of the Year in the 2026 Data Breakthrough Awards, marking its third straight win. The award follows previous honors for cloud data warehousing and observability innovation, underscoring the platform’s real‑time visibility at petabyte...

Insurers Need Real-Time Data Capabilities

Insurers no longer struggle to collect data; the challenge now is acting on it before it becomes stale. Legacy batch‑processing systems and entrenched data silos create 24‑hour delays that expose insurers to fraud and inefficiencies. The article outlines a five‑step roadmap, prioritizing...

NOODL. An Experiment in Equitable Data Licensing: Promise and Limits

The Nwulite Obodo Open Data License (NOODL) is a tiered licensing model designed for African language datasets, aiming to close the equity gap between researchers in the Global South and multinational firms. Built on Creative Commons foundations, it grants permissive...

The $22K Neural Search Pipeline That Was Silently 7 Days Behind [Edition #6]

Briefly.ly, a Series B newsletter aggregator with 5.2 M daily users, runs a two‑tower neural retrieval system costing about $22.6 K per month. The pipeline trains on a six‑month static snapshot and refreshes its FAISS index only once a week, leading to...

Your Data Platform Costs More Than It Should

A Snowflake migration revealed unexpectedly high cloud spend, prompting a deep dive into data platform economics. The author demonstrates how simple SQL queries can surface the most credit‑hungry warehouses and queries, exposing idle compute and full‑table scans. By adjusting auto‑suspend...

How I Solved for Data Validation with AI

During a company hack week, an analytics engineering team tackled the persistent problem of validating data changes introduced by AI‑driven code refactoring. Using Claude Code, they built an AI skill that automatically opens a GitHub pull request and launches a...

Proxy Governance for Alternative Data: A Practical Playbook for Funds

The HedgeThink playbook outlines how funds can harvest alternative data through proxy‑enabled web scraping while meeting investor due‑diligence and regulator expectations. It urges teams to start with a narrow, documented use case, verify site terms, and map GDPR obligations—where a...

Lean Manufacturers: You’ve Implemented Dynamics 365 F&SCM, Now Unlock Its Full Value with a Fabric Lakehouse

Lean manufacturers using Dynamics 365 Finance and Supply Chain Management can now amplify their data capabilities with Microsoft Fabric lakehouse. The lakehouse consolidates ERP transactional data with shop‑floor signals, quality metrics, and operational feeds into a single, clean data environment....

Code Crunch Japan 2025: Redefining the Quantitative Workflow Through Human-AI Collaboration

On October 9, 2025, seven of Japan’s top financial institutions showcased their AI‑enhanced quantitative workflows at Code Crunch Japan, using Bloomberg’s BQuant Enterprise platform. The demo highlighted three proprietary applications: a multi‑agent system that fuses internal data with Bloomberg feeds and automates...

Mastercard International Assigned Patent

Mastercard International has been assigned U.S. Patent No. 12,596,828 for a "method and system for sovereign data storage." The invention, developed by a team of Irish researchers, outlines a computer‑implemented process that authenticates write requests, determines regulatory domains, and enforces...

Data Authenticity & Accountability Crucial in the AI Age

Data authenticity has become a cornerstone of AI deployment as deepfake and synthetic‑data threats rise, exposing firms to fraud, litigation and reputational damage. The EU’s new digital omnibus aims to streamline AI, cybersecurity and data rules, promising roughly $6 billion in...

Day 158: User Behavior Analytics - Catching the Insider Threat

The post outlines building a User Behavior Analytics (UBA) system that learns normal employee activity and flags anomalies in real time. By establishing a behavioral baseline, the solution can spot insider threats such as off‑hours server access or sudden data‑exfiltration...

How to Scrape JavaScript-Heavy Websites for LLM Pipelines with Cloudflare Browser Rendering

Modern LLM pipelines struggle with JavaScript‑heavy sites because traditional scrapers only capture the initial HTML, missing hydrated content. Cloudflare’s Browser Rendering (now called Browser Run) runs headless Chrome on the edge and offers two layers: Quick Actions for single‑request rendered...

$220K Lost to a Fraud Model That Passed a 0.82 Accuracy Check [Edition #5]

FinFlow AI, a Series B fintech processing 15 million daily transactions, lost $220,000 after a schema change rendered the merchant_zip feature null. The XGBoost fraud model still met its 0.82 accuracy threshold, so the corrupted data went undetected and fraud capture...

Day 52: Implement a Simple Inverted Index for Log Searching

The post walks through building a real‑time inverted index for log data, ingesting messages from Kafka, tokenizing them, and persisting the index in Redis for hot lookups and PostgreSQL for cold storage. It adds a search API that ranks results...
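The core idea of an inverted index can be sketched in a few lines. This is a minimal in-memory illustration, not the post's implementation: it leaves out Kafka, Redis, and PostgreSQL entirely. The `InvertedIndex` class, the `tokenize` helper, and the ranking rule (count of matching query tokens) are all assumptions made for the example.

```python
import re
from collections import defaultdict

TOKEN_RE = re.compile(r"[a-z0-9]+")


def tokenize(message):
    """Lowercase a log message and split it into alphanumeric tokens."""
    return TOKEN_RE.findall(message.lower())


class InvertedIndex:
    """Hypothetical in-memory index: token -> set of log ids."""

    def __init__(self):
        self.postings = defaultdict(set)
        self.logs = {}

    def add(self, log_id, message):
        # Store the raw message and register each token's posting.
        self.logs[log_id] = message
        for token in tokenize(message):
            self.postings[token].add(log_id)

    def search(self, query):
        """Return log ids ranked by how many distinct query tokens they contain."""
        hits = defaultdict(int)
        for token in set(tokenize(query)):
            for log_id in self.postings.get(token, ()):
                hits[log_id] += 1
        # Highest match count first; ties broken by log id (assumed rule).
        return sorted(hits, key=lambda log_id: (-hits[log_id], log_id))


idx = InvertedIndex()
idx.add(1, "ERROR payment timeout")
idx.add(2, "INFO payment accepted")
idx.add(3, "ERROR disk full")
print(idx.search("payment error"))
```

A lookup touches only the postings for the query tokens, which is why the structure suits hot-path log search.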

Why Your Pipeline Finishes Later Every Month

Data pipelines increasingly finish later each month, a phenomenon the author calls “shifting right.” A junior engineer’s daily timestamps revealed a steady drift from 5:47 AM to 7:23 AM, threatening a 9 AM SLA. The article explains why slow‑down is harder to detect...

The Rise of Experimental Data Lakes

Experimental data lakes are emerging as a new scientific data foundation, capturing raw instrument output together with full experimental context. They differ from traditional enterprise lakes by handling messy, high‑volume data and preserving metadata for reuse. The shift is driven...

Understanding Data Ownership Is Key Before Hotel Budget Season

Hotel operators are increasingly focused on data ownership as they approach the annual budget cycle. The article highlights that while software upgrades are routine, the ability to export, migrate, and control historic data can become costly and time‑consuming. It stresses...

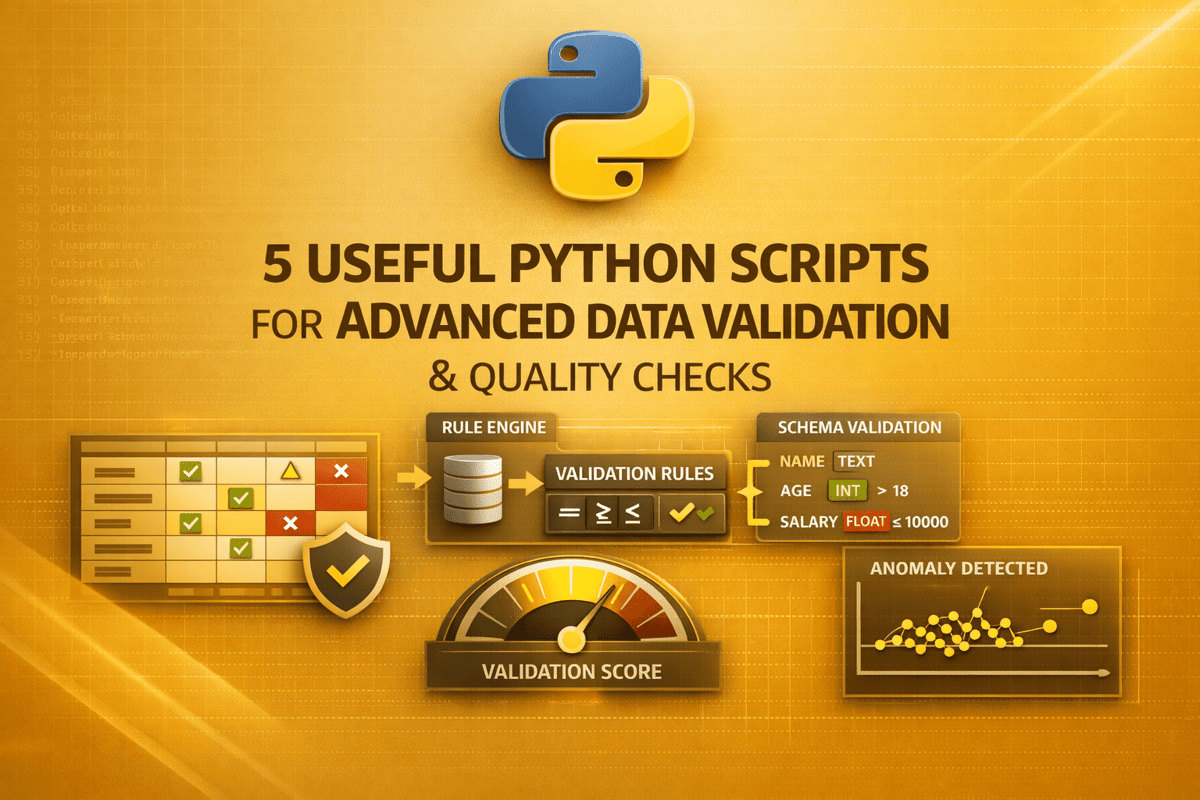

5 Useful Python Scripts for Advanced Data Validation & Quality Checks

The article presents five open‑source Python scripts that tackle advanced data‑validation challenges beyond basic null or duplicate checks. Each script focuses on a specific pain point—time‑series continuity, semantic business‑rule enforcement, data drift and schema evolution, hierarchical graph integrity, and cross‑table...

Automate Data Management for Enterprise Commerce (2026) – Shopify

Shopify’s 2026 guide explains how automated data management can streamline the entire data lifecycle for enterprise commerce, from ingestion to analytics. It cites that 64% of organizations spend over half their data team’s time on repetitive manual tasks, and that...

Coordinate Convergence and Calm Complexity

HighByte partnered with Amazon Web Services to give Brazilian glassmaker Vivix Vidros Planos a scalable industrial data‑fabric built on the Intelligence Hub platform. The solution curates, normalizes and contextualizes OT data from PLCs, SQL servers and edge devices before publishing...

The Digital Omnibus Reopens the EU Data Acquis Before It Has Even Been Tested

The European Union’s Digital Omnibus proposal folds the Data Governance Act, Open Data Directive and other recent statutes into the 2023 Data Act, turning it into the central hub for data access, reuse and governance. While marketed as simplification, critics...

New Pulse Survey Just Dropped: The State of Data Modeling (April 2026)

The Practical Data Community launched a new pulse survey titled "The State of Data Modeling" for April 2026. Almost nine in ten respondents reported at least one modeling pain point, underscoring how widespread the challenges are. The survey is brief: six questions that take roughly 90 seconds...

Day 51: Build Dashboards for Visualizing Analytics Results

The post outlines how to build a real‑time analytics dashboard that consumes aggregated metrics from Kafka streams and pushes updates via WebSockets. It highlights a query‑optimization layer that combines Redis caching with PostgreSQL time‑series partitioning to keep latency sub‑second. Multi‑dimensional...

Databricks Acquires Quotient AI

Databricks announced the acquisition of Quotient AI, a startup specializing in model governance, versioning and reproducibility tools. The deal embeds Quotient AI’s automation layer into Databricks’ lakehouse, creating a unified environment for data preparation, feature engineering, model training and deployment....

Centerbase Launches AI-Powered Business Intelligence Tool That Gives Firms Citation-Backed Answers From Their Own Data

Centerbase, the practice‑management platform for midsized law firms, announced the limited release of Centerbase IQ, an AI‑powered business intelligence tool that answers firm‑specific questions using the firm’s own data and provides citation links to source documents. The solution leverages a...

Fordham 33 (Report 2): Top 5 Takeaways: Data Governance, Privacy, & Cybersecurity in an AI World

The Fordham Law data governance session highlighted how AI is upending traditional data‑management practices, demanding full traceability and new vendor oversight. Panelists compared stark regulatory splits, noting the EU’s aggressive AI legislation versus Japan’s relaxed consent rules for training data....

How I Built a Data Catalogue From Scratch As a Data Engineer

A lone data engineer at a mid‑size manufacturing firm built a data catalogue from scratch, turning ad‑hoc notes into a structured metadata repository. The organization lacked documentation, ownership, and a data strategy, causing slow, risky deliveries and hidden changes. By...

Data Pipeline Failures Cost Enterprises $3 Million per Month, Fivetran Benchmark Finds

Fivetran’s 2026 Enterprise Data Infrastructure Benchmark, based on a survey of 500 senior data leaders at firms with over 5,000 employees, reveals that fragile data pipelines are costing large enterprises an average of $3 million each month. While organizations spend roughly...

Replication vs Sharding: A Beginner’s Guide

A single database eventually hits CPU, memory, and I/O limits, causing latency and availability risks. Replication creates multiple copies of the same dataset, improving read scalability and fault tolerance through synchronous or asynchronous modes. Sharding splits data across nodes, allowing...
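To make the sharding half concrete, here is a minimal sketch of hash-based shard routing. It assumes a fixed shard count and string keys; `shard_for` and `partition` are hypothetical helpers, not taken from the guide. Real systems often use consistent hashing or range-based schemes instead.

```python
import hashlib
from collections import defaultdict


def shard_for(key, num_shards):
    """Map a key to a shard number using a stable hash (assumed scheme)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def partition(keys, num_shards):
    """Group keys by the shard they would be routed to."""
    shards = defaultdict(list)
    for key in keys:
        shards[shard_for(key, num_shards)].append(key)
    return dict(shards)


keys = [f"user:{n}" for n in range(10)]
layout = partition(keys, num_shards=4)
print(layout)
```

A stable hash is used rather than Python's built-in `hash`, which is randomized per process for strings and would route the same key differently across restarts.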

ColorCloud 2026 Preview: Prepare for Power BI Everywhere

ColorCloud 2026, the Microsoft Business Applications conference, takes place in Hamburg from April 15‑17. The event features a session titled “Power BI Everywhere: Embedding Apps and Automations,” co‑presented by Capgemini’s Power Platform architect Keith Atherton and Sarah Guest. Atherton will also...

Probabilistic Data Structures: When to Use Bloom Filters and HyperLogLog

Probabilistic data structures like Bloom filters and HyperLogLog let engineers handle massive datasets with minimal memory by accepting a controlled error margin. Bloom filters provide fast, space‑efficient membership tests, while HyperLogLog offers near‑accurate distinct‑count estimates. Both replace costly exact structures...
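The membership-test side can be illustrated with a small Bloom filter. This is a sketch under stated assumptions: the bit-array size, the number of hash functions, and the seeded SHA-256 hashing are choices made for the example, not parameters from the article.

```python
import hashlib


class BloomFilter:
    """Hypothetical minimal Bloom filter over string items."""

    def __init__(self, num_bits=1024, num_hashes=3):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((num_bits + 7) // 8)

    def _positions(self, item):
        # Derive num_hashes bit positions by salting the item with a seed.
        for seed in range(self.num_hashes):
            digest = hashlib.sha256(f"{seed}:{item}".encode("utf-8")).digest()
            yield int.from_bytes(digest[:8], "big") % self.num_bits

    def add(self, item):
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, item):
        # False means definitely absent; True means possibly present.
        return all(
            self.bits[pos // 8] & (1 << (pos % 8))
            for pos in self._positions(item)
        )


seen = BloomFilter()
seen.add("alice@example.com")
seen.add("bob@example.com")
print(seen.might_contain("alice@example.com"))
```

The filter never returns a false negative for an added item, but it can return a false positive. That is the controlled error margin traded for memory.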

Same Platform, Different Outcomes: Metadata Practices and Open Data Use

The study examines how metadata design on open‑government data portals influences user behavior across 15 U.S. cities, analyzing 5,863 datasets. Using affordance theory, researchers measured metadata quality and linked it to two usage metrics: dataset views and downloads. Results show...

MCPs vs APIs in a Production Enrichment Pipeline

Rick Koleta’s GTM Vault episode shows how Skyp’s enrichment pipeline combines Claude Code’s plan mode with the Apollo API to deliver high‑quality leads at roughly fifty cents each. The build demonstrates that while MCP connectors (Gmail, Stripe, Grain, Slack) provide...

Exploring the Upcoming OSDU® Data Platform Standard Version 1.0

The Open Group OSDU Forum is set to launch OSDU Data Platform Standard Version 1.0, a stable subset of the platform’s capabilities that defines consistent API behavior. The standard provides detailed guidelines for services such as secure access, search, and file...

Data Governance in the AI Era: 10 Shifts Redefining Data, Institutions, and Practice

The essay argues that data governance is the foundation of AI governance, as AI systems depend on high‑quality input data. It outlines ten transformative shifts, including redefined data definitions, expanded ownership, real‑time pipelines, and new ethical risk assessments. These changes...

StatGPT and the Fourth Wave of Open Data

Decades of investment in statistical systems have yielded abundant official data, yet users still struggle to discover, interpret, and apply it. The IMF’s new StatGPT report argues that the core issue is not data availability but (re)usability, highlighting fragmented portals,...

Day 49: Implement Anomaly Detection Algorithms for Distributed Log Processing

The post outlines a production‑grade anomaly detection system for streaming log data, combining Z‑score and IQR statistical filters, time‑series baseline analysis, multi‑dimensional clustering, and adaptive thresholds. It emphasizes sub‑second latency and horizontal scalability, referencing Netflix’s 800‑service monitoring, Uber’s 100,000‑event‑per‑second fraud...
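The two statistical filters named above can be sketched with the standard library alone. This is an illustrative batch version, not the post's streaming system. The function names, the default thresholds, and the sample latencies are assumptions.

```python
import statistics


def zscore_outliers(values, threshold=3.0):
    """Return values whose distance from the mean exceeds threshold std devs."""
    mean = statistics.fmean(values)
    stdev = statistics.pstdev(values)
    if stdev == 0:
        return []  # all values identical, nothing to flag
    return [v for v in values if abs(v - mean) / stdev > threshold]


def iqr_outliers(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Made-up latencies in milliseconds with one obvious spike.
latencies = [100, 102, 98, 101, 99, 100, 103, 97, 100, 500]
print(zscore_outliers(latencies, threshold=2.5))
print(iqr_outliers(latencies))
```

The example uses a threshold of 2.5 because a single extreme value inflates the standard deviation: in a sample of ten points no value can reach a population z-score above 3. The IQR filter is less sensitive to that effect, which is one reason to combine the two.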

Stop Building Salesforce Integrations From Scratch

Engineers often build custom Salesforce‑to‑warehouse pipelines, but frequent schema changes, API limits, and hidden failures turn maintenance into a monthly time sink. Snowflake’s OpenFlow connector automates schema detection and runs as a native, managed service within Snowflake, eliminating the need...

State Management in Stream Processing: How Apache Flink and Kafka Streams Handle State

The article compares how Apache Flink and Kafka Streams manage state in real‑time stream processing. Flink treats state as a first‑class citizen, persisting snapshots to durable storage like S3 via periodic checkpoints. Kafka Streams materializes state changes in compacted Kafka...

Day 48: Sessionization for User Activity Tracking

The post outlines a production‑grade sessionization pipeline that turns raw event streams into actionable user sessions using Kafka Streams session windows, a Redis‑backed active‑session cache, and PostgreSQL for persistence. It highlights real‑time session tracking with sub‑millisecond lookups and a REST...
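The session-window idea itself, grouping a user's events until a gap of inactivity closes the session, can be sketched without Kafka Streams. This is a batch illustration under assumptions: the `sessionize` function, the 30-minute gap, and the `(user_id, timestamp)` event shape are hypothetical and not taken from the post.

```python
from collections import defaultdict


def sessionize(events, gap_seconds=1800):
    """Group (user_id, timestamp_seconds) events into per-user sessions.

    Returns {user_id: [(start, end, event_count), ...]}.
    """
    by_user = defaultdict(list)
    for user_id, ts in events:
        by_user[user_id].append(ts)

    sessions = {}
    for user_id, stamps in by_user.items():
        stamps.sort()
        user_sessions = []
        start = prev = stamps[0]
        count = 1
        for ts in stamps[1:]:
            if ts - prev > gap_seconds:
                # Inactivity gap exceeded: close the current session.
                user_sessions.append((start, prev, count))
                start, count = ts, 0
            prev = ts
            count += 1
        user_sessions.append((start, prev, count))
        sessions[user_id] = user_sessions
    return sessions


events = [("a", 0), ("a", 600), ("a", 5000), ("b", 100), ("a", 5100)]
result = sessionize(events)
print(result)
```

A streaming implementation would also need to handle late and out-of-order events, which this batch version sidesteps by sorting timestamps up front.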

The Missing Interface in Data Platform Engineering

Data platform teams often deliver technically complete stacks, yet consumer teams struggle because the operating interface is missing. The article argues that beyond schemas and APIs, platforms need explicit operational contracts, ownership models, adoption models, and communication patterns. It outlines...

RSAC 2026: Commvault Extends Enterprise Resilience to Structured and AI Data with Real-Time Governance Controls

Commvault announced an expansion of its data security posture management (DSPM) to include structured data and AI‑driven vector databases, leveraging its recent acquisition of Satori. The new real‑time data access governance lets security teams monitor and control structured data usage,...

Orchestrating and Designing Data Collaboratives: What Governance Model Is Fit for Purpose?

Stefaan Verhulst’s paper surveys the surge of data‑governance models—data trusts, commons, cooperatives, intermediaries, unions, sandboxes and data spaces—and argues they are not competing solutions but purpose‑driven responses to distinct coordination challenges. He proposes a typology of seven governance archetypes, each...

How to Query GDELT's Dataset Using Google BigQuery

OSINT Jobs released a tutorial showing how to access GDELT’s comprehensive news archive through Google BigQuery at no cost. The guide walks users through setting up the BigQuery environment, exploring the two core GDELT tables, and running a SQL query...

800ms Latency Spikes From A $45K Redis Cluster That Looked Healthy [Edition #2]

Fintech firm Veritas Pay, processing 800 million transactions annually, saw its real‑time fraud detection engine exceed the 150 ms SLA, with P99 latency spiking to 800 ms during peak loads. The root causes include Redis write saturation during six‑hour batch syncs, a Python...

The Data Engineering Revolution | Spark, AI, and What’s Coming Next

The article outlines how Apache Spark has become the backbone of modern data engineering, driving real‑time analytics and large‑scale ETL workloads. It highlights the infusion of generative AI models into pipeline orchestration, enabling automated schema evolution and anomaly detection. Recent...

TACC Launches CFDE Cloud Workspace for NIH Common Fund Datasets

The Texas Advanced Computing Center (TACC) has publicly launched the Common Fund Data Ecosystem (CFDE) Cloud Workspace, a collaborative effort with Johns Hopkins, Penn State and the San Diego Supercomputer Center’s CloudBank. The platform gives researchers instant, no‑cost access to...